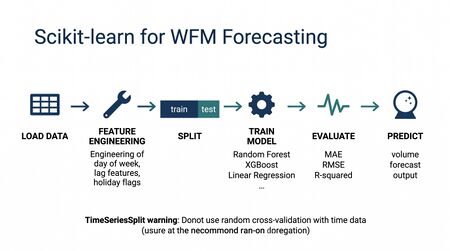

Scikit-learn for WFM Forecasting

Scikit-learn is the most widely used general-purpose machine learning library in Python, providing efficient implementations of classification, regression, clustering, and model evaluation algorithms under a consistent, well-documented API.[1] For workforce management practitioners, scikit-learn serves as the practical bridge between understanding ML concepts in the abstract and building working models that forecast contact volumes, predict agent attrition, classify contact types, and evaluate model performance with rigorous statistical methods.

Unlike specialized time-series libraries such as statsmodels or Prophet, scikit-learn is a general-purpose toolkit. It does not natively handle temporal dependencies—a limitation that matters in WFM, where most data is inherently sequential. Despite this, scikit-learn remains the go-to starting point for WFM modeling because its regression and classification algorithms handle the tabular, feature-engineered datasets that WFM analysts typically prepare. A workforce planner who transforms time-series data into features (day-of-week indicators, lag values, rolling averages) can use scikit-learn's random forests or gradient boosting models to build forecasts that often outperform simpler statistical methods, particularly when external variables like marketing campaigns or product launches influence demand.[2]

This article introduces scikit-learn through the lens of workforce management applications. It assumes familiarity with Python and basic machine learning concepts, and focuses on the practical patterns that WFM professionals need to build, evaluate, and deploy ML models for operational use.

What Is Scikit-learn

Scikit-learn (often abbreviated sklearn) is an open-source Python library that began in 2007 as a Google Summer of Code project by David Cournapeau. Researchers at INRIA, the French National Institute for Research in Digital Science and Technology, later took over leadership of the project and made the first public release in 2010. It has since become the de facto standard for classical machine learning in Python, with over 55,000 GitHub stars and citations in thousands of academic papers.[1]

The library is sometimes called the "Swiss Army knife" of machine learning because it provides a broad set of tools under one roof:

- Supervised learning algorithms — Linear regression, logistic regression, support vector machines, decision trees, random forests, gradient boosting, k-nearest neighbors, and more.

- Unsupervised learning algorithms — K-means clustering, DBSCAN, principal component analysis (PCA), and other dimensionality reduction methods.

- Model selection tools — Cross-validation, grid search, randomized search for hyperparameter tuning.

- Preprocessing utilities — Scaling, normalization, encoding categorical variables, imputing missing values.

- Evaluation metrics — Mean absolute error, root mean squared error, R-squared, accuracy, precision, recall, F1-score, ROC curves, and many more.

- Pipeline infrastructure — Chaining preprocessing and modeling steps into reproducible, deployable units.

What makes scikit-learn particularly valuable for WFM practitioners—who are typically not software engineers—is its consistent API design. Every model in scikit-learn follows the same pattern: instantiate, fit, predict. Once a practitioner learns this pattern with linear regression, they can swap in a random forest or gradient boosting model by changing a single line of code. This consistency dramatically reduces the learning curve and encourages experimentation.[2]
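As a minimal sketch of that instantiate, fit, predict pattern, the loop below trains two different estimators with identical calls. The data is synthetic and the variable names are illustrative assumptions, not part of any WFM dataset.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression

# Synthetic stand-in data: 200 rows, 3 numeric features (illustrative only)
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = 50 + 10 * X[:, 0] - 5 * X[:, 1] + rng.normal(scale=2.0, size=200)

X_train, X_test = X[:150], X[150:]
y_train = y[:150]

predictions = {}
for estimator in (LinearRegression(),
                  RandomForestRegressor(n_estimators=50, random_state=0)):
    estimator.fit(X_train, y_train)   # same call for every estimator
    predictions[type(estimator).__name__] = estimator.predict(X_test)
```

Swapping the algorithm only changes the object that is instantiated; the surrounding training and prediction code stays the same.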

Scikit-learn does not cover deep learning or native time-series modeling; its neural network support stops at a basic multi-layer perceptron. For deep learning, practitioners use TensorFlow or PyTorch. For time-series-native approaches, statsmodels (ARIMA, exponential smoothing) or Prophet are more appropriate. Scikit-learn occupies the space of classical, tabular ML, which covers the majority of WFM modeling tasks.

The Scikit-learn Workflow

A typical scikit-learn project follows a six-step workflow that maps cleanly to the analytical process WFM professionals already understand. This workflow is not unique to scikit-learn (it reflects the standard ML pipeline), but scikit-learn provides tools for every step.

Step 1: Load Data

Data typically arrives as a Pandas DataFrame loaded from a CSV, database query, or API. In WFM, this is usually interval-level volume data, agent-level performance records, or historical schedule and adherence data.

import pandas as pd

# Load historical interval-level data

df = pd.read_csv('interval_volumes.csv', parse_dates=['interval_start'])

Step 2: Preprocess

Raw data must be cleaned and transformed into features the model can use. This includes handling missing values, encoding categorical variables, and scaling numerical features. Scikit-learn provides dedicated transformer classes for each operation.

from sklearn.preprocessing import StandardScaler, OneHotEncoder

from sklearn.impute import SimpleImputer

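# Scale numerical features to zero mean, unit variance.

# X_numerical is assumed to hold the numeric feature columns; in production, fit the

# scaler on training rows only (the Pipeline Pattern section handles this automatically).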

scaler = StandardScaler()

X_scaled = scaler.fit_transform(X_numerical)
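The other two operations mentioned above, imputing missing values and encoding categorical variables, follow the same fit/transform pattern. A small sketch, assuming a hypothetical agent table with made-up column names and values:

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer
from sklearn.preprocessing import OneHotEncoder

# Hypothetical agent-level records with one missing value and a categorical column
agents = pd.DataFrame({
    "tenure_months": [3.0, np.nan, 24.0, 12.0],
    "site": ["north", "south", "north", "remote"],
})

# Replace the missing tenure with the column median
imputer = SimpleImputer(strategy="median")
tenure_filled = imputer.fit_transform(agents[["tenure_months"]])

# Expand the site column into one binary column per category
encoder = OneHotEncoder(handle_unknown="ignore")
site_encoded = encoder.fit_transform(agents[["site"]]).toarray()
```

Here the median of the three known tenures (12 months) fills the gap, and the three distinct sites become three binary columns.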

Step 3: Split

Data is divided into training and test sets so the model can be evaluated on data it has never seen. For time-series data in WFM, this split must respect temporal ordering—random splitting would leak future information into the training set, producing misleadingly optimistic results.

from sklearn.model_selection import train_test_split

# For non-temporal data (e.g., agent attrition prediction)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# For time-series data: split by date, not randomly

train = df[df['interval_start'] < '2025-01-01']

test = df[df['interval_start'] >= '2025-01-01']

Step 4: Train

The model is fit to the training data. In scikit-learn, this is always a single method call.

from sklearn.ensemble import GradientBoostingRegressor

model = GradientBoostingRegressor(n_estimators=200, max_depth=5, random_state=42)

model.fit(X_train, y_train)

Step 5: Evaluate

Predictions are generated on the test set and compared to actual values using appropriate metrics. For WFM forecasting, mean absolute error (MAE) and root mean squared error (RMSE) are standard. For classification tasks like attrition prediction, precision, recall, and F1-score matter more than raw accuracy.

from sklearn.metrics import mean_absolute_error, mean_squared_error

import numpy as np

y_pred = model.predict(X_test)

mae = mean_absolute_error(y_test, y_pred)

rmse = np.sqrt(mean_squared_error(y_test, y_pred))

Main article: Model Evaluation and Validation

Step 6: Predict

The trained model generates predictions on new, unseen data—next week's intervals, next quarter's staffing needs, or a current agent's attrition risk.

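# Predict next week's volumes. prepare_features is a placeholder for the same

# feature-engineering steps that were applied to the training data.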

next_week_features = prepare_features(next_week_dates)

next_week_forecast = model.predict(next_week_features)

This six-step workflow is deliberately simple. The complexity in WFM modeling lies not in the scikit-learn mechanics but in the feature engineering, validation strategy, and domain knowledge required to build models that are actually useful in production.

Regression Models for WFM

Regression models predict continuous numerical values and are the workhorses of WFM forecasting. Scikit-learn provides multiple regression algorithms, each with different strengths for WFM applications.

Main article: Regression for Forecasting

Linear Regression

Linear regression models the relationship between features and the target variable as a weighted sum. It is the simplest regression approach and serves as the baseline against which more complex models are compared.

WFM application: Predicting average handle time (AHT) based on agent tenure, call type, and time of day. If the relationship between these features and AHT is approximately linear—more experienced agents handle calls faster, certain call types consistently take longer—linear regression captures it efficiently.

Linear regression has the advantage of interpretability. Each feature receives a coefficient that directly indicates its direction and magnitude of influence. A WFM manager can examine the model and see that each additional month of agent tenure reduces predicted AHT by 8.3 seconds, or that billing calls add 45 seconds compared to the baseline call type. This transparency is valuable when presenting forecasting methodology to operations leadership.

The limitation is that linear regression cannot capture non-linear relationships or interactions between features without explicit feature engineering. If the effect of agent tenure on AHT diminishes after 12 months (a common pattern), the model will not discover this on its own.
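To illustrate how coefficients are read, the sketch below fits a linear regression to synthetic AHT data generated from known effects (a 400-second baseline, 8 seconds less per tenure month, 45 seconds more for billing calls). All numbers and column names are assumptions chosen so the fitted coefficients can be checked against them.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

# Synthetic AHT data built from known effects so the fit can be verified
rng = np.random.default_rng(1)
n = 500
calls = pd.DataFrame({
    "tenure_months": rng.integers(0, 36, size=n),
    "is_billing": rng.integers(0, 2, size=n),
})
calls["aht_seconds"] = (
    400 - 8 * calls["tenure_months"] + 45 * calls["is_billing"]
    + rng.normal(scale=10.0, size=n)
)

features = ["tenure_months", "is_billing"]
aht_model = LinearRegression().fit(calls[features], calls["aht_seconds"])

# Each coefficient is the change in predicted AHT per unit of the feature
coefficients = dict(zip(features, aht_model.coef_))
print(coefficients, aht_model.intercept_)
```

The recovered coefficients land close to the values used to generate the data. Because the model is a straight line in tenure, a diminishing tenure effect would only be captured by adding an engineered feature such as a capped or log-transformed tenure column.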

Random Forests

Random forests construct multiple decision trees, each trained on a random subset of the data and features, then average their predictions. This ensemble approach reduces overfitting and handles non-linear relationships naturally.[3]

WFM application: Forecasting daily contact volume using a mix of calendar features (day of week, month, holiday proximity), operational features (marketing campaigns active, product releases), and historical features (same-day-last-week volume, same-day-last-year volume). Random forests handle the interactions between these features—a Monday after a holiday weekend behaves differently than a regular Monday—without the analyst needing to manually engineer every interaction term.

Random forests also provide feature importance scores that rank which inputs contribute most to predictions. In WFM, this reveals whether day-of-week, marketing campaigns, or seasonal patterns drive the majority of volume variation—information that directly informs planning and communication with stakeholders.

The primary trade-off is reduced interpretability compared to linear regression. A random forest with 200 trees cannot be explained as a simple equation, though feature importance and partial dependence plots partially address this.
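A short sketch of reading feature importance scores, using synthetic daily volume in which day of week is deliberately the dominant driver. The column names and effect sizes are illustrative assumptions.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(2)
n = 600
X = pd.DataFrame({
    "dow": rng.integers(0, 7, size=n),
    "campaign_active": rng.integers(0, 2, size=n),
    "noise": rng.normal(size=n),   # deliberately irrelevant column
})
# Synthetic volume: weekday effect dominates, campaign adds a smaller lift
y = 1000 - 100 * X["dow"] + 80 * X["campaign_active"] + rng.normal(scale=20, size=n)

forest = RandomForestRegressor(n_estimators=100, random_state=0).fit(X, y)

# feature_importances_ sums to 1; larger values mean larger contribution
importances = pd.Series(forest.feature_importances_, index=X.columns)
importances = importances.sort_values(ascending=False)
print(importances)
```

Impurity-based importances like these can overstate high-cardinality features, so they are best treated as a ranking aid rather than a precise attribution.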

Gradient Boosting

Gradient boosting builds trees sequentially, with each new tree trained specifically to correct the errors of the preceding ensemble. Scikit-learn provides GradientBoostingRegressor, and the external libraries XGBoost and LightGBM, which add further performance optimizations, offer scikit-learn-compatible estimator interfaces that work with the same fit/predict pattern and model selection tools.[4]

WFM application: Multi-interval volume forecasting where accuracy at the 15- or 30-minute interval level is critical for intraday staffing. Gradient boosting often achieves lower error rates than random forests on structured tabular data because the sequential error-correction mechanism effectively learns the residual patterns that simpler models miss.

In practice, gradient boosting is the algorithm most likely to win a "model shootout" on WFM forecasting problems. However, it requires more careful hyperparameter tuning than random forests, and its sequential training cannot be fully parallelized, making it slower to train on large datasets.

| Algorithm | Interpretability | Non-linear Capability | Tuning Complexity | Typical WFM Use |

|---|---|---|---|---|

| Linear Regression | High | Low | Low | AHT prediction, simple trend models |

| Random Forest | Medium | High | Medium | Volume forecasting, feature importance analysis |

| Gradient Boosting | Low | High | High | High-accuracy interval-level forecasting |

Classification Models for WFM

Classification models predict discrete categories rather than continuous values. While less prominent than regression in WFM, classification addresses several important operational challenges.

Attrition Prediction

Agent attrition is one of the most expensive problems in contact center operations. Classification models can identify agents at elevated risk of leaving within a specified window (30, 60, or 90 days), enabling proactive retention efforts.

Main article: Attrition and Retention

Features typically include tenure, recent adherence trends, schedule bid satisfaction, commute distance or remote status, performance trajectory (improving vs. declining), overtime hours, and manager tenure. The model outputs either a binary prediction (will leave / will stay) or, more usefully, a probability score that allows HR and operations to prioritize outreach.

Scikit-learn's RandomForestClassifier and GradientBoostingClassifier both perform well on this task. The critical consideration is class imbalance: in most contact centers, monthly attrition runs 3–8%, meaning the "will leave" class is heavily outnumbered. RandomForestClassifier accepts class_weight='balanced' to reweight the rare class; GradientBoostingClassifier has no class_weight parameter, so the equivalent adjustment is made by passing sample_weight to fit. The separate imbalanced-learn library (imblearn) follows scikit-learn's API and adds resampling techniques such as SMOTE.
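A minimal sketch of an imbalance-aware attrition classifier that outputs probability scores. The two features, the label-generating formula, and the choice of 20 agents for outreach are all assumptions made for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(3)
n = 2000
# Two hypothetical features: tenure in months and an adherence trend score
X = np.column_stack([rng.uniform(0, 48, n), rng.normal(0, 1, n)])
# Synthetic labels: leaving is rare, likelier for short tenure and falling adherence
logit = -3.0 - 0.05 * X[:, 0] - 1.0 * X[:, 1]
y = (rng.uniform(size=n) < 1 / (1 + np.exp(-logit))).astype(int)

# stratify keeps the rare class proportion the same in both splits
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

clf = RandomForestClassifier(
    n_estimators=200, min_samples_leaf=5, class_weight="balanced", random_state=0
)
clf.fit(X_train, y_train)

# Column 1 of predict_proba is the estimated probability of the positive class
leave_risk = clf.predict_proba(X_test)[:, 1]
top_risk_idx = np.argsort(leave_risk)[::-1][:20]   # 20 highest-risk agents
```

Ranking by probability rather than using the hard 0/1 prediction lets a team size the outreach list to its actual capacity.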

Contact Type Classification

Routing contacts to the correct skill group or queue based on early interaction signals (IVR selections, initial speech-to-text content, customer attributes) is a classification problem. A well-trained model reduces misroutes, which directly improves first contact resolution and reduces handle time.

Quality Scoring

Automated quality scoring classifies interactions as meeting or not meeting quality standards based on features extracted from the interaction: silence percentage, hold time, sentiment scores, talk-to-listen ratio, and compliance keyword detection. This enables 100% quality coverage rather than the 2–5% manual sampling typical in most contact centers.

Feature Engineering for WFM

Feature engineering—transforming raw data into meaningful inputs for the model—is consistently the step that most influences model performance in WFM applications. Scikit-learn provides tools for feature transformation, but the design of features requires domain knowledge that only WFM practitioners possess.[5]

Calendar Encoding

Raw dates are meaningless to ML models. They must be decomposed into components that capture the patterns WFM professionals recognize intuitively:

- Day of week — Encoded as seven binary (one-hot) columns. Monday volume patterns differ fundamentally from Wednesday patterns in most contact centers.

- Hour of day — For interval-level models, encoded similarly. Can also be encoded cyclically using sine/cosine transforms to preserve the circular relationship (hour 23 is close to hour 0).

- Week of year — Captures seasonal patterns.

- Month of year — Coarser seasonal signal.

- Day of month — Captures billing-cycle effects in financial services and utilities.

- Quarter end/start indicators — Binary flags for industries with quarterly patterns.

# One-hot encode day of week

df['dow'] = df['date'].dt.dayofweek

dow_encoded = pd.get_dummies(df['dow'], prefix='dow')

# Cyclical encoding for hour of day

import numpy as np

df['hour_sin'] = np.sin(2 * np.pi * df['hour'] / 24)

df['hour_cos'] = np.cos(2 * np.pi * df['hour'] / 24)

Holiday Flags

Holidays disrupt normal volume patterns in ways that vary by industry. Feature engineering should include:

- Is_holiday — Binary flag for the holiday itself.

- Days_before_holiday — Integer distance to the next upcoming holiday. Volume often increases in the days preceding holidays (customers calling before offices close).

- Days_after_holiday — Integer distance from the most recent holiday. Post-holiday volume spikes are common as customers address issues accumulated during the closure.

- Holiday_type — Not all holidays have equal impact. A major holiday (Christmas, Thanksgiving in the US) suppresses volume far more than a minor observance. Encoding holiday type or expected impact level improves specificity.
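The first three holiday features above can be sketched in pandas as follows. The two-date holiday calendar is a hypothetical stand-in, the lowercase column names are illustrative, and the helper functions are written for readability rather than speed.

```python
import numpy as np
import pandas as pd

# Hypothetical holiday calendar; a real one would come from the business
holidays = list(pd.to_datetime(["2024-11-28", "2024-12-25"]))

df = pd.DataFrame({"date": pd.date_range("2024-11-25", "2024-12-27", freq="D")})
df["is_holiday"] = df["date"].isin(holidays).astype(int)

def days_until_next_holiday(day):
    """Days to the first holiday on or after `day`; NaN if none is listed."""
    upcoming = [h for h in holidays if h >= day]
    return (min(upcoming) - day).days if upcoming else np.nan

def days_since_last_holiday(day):
    """Days since the latest holiday on or before `day`; NaN if none is listed."""
    past = [h for h in holidays if h <= day]
    return (day - max(past)).days if past else np.nan

df["days_before_holiday"] = df["date"].apply(days_until_next_holiday)
df["days_after_holiday"] = df["date"].apply(days_since_last_holiday)
```

Dates outside the calendar's coverage get NaN, which is a reminder that the holiday list must extend past the end of the forecast horizon.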

Lag Features

Lag features encode historical values at specific offsets—the same interval last week, two weeks ago, or last year. These are among the most powerful features for volume forecasting because contact center volume exhibits strong autocorrelation.

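# Same interval, same weekday last week.

# Assumes rows are sorted by date with exactly one row per day for each

# interval_of_day; missing days would silently misalign the lag.

df['volume_lag_7d'] = df.groupby('interval_of_day')['volume'].shift(7)

# Same interval four weeks ago

df['volume_lag_28d'] = df.groupby('interval_of_day')['volume'].shift(28)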

Lag features require careful handling to avoid data leakage. The lag values must always come from the past relative to the prediction target—never from the future. When building multi-step forecasts (predicting multiple days ahead), lag values for days beyond the first prediction must use the model's own predictions rather than actuals, which can introduce compounding errors.
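The recursive multi-step case can be sketched as below: each prediction is appended to the history and then serves as a lag input for later steps. The synthetic series, the choice of lag-1 and lag-7 features, and the linear model are assumptions made to keep the example small.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

# Synthetic daily volume with a weekly cycle (illustrative only)
rng = np.random.default_rng(4)
days = pd.date_range("2024-01-01", periods=140, freq="D")
volume = (1000 + 150 * np.sin(2 * np.pi * np.arange(140) / 7)
          + rng.normal(scale=20, size=140))
history = pd.Series(volume, index=days)

# Train on lag-1 and lag-7 features built only from past values
frame = pd.DataFrame({
    "y": history,
    "lag_1": history.shift(1),
    "lag_7": history.shift(7),
}).dropna()
model = LinearRegression().fit(frame[["lag_1", "lag_7"]], frame["y"])

# Recursive forecast: predictions are fed back in as lags for later days
values = history.tolist()
forecast = []
for _ in range(14):
    step = pd.DataFrame({"lag_1": [values[-1]], "lag_7": [values[-7]]})
    y_hat = float(model.predict(step)[0])
    forecast.append(y_hat)
    values.append(y_hat)
```

From the second forecast day onward, lag_1 is a prediction rather than an actual, and from the eighth day onward lag_7 is too, which is where errors can start to compound.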

Rolling Statistics

Rolling (moving) averages, standard deviations, and other window-based statistics smooth noise and capture local trends:

- Rolling mean (7-day, 28-day) — Captures the recent trend level.

- Rolling standard deviation — Captures recent volatility, useful for probabilistic forecasting approaches.

- Rolling min/max — Establishes recent range.

- Exponentially weighted mean — Gives more weight to recent observations, similar to the logic behind exponential smoothing.

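# 7-day rolling average of daily volume, shifted one day so the window

# covers only days before the row being predicted (avoids leakage)

df['volume_rolling_7d'] = df['daily_volume'].shift(1).rolling(window=7).mean()

# Exponentially weighted mean with a span of 7 days, also using past days only

df['volume_ewm_7d'] = df['daily_volume'].shift(1).ewm(span=7).mean()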

Interaction and Derived Features

Domain knowledge often suggests interaction features that pure algorithms may not discover efficiently:

- Day-of-week × hour-of-day — Monday mornings behave differently than Monday afternoons.

- Holiday proximity × day-of-week — The Monday after a Thursday holiday carries a different pattern than a normal Monday.

- Campaign active × channel — A marketing email campaign may spike chat volume without affecting voice volume.

These interaction features often improve model performance because they encode the combinatorial patterns that experienced WFM analysts carry in their heads.
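Two of these interactions can be built directly in pandas. The sketch assumes a small hypothetical interval table; the column names are illustrative.

```python
import pandas as pd

# Four hypothetical intervals: Monday and Tuesday, 08:00 and 14:00
df = pd.DataFrame({
    "interval_start": pd.to_datetime([
        "2025-03-03 08:00", "2025-03-03 14:00",
        "2025-03-04 08:00", "2025-03-04 14:00",
    ]),
    "campaign_active": [0, 1, 1, 0],
    "channel": ["voice", "chat", "voice", "chat"],
})
df["dow"] = df["interval_start"].dt.dayofweek   # Monday = 0
df["hour"] = df["interval_start"].dt.hour

# Day-of-week x hour-of-day as one categorical key, e.g. "0_8" for Monday 08:00
df["dow_hour"] = df["dow"].astype(str) + "_" + df["hour"].astype(str)

# Campaign x channel: 1 only for chat intervals while a campaign is active
df["campaign_chat"] = (
    (df["campaign_active"] == 1) & (df["channel"] == "chat")
).astype(int)
```

The combined dow_hour key would then be one-hot encoded like any other categorical feature.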

Model Selection and Hyperparameter Tuning

Choosing the right algorithm and tuning its settings is a critical step that separates useful models from mediocre ones. Scikit-learn provides systematic tools for both.

Cross-Validation

Cross-validation evaluates model performance by repeatedly splitting data into training and validation folds, training on each training fold, and evaluating on the held-out fold. The average performance across folds provides a more reliable estimate than a single train/test split.

For WFM time-series problems, standard k-fold cross-validation is inappropriate because it ignores temporal ordering. Scikit-learn's TimeSeriesSplit provides time-respecting cross-validation that always trains on past data and validates on future data:

from sklearn.model_selection import TimeSeriesSplit, cross_val_score

tscv = TimeSeriesSplit(n_splits=5)

scores = cross_val_score(model, X, y, cv=tscv, scoring='neg_mean_absolute_error')

print(f"Mean MAE across folds: {-scores.mean():.2f}")

This mirrors how WFM models are actually used—always trained on historical data and applied to future periods. Using random k-fold cross-validation on time-series data will consistently overestimate model accuracy.
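To see what "time-respecting" means in practice, the following sketch prints the row indices that TimeSeriesSplit assigns to each fold on a toy dataset of 12 time-ordered rows.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

X = np.arange(12).reshape(-1, 1)   # 12 time-ordered rows
tscv = TimeSeriesSplit(n_splits=3)

folds = [(train_idx.tolist(), val_idx.tolist())
         for train_idx, val_idx in tscv.split(X)]
for train_idx, val_idx in folds:
    print("train:", train_idx, "validate:", val_idx)
# train: [0, 1, 2] validate: [3, 4, 5]
# train: [0, 1, 2, 3, 4, 5] validate: [6, 7, 8]
# train: [0, 1, 2, 3, 4, 5, 6, 7, 8] validate: [9, 10, 11]
```

Every validation block sits strictly after its training block, and the training window grows with each fold.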

Main article: Model Evaluation and Validation

GridSearchCV

Grid search systematically evaluates every combination of specified hyperparameter values using cross-validation, selecting the combination that produces the best score.

from sklearn.model_selection import GridSearchCV

param_grid = {

    'n_estimators': [100, 200, 500],

    'max_depth': [3, 5, 7, 10],

    'learning_rate': [0.01, 0.05, 0.1]

}

grid_search = GridSearchCV(

    GradientBoostingRegressor(random_state=42),

    param_grid,

    cv=TimeSeriesSplit(n_splits=5),

    scoring='neg_mean_absolute_error',

    n_jobs=-1  # Use all CPU cores

)

grid_search.fit(X_train, y_train)

print(f"Best parameters: {grid_search.best_params_}")

Grid search becomes expensive as the number of hyperparameters and values increases. For large search spaces, RandomizedSearchCV samples a specified number of random combinations, often finding near-optimal settings in a fraction of the time.
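A sketch of the randomized alternative, assuming synthetic data and illustrative search ranges. Instead of lists of values, each hyperparameter gets a distribution to sample from, and n_iter caps how many combinations are fitted.

```python
import numpy as np
from scipy.stats import randint, uniform
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import RandomizedSearchCV, TimeSeriesSplit

# Synthetic stand-in data (illustrative only)
rng = np.random.default_rng(5)
X = rng.normal(size=(300, 4))
y = 100 + 20 * X[:, 0] + 10 * X[:, 1] ** 2 + rng.normal(scale=5, size=300)

param_distributions = {
    "n_estimators": randint(50, 200),       # integers from 50 to 199
    "max_depth": randint(2, 6),             # integers from 2 to 5
    "learning_rate": uniform(0.01, 0.19),   # floats in [0.01, 0.20]
}

search = RandomizedSearchCV(
    GradientBoostingRegressor(random_state=42),
    param_distributions,
    n_iter=8,                               # only 8 sampled combinations are fitted
    cv=TimeSeriesSplit(n_splits=3),
    scoring="neg_mean_absolute_error",
    random_state=42,
)
search.fit(X, y)
print(search.best_params_, -search.best_score_)
```

The budget is explicit: 8 candidates times 3 folds, regardless of how wide the search ranges are.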

Practical Guidance

For most WFM modeling tasks, the following approach yields good results without excessive computation:

- Start with a baseline. Linear regression or a simple random forest with default parameters establishes the performance floor.

- Try gradient boosting. Fit a GradientBoostingRegressor or GradientBoostingClassifier with moderate defaults (200 estimators, max_depth=5, learning_rate=0.1).

- Tune the top candidate. Use RandomizedSearchCV with TimeSeriesSplit to search a reasonable hyperparameter space.

- Evaluate on held-out test data. The final performance estimate must come from data that was not used during any phase of model selection or tuning.

Spending weeks tuning hyperparameters rarely improves WFM model accuracy as much as investing the same time in better feature engineering or cleaner input data.

Evaluating WFM Models

Model evaluation in WFM requires metrics that match how forecasts and predictions are actually used operationally.

Regression Metrics (Forecasting)

| Metric | Formula | WFM Interpretation |

|---|---|---|

| Mean Absolute Error (MAE) | Mean of abs(actual − predicted) | Average forecast miss in the same units as the target (e.g., calls, seconds). Directly interpretable by operations leaders. |

| Root Mean Squared Error (RMSE) | Square root of average squared errors | Penalizes large errors more heavily than MAE. Useful when big forecast misses (under-staffing by 50 agents) are disproportionately costly. |

| Mean Absolute Percentage Error (MAPE) | Mean of abs(actual − predicted) / actual × 100 | Expresses error as a percentage. Intuitive for stakeholders but undefined when actual = 0 and distorted by low-volume intervals. |

| R-squared (R²) | 1 − (sum of squared errors / total sum of squares) | Proportion of variance explained by the model. Values above 0.85 are typical for well-engineered WFM volume forecasting models. |

For WFM forecasting, MAE is generally the most useful primary metric because it is expressed in the same units as the target variable and is robust to low-volume intervals. MAPE is popular in WFM reporting but should be used cautiously on interval-level data where low-volume periods produce misleadingly high percentage errors.
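A small worked example of the low-volume distortion, using four made-up intervals: three busy ones where the forecast is off by 5%, and one overnight interval with 2 actual calls and 5 forecast.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error

# Three busy intervals and one overnight interval with only 2 actual calls
actual = np.array([200.0, 180.0, 220.0, 2.0])
predicted = np.array([190.0, 189.0, 209.0, 5.0])

mae = mean_absolute_error(actual, predicted)
ape = np.abs(actual - predicted) / actual * 100   # per-interval percentage error
mape = ape.mean()

print(mae)    # 8.25 calls
print(ape)    # [5, 5, 5, 150] percent
print(mape)   # 41.25 percent
```

A three-call miss overnight has almost no staffing impact, yet it lifts MAPE from 5% to over 41%, while MAE stays at a readable 8.25 calls.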

Classification Metrics

| Metric | WFM Interpretation |

|---|---|

| Precision | Of agents the model flagged as attrition risks, what percentage actually left? High precision means fewer false alarms that waste HR resources. |

| Recall | Of agents who actually left, what percentage did the model identify? High recall means fewer surprises—attrition the model missed entirely. |

| F1-Score | Harmonic mean of precision and recall. Balances both concerns. Useful as a single summary metric. |

| ROC-AUC | Area under the receiver operating characteristic curve. Measures the model's ability to distinguish between classes across all probability thresholds. Values above 0.75 indicate useful discrimination. |

In attrition prediction, the precision-recall trade-off has direct operational implications. A model with high recall but low precision flags many agents, creating intervention fatigue. A model with high precision but low recall misses too many departures. The right balance depends on the cost of false positives (unnecessary retention interventions) versus false negatives (unplanned attrition).
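The trade-off can be made concrete by scoring the same risk estimates at different flagging thresholds. The ten outcomes and risk scores below are invented for illustration.

```python
import numpy as np
from sklearn.metrics import precision_score, recall_score

# Hypothetical attrition outcomes (1 = left) and model risk scores for 10 agents
y_true = np.array([1, 1, 1, 0, 0, 0, 0, 0, 0, 0])
risk = np.array([0.9, 0.6, 0.3, 0.7, 0.4, 0.35, 0.2, 0.1, 0.1, 0.05])

results = {}
for threshold in (0.25, 0.5, 0.8):
    flagged = (risk >= threshold).astype(int)   # agents selected for outreach
    results[threshold] = (
        precision_score(y_true, flagged),
        recall_score(y_true, flagged),
    )

for threshold, (precision, recall) in results.items():
    print(f"threshold={threshold}: precision={precision:.2f}, recall={recall:.2f}")
# threshold=0.25: precision=0.50, recall=1.00
# threshold=0.5: precision=0.67, recall=0.67
# threshold=0.8: precision=1.00, recall=0.33
```

Lowering the threshold catches every departure but doubles the outreach list; raising it eliminates false alarms but misses two of the three leavers.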

Pipeline Pattern

Scikit-learn's Pipeline class chains preprocessing steps and the final model into a single object. This pattern is essential for production-ready WFM models because it ensures that preprocessing transformations applied during training are identically applied during prediction—eliminating a common source of bugs.

from sklearn.pipeline import Pipeline

from sklearn.compose import ColumnTransformer

from sklearn.preprocessing import StandardScaler, OneHotEncoder

from sklearn.ensemble import GradientBoostingRegressor

# Define preprocessing for different column types

numerical_features = ['volume_lag_7d', 'volume_lag_28d', 'volume_rolling_7d']

categorical_features = ['dow', 'holiday_type']

preprocessor = ColumnTransformer(

    transformers=[

        ('num', StandardScaler(), numerical_features),

        ('cat', OneHotEncoder(handle_unknown='ignore'), categorical_features)

    ]

)

# Chain preprocessing and model into a single pipeline

pipeline = Pipeline([

    ('preprocessor', preprocessor),

    ('model', GradientBoostingRegressor(n_estimators=200, max_depth=5, random_state=42))

])

# Train the entire pipeline

pipeline.fit(X_train, y_train)

# Predict with the entire pipeline — preprocessing is automatic

forecast = pipeline.predict(X_new)

The pipeline pattern provides three practical benefits for WFM teams:

- Reproducibility. The entire modeling process—from raw features to predictions—is encapsulated in a single object that can be serialized (saved to disk) and loaded for repeated use.

- Leak prevention. Preprocessing parameters (scaling means and standard deviations, encoding categories) are learned only from training data, preventing information from the test set from influencing the model.

- Deployment simplicity. A serialized pipeline can be deployed to a production environment where it receives raw feature data and returns predictions without requiring separate preprocessing code.

import joblib

# Save the trained pipeline

joblib.dump(pipeline, 'volume_forecast_pipeline.joblib')

# Load and use in production

loaded_pipeline = joblib.load('volume_forecast_pipeline.joblib')

predictions = loaded_pipeline.predict(new_data)

For WFM teams transitioning from spreadsheet-based forecasting to ML-based approaches, the pipeline pattern represents the critical shift from "analyst runs a notebook manually each week" to "a scheduled process generates forecasts automatically." This transition is a key step in the AI scaffolding maturity model.

Limitations for Time Series

Scikit-learn's most significant limitation for WFM applications is its lack of native time-series support. This limitation manifests in several concrete ways that practitioners must understand and work around.

No Temporal Awareness

Scikit-learn algorithms treat each row of data as an independent observation. They have no concept of temporal ordering, autocorrelation, or seasonality. A random forest processing Monday's data does not "know" that Sunday's data came immediately before it—unless the analyst explicitly engineers lag features and rolling statistics to encode that temporal context.

This means the practitioner bears full responsibility for encoding temporal information through feature engineering. While this is achievable (as described in the Feature Engineering section), it adds complexity and creates opportunities for errors, particularly data leakage through improperly constructed lag features.

No Native Decomposition

Classical time-series methods like ARIMA and exponential smoothing explicitly decompose data into trend, seasonal, and residual components. Scikit-learn models must learn these patterns implicitly from features, which sometimes produces less stable extrapolation beyond the training period's range.
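The extrapolation issue is easiest to see with tree-based models, which predict averages of training targets and therefore cannot go beyond the range they were trained on. The sketch below uses a synthetic, perfectly linear upward trend with a time index as the only feature, an assumption chosen to isolate the effect.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression

# Synthetic upward trend: volume grows by 5 contacts per day for 100 days
t_train = np.arange(100).reshape(-1, 1)
y_train = 500 + 5 * t_train.ravel()
t_future = np.arange(100, 130).reshape(-1, 1)   # the next 30 days

forest = RandomForestRegressor(n_estimators=50, random_state=0).fit(t_train, y_train)
linear = LinearRegression().fit(t_train, y_train)

forest_future = forest.predict(t_future)   # flat: capped by the training range
linear_future = linear.predict(t_future)   # continues the trend

print(forest_future.max(), y_train.max())
print(linear_future[-1])
```

The forest's forecast stays flat at or below the highest volume seen in training while the linear model keeps climbing. For trending volume, this is one reason to model changes or ratios, or to detrend before fitting a tree-based model.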

When to Use Alternatives

| Scenario | Recommended Approach | Rationale |

|---|---|---|

| Simple univariate volume forecasting | statsmodels (ARIMA, ETS) | Explicit temporal modeling, confidence intervals built in, minimal feature engineering required. |

| Forecasting with strong known seasonality | Prophet or statsmodels | Additive/multiplicative seasonal decomposition handled automatically. |

| Volume forecasting with many external drivers | Scikit-learn (gradient boosting) | Handles high-dimensional feature spaces and non-linear interactions naturally. |

| Classification (attrition, quality scoring) | Scikit-learn | Purpose-built for classification with extensive metric and evaluation support. |

| Probabilistic forecasting with full distributions | Specialized libraries (MAPIE, conformal prediction) | Scikit-learn produces point predictions; obtaining calibrated prediction intervals requires additional tooling. |

| Deep learning sequence models | TensorFlow, PyTorch | LSTM and transformer architectures for complex sequential patterns are beyond scikit-learn's scope. |

Main article: Deterministic vs Probabilistic Models

The pragmatic approach used by many WFM data science teams is to benchmark scikit-learn's feature-engineered models against native time-series methods on the same data. When external variables significantly influence volume (marketing, product launches, weather), scikit-learn approaches frequently win. When the forecasting problem is primarily about extrapolating historical patterns, native time-series methods are often simpler and equally accurate.

For probabilistic forecasting—generating prediction intervals rather than point estimates—scikit-learn is not sufficient on its own. Libraries like MAPIE (Model Agnostic Prediction Interval Estimator) can wrap scikit-learn models to produce conformal prediction intervals, but this adds a layer of complexity that native Bayesian or time-series approaches handle more naturally.

Integration with the WFM Technology Stack

Scikit-learn models do not exist in isolation. In a production WFM environment, they integrate with the broader technology stack:

- Data sourcing — Pandas for data extraction and manipulation from WFM platforms, ACD systems, and HR databases.

- Feature stores — Centralized repositories of pre-computed features that ensure consistency between training and prediction.

- Model serving — Serialized pipelines deployed via REST APIs (using Flask or FastAPI) or batch scoring processes scheduled through cron or orchestration tools.

- Monitoring — Tracking model performance over time to detect drift. When a model's production MAE diverges significantly from its validation MAE, retraining is needed.

- Retraining — Scheduled or triggered retraining as new data accumulates. Most WFM models benefit from weekly or monthly retraining to incorporate recent patterns.
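The monitoring item above can be sketched as a simple comparison of production MAE against the MAE recorded at validation time. The stored validation figure, the 25% tolerance, and the week of actuals and forecasts are all hypothetical values chosen for illustration.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error

validation_mae = 12.0     # recorded when the model was validated (hypothetical)
drift_tolerance = 1.25    # hypothetical rule: flag if production MAE is 25% worse

# Last week's actuals and the forecasts that were issued for them (made-up numbers)
actual = np.array([410, 395, 430, 388, 402, 250, 190])
forecast = np.array([400, 410, 405, 400, 380, 270, 210])

production_mae = mean_absolute_error(actual, forecast)
needs_retraining = production_mae > validation_mae * drift_tolerance

print(f"production MAE: {production_mae:.2f}, retrain: {needs_retraining}")
```

In a scheduled process, a check like this would run after each period's actuals arrive and trigger retraining or an alert when the flag is set.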

This integration architecture aligns with the AI scaffolding framework's emphasis on building ML capabilities incrementally—starting with manual notebooks, progressing to scheduled batch processes, and ultimately deploying real-time prediction services.

See Also

- Python for Workforce Management

- Machine Learning Concepts

- Machine Learning for Volume Forecasting

- Regression for Forecasting

- Forecasting Methods

- Model Evaluation and Validation

- Attrition and Retention

- Probabilistic Forecasting

- Deterministic vs Probabilistic Models

- Pandas and Data Manipulation for WFM

- AI Scaffolding Framework

References

- [1] Pedregosa, Fabian, et al. "Scikit-learn: Machine Learning in Python." Journal of Machine Learning Research 12 (2011): 2825–2830.

- [2] Géron, Aurélien. Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 3rd ed. O'Reilly Media, 2022. ISBN 978-1098125974.

- [3] Hastie, Trevor, Robert Tibshirani, and Jerome Friedman. The Elements of Statistical Learning: Data Mining, Inference, and Prediction, 2nd ed. Springer, 2009. ISBN 978-0387848570.

- [4] Chen, Tianqi, and Carlos Guestrin. "XGBoost: A Scalable Tree Boosting System." Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (2016): 785–794.

- [5] Zheng, Alice, and Amanda Casari. Feature Engineering for Machine Learning: Principles and Techniques for Data Scientists. O'Reilly Media, 2018. ISBN 978-1491953242.