Algorithmic Fairness and Bias in Workforce Scheduling

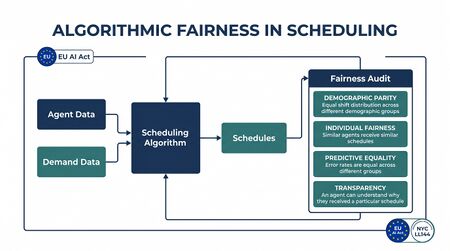

Algorithmic fairness and bias in workforce scheduling refers to the study and governance of systematic disparities, whether intentional or emergent, that arise when automated or algorithmic systems are used to generate, assign, or optimize employee work schedules. As Schedule Optimization platforms have become standard in contact center and back-office operations, questions about whether these systems produce equitable outcomes across demographic groups have moved from academic interest to legal obligation. The European Union Artificial Intelligence Act of 2024 classifies employment-related AI systems, including those used to make decisions affecting terms of work-related relationships or to allocate tasks, as high-risk applications subject to transparency, audit, and human oversight requirements.[1] New York City Local Law 144, enforced from July 2023, requires bias audits for automated employment decision tools used to screen candidates for hiring or employees for promotion within the city.[2] For workforce management practitioners, algorithmic fairness is no longer a theoretical concern but a compliance requirement whose operational implications must be understood and addressed.

Defining Fairness in Scheduling

The term "fairness" admits multiple mathematically distinct definitions in the algorithmic decision-making literature, and this plurality is not merely semantic — different definitions are incompatible with each other under conditions that frequently arise in real workforce scheduling problems.

Equal Treatment (Individual Fairness)

Individual fairness, formalized by Dwork et al. (2012), requires that similar individuals receive similar treatment — that two agents with the same skills, tenure, availability constraints, and performance record receive comparable schedule assignments regardless of demographic characteristics.[3] In practice, "similar individuals" requires a metric over the agent population, and the choice of metric is value-laden: including seniority in the similarity measure will reproduce seniority-based outcomes, while excluding it will produce outcomes that seniority-system advocates consider unfair.
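Dwork et al. express individual fairness as a Lipschitz condition: the difference between two agents' outcomes should not exceed the distance between them under a task-specific metric. The minimal sketch below checks that condition on hypothetical data and shows how the same schedule passes or fails depending on whether tenure is part of the metric. The feature names, the tenure rescaling, the Lipschitz constant, and the use of a single deterministic quality score (rather than a distance between outcome distributions, as in the original formulation) are all illustrative assumptions.

```python
from itertools import combinations

# Hypothetical agents: features for the similarity metric plus a schedule-quality
# score in [0, 1] produced by some scheduler (all values illustrative).
AGENTS = {
    "a1": {"skill": 0.8, "tenure_years": 10, "quality": 0.9},
    "a2": {"skill": 0.8, "tenure_years": 1, "quality": 0.4},
    "a3": {"skill": 0.5, "tenure_years": 1, "quality": 0.4},
}


def distance(x, y, use_tenure):
    """Assumed task-specific metric; including tenure is a policy choice."""
    d = abs(x["skill"] - y["skill"])
    if use_tenure:
        d += abs(x["tenure_years"] - y["tenure_years"]) / 10  # rough rescaling
    return d


def lipschitz_violations(agents, use_tenure, lipschitz=1.0):
    """Pairs whose quality gap exceeds lipschitz * distance (Dwork-style check)."""
    violations = []
    for (i, x), (j, y) in combinations(agents.items(), 2):
        gap = abs(x["quality"] - y["quality"])
        if gap > lipschitz * distance(x, y, use_tenure) + 1e-9:
            violations.append((i, j))
    return violations


print(lipschitz_violations(AGENTS, use_tenure=True))   # []
print(lipschitz_violations(AGENTS, use_tenure=False))  # [('a1', 'a2'), ('a1', 'a3')]
```

With tenure in the metric, a1's better schedule is "justified" by its distance from the others; without it, a1 and a2 look identical and the same schedule violates individual fairness. The algorithm did not change, only the value-laden choice of metric.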

Equitable Outcomes (Group Fairness)

Group fairness definitions require that scheduling outcomes be statistically comparable across demographic groups. The most commonly applied group fairness criteria in employment contexts include:

- Demographic parity: the proportion of agents receiving desirable shifts (e.g., day shifts, weekends off) should be equal across demographic groups, regardless of other factors.

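- Equalized odds (Hardt, Price, & Srebro, 2016): the rate at which desirable shifts are assigned should be equal across groups both among qualified agents (equal true positive rates) and among unqualified agents (equal false positive rates). The weaker equal opportunity criterion from the same paper requires only the first condition.[4]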

- Disparate impact (the 4/5ths rule): a scheduling outcome indicates disparate impact if the selection rate for a protected group is less than 80% of the selection rate for the most-selected group. This operational definition comes from the Uniform Guidelines on Employee Selection Procedures (1978), adopted by the EEOC and other federal agencies, and is widely used as a rule of thumb in U.S. employment discrimination practice, although it is not a conclusive legal test.
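The group criteria above can be computed from individual-level outcome records. The following is a minimal sketch, assuming each record is a hypothetical `(group, qualified, selected)` tuple and that "qualified" has already been defined by policy; the function names and data are illustrative, not a standard API.

```python
from collections import Counter, defaultdict


def group_rates(records):
    """records: iterable of (group, qualified, selected) tuples, where
    'selected' means the agent received the desirable shift.

    Returns each group's selection rate, true-positive rate (selection rate
    among qualified agents) and false-positive rate (among unqualified agents).
    Assumes every group contains both qualified and unqualified agents."""
    tallies = defaultdict(Counter)
    for group, qualified, selected in records:
        t = tallies[group]
        t["n"] += 1
        t["sel"] += int(selected)
        prefix = "q" if qualified else "u"
        t[prefix] += 1
        t[prefix + "_sel"] += int(selected)
    return {
        g: {
            "selection": t["sel"] / t["n"],
            "tpr": t["q_sel"] / t["q"],
            "fpr": t["u_sel"] / t["u"],
        }
        for g, t in tallies.items()
    }


def parity_gap(rates):
    """Demographic parity gap: spread of selection rates across groups."""
    sel = [r["selection"] for r in rates.values()]
    return max(sel) - min(sel)


def equalized_odds_gap(rates):
    """Largest between-group spread in either TPR or FPR."""
    tpr = [r["tpr"] for r in rates.values()]
    fpr = [r["fpr"] for r in rates.values()]
    return max(max(tpr) - min(tpr), max(fpr) - min(fpr))


# Illustrative data: group A has a 50% qualification base rate, group B 25%.
records = (
    [("A", True, True)] * 8 + [("A", True, False)] * 2
    + [("A", False, True)] * 2 + [("A", False, False)] * 8
    + [("B", True, True)] * 4 + [("B", True, False)] * 1
    + [("B", False, True)] * 3 + [("B", False, False)] * 12
)
rates = group_rates(records)
print(round(parity_gap(rates), 2), equalized_odds_gap(rates))  # 0.15 0.0
```

With these hypothetical numbers, equalized odds holds exactly (both groups have a true-positive rate of 0.8 and a false-positive rate of 0.2), yet selection rates differ by 0.15 because the groups' base rates differ.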

Preference Satisfaction

A third conception of scheduling fairness — less formalized in the algorithmic literature but central to agent experience — concerns whether agents' stated schedule preferences are honored at comparable rates across demographic groups. Schedule Bidding and Preference Based Scheduling systems that allocate desirable shifts based on preference bids may appear neutral while producing unequal outcomes if certain demographic groups systematically have fewer available preference slots, less flexibility to bid for desirable windows, or less familiarity with bidding system mechanics.
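Looking only at the success rate of submitted bids can hide the participation effect described above. A minimal sketch, assuming hypothetical `(group, submitted_bid, bid_honored)` records, separates the two:

```python
from collections import Counter


def bidding_outcomes(agents):
    """agents: iterable of (group, submitted_bid, bid_honored) tuples.

    Returns, per group: (participation rate, satisfaction rate among bidders,
    share of all agents whose preference was honored)."""
    n, bids, honored = Counter(), Counter(), Counter()
    for group, submitted, ok in agents:
        n[group] += 1
        if submitted:
            bids[group] += 1
            honored[group] += int(ok)
    return {
        g: (
            bids[g] / n[g],
            honored[g] / bids[g] if bids[g] else 0.0,
            honored[g] / n[g],
        )
        for g in n
    }


# Hypothetical example: similar success once bids are placed, but group B
# bids far less often, so far fewer of its members get preferred schedules.
agents = (
    [("A", True, True)] * 8 + [("A", True, False)] + [("A", False, False)]
    + [("B", True, True)] * 4 + [("B", True, False)] + [("B", False, False)] * 5
)
print(bidding_outcomes(agents)["B"])  # (0.5, 0.8, 0.4)
```

Group A's bidders succeed at about 0.89 and group B's at 0.8, which looks close; but 80% of group A's members end up with a preferred schedule versus 40% of group B's.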

The Impossibility Results

Kleinberg, Mullainathan, and Raghavan (2017) proved that several natural fairness conditions for risk scores cannot be satisfied simultaneously when the base rates of the predicted attribute differ across groups, a condition that holds generically in real-world populations.[5] Specifically, calibration within groups, balance for the positive class, and balance for the negative class cannot all hold except in degenerate cases (perfect prediction or equal base rates). A related tension arises in scheduling within a single outcome: if the rate of qualification for overtime-eligible work differs by group, an overtime allocation that satisfies equalized odds will generally violate demographic parity, and vice versa, unless the allocation ignores qualification altogether.
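For a single outcome, the conflict between equalized odds and demographic parity follows from one line of algebra. This is the simpler parity-versus-odds tension, not the Kleinberg et al. theorem itself, which concerns calibrated risk scores. In the notation assumed here, $p_g$ is the share of group $g$ that is qualified, and $\mathrm{TPR}_g$, $\mathrm{FPR}_g$ are the rates at which qualified and unqualified members of $g$ receive the outcome:

```latex
P(\hat{Y}=1 \mid G=g) = \mathrm{TPR}_g \, p_g + \mathrm{FPR}_g \, (1 - p_g)

% Under equalized odds (TPR_A = TPR_B = TPR, FPR_A = FPR_B = FPR):
P(\hat{Y}=1 \mid G=A) - P(\hat{Y}=1 \mid G=B) = (\mathrm{TPR} - \mathrm{FPR}) \, (p_A - p_B)
```

Demographic parity can therefore coexist with equalized odds only if base rates are equal ($p_A = p_B$) or the allocation is independent of qualification ($\mathrm{TPR} = \mathrm{FPR}$).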

This impossibility result has profound practical implications: organizations must choose which fairness definition to prioritize and accept that others will be compromised. This is a values choice, not a technical one, and should involve legal, HR, and operational leadership rather than being resolved implicitly by algorithm designers. The impossibility result does not mean fairness efforts are futile; it means that fairness must be specified, not assumed.

How Bias Enters Scheduling Algorithms

Historical Data Encoding Past Discrimination

Scheduling optimization algorithms trained on or calibrated against historical data inherit the distributional patterns of that data. If historical scheduling practices assigned night shifts disproportionately to groups with less organizational power (new hires, part-time workers, workers with caregiving responsibilities), which can overlap with protected demographic groups, and if the algorithm optimizes against historical cost and coverage patterns, it will tend to reproduce those assignments. Raghavan, Barocas, Kleinberg, and Levy (2020) discuss this mechanism in the context of hiring algorithms, and the logic applies directly to scheduling: systems that learn "who typically works which shifts" from historical data encode historical inequities as optimization targets.[6]
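The feedback loop can be made concrete with a deliberately naive, hypothetical heuristic that ranks agents by how many nights they worked in the past and then adds its own assignments to that history. It is not how any particular vendor's optimizer works; it only illustrates how "learning from history" turns a past pattern into a target.

```python
from collections import Counter


def simulate_learned_assignment(past_nights, nights_per_period, periods):
    """Hypothetical heuristic that 'learns who works nights' from history.

    Each period it assigns night shifts to the agents with the most past
    nights, then adds those assignments to the history it learns from."""
    counts = Counter(past_nights)
    for _ in range(periods):
        ranked = sorted(counts, key=lambda agent: (-counts[agent], agent))
        for agent in ranked[:nights_per_period]:
            counts[agent] += 1
    return dict(counts)


# New hires n1/n2 historically covered most nights; veterans v1/v2 rarely did.
history = {"n1": 5, "n2": 5, "v1": 1, "v2": 0}
print(simulate_learned_assignment(history, nights_per_period=2, periods=3))
# {'n1': 8, 'n2': 8, 'v1': 1, 'v2': 0}
```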

Proxy Variables

Scheduling algorithms use many inputs that appear neutral but correlate with protected characteristics. Seniority is the most common example: agents with more seniority typically receive preference in shift bidding and schedule assignments. Because seniority correlates with age, and because historically underrepresented demographic groups often have lower average seniority in established organizations, seniority-based scheduling systems can produce demographic disparities in shift quality without any intent to discriminate. Other proxy variables include part-time status (overrepresented among caregiving-responsible workers), shift preference constraints (agents who cannot work certain windows due to caregiving or religious observance), and performance scores (if historical performance evaluation incorporates subjective ratings that embed bias, using performance scores as scheduling inputs propagates that bias).
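A first-pass proxy screen can measure how strongly each scheduling input correlates with membership in a protected group. The sketch below uses Pearson correlation against a 0/1 group indicator (the point-biserial correlation); the 0.3 cutoff, feature names, and data are illustrative assumptions. A simple correlation only catches linear, single-variable proxies; combinations of inputs can also act as proxies.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation; with a 0/1 group indicator this is point-biserial."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(vx * vy)


def flag_proxies(features, in_group, threshold=0.3):
    """Return input names whose |correlation| with group membership exceeds
    the threshold (the 0.3 cutoff is an illustrative assumption)."""
    return sorted(
        name for name, values in features.items()
        if abs(pearson(in_group, values)) > threshold
    )


in_group = [0, 0, 0, 1, 1, 1]  # hypothetical protected-group indicator
features = {
    "seniority_years": [12, 10, 9, 2, 1, 3],
    "skill_score": [0.7, 0.8, 0.6, 0.7, 0.8, 0.6],
}
print(flag_proxies(features, in_group))  # ['seniority_years']
```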

Ajunwa (2020) argues that the proliferation of automated employment decision tools creates a "quantified worker" problem — the translation of embodied, contextual human attributes into algorithmic features — that systematically disadvantages workers whose characteristics do not fit the historical norm used to calibrate the system.[7]

Seniority Systems and Demographic Stratification

Seniority-based scheduling — where agents with higher tenure receive priority in shift selection — is widely practiced and often negotiated through collective bargaining agreements. The demographic stratification problem arises when the workforce's seniority distribution is itself a product of historical hiring and retention patterns. If an organization hired predominantly from one demographic group in earlier decades and has more recently diversified its workforce, then seniority-based scheduling will systematically assign less desirable schedules to newer, more demographically diverse cohorts — not because the algorithm is discriminatory in design, but because it operationalizes a historical pattern.
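A stripped-down model of strict seniority bidding makes the stratification mechanism concrete: no demographic attribute appears anywhere in the allocation rule, yet the outcome splits cleanly by hire cohort. The tuple layout, cohort labels, and slot count are hypothetical.

```python
def seniority_bid(agents, desirable_slots):
    """Give desirable schedules to the highest-tenure agents first.

    agents: list of (agent_id, tenure_years, hire_cohort) tuples."""
    ranked = sorted(agents, key=lambda a: (-a[1], a[0]))
    return {agent_id for agent_id, _, _ in ranked[:desirable_slots]}


def win_rate_by_cohort(agents, winners):
    """Share of each cohort that received a desirable schedule."""
    totals, wins = {}, {}
    for agent_id, _, cohort in agents:
        totals[cohort] = totals.get(cohort, 0) + 1
        wins[cohort] = wins.get(cohort, 0) + int(agent_id in winners)
    return {c: wins[c] / totals[c] for c in totals}


# Hypothetical workforce: an earlier hiring cohort and a recent, more diverse one.
agents = [
    ("e1", 15, "earlier"), ("e2", 12, "earlier"), ("e3", 11, "earlier"), ("e4", 9, "earlier"),
    ("r1", 3, "recent"), ("r2", 2, "recent"), ("r3", 2, "recent"), ("r4", 1, "recent"),
]
winners = seniority_bid(agents, desirable_slots=4)
print(win_rate_by_cohort(agents, winners))  # {'earlier': 1.0, 'recent': 0.0}
```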

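This mechanism is a long-standing theme in labor economics and employment discrimination law. In the United States, Section 703(h) of Title VII generally shields bona fide seniority systems from liability absent discriminatory intent, even where they carry forward the effects of past discrimination. The policy tension between contract-based seniority rights and demographic equity outcomes is therefore genuine and is not resolved by declaring the algorithm "neutral."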

The Regulatory Landscape

EU AI Act (2024)

The EU AI Act classifies AI systems in the area of "employment, workers management and access to self-employment" as high-risk (Annex III, point 4). Point 4(b) covers systems intended to make decisions affecting terms of work-related relationships or to allocate tasks based on individual behaviour or personal traits or characteristics, language that can reach algorithmic shift assignment. High-risk classification imposes requirements including risk management systems (documented identification and mitigation of risks, including discriminatory outcomes), data governance (training data examined for possible biases), transparency (employers deploying such a system must inform affected workers and their representatives before putting it into use at the workplace), human oversight (meaningful human review capability for consequential decisions), and accuracy monitoring.[8] The Act applies to systems placed on the market or used in the EU regardless of where the provider is established.

NYC Local Law 144

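New York City Local Law 144 (enforced from July 5, 2023) requires employers using automated employment decision tools (AEDTs) to have an independent auditor conduct a bias audit no more than one year before use, publish a summary of the results, and notify candidates and employees at least ten business days before the tool is used.[9] The law defines an "employment decision" as screening candidates for employment or employees for promotion, so schedule optimization systems that only assign shifts likely fall outside its scope; tools whose outputs feed promotion decisions may still be covered.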

EEOC Algorithmic Guidance

The U.S. Equal Employment Opportunity Commission issued technical assistance guidance in 2023 clarifying that algorithmic tools used in employment selection procedures are subject to Title VII of the Civil Rights Act if they produce disparate impact against protected classes. The guidance describes the 4/5ths (80%) rule as a general rule of thumb rather than a definitive test, noting that it may be inappropriate in some circumstances, for example when smaller differences in selection rates are statistically significant. Although the guidance centers on selection decisions such as hiring and promotion, Title VII also prohibits discrimination in compensation, terms, and conditions of employment, which is where scheduling outcomes fall. The guidance further notes that employer liability is not diminished by the use of a third-party algorithm vendor: an employer may be responsible for disparate impact in tools it uses, regardless of who built them.

Emerging State Laws

Multiple U.S. states (including California, Illinois, and Washington) have introduced or passed legislation regulating algorithmic employment decisions or requiring transparency in automated HR tools. The landscape is evolving rapidly; practitioners should consult employment counsel for jurisdiction-specific obligations.

Fairness-Efficiency Tradeoffs

Bertsimas, Farias, and Trichakis (2011) quantify what they term the "price of fairness": the reduction in aggregate optimization objective that results from imposing fairness constraints in resource allocation problems.[10] Their analysis shows that the price of fairness varies dramatically across problem structures: in some cases equitable allocations cost very little in aggregate efficiency; in others the cost is substantial. For workforce scheduling specifically, equalizing weekend-off distribution across demographic groups may cost very little in total schedule quality if the scheduling problem has sufficient degrees of freedom; equalizing overtime allocation may cost more if agents differ systematically in skill profile and availability; eliminating seniority priority may increase dissatisfaction among senior workers while improving equity for junior cohorts.

The "price of fairness" framing is useful because it quantifies the cost of fairness constraints explicitly instead of treating them as an unmeasured drag on optimization quality, allowing decision-makers to assess whether the equity benefit justifies the efficiency cost. This is a values judgment that belongs to organizational leadership, not algorithm designers. The tradeoff is also not always material: in some scheduling problems, fairness constraints are non-binding at the optimum, or the cost of satisfying them is operationally negligible. Assuming a significant cost before measuring it can lead organizations to underinvest in fairness governance.
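The price of fairness can be measured directly on a small instance by solving the allocation twice, with and without the fairness constraint, and taking (efficient optimum minus constrained optimum) divided by the efficient optimum. Bertsimas, Farias, and Trichakis analyze proportional and max-min fairness; the sketch below swaps in a simpler group-balance constraint ("no group receives two weekend-off slots") as a stand-in. Utilities, agent ids, and the exhaustive search are illustrative; realistic problems would use an integer-programming solver.

```python
from itertools import combinations


def best_allocation(agents, slots, constraint=None):
    """Exhaustively choose `slots` agents to receive weekends off, maximizing
    total utility. agents: dict id -> (group, utility). Tiny instances only."""
    best, best_value = None, float("-inf")
    for chosen in combinations(sorted(agents), slots):
        if constraint is not None and not constraint(chosen, agents):
            continue
        value = sum(agents[a][1] for a in chosen)
        if value > best_value:
            best, best_value = chosen, value
    return best, best_value


def one_per_group(chosen, agents):
    """Illustrative fairness constraint: no group receives two slots."""
    groups = [agents[a][0] for a in chosen]
    return len(set(groups)) == len(groups)


def price_of_fairness(agents, slots, constraint):
    """Relative loss in total utility from imposing the constraint."""
    _, efficient = best_allocation(agents, slots)
    _, fair = best_allocation(agents, slots, constraint)
    return (efficient - fair) / efficient


agents = {"a1": ("A", 10), "a2": ("A", 9), "b1": ("B", 8), "b2": ("B", 2)}
print(best_allocation(agents, 2, one_per_group))  # (('a1', 'b1'), 18)
print(round(price_of_fairness(agents, 2, one_per_group), 3))  # 0.053
```

Here the unconstrained optimum gives both slots to group A (utility 19), while the balanced allocation reaches 18, a price of fairness of about 5%. Changing the hypothetical utilities shows how quickly that number can grow or vanish.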

Audit Methodologies

Disparate Impact Testing

The primary audit methodology for scheduling fairness is disparate impact testing using the 4/5ths rule: for each scheduling outcome of interest (desirable shift assignments, overtime, holiday allocations, break timing), calculate the selection rate for each demographic group and compare to the most-selected group. A ratio below 0.80 is a prima facie indicator of disparate impact. This calculation requires demographic data for the scheduled agent population (requiring legal guidance on collection and use), individual-level records of scheduling outcomes by group, and consistent definition of "desirable" and "undesirable" outcomes.

Disparate impact testing is a statistical test for discriminatory outcomes, not discriminatory intent. Under U.S. law, a showing of disparate impact triggers a burden-shifting framework: the employer must demonstrate that the practice is job-related and consistent with business necessity, and even if it does, the plaintiff can still prevail by showing that a less discriminatory alternative practice was available and the employer refused to adopt it.
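The 4/5ths calculation itself is simple arithmetic on selection rates. Because the ratio ignores sample size, audits often pair it with a significance test; the sketch below uses a pooled two-proportion z-test as one such choice (an assumption; very small groups would call for an exact test). Counts and group labels are hypothetical.

```python
from math import erfc, sqrt


def impact_ratios(selected, totals):
    """Each group's selection rate divided by the highest group's rate."""
    rates = {g: selected[g] / totals[g] for g in totals}
    top = max(rates.values())
    return {g: r / top for g, r in rates.items()}


def two_proportion_p_value(sel_a, n_a, sel_b, n_b):
    """Two-sided p-value of a pooled two-proportion z-test."""
    p_a, p_b = sel_a / n_a, sel_b / n_b
    pooled = (sel_a + sel_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    return erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))


# Hypothetical quarter: agents in each group who received a weekend-off rotation.
selected = {"A": 60, "B": 40}
totals = {"A": 100, "B": 100}
ratios = impact_ratios(selected, totals)
flagged = [g for g, r in ratios.items() if r < 0.8]
print(flagged, round(ratios["B"], 3))  # ['B'] 0.667
print(round(two_proportion_p_value(60, 100, 40, 100), 4))  # 0.0047
```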

Counterfactual Analysis

Counterfactual fairness asks whether a scheduling outcome would change if a worker's protected characteristic were different while all other relevant factors were held constant. Assessing this formally requires a causal model of how the protected characteristic influences other inputs. In scheduling practice, it can be approximated by running the scheduling algorithm with and without demographic-correlated features (seniority as a proxy for age, part-time status as a proxy for caregiving responsibilities) and comparing outcome distributions by group. Large differences suggest that these features are functioning as demographic proxies in the algorithm's output.
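A minimal sketch of the ablation approach: the same hypothetical greedy scheduler is run with and without seniority in its ranking key, and the change in each group's desirable-shift rate is reported. This is a feature-removal sensitivity check rather than formal counterfactual fairness; the rotation rule used as the fallback ranking, the field names, and the data are all assumptions.

```python
def assign_desirable(agents, slots, use_seniority):
    """Hypothetical greedy scheduler for desirable shifts.

    With use_seniority=True, seniority is the primary ranking key; with it
    removed, agents are ranked by rotation (longest wait since their last
    desirable shift first). Agent id breaks remaining ties."""
    def rank_key(agent):
        rotation = (-agent["weeks_waiting"], agent["id"])
        return ((-agent["seniority"],) + rotation) if use_seniority else rotation

    return {a["id"] for a in sorted(agents, key=rank_key)[:slots]}


def rate_by_group(agents, winners):
    """Share of each group that received a desirable shift."""
    totals, wins = {}, {}
    for a in agents:
        g = a["group"]
        totals[g] = totals.get(g, 0) + 1
        wins[g] = wins.get(g, 0) + int(a["id"] in winners)
    return {g: wins[g] / totals[g] for g in totals}


def ablation_shift(agents, slots):
    """Change in each group's desirable-shift rate when seniority is removed."""
    with_feature = rate_by_group(agents, assign_desirable(agents, slots, True))
    without = rate_by_group(agents, assign_desirable(agents, slots, False))
    return {g: without[g] - with_feature[g] for g in with_feature}


agents = [
    {"id": "o1", "group": "older", "seniority": 10, "weeks_waiting": 3},
    {"id": "o2", "group": "older", "seniority": 9, "weeks_waiting": 0},
    {"id": "y1", "group": "younger", "seniority": 2, "weeks_waiting": 2},
    {"id": "y2", "group": "younger", "seniority": 1, "weeks_waiting": 1},
]
print(ablation_shift(agents, slots=2))  # {'older': -0.5, 'younger': 0.5}
```

A shift of 0.5 in each direction from removing a single input is the kind of large difference that suggests seniority is acting as an age proxy in this (hypothetical) scheduler's output.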

Group Fairness Metrics

Beyond the 4/5ths rule, quantitative fairness audits can compute the demographic parity gap (the difference in favorable outcome rates between demographic groups), the equalized odds gap (the larger of the between-group differences in favorable outcome rates among qualified workers and among unqualified workers), and the Gini coefficient of schedule quality (a distributional inequality measure applied to schedule desirability scores across the agent population). These metrics should be monitored over time, not computed once: scheduling fairness can drift as workforce composition changes, demand patterns shift seasonally, or algorithm parameters are updated.
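The Gini coefficient of schedule quality can be computed from the mean absolute difference between all pairs of agents' desirability scores and tracked per period. The sketch below assumes non-negative scores; the scoring scale, period labels, and alert threshold are hypothetical policy choices.

```python
def gini(values):
    """Gini coefficient from the mean absolute difference (0 = perfectly equal)."""
    n = len(values)
    mean = sum(values) / n
    if mean == 0:
        return 0.0
    total = sum(abs(x - y) for x in values for y in values)
    return total / (2 * n * n * mean)


def drift_alerts(period_scores, threshold):
    """Periods whose schedule-quality Gini exceeds a policy-chosen threshold."""
    return [p for p, scores in period_scores.items() if gini(scores) > threshold]


# Hypothetical schedule-desirability scores for four agents in two quarters.
history = {"Q1": [1, 1, 1, 1], "Q2": [0, 0, 0, 1]}
print({p: gini(s) for p, s in history.items()})  # {'Q1': 0.0, 'Q2': 0.75}
print(drift_alerts(history, threshold=0.3))  # ['Q2']
```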

Human-in-the-Loop Requirements

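Applied to scheduling tools, the EU AI Act's requirements for high-risk AI systems translate into specific WFM governance obligations. Schedule planners must have a genuine capability to review, disregard, override, or reverse algorithm-generated schedules, not merely nominal authority that is impractical to exercise (Article 14). The Act's record-keeping and log-retention requirements (Articles 12 and 26) make it natural to log planner overrides so they are available for audit. Employers deploying such a system must inform affected workers and their representatives before putting it into use (Article 26(7)), and affected persons have a right to an explanation of individual decisions based on a high-risk system's output (Article 86), which in practice calls for a defined escalation path for workers who believe a scheduling decision was unfair.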

The practical implication is that fully automated schedule generation, where algorithm output is deployed without any opportunity for human review, is difficult to reconcile with the EU AI Act's human oversight requirements for high-risk systems. Workforce Management Governance and Change Management frameworks should therefore incorporate fairness review steps into the schedule approval workflow.
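An override audit trail can start as an append-only log of structured records. The sketch below is a minimal in-memory version; the record fields, the rule that every override needs a reason, and the JSON Lines export are assumptions about what an auditor might want, not a format prescribed by the EU AI Act.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideRecord:
    """One planner override of an algorithm-generated assignment (fields are assumptions)."""
    schedule_id: str
    agent_id: str
    planner_id: str
    algorithm_assignment: str
    final_assignment: str
    reason: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class OverrideLog:
    """Append-only, in-memory log; production use would need durable,
    tamper-evident storage and retention rules set with legal counsel."""

    def __init__(self):
        self._records = []

    def record(self, rec):
        if not rec.reason.strip():
            raise ValueError("an override reason is required for audit")
        self._records.append(rec)

    def for_agent(self, agent_id):
        return [r for r in self._records if r.agent_id == agent_id]

    def to_jsonl(self):
        """Export one JSON object per line for auditors."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records)


log = OverrideLog()
log.record(OverrideRecord("2024-W27", "a7", "p2", "night", "day", "documented caregiving constraint"))
print(len(log.for_agent("a7")), json.loads(log.to_jsonl())["final_assignment"])  # 1 day
```

Freezing the records and rejecting empty reasons are small design choices that keep the log useful for later review: entries cannot be edited in place, and every deviation from the algorithm's output carries a stated justification.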

Practical Steps for WFM Teams

WFM teams can take structured steps toward fairness compliance regardless of in-house legal or data science expertise:

- Inventory algorithmic tools: identify which scheduling, forecasting, and optimization tools use automated decision logic that affects individual schedules

- Assess regulatory exposure: determine which jurisdictions' laws apply based on where agents are located

- Document inputs and objectives: for each tool, document data inputs used, optimization objective, and how outputs translate to schedule decisions

- Run disparate impact tests: using existing schedule records and available demographic data, compute selection rates for desirable scheduling outcomes by demographic group

- Engage legal counsel: disparate impact findings require legal interpretation; fairness audit design and demographic data collection require employment law guidance

- Establish override and audit trails: ensure planner overrides are logged and that scheduling decisions can be reviewed for their effects on protected groups

- Disclose to workers: implement required disclosures where mandated by applicable law

Maturity Model Considerations

| Maturity Level | Algorithmic Fairness Posture |
|---|---|
| L1–L2 | Scheduling done manually or with basic tools; fairness not formally assessed; regulatory exposure may be unrecognized |
| L3 | Basic disparate impact testing conducted periodically; schedule outcomes reviewed for demographic patterns; documentation of scheduling tool inputs and objectives exists |
| L4 | Formal fairness audit program with defined metrics and cadence; human override capability operational; worker disclosure in place where legally required; fairness constraints optionally incorporated into optimization objective |
| L5 | Continuous automated fairness monitoring; audit trail integrated with governance framework; formal engagement with legal and HR on fairness-efficiency tradeoffs; counterfactual analysis capability for root-cause investigation; documented compliance posture for EU AI Act and applicable state/local laws |

Related Concepts

- Schedule Optimization

- Schedule Bidding and Preference Based Scheduling

- Schedule Generation

- Scheduling Methods

- Multi-Objective Optimization in Contact Center

- Agent Experience and Wellbeing

- Burnout and Schedule Induced Attrition

- Performance Management

- Adherence and Conformance

- Workforce Management Governance and Change Management

- WFM Roles

- WFM Labs Maturity Model

- Human AI Blended Staffing Models

- Agentic AI Workforce Planning

- Reporting and Analytics Framework

References

- [1] European Parliament and Council of the European Union. (2024). Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union.

- [2] New York City Council. (2021). Local Law 144 of 2021: Automated Employment Decision Tools. NYC Council.

- [3] Dwork, C., Hardt, M., Pitassi, T., Reingold, O., & Zemel, R. (2012). Fairness Through Awareness. Proceedings of the 3rd Innovations in Theoretical Computer Science Conference, 214–226.

- [4] Hardt, M., Price, E., & Srebro, N. (2016). Equality of Opportunity in Supervised Learning. Advances in Neural Information Processing Systems, 29.

- [5] Kleinberg, J., Mullainathan, S., & Raghavan, M. (2017). Inherent Trade-Offs in the Fair Determination of Risk Scores. Proceedings of the 8th Innovations in Theoretical Computer Science Conference (ITCS 2017).

- [6] Raghavan, M., Barocas, S., Kleinberg, J., & Levy, K. (2020). Mitigating Bias in Algorithmic Hiring: Evaluating Claims and Practices. Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (FAT* '20).

- [7] Ajunwa, I. (2020). The Paradox of Automation as Anti-Bias Intervention. Cardozo Law Review, 41(5), 1671–1742.

- [8] European Parliament and Council of the European Union. (2024). Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union.

- [9] New York City Council. (2021). Local Law 144 of 2021: Automated Employment Decision Tools. NYC Council.

- [10] Bertsimas, D., Farias, V. F., & Trichakis, N. (2011). The Price of Fairness. Operations Research, 59(1), 17–31.