Organizational Change Management for AI Workforce Transitions

Organizational change management (OCM) for AI workforce transitions refers to the structured application of behavioral, organizational, and communication frameworks to guide workforce management (WFM) teams through the adoption of artificial intelligence technologies. Unlike conventional technology deployments, AI transitions in workforce management alter not only tools but also role definitions, authority structures, and the fundamental nature of analytical work. The failure rate of large-scale digital and AI transformations remains high: McKinsey research has consistently reported that approximately 70% of transformation programs fail to achieve their stated objectives, with organizational and behavioral factors, rather than technology, as the primary cause of failure.[1]

In WFM specifically, AI transitions affect scheduling, forecasting, adherence management, real-time operations, and reporting functions simultaneously. Each function involves distinct role clusters with different skill profiles, change readiness levels, and exposure to role disruption. Effective change management therefore requires function-level granularity, not enterprise-generic communication plans.

Why AI Workforce Transitions Fail

The 70% failure rate for large transformations is not evenly distributed across failure modes. Technology malfunction accounts for a minority of failures. McKinsey's analysis identifies four primary causes: unclear or inconsistent vision of the desired future state; insufficient executive sponsorship translating to resource withdrawal under pressure; skill gaps that prevent teams from operating new systems effectively; and cultural resistance that manifests as passive non-adoption rather than overt opposition.[2]

In WFM AI transitions, each failure mode has a specific expression. Vision failure appears as inconsistent messaging about what AI will and will not do: analysts hear "AI will optimize schedules" without understanding what that means for their current responsibilities. Sponsorship failure appears as operations leadership supporting AI in planning sessions but reverting to manual overrides when service level pressure rises, signaling to analysts that the new system is not trusted. Skill gaps manifest as teams proficient in legacy WFM platforms but unable to interpret, validate, or override AI recommendations. Cultural resistance appears in adherence data: agents and supervisors comply nominally while circumventing AI-generated schedules through patterns of absence and swap requests.

The "will AI replace me" question operates across all four failure modes simultaneously. When this question is not addressed directly and honestly, it generates an ambient anxiety that degrades adoption of even technically sound implementations. Davenport and Kirby's research on automation and professional work suggests that explicit role redesign is a more effective organizational response than reassurance alone: changed job descriptions and performance metrics demonstrate that the organization intends augmentation rather than replacement.[3]

Role Evolution Mapping

Each WFM role undergoes a characteristic transition in an AI-augmented environment. Mapping these transitions explicitly is a prerequisite for designing targeted development programs.

WFM Analyst (Forecasting/Planning). Pre-transition: manual data extraction, spreadsheet-based variance analysis, interval-level volume forecasting. Post-transition: AI model governance, forecast exception investigation, input parameter management, and scenario interpretation. The skill transition requires statistical literacy sufficient to evaluate model outputs critically, not merely to run them—a meaningful step up in analytical capability. The analyst who cannot explain why the AI forecast diverged from actuals provides no governance value.

WFM Analyst (Real-Time/Intraday). Pre-transition: reactive monitoring, manual headcount adjustment, phone-based agent reallocation. Post-transition: exception-based oversight of automated intraday adjustment, threshold-setting for automated intervention, escalation decision authority. The role compresses in volume but elevates in consequence: automated systems handle routine adjustments while the analyst handles the edge cases that automation cannot.

WFM Scheduler. Pre-transition: manual schedule construction using rules, preference matching through spreadsheets. Post-transition: constraint configuration for optimization engines, exception review, and preference override adjudication. The scheduler transitions from schedule builder to schedule curator and constraint expert.

WFM Manager. Pre-transition: operational oversight, reporting synthesis, escalation handling. Post-transition: AI model performance monitoring, governance of automation boundaries, stakeholder communication on AI recommendation accuracy, and workforce planning strategy incorporating automation economics. The managerial role gains strategic scope but requires new competencies in model evaluation and organizational communication.

Reporting and Analytics Specialist. Pre-transition: static report production, dashboard maintenance. Post-transition: insight synthesis from AI-generated analytics, predictive model interpretation, connection of workforce health metrics to business outcomes. The role transitions from reporting to analysis.

Kotter's 8-Step Model Applied to WFM AI Transformation

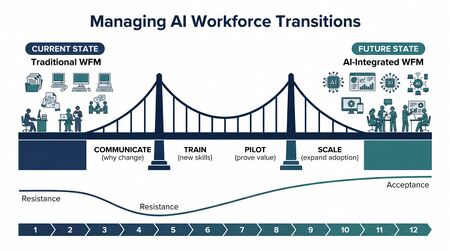

Kotter's eight-step model provides a sequenced framework for large-scale organizational change.[4] Applied to WFM AI transitions, each step has specific operational content.

Step 1: Create urgency. In WFM, urgency derives from competitive and operational pressures, not from general AI hype. The credible urgency case connects AI adoption to measurable workforce challenges: rising attrition costs, forecasting accuracy gaps that generate over- and understaffing costs, or labor market tightening that makes headcount growth unsustainable. Abstract urgency ("AI is the future") does not mobilize action; specific, quantified operational problems do.

Step 2: Build the guiding coalition. The coalition for WFM AI transformation must span operations (service level accountability), HR (role design and talent development), IT (integration and data governance), and finance (budget authority). A coalition confined to WFM or IT will encounter veto points it cannot navigate.

Step 3: Form strategic vision. The vision must specify what AI will handle, what humans will handle, and how the boundary will be maintained. Vague visions ("AI-powered workforce management") generate anxiety by leaving the human role undefined. A specific vision—"AI generates forecasts and schedule recommendations; WFM analysts validate, configure, and govern; operations retains override authority"—gives each role group a clear position in the future state.

Step 4: Enlist a volunteer army. Early adopters within WFM teams—typically analysts with higher statistical proficiency or curiosity about automation—can be identified and elevated as internal champions. Their credibility with peers is higher than that of external consultants or senior leadership.

Step 5: Enable action by removing barriers. Barriers in WFM AI transitions include: legacy system data not available to AI models (IT integration backlog), performance metrics still anchored to manual process outputs (HR policy), and supervisors who revert to spreadsheets under pressure (management behavior). Removing barriers requires authority to change systems, policies, and incentives—not merely training delivery.

Step 6: Generate short-term wins. In WFM, short-term wins should be measurable and attributable. A 2-percentage-point improvement in forecast accuracy in a pilot team, or a reduction in scheduling time that is visible to schedulers, creates tangible evidence that the new model works. Wins must be communicated specifically, with attribution to the new approach.

Step 7: Sustain acceleration. After early wins, premature declaration of success is the most common failure mode. The full transition of WFM analytical roles requires 12–24 months; early wins typically represent 20–30% of the total change. Sustained acceleration requires maintaining organizational attention, continuing skill development, and expanding the scope of AI-governed decisions progressively.

Step 8: Institute change. Change is institutionalized when AI-augmented processes are embedded in job descriptions, performance metrics, onboarding curricula for new analysts, and standard operating procedures. Until those institutional artifacts reflect the new operating model, reversion risk remains.
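The short-term win described in Step 6 (a 2-percentage-point improvement in forecast accuracy for a pilot team) is easier to communicate when the calculation behind it is explicit. The sketch below is one possible way to compute it, not a prescribed method. It assumes accuracy is defined as 100% minus the weighted absolute percentage error (WAPE), which the article does not specify. The function names and interval volumes are hypothetical.

```python
from typing import Sequence


def forecast_accuracy(forecast: Sequence[float], actual: Sequence[float]) -> float:
    """Accuracy in percent, defined here (as an assumption) as 100 * (1 - WAPE)."""
    if len(forecast) != len(actual) or len(actual) == 0:
        raise ValueError("forecast and actual must be non-empty and of equal length")
    total_actual = sum(actual)
    if total_actual == 0:
        raise ValueError("actual volume sums to zero; WAPE is undefined")
    abs_error = sum(abs(f - a) for f, a in zip(forecast, actual))
    return 100.0 * (1.0 - abs_error / total_actual)


def accuracy_gain_points(
    baseline_forecast: Sequence[float],
    pilot_forecast: Sequence[float],
    actual: Sequence[float],
) -> float:
    """Percentage-point difference between pilot and baseline accuracy."""
    return forecast_accuracy(pilot_forecast, actual) - forecast_accuracy(
        baseline_forecast, actual
    )


# Illustrative interval volumes for one pilot team (made-up numbers).
actual_volume = [100, 120, 80, 100]
legacy_forecast = [110, 110, 90, 90]   # pre-transition process
ai_forecast = [108, 112, 88, 92]       # AI-assisted process

gain = accuracy_gain_points(legacy_forecast, ai_forecast, actual_volume)
print(f"Accuracy gain: {gain:.1f} percentage points")
```

The sample data is constructed so that the gain works out to 2 points (90% versus 92% accuracy). Reporting the gain in percentage points, with the accuracy definition stated alongside it, keeps the win attributable and hard to misread as a relative change.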

ADKAR Model: Where WFM Roles Get Stuck

The ADKAR model (Prosci) provides an individual-level adoption framework that complements Kotter's organizational focus.[5] Each ADKAR stage has a characteristic failure point by WFM role.

Awareness (understanding that change is needed): WFM analysts often have high awareness of AI's general existence but low awareness of how it will affect their specific workflow. Communication plans that describe AI at the system level without specifying role-level impact leave analysts in a state of anxious awareness without directional clarity.

Desire (motivation to support the change): Desire failure is most acute in senior WFM professionals whose expertise is embedded in current processes. An analyst who has mastered Erlang-based staffing models over a decade has real losses, not imaginary ones, when AI replaces that skill. Desire cannot be manufactured through communication alone; it requires visible organizational commitment to role continuity and investment in capability development.

Knowledge (knowing how to change): Training programs that focus on tool mechanics—how to use the AI scheduling interface—without addressing analytical judgment—how to evaluate AI recommendations critically—leave a knowledge gap at the consequential decision point. Knowledge for WFM AI adoption includes both technical operation and evaluative judgment.

Ability (demonstrated performance in the new way): Ability gaps appear most clearly in real-time operations, where analysts must make exception decisions under time pressure with AI recommendations as the primary input. Supervised practice with feedback—not just classroom training—is required to develop ability.

Reinforcement (sustaining the change): Without updated performance metrics that reflect the new operating model, analysts revert to familiar behaviors. A scheduler evaluated on schedule coverage percentage has no reinforcement signal for the AI configuration quality that now determines that coverage. Metric redesign is a prerequisite for sustained reinforcement.
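Because ADKAR stages are sequential, a role group is typically stuck at the earliest stage that scores poorly, regardless of how later stages look. The sketch below illustrates that idea under stated assumptions: stage scores come from a hypothetical 1 to 5 survey scale, and a score of 3 or below is treated as a barrier. The threshold, role names, and scores are illustrative and not taken from the article.

```python
from typing import Mapping, Optional

# ADKAR stages in their required order.
ADKAR_STAGES = ("awareness", "desire", "knowledge", "ability", "reinforcement")


def barrier_point(scores: Mapping[str, float], threshold: float = 3.0) -> Optional[str]:
    """Return the first stage, in ADKAR order, scoring at or below the threshold.

    Returns None when every stage scores above the threshold.
    The 1-5 scale and the default threshold are assumptions.
    """
    for stage in ADKAR_STAGES:
        if stage not in scores:
            raise KeyError(f"missing score for stage: {stage}")
        if scores[stage] <= threshold:
            return stage
    return None


# Hypothetical average survey scores by WFM role.
role_scores = {
    "senior_forecasting_analyst": {
        "awareness": 4.5, "desire": 2.0, "knowledge": 3.5,
        "ability": 2.5, "reinforcement": 2.0,
    },
    "scheduler": {
        "awareness": 4.0, "desire": 4.0, "knowledge": 4.0,
        "ability": 3.5, "reinforcement": 2.5,
    },
}

for role, scores in role_scores.items():
    print(role, "->", barrier_point(scores))
```

In this made-up data the senior analyst is blocked at Desire even though Ability and Reinforcement also score low, which mirrors the point above: training aimed at later stages does little until the earlier stage is addressed.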

Psychological Safety and the Replacement Fear

Edmondson's research on psychological safety—the belief that one can speak up, question, or admit error without fear of punishment—is directly applicable to WFM AI transitions.[6] Teams with low psychological safety will not surface concerns about AI recommendation errors, will not admit uncertainty about new workflows, and will not report when automated systems produce obviously wrong outputs. All three silence behaviors are hazardous in AI-governed workforce management.

The "will AI replace me" fear operates as a psychological safety suppressor. Analysts who believe that expressing concern about AI will be read as resistance—and that resistance will mark them as candidates for elimination—will remain silent. Building psychological safety in WFM AI transitions requires explicit, credible signals from leadership: public acknowledgment that transition is genuinely difficult, visible tolerance of questions and critique about AI systems, and demonstrated follow-through when analysts surface model errors.

The MIT Work of the Future Task Force's analysis of AI adoption across professional functions found that worker anxiety about job displacement is highest not in roles that AI actually displaces but in roles adjacent to automation where the scope of impact is ambiguous.[7] WFM analyst roles sit precisely in this ambiguous zone: clearly affected but not eliminated. Ambiguity management—providing specific, honest information about role scope changes—is more effective than reassurance at reducing anxiety.

Stakeholder Management

The influence/interest grid for a WFM AI transformation sorts stakeholders into four quadrants, each with its own engagement approach:

High influence, high interest (manage closely): Operations leadership (service level accountability and direct authority over WFM teams); senior WFM management (program ownership and role design authority); CTO/CIO (infrastructure and integration decisions).

High influence, lower interest (keep satisfied): CFO and finance (budget approval and cost model changes); HR leadership (role redesign, job grade implications, and development investment).

Lower influence, high interest (keep informed): WFM analysts and schedulers (direct role impact); frontline supervisors (schedule acceptance and override behavior); real-time operations teams (adherence monitoring changes).

Lower influence, lower interest (monitor): Internal audit (governance and controls); legal and compliance (data privacy implications of AI workforce decisions).

Stakeholder maps must be maintained dynamically. A CFO initially categorized as lower interest becomes high interest when automation economics data surfaces unexpected implementation costs. A frontline supervisor group initially mapped as lower influence can become consequential through collective circumvention behavior that undermines service level outcomes.
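The grid and its dynamic re-mapping can be sketched as a small data structure. This is a minimal illustration, assuming a hypothetical 1 to 5 rating for influence and interest with a cutoff of 3. The scale, cutoff, and ratings are assumptions chosen for the example, not part of any standard.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Stakeholder:
    name: str
    influence: int  # 1 (low) to 5 (high); hypothetical scale
    interest: int   # 1 (low) to 5 (high); hypothetical scale


def engagement_strategy(stakeholder: Stakeholder, cutoff: int = 3) -> str:
    """Map a stakeholder to one of the four grid quadrants.

    Ratings strictly above the cutoff count as high (an assumption).
    """
    high_influence = stakeholder.influence > cutoff
    high_interest = stakeholder.interest > cutoff
    if high_influence and high_interest:
        return "manage closely"
    if high_influence:
        return "keep satisfied"
    if high_interest:
        return "keep informed"
    return "monitor"


# Initial mapping: the CFO has budget authority but limited day-to-day interest.
cfo = Stakeholder(name="CFO", influence=5, interest=2)
print(cfo.name, "->", engagement_strategy(cfo))

# Unexpected implementation costs surface, so the CFO's interest is re-rated.
cfo_after_cost_overrun = replace(cfo, interest=5)
print(cfo_after_cost_overrun.name, "->", engagement_strategy(cfo_after_cost_overrun))
```

Keeping the ratings as data rather than as a static diagram makes it practical to re-score stakeholders at each program checkpoint and to see which ones have changed quadrant.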

Measuring Change Adoption

Training completion rates are a lagging and insufficient measure of adoption. Organizations that report high training completion alongside stalled behavioral change are measuring inputs, not outcomes. Leading indicators of genuine adoption include:

AI recommendation acceptance rate. The proportion of AI-generated schedules or forecasts accepted without manual override. Declining acceptance rates are diagnostic signals: they may indicate model degradation, analyst distrust, or legitimate quality issues requiring investigation.

Tool usage depth. Whether analysts are using AI capabilities beyond the minimum required—running scenario analyses, configuring custom constraints, accessing model explanation features—indicates genuine capability development versus compliance theater.

Escalation pattern analysis. If real-time analysts are escalating an increasing proportion of intraday decisions to managers (rather than acting on AI recommendations), this signals ability gaps or low confidence in AI outputs.

Confidence surveys. Targeted surveys asking analysts to rate their confidence in evaluating AI recommendations (not merely using AI tools) provide a direct measure of the Knowledge and Ability stages of ADKAR. These should be separated from engagement surveys to avoid conflation.

Error surfacing rate. The rate at which analysts surface AI model errors through formal channels is both a positive adoption signal and a psychological safety signal. A team that never surfaces AI errors either is working with a perfect system or lacks the analytical confidence to identify errors or the safety to report them.
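Several of the indicators above reduce to simple ratios over operational counts. The sketch below shows one way they might be computed from weekly tallies. The record layout, field names, and sample numbers are hypothetical, and real WFM platforms will expose this data differently.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class WeeklyCounts:
    """Hypothetical weekly tallies; field names are illustrative assumptions."""
    recommendations: int     # AI-generated schedules or forecasts issued
    overridden: int          # recommendations manually overridden
    intraday_decisions: int  # intraday decisions handled by real-time analysts
    escalated: int           # of those, decisions escalated to a manager
    errors_reported: int     # AI model errors raised through formal channels


def _ratio(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def acceptance_rate(week: WeeklyCounts) -> Optional[float]:
    """Share of AI recommendations accepted without manual override."""
    return _ratio(week.recommendations - week.overridden, week.recommendations)


def escalation_rate(week: WeeklyCounts) -> Optional[float]:
    """Share of intraday decisions escalated rather than acted on."""
    return _ratio(week.escalated, week.intraday_decisions)


def errors_per_100_recommendations(week: WeeklyCounts) -> Optional[float]:
    """Formally reported model errors per 100 recommendations."""
    rate = _ratio(week.errors_reported, week.recommendations)
    return None if rate is None else 100.0 * rate


def trailing_declines(series: Sequence[float]) -> int:
    """Count consecutive week-over-week declines at the end of a series."""
    count = 0
    for prev, curr in zip(reversed(series[:-1]), reversed(series[1:])):
        if curr < prev:
            count += 1
        else:
            break
    return count


# Three weeks of made-up data for one pilot team.
weeks = [
    WeeklyCounts(200, 20, 50, 5, 3),
    WeeklyCounts(200, 30, 50, 8, 2),
    WeeklyCounts(200, 44, 50, 12, 0),
]
acceptance = [acceptance_rate(w) for w in weeks]
print("acceptance by week:", acceptance)
print("consecutive declining weeks:", trailing_declines(acceptance))
print("escalation by week:", [escalation_rate(w) for w in weeks])
print("errors per 100:", [errors_per_100_recommendations(w) for w in weeks])
```

In this illustrative data, acceptance falls while escalations rise and reported errors drop to zero. The numbers alone cannot say whether the cause is model degradation, distrust, or a silence problem; they indicate where to investigate.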

The Communication Challenge

Communicating honestly about AI workforce changes requires navigating a genuine tension: providing enough specific information to manage anxiety without creating more anxiety through uncertainty. Several principles apply.

Communications should distinguish between what is known (specific tool capabilities, timeline for deployment, initial role changes) and what is not known (long-term staffing levels, future role evolution beyond current planning horizon). Treating unknown futures as known—in either direction—destroys credibility when reality diverges.

Communications should address the "what does this mean for me" question at the role level, not the enterprise level. Analysts understand their own work; they do not find enterprise-level abstractions reassuring.

Communications should be two-way. Forums where analysts can ask questions and receive substantive answers, rather than scripted reassurance, build more trust than broadcast communications. Questions left unanswered tend to be filled with worst-case assumptions.

The governance framework for AI transitions should specify who has authority to answer what questions, so that analysts know where to direct concerns and managers know what they are authorized to commit to.

Maturity Model Considerations

Within the WFM Labs Maturity Model, change management capability is an organizational competency distinct from technical implementation capability. Organizations at lower maturity levels deploy AI tools without structured adoption programs, resulting in tool availability without behavioral change. Organizations at higher maturity levels integrate change management into technology project governance from the outset, treating adoption metrics as project success criteria alongside technical deliverables.

The WFM Assessment process should evaluate change management readiness as a precondition for AI implementation scoping. Organizations with low psychological safety, high analyst turnover, or weak executive sponsorship are poor candidates for accelerated AI deployment regardless of their technical readiness.

The WFM Center of Excellence (CoE) is a natural organizational vehicle for change management in AI transitions, providing structural continuity across implementation phases and a permanent home for capability development. CoEs that include a change management or organizational effectiveness function are better positioned to sustain AI adoption than those that treat implementation as a project with a defined end date.

Related Concepts

- WFM Roles

- WFM Processes

- Workforce Management Governance and Change Management

- WFM Center of Excellence CoE Design

- WFM Assessment

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- AI Containment Rate and Its Workforce Implications

- Performance Management

- Agent Experience and Wellbeing

- Burnout and Schedule Induced Attrition

- Workforce Health Metrics and Leading Indicators

- WFM Labs Maturity Model

References

- [1] McKinsey & Company. (2023). Losing from day one: Why even successful transformations fall short. McKinsey Global Institute.

- [2] McKinsey & Company. (2023). Losing from day one: Why even successful transformations fall short. McKinsey Global Institute.

- [3] Davenport, T. H., & Kirby, J. (2016). Only Humans Need Apply: Winners and Losers in the Age of Smart Machines. Harper Business.

- [4] Kotter, J. P. (2012). Leading Change. Harvard Business Review Press.

- [5] Prosci. (2023). The Prosci ADKAR Model (12th ed.). Prosci Inc.

- [6] Edmondson, A. C. (2019). The Fearless Organization: Creating Psychological Safety in the Workplace for Learning, Innovation, and Growth. Wiley.

- [7] MIT Work of the Future Task Force. (2022). The Work of the Future: Building Better Jobs in an Age of Intelligent Machines. MIT Press.