AI Cost Modeling for Workforce Operations

AI cost modeling for workforce operations applies financial analysis to the operational costs of running AI agents as productive workforce members. Unlike human labor costs, which are dominated by salary, benefits, and overhead, AI agent costs are driven by token consumption, infrastructure provisioning, monitoring overhead, human supervision, and error remediation. The economics are fundamentally different. Within staffed capacity, human agents have high fixed costs and near-zero marginal cost per interaction, because the agent is paid whether or not another contact arrives. AI agents have low fixed costs and a measurable marginal cost for every interaction. This difference transforms workforce financial planning and creates optimization opportunities that traditional Workforce Cost Modeling frameworks do not address.

For capacity implications of cost decisions, see AI Agent Capacity Planning. For governance of cost controls, see AI Workforce Governance Frameworks. For quality-cost tradeoffs, see AI Agent Quality Assurance.

Token Economics

The fundamental unit of AI agent cost is the token — a subword unit processed by language models. Token economics governs operating cost the way labor rates govern human staffing cost.

Token Pricing Structure

Cloud LLM providers price tokens asymmetrically:

| Component | Description | Why It Costs What It Does |
|---|---|---|
| Input tokens | Tokens sent to the model (system prompt, conversation history, retrieved context, user message) | Processed in parallel during prefill; compute cost scales with sequence length |
| Output tokens | Tokens generated by the model (agent response, tool calls, internal reasoning) | Generated sequentially, one forward pass per token; typically 3–5× more expensive than input tokens |
| Cached input tokens | Input tokens that match a previously cached prefix (system prompt, repeated instructions) | Discounted (typically 50–90%) because the cached KV state is reused instead of recomputed |
| Reasoning tokens | Internal chain-of-thought tokens in reasoning models (o1, o3, Claude with extended thinking) | Billed at the output-token rate but not shown to the user; can be 2–10× the visible output token count |

Model Tier Pricing (2025–2026 Ranges)

| Model Tier | Examples | Input (per 1M tokens) | Output (per 1M tokens) | Best For |
|---|---|---|---|---|
| Frontier | GPT-4o, Claude Opus, Gemini Ultra | $2.50–$15.00 | $10.00–$75.00 | Complex reasoning, nuanced customer interactions, compliance-critical decisions |
| Mid-tier | GPT-4o-mini, Claude Sonnet, Gemini Pro | $0.15–$3.00 | $0.60–$15.00 | Standard customer service, moderate complexity, good cost-quality balance |
| Economy | GPT-4.1-mini, Claude Haiku, Gemini Flash | $0.01–$0.40 | $0.04–$1.60 | High-volume simple queries, classification, routing, FAQ |
| Fine-tuned | Custom models on any tier | 2–6× base tier pricing | 2–6× base tier pricing | Domain-specific tasks where fine-tuning reduces token usage or improves accuracy |
| Self-hosted | Llama, Mistral, Qwen on own infrastructure | $0.005–$0.10 (amortized) | $0.02–$0.40 (amortized) | High volume with data sovereignty requirements; costs are infrastructure-amortized |

Prices change rapidly. The trend from 2023–2026 shows a consistent 40–60% annual price decline per quality tier, with new model generations delivering better quality at the previous generation's mid-tier price point.
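For budgeting, the decline can be approximated as a constant geometric rate. That is an assumption, since the text gives only a 40–60% range. The sketch below is minimal, and `projected_price` is a hypothetical helper. The $15.00 starting point is the top of the mid-tier output range in the table above.

```python
def projected_price(price_today: float, annual_decline: float, years: float) -> float:
    """Project a per-1M-token price forward, assuming a constant annual decline.

    Hypothetical helper: real prices fall in steps as new models launch,
    not smoothly, so treat the result as a planning estimate only.
    """
    if not 0.0 <= annual_decline < 1.0:
        raise ValueError("annual_decline must be in [0, 1)")
    return price_today * (1.0 - annual_decline) ** years


if __name__ == "__main__":
    # $15.00 per 1M output tokens: top of the mid-tier range in the table above.
    for decline in (0.40, 0.60):
        price = projected_price(15.00, decline, years=2)
        print(f"{decline:.0%} annual decline: ${price:.2f} per 1M tokens after 2 years")
```

At the two ends of the range, $15.00 falls to about $5.40 or $2.40 within two years. This is why mid-year price changes belong in budget scenarios.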

Cost-Per-Interaction Calculation

The core cost formula for a single AI agent interaction:

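cost_per_interaction = ((tokens_in - cached_tokens) × price_in) + (cached_tokens × price_cached) + (tokens_out × price_out) + oversight_cost_per_interaction + error_remediation_cost_per_interaction

Here tokens_in is the total input token count and cached_tokens is the portion served from the prompt cache. tokens_out includes any reasoning tokens, which are billed at the output rate.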

Worked Example: Three Model Tiers

Scenario: A standard customer service interaction averages 2,000 input tokens (800 of them cached system-prompt tokens) and 600 output tokens. Cache savings are shown for reference only. They are already reflected in the input cost, so raw token cost is input cost plus output cost. Oversight is modeled as 15% of raw token cost.

| Model Tier | Input Cost | Output Cost | Cache Savings (memo) | Raw Token Cost | With Oversight (+15%) | Error Remediation | Total per Interaction |
|---|---|---|---|---|---|---|---|
| Frontier (Opus-class) | (2,000-800)×$10/1M + 800×$1/1M = $0.0128 | 600×$30/1M = $0.0180 | $0.0072 | $0.0308 | $0.0354 | $0.0050 | $0.040 |
| Mid-tier (Sonnet-class) | (2,000-800)×$3/1M + 800×$0.30/1M = $0.0038 | 600×$15/1M = $0.0090 | $0.0022 | $0.0128 | $0.0148 | $0.0035 | $0.018 |
| Economy (Haiku-class) | (2,000-800)×$0.25/1M + 800×$0.025/1M = $0.0003 | 600×$1.25/1M = $0.0008 | $0.0002 | $0.0011 | $0.0012 | $0.0025 | $0.004 |

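Token cost rises with model tier, while error remediation cost falls as model quality improves. Balancing the two is the central cost-quality tradeoff. Economy models are cheap per token but generate more errors requiring human intervention. Frontier models are expensive per token but produce fewer errors. In this example, the remediation allowances are small compared with the gaps in token cost, so the economy tier comes out cheapest. Once remediation is priced at realistic per-error costs, the remediation term dominates. The TCAO example below assumes $5 per remediation. Under those conditions, the minimum total cost often sits at the mid-tier.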
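The per-interaction formula can be checked in code. This minimal sketch uses the illustrative prices and remediation allowances of the three-tier scenario. It models oversight as 15% of raw token cost. Cache savings are treated as already reflected in the cached-token rate, so raw token cost is simply input plus output cost. `TierPricing` and the function names are hypothetical.

```python
from dataclasses import dataclass

PER_MILLION = 1_000_000


@dataclass(frozen=True)
class TierPricing:
    """Illustrative tier rates from the worked example (hypothetical, not quotes)."""
    name: str
    price_in: float      # USD per 1M uncached input tokens
    price_cached: float  # USD per 1M cached input tokens
    price_out: float     # USD per 1M output tokens (reasoning tokens bill here too)
    remediation: float   # error-remediation allowance, USD per interaction


def raw_token_cost(p: TierPricing, tokens_in: int, cached_tokens: int, tokens_out: int) -> float:
    """Input plus output cost; the cache discount is applied via price_cached."""
    if not 0 <= cached_tokens <= tokens_in:
        raise ValueError("cached_tokens must be between 0 and tokens_in")
    return ((tokens_in - cached_tokens) * p.price_in
            + cached_tokens * p.price_cached
            + tokens_out * p.price_out) / PER_MILLION


def cost_per_interaction(p: TierPricing, tokens_in: int, cached_tokens: int,
                         tokens_out: int, oversight_rate: float = 0.15) -> float:
    """Raw token cost, plus oversight as a share of it, plus remediation."""
    raw = raw_token_cost(p, tokens_in, cached_tokens, tokens_out)
    return raw * (1 + oversight_rate) + p.remediation


TIERS = [
    TierPricing("Frontier", 10.00, 1.00, 30.00, 0.0050),
    TierPricing("Mid-tier", 3.00, 0.30, 15.00, 0.0035),
    TierPricing("Economy", 0.25, 0.025, 1.25, 0.0025),
]

if __name__ == "__main__":
    for tier in TIERS:
        raw = raw_token_cost(tier, 2_000, 800, 600)
        total = cost_per_interaction(tier, 2_000, 800, 600)
        print(f"{tier.name:<9} raw=${raw:.4f}  total=${total:.3f}")
```

For the mid-tier, this gives a raw token cost of about $0.0128 and a total of about $0.018 per interaction.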

Total Cost of AI Ownership (TCAO)

Token costs are typically only 30–50% of total AI agent operating cost. The full TCAO includes:

| Cost Category | Components | Typical Share of TCAO |
|---|---|---|
| Token/API costs | Input tokens, output tokens, cached tokens, reasoning tokens | 30–50% |
| Infrastructure | API platform fees, self-hosted GPU amortization, networking, storage | 10–20% |
| Monitoring and observability | Quality monitoring tools, dashboards, alerting, log storage | 5–10% |
| Human oversight | QA analysts reviewing AI outputs, escalation handling, calibration | 15–25% |
| Error remediation | Re-handling interactions where AI failed, customer recovery, complaint handling | 5–15% |
| Prompt engineering and maintenance | Ongoing prompt optimization, A/B testing, model migration | 3–8% |
| Compliance and audit | Regulatory compliance, audit trail storage, bias monitoring | 2–5% |

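A common mistake in AI business cases is comparing raw token cost per interaction against human fully loaded cost per interaction. Token/API costs are typically only 30–50% of TCAO, so this comparison understates AI costs by 50–70%. The gap is far larger when error remediation dominates, and the result is unrealistic ROI projections.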

Monthly TCAO Worked Example

Operation: 200,000 AI-handled interactions per month using a mid-tier model.

| Category | Monthly Cost | Per Interaction |
|---|---|---|
| Token costs | $3,000 (200K × $0.015) | $0.015 |
| API platform fee | $500 | $0.003 |
| Monitoring tools | $800 | $0.004 |
| Human oversight (0.5 FTE QA analyst at $65K loaded) | $2,708 | $0.014 |
| Error remediation (3% error rate, $5/remediation) | $30,000 | $0.150 |
| Prompt engineering (0.25 FTE engineer at $120K loaded) | $2,500 | $0.013 |
| Compliance/audit | $400 | $0.002 |
| Total TCAO | $39,908 | $0.200 |

The dominant cost is error remediation, not tokens. Remediation accounts for roughly 75% of TCAO here, far above the typical 5–15% share in the cost-category table, while tokens fall below 8%. An AI agent that handles 97% of interactions correctly still generates 6,000 errors per month requiring human intervention. This is why AI Agent Quality Assurance is not merely a quality concern but a financial imperative. Reducing the error rate from 3% to 1.5% saves $15,000/month, five times the entire $3,000 monthly token bill.
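The monthly TCAO arithmetic can be reproduced in a few lines. This sketch hard-codes the example's line items in a hypothetical `monthly_tcao` function. Tokens and remediation are proportional to volume. All other items, including the 0.5 FTE oversight analyst, stay at their monthly amounts.

```python
def monthly_tcao(volume: int, token_cost_per_interaction: float,
                 error_rate: float, cost_per_remediation: float) -> dict[str, float]:
    """Monthly TCAO line items in USD, using the worked example's figures."""
    return {
        "tokens": volume * token_cost_per_interaction,
        "api_platform_fee": 500.0,
        "monitoring_tools": 800.0,
        "human_oversight": 0.5 * 65_000 / 12,       # 0.5 FTE QA analyst, $65K loaded
        "error_remediation": volume * error_rate * cost_per_remediation,
        "prompt_engineering": 0.25 * 120_000 / 12,  # 0.25 FTE engineer, $120K loaded
        "compliance_audit": 400.0,
    }


if __name__ == "__main__":
    volume = 200_000
    base = monthly_tcao(volume, 0.015, error_rate=0.03, cost_per_remediation=5.0)
    better = monthly_tcao(volume, 0.015, error_rate=0.015, cost_per_remediation=5.0)
    total = sum(base.values())
    print(f"TCAO ${total:,.0f}/month, ${total / volume:.3f} per interaction")
    print(f"remediation share {base['error_remediation'] / total:.0%}, "
          f"token share {base['tokens'] / total:.1%}")
    print(f"3% -> 1.5% error rate saves ${total - sum(better.values()):,.0f}/month")
```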

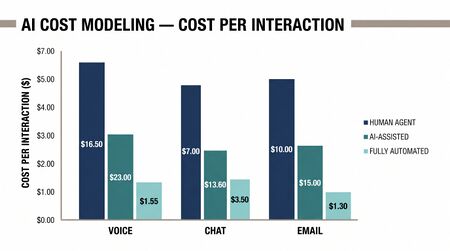

Break-Even Analysis: AI vs Human Agents

The break-even point occurs where AI total cost per interaction equals human total cost per interaction.

Human Agent Cost Baseline

| Component | Low End | Mid Range | High End |
|---|---|---|---|
| Annual salary | $32,000 | $42,000 | $65,000 |
| Benefits (25–35% of salary) | $8,000 | $12,600 | $22,750 |
| Overhead (facilities, IT, management; 20–30% of salary + benefits) | $8,000 | $13,650 | $26,325 |
| Training and development | $2,000 | $3,500 | $6,000 |
| Fully loaded annual cost | $50,000 | $71,750 | $120,075 |
| Productive hours/year (after PTO and shrinkage) | 1,600 | 1,600 | 1,600 |
| Interactions/hour (6 min AHT at 80% occupancy) | 8 | 8 | 8 |
| Annual interactions per agent | 12,800 | 12,800 | 12,800 |
| Cost per interaction | $3.91 | $5.61 | $9.38 |
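The baseline table follows from a short calculation. In this sketch, the function name is hypothetical. Benefits are a percentage of salary, and overhead is a percentage of salary plus benefits. Eight interactions per hour corresponds to a 6-minute AHT at 80% occupancy. These rates are inferred from the table's figures rather than stated in it.

```python
def human_cost_per_interaction(salary: float, benefits_rate: float, overhead_rate: float,
                               training: float, productive_hours: float = 1_600,
                               aht_minutes: float = 6.0, occupancy: float = 0.80) -> float:
    """Fully loaded annual cost divided by annual interactions handled."""
    benefits = salary * benefits_rate
    overhead = (salary + benefits) * overhead_rate  # overhead applied to salary + benefits
    loaded = salary + benefits + overhead + training
    per_hour = 60.0 / aht_minutes * occupancy       # 10 contacts/hour * 0.8 = 8
    return loaded / (productive_hours * per_hour)


if __name__ == "__main__":
    bands = {
        "Low": (32_000, 0.25, 0.20, 2_000),
        "Mid": (42_000, 0.30, 0.25, 3_500),
        "High": (65_000, 0.35, 0.30, 6_000),
    }
    for label, args in bands.items():
        print(f"{label:<4} ${human_cost_per_interaction(*args):.2f}")
```

Occupancy is a sensitive input. At 85% occupancy, the mid-range cost falls to about $5.28 per interaction.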

Break-Even Curves

At the mid-tier TCAO of $0.20 per AI interaction versus $5.61 per human interaction, AI is approximately 28× cheaper per interaction on a fully loaded basis at this volume. However, this comparison only holds when:

- The AI agent resolves the interaction to customer satisfaction (no escalation, no re-contact)

- The error rate is included in the AI cost (it is, in the TCAO model above)

- Volume is sufficient to amortize fixed costs (monitoring, engineering, compliance)

The break-even volume — the minimum monthly volume at which AI agent deployment is cost-justified — depends on the fixed cost investment:

break_even_volume = fixed_monthly_costs / (human_cost_per_interaction - AI_variable_cost_per_interaction)

Using the worked example:

- Fixed monthly costs (monitoring + engineering + compliance) = $3,700

- Variable AI cost per interaction = $0.182 (tokens + API platform fee + oversight + error remediation, all treated as scaling with volume)

- Human cost per interaction = $5.61

break_even_volume = $3,700 / ($5.61 - $0.182) ≈ 681.7, rounded up to 682 interactions/month

At roughly 680 interactions per month, AI deployment breaks even. This low threshold helps explain the rapid adoption of AI agents: the economics are compelling at virtually any meaningful scale.
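Volume comes in whole interactions, so the break-even point is the ceiling of the ratio. The following small sketch uses a hypothetical function name. It also shows how the threshold moves across the low, mid, and high human-cost bands from the baseline table.

```python
import math


def break_even_volume(fixed_monthly: float, human_cpi: float, ai_variable_cpi: float) -> int:
    """Smallest monthly volume at which per-interaction savings cover AI fixed costs."""
    margin = human_cpi - ai_variable_cpi  # saving on each interaction moved to AI
    if margin <= 0:
        raise ValueError("no break-even: AI variable cost is not below human cost")
    return math.ceil(fixed_monthly / margin)


if __name__ == "__main__":
    # Fixed $3,700 and variable $0.182 from the worked example.
    for human_cpi in (3.91, 5.61, 9.38):
        volume = break_even_volume(3_700, human_cpi, 0.182)
        print(f"human ${human_cpi:.2f}: {volume} interactions/month")
```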

When the Math Doesn't Work

AI agents are not cost-effective when:

- Error remediation costs dominate: If the AI error rate exceeds ~15% in interactions where each error is expensive to fix, remediation costs overwhelm the labor savings. This happens with complex, high-stakes interactions where errors require senior staff to resolve.

- Compliance overhead is extreme: Highly regulated interactions (healthcare advice, financial guidance) may require 100% human review of AI outputs, eliminating the labor savings.

- Volume is very low: Below the break-even volume, fixed costs exceed savings. Niche queues with 50 interactions/month rarely justify AI agent deployment.

- Customer willingness threshold: Some customer segments refuse AI interaction. Forcing AI on unwilling customers generates complaints and escalations that negate cost savings.
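An error-rate ceiling depends heavily on what each error costs to fix. Setting AI cost per interaction equal to human cost per interaction and solving for the error rate gives the sketch below, which uses a hypothetical helper. It takes $5.61 as the human cost. For the non-remediation part of the TCAO example, it takes $0.05 ($0.200 minus $0.150). The per-error costs are illustrative.

```python
def break_even_error_rate(human_cpi: float, ai_other_cpi: float, cost_per_error: float) -> float:
    """Error rate at which AI cost per interaction equals human cost per interaction.

    Solves: ai_other_cpi + error_rate * cost_per_error == human_cpi.
    """
    if cost_per_error <= 0:
        raise ValueError("cost_per_error must be positive")
    return (human_cpi - ai_other_cpi) / cost_per_error


if __name__ == "__main__":
    for cost_per_error in (5.0, 37.0):
        rate = break_even_error_rate(5.61, 0.05, cost_per_error)
        print(f"${cost_per_error:.0f} per error -> break-even error rate {rate:.0%}")
```

At $5 per remediation, the break-even rate exceeds 100%, so errors alone never erase the savings. A ceiling near 15% corresponds to errors costing roughly $37 each, about 6.6× the human handling cost. That matches the senior-staff case in the list.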

Marginal Cost Curves

AI agents exhibit a distinctive marginal cost curve compared to human agents:

- Human agents: Near-zero marginal cost within staffed capacity. Beyond that, every block of additional volume requires hiring, training, and scheduling, so the long-run cost per interaction is high and roughly flat: the 10,000th interaction costs about the same as the 1,000th. Step increases occur when new hires are needed.

- AI agents: Very low marginal cost per interaction (tokens plus any remediation the interaction triggers). Average cost per interaction declines slightly with volume as fixed costs are amortized and prompt-cache hit rates rise. Step increases occur when infrastructure tier upgrades are needed.

The crossover creates an optimization opportunity: route high-volume, lower-complexity contacts to AI (where marginal cost advantages are greatest) and reserve human agents for lower-volume, higher-complexity contacts (where AI error rates and remediation costs would erode the marginal advantage). This is precisely the routing logic behind the Three-Pool Architecture and Cognitive Portfolio Model (N*).
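The two curve shapes can be compared with a toy model. It assumes human cost is whole agents at the mid-range loaded cost ($71,750/year, 12,800 interactions/year). AI cost is the break-even example's $3,700 fixed plus $0.182 per interaction. AI infrastructure tier steps are ignored, and the function names are hypothetical.

```python
import math

AGENT_MONTHLY_COST = 71_750 / 12      # mid-range fully loaded cost
AGENT_MONTHLY_CAPACITY = 12_800 / 12  # interactions per agent per month


def human_monthly_cost(volume: int) -> float:
    """Whole agents only: cost is flat until capacity runs out, then steps up."""
    agents = math.ceil(volume / AGENT_MONTHLY_CAPACITY)
    return agents * AGENT_MONTHLY_COST


def ai_monthly_cost(volume: int, fixed: float = 3_700.0, variable: float = 0.182) -> float:
    """Fixed platform costs plus a constant per-interaction cost."""
    return fixed + variable * volume


if __name__ == "__main__":
    for volume in (1_000, 10_000, 100_000):
        human_avg = human_monthly_cost(volume) / volume
        ai_avg = ai_monthly_cost(volume) / volume
        print(f"{volume:>7,}: human ${human_avg:.2f}/interaction, AI ${ai_avg:.3f}/interaction")
```

In this model, human average cost stays between about $5.60 and $6.00 at every volume, moved only by the staffing steps. AI average cost falls from about $3.88 to about $0.22 as the fixed costs are spread.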

Model Selection Economics

Choosing between model tiers is a cost-quality optimization problem:

optimal_model = argmin over tiers of [token_cost(tier) + error_rate(tier) × remediation_cost]
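The argmin can be written directly. In this sketch, the token costs come from the three-tier scenario's prices: 2,000 input tokens, 800 of them cached, and 600 output tokens. The error rates (6%, 3%, 2.8%) are hypothetical placeholders, chosen only to show how the optimum shifts as remediation cost changes.

```python
# name -> (token cost per interaction in USD, error rate); error rates are hypothetical.
TIERS = {
    "economy": (0.00107, 0.060),
    "mid-tier": (0.01284, 0.030),
    "frontier": (0.03080, 0.028),
}


def optimal_tier(tiers: dict[str, tuple[float, float]], remediation_cost: float) -> str:
    """Return the tier minimizing token_cost + error_rate * remediation_cost."""
    def expected_cost(name: str) -> float:
        token_cost, error_rate = tiers[name]
        return token_cost + error_rate * remediation_cost

    return min(tiers, key=expected_cost)


if __name__ == "__main__":
    for remediation_cost in (0.10, 5.00, 50.00):
        print(f"${remediation_cost:.2f} per error -> {optimal_tier(TIERS, remediation_cost)}")
```

Cheap errors favor the economy tier, and the $5 remediation cost from the TCAO example favors the mid-tier. Very expensive errors favor the frontier tier, mirroring the heuristic table that follows.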

Practical model selection heuristic:

| Interaction Type | Recommended Tier | Rationale |
|---|---|---|
| Simple FAQ, status check, routing | Economy | Low error risk, high volume, token cost dominates |
| Standard troubleshooting, account changes | Mid-tier | Moderate complexity, acceptable error rate, best cost-quality balance |
| Complex complaints, retention, compliance | Frontier | High error cost, low volume, quality dominates |
| Classification, intent detection, triage | Economy or fine-tuned | Structured output, high volume, fine-tuning reduces tokens needed |

Many operations deploy cascading models: start with an economy model, escalate to mid-tier if the interaction becomes complex, and escalate to frontier (or human) for the hardest cases. This cascading approach can reduce average token costs by 40–60% compared to using a single model tier for all interactions.
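The saving from a cascade can be estimated as an expected cost. Every interaction pays for the economy attempt, and escalated interactions also pay for each higher tier they reach. The sketch assumes hypothetical reach rates: 30% escalate to mid-tier, and 5% go on to frontier. Stage costs are the raw token costs implied by the three-tier scenario's prices. The sketch ignores any quality difference between the cascade and a single tier.

```python
def cascade_cost(stage_costs: list[float], reach_rates: list[float]) -> float:
    """Expected token cost per interaction for a model cascade.

    reach_rates[i] is the fraction of interactions that reach stage i; an
    escalated interaction pays for every stage it passes through.
    """
    if len(stage_costs) != len(reach_rates):
        raise ValueError("one reach rate per stage is required")
    if any(not 0.0 <= r <= 1.0 for r in reach_rates):
        raise ValueError("reach rates must be between 0 and 1")
    return sum(cost * rate for cost, rate in zip(stage_costs, reach_rates))


if __name__ == "__main__":
    costs = [0.00107, 0.01284, 0.03080]  # economy, mid-tier, frontier
    reach = [1.00, 0.30, 0.05]           # hypothetical escalation funnel
    cascade = cascade_cost(costs, reach)
    print(f"cascade ${cascade:.5f} vs all mid-tier ${costs[1]:.5f} "
          f"({1 - cascade / costs[1]:.0%} lower)")
```

Under these assumptions, the cascade costs about half as much as running every interaction on the mid-tier, within the 40–60% range cited above. A higher escalation rate quickly erodes the saving.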

WFM Applications

AI cost modeling integrates into workforce financial planning:

- Annual budgeting: AI agent costs must be forecasted alongside labor costs. Unlike labor (relatively predictable), AI costs depend on volume, containment rate, model pricing (which may change mid-year), and error rate — all of which have meaningful variance.

- Scenario planning: Long-Run Workforce Sizing models must include AI cost scenarios — what happens if token prices drop 50%? What if the error rate improves from 3% to 1%? What if volume grows 30%? The marginal cost structure of AI means these scenarios have very different financial profiles than human staffing scenarios.

- Make-vs-buy decisions: Self-hosted models have higher fixed costs but lower marginal costs. The crossover volume (where self-hosting becomes cheaper than cloud API) depends on model size, hardware amortization, and engineering team cost. Typically, self-hosting breaks even at 5–10 million interactions per month for mid-tier models.

- Cost allocation: Operations that share AI infrastructure across queues or departments need allocation models. Cost per interaction is the natural allocation unit, but token-level metering provides precise usage tracking that is not possible with human agent cost allocation.
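The scenario-planning questions can be run against the TCAO example. This sketch uses a hypothetical `Scenario` class. Token and remediation costs scale with volume. The other $6,908 of monthly costs is held fixed, including the 0.5 FTE oversight analyst. A real plan would also step oversight staffing with volume.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scenario:
    """Monthly AI cost drivers; defaults are the TCAO worked example."""
    volume: int = 200_000
    token_cost: float = 0.015    # USD per interaction
    error_rate: float = 0.03
    cost_per_error: float = 5.0  # USD per remediation
    # Platform fee, monitoring, 0.5 FTE oversight, 0.25 FTE engineering, compliance.
    fixed_monthly: float = 500 + 800 + 0.5 * 65_000 / 12 + 2_500 + 400


def monthly_cost(s: Scenario) -> float:
    """Fixed costs plus volume-driven token and remediation costs."""
    return s.fixed_monthly + s.volume * (s.token_cost + s.error_rate * s.cost_per_error)


if __name__ == "__main__":
    base = Scenario()
    scenarios = {
        "base": base,
        "token prices -50%": replace(base, token_cost=base.token_cost * 0.5),
        "error rate 3% -> 1%": replace(base, error_rate=0.01),
        "volume +30%": replace(base, volume=round(base.volume * 1.3)),
    }
    for name, s in scenarios.items():
        total = monthly_cost(s)
        print(f"{name:<20} ${total:>9,.0f}/month  ${total / s.volume:.3f}/interaction")
```

Halving token prices saves about $1,500 a month. Cutting the error rate to 1% saves about $20,000, the same asymmetry the TCAO example highlights.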

Maturity Model Position

Within the WFM Labs Maturity Model:

- Level 2 (Developing): AI costs tracked at aggregate level (monthly API bill). No per-interaction cost visibility. Business case based on vendor-provided estimates.

- Level 3 (Advanced): Per-interaction cost tracking implemented. TCAO model established including oversight and error remediation. Break-even analysis informs deployment decisions.

- Level 4 (Strategic): Dynamic model selection based on cost-quality optimization. Marginal cost analysis drives routing decisions. AI costs integrated into workforce financial forecasting.

- Level 5 (Transformative): Real-time cost optimization — automatic model tier selection per interaction based on predicted complexity, cost ceiling, and quality target. Cascading models with cost-aware routing. Continuous break-even monitoring with automatic scaling decisions.

See Also

- AI Scaffolding Framework

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- Three-Pool Architecture

- Cognitive Portfolio Model (N*)

- Workforce Cost Modeling

- AI Agent Orchestration for WFM

- AI Agent Capacity Planning

- AI Agent Quality Assurance