Level AI

Level AI is a generative AI-powered conversation intelligence platform for contact center quality assurance, founded in 2019 by Ashish Nagar.[1] Headquartered in Mountain View, California, Level AI uses semantic intelligence — AI that understands the meaning and intent behind customer conversations rather than matching keywords — to automate QA scoring, surface actionable insights, and improve agent performance at scale. The company has raised $73.1 million in total funding, including a $39.4 million Series C led by Adams Street Partners in 2024.[2]

Level AI was named a Gartner Cool Vendor in Customer Service and Support Technologies in 2023.

Overview

Level AI was born from founder Ashish Nagar's experience at Amazon, where he worked on the Alexa Prize project (the "Star Trek computer" initiative). That experience — building AI that understands human conversation — directly informed Level AI's core technology: a semantic intelligence engine that comprehends context, intent, and nuance in customer interactions rather than relying on keyword detection or rules-based categorization.

The company positions itself as an emerging disruptor in the conversation intelligence space, competing against established players like CallMiner and growing challengers like Observe.AI. Level AI's differentiation centers on three claims:

- Semantic over syntactic: Understanding what customers mean, not just what they say

- Generative AI-native: Built on large language models from the outset rather than retrofitting AI onto legacy analytics architectures

- Custom AI models: Per-organization model training that adapts to each customer's specific language, products, and quality standards

Level AI serves contact centers across financial services, healthcare, retail, technology, and consumer services verticals, with customers including several Fortune 500 organizations.

Core Capabilities

Semantic Intelligence Engine

The semantic intelligence engine is Level AI's core technology differentiator:

- Intent understanding: Identifies customer intent from conversational context rather than keyword matching, for example distinguishing a customer who mentions "cancel" as a complaint, as a request, or only in passing

- Custom scenario detection: Organizations define business scenarios (upsell opportunity, churn risk, compliance violation) and Level AI's models learn to detect them from conversational semantics

- Multi-dimensional analysis: Simultaneous analysis of sentiment, intent, topic, customer effort, and agent behavior across interactions

- Cross-lingual support: Semantic models that work across languages without requiring per-language rule sets
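
The "cancel" ambiguity described above can be illustrated with a toy example. The sketch below is not Level AI's method; it only shows why a keyword rule assigns the same label to three utterances whose intents differ. The utterances, intent labels, and function names are hypothetical and chosen purely for illustration.

```python
import re

# Hypothetical utterances with hand-assigned intents (illustrative data only).
UTTERANCES = [
    ("I want to cancel my subscription today.", "cancellation_request"),
    ("Your app made me cancel my plans twice, this is unacceptable.", "complaint"),
    ("I had to cancel a meeting to take this call, anyway, where is my order?", "order_status"),
]


def keyword_rule(text: str) -> str:
    """Label any utterance containing the word 'cancel' as a cancellation request."""
    if re.search(r"\bcancel\b", text, re.IGNORECASE):
        return "cancellation_request"
    return "other"


def keyword_accuracy(samples) -> float:
    """Share of utterances where the keyword rule agrees with the labeled intent."""
    hits = sum(1 for text, intent in samples if keyword_rule(text) == intent)
    return hits / len(samples)


# The rule fires on all three utterances, although only one is a real request.
predictions = [keyword_rule(text) for text, _ in UTTERANCES]
print(predictions)
print(f"keyword rule accuracy: {keyword_accuracy(UTTERANCES):.2f}")
```

A context-aware model would need to use the surrounding words to separate these cases; the keyword rule has no way to do so because all three utterances contain the same trigger word.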

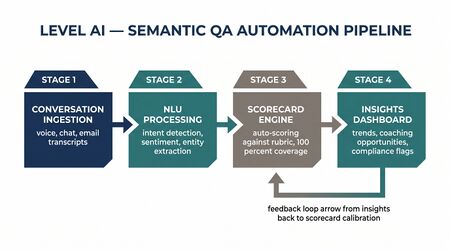

Automated QA (AgentGPT)

Level AI's flagship product for automated quality management:

- 100% interaction evaluation: Every voice call, chat, and email scored against customizable quality rubrics

- Generative AI scoring: LLM-powered evaluation that can explain why an interaction received a particular score — not just the score itself

- Custom QA frameworks: Organizations configure their own evaluation criteria, and Level AI's models learn to evaluate against them

- QA team acceleration: Level AI claims its automation makes QA teams 5x faster, 2x more accurate, and 3x more efficient than manual evaluation

- Calibration and alignment: Tools for comparing AI scores to human evaluator scores and continuously improving model accuracy
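
Calibration between AI and human evaluators can be reasoned about with a few simple agreement metrics. The following is a minimal sketch, assuming both sides score the same interactions on a shared 0-100 scale; the function name `calibration_report`, the tolerance of 5 points, and the sample scores are assumptions for illustration, not part of Level AI's product.

```python
from statistics import mean


def calibration_report(ai_scores, human_scores, tolerance=5.0):
    """Compare AI scores with human scores for the same interactions.

    Returns the mean absolute error, the signed bias (positive means the AI
    scores higher than humans on average), and the share of interactions
    where the two scores differ by no more than `tolerance` points.
    """
    if len(ai_scores) != len(human_scores) or not ai_scores:
        raise ValueError("score lists must be non-empty and the same length")
    diffs = [a - h for a, h in zip(ai_scores, human_scores)]
    return {
        "mean_abs_error": mean([abs(d) for d in diffs]),
        "bias": mean(diffs),
        "within_tolerance": sum(abs(d) <= tolerance for d in diffs) / len(diffs),
    }


# Hypothetical scores for four interactions evaluated by both the AI and a human.
ai = [90, 72, 85, 60]
human = [88, 80, 85, 65]
report = calibration_report(ai, human)
print(report)
```

A QA team might track these numbers over time: a shrinking mean absolute error and a bias close to zero would suggest that automated scores are converging on the human rubric.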

Real-Time Agent Assistance

- Live conversation monitoring: Real-time analysis of active conversations for sentiment shifts, compliance triggers, and coaching opportunities

- Knowledge retrieval: Context-aware knowledge base suggestions surfaced during conversations

- Proactive alerts: Automatic detection of escalation risk, customer frustration, or compliance deviation during live interactions

- Post-interaction summaries: Generative AI-produced summaries reducing after-call work

Analytics and Insights

- Trend identification: Automated detection of emerging customer issues, product problems, or process failures across interaction populations

- Voice of Customer: Structured insights from unstructured conversations — what customers are actually saying about products, services, and experiences

- Performance benchmarking: Agent-level performance comparison against team and organizational benchmarks

- Custom dashboards: Configurable analytics views for QA managers, supervisors, and operations leaders
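
Trend identification of the kind listed above can be approximated by comparing how often a topic appears in a recent window against a longer baseline. The sketch below assumes each interaction has already been tagged with a single topic; the function name `emerging_topics`, the thresholds, and the sample data are hypothetical and do not describe Level AI's actual detection logic.

```python
from collections import Counter


def emerging_topics(baseline, recent, min_ratio=2.0, min_count=3):
    """Flag topics whose share of recent interactions grew versus the baseline.

    `baseline` and `recent` are lists of topic labels, one per interaction.
    A topic is flagged when it appears at least `min_count` times recently and
    its recent share is at least `min_ratio` times its smoothed baseline share.
    """
    if not recent:
        return []
    base_counts = Counter(baseline)
    recent_counts = Counter(recent)
    flagged = []
    for topic, count in recent_counts.items():
        if count < min_count:
            continue
        recent_share = count / len(recent)
        # Add-one smoothing keeps the ratio finite for topics unseen in the baseline.
        base_share = (base_counts[topic] + 1) / (len(baseline) + 1)
        ratio = recent_share / base_share
        if ratio >= min_ratio:
            flagged.append((topic, round(ratio, 2)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical topic tags: login errors rise from 10% of baseline to 40% of recent volume.
baseline = ["billing"] * 40 + ["shipping"] * 50 + ["login_error"] * 10
recent = ["billing"] * 8 + ["shipping"] * 7 + ["login_error"] * 10
flagged = emerging_topics(baseline, recent)
print(flagged)
```

Comparing shares rather than raw counts keeps the check meaningful when the recent window is much smaller than the baseline.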

Target Market and Deployment Model

Target Market

- Mid-market to enterprise (100-5,000 agents): Organizations seeking AI-powered QA automation without the enterprise complexity and cost of CallMiner

- Organizations with existing QA programs: Companies looking to evolve from manual QA to AI-assisted evaluation

- GenAI-forward organizations: Companies that want to leverage generative AI for customer service operations and are comfortable with newer technology

- Contact centers with nuanced quality requirements: Operations where keyword-based approaches fail because quality depends on conversational context and intent

Pricing Model

Level AI pricing is subscription-based, typically per-agent per-month:

- Pricing tiers based on modules selected and interaction volume

- Generally positioned between CallMiner (more expensive) and basic QA tools (less expensive)

- Implementation timelines of 4-8 weeks depending on customization requirements

Deployment Model

Level AI is delivered as a cloud-native SaaS platform. It integrates with major CCaaS platforms through recording and API integrations and is SOC 2 Type II compliant.

Key Differentiators

Semantic intelligence vs. keywords. Level AI's emphasis on understanding meaning rather than matching words addresses a genuine limitation of older analytics platforms. Customer conversations are ambiguous — the same words carry different meaning depending on context. Semantic models handle this better than keyword rules.

Generative AI-native. Level AI was built on LLMs from the start rather than bolting generative AI onto legacy speech analytics architectures. This architectural choice affects everything from model accuracy to product roadmap flexibility.

Explainable AI scores. QA teams need to trust automated scores before they replace manual evaluation. Level AI's ability to explain its scoring rationale — not just produce a number — builds trust and enables calibration that black-box scoring cannot.

Custom model training. Per-organization models that learn each customer's specific language, quality standards, and business context. A healthcare company's quality framework is fundamentally different from a financial services firm's, and Level AI adapts rather than forcing generic models.

Emerging disruptor pricing. As a Series C startup, Level AI typically prices below established competitors like CallMiner, making advanced conversation intelligence accessible to organizations that CallMiner prices out.

WFM Practitioner Perspective

What It Does Well

- QA modernization with lower barrier: For organizations still running 100% manual QA (common in mid-market), Level AI provides a faster, more affordable path to automated quality evaluation than CallMiner or even Observe.AI in some cases.

- Semantic quality insights: Understanding why interactions fail — not just that they failed — provides WFM teams with actionable inputs for training design, routing optimization, and knowledge management improvements.

- Voice of customer for forecasting: Semantic analysis can identify emerging demand drivers before they show up in volume metrics. A WFM team monitoring Level AI insights might detect a product issue driving call volume days before it peaks.

- Agent development data: Granular, explainable quality scores provide the foundation for evidence-based performance management and speed-to-proficiency tracking.

Where It Falls Short

- Scale maturity: As a Series C startup with 194 employees, Level AI has less enterprise deployment experience than CallMiner (20+ years in the market) and less capital than Observe.AI ($214M raised). Very large enterprise deployments therefore carry more risk.

- Feature breadth: Level AI focuses on QA automation and conversation intelligence. It does not offer the real-time agent guidance depth of Cresta or Balto, nor the analytics depth of CallMiner.

- Compliance feature depth: For heavily regulated industries requiring extensive compliance rule libraries, audit trails, and regulatory reporting, CallMiner's two decades of compliance-focused development provides superior coverage.

- Integration ecosystem: As a smaller vendor, Level AI's pre-built integration library is narrower than established competitors. Some CCaaS integrations may require more implementation effort.

- Vendor viability risk: $73M in funding is meaningful but modest compared to better-funded competitors in the space. WFM practitioners should evaluate financial trajectory and reference customers before committing.

Net Assessment

Level AI is a promising emerging platform that brings genuine innovation — semantic intelligence and generative AI-native architecture — to contact center QA. It is the right choice for mid-market organizations that want modern AI-powered quality management at accessible pricing and are comfortable with a younger vendor. It is not yet the right choice for large enterprise operations requiring deep compliance, extensive customization, or proven scale. WFM practitioners should watch Level AI's trajectory closely — the semantic intelligence approach is architecturally sound, and if the company continues to execute, it could reshape the conversation intelligence competitive landscape within 2-3 years.

Integration Ecosystem

CCaaS: NICE CXone, Genesys Cloud CX, Amazon Connect, Five9, Talkdesk, Twilio Flex, RingCentral

CRM: Salesforce, Zendesk

Collaboration: Slack, Microsoft Teams

BI: API-based data export to external analytics platforms

Knowledge Management: Integration with organizational knowledge bases for real-time content surfacing

Maturity Model Position

Level AI supports maturity advancement in quality management:

- Level 2 (Foundational): Automated QA evaluation replaces manual sampling, establishing consistent quality measurement across 100% of interactions.

- Level 3 (Advanced): Semantic intelligence provides context-aware quality insights that inform coaching, training, and process improvement proactively.

- Level 4 (Optimized): Generative AI-powered analysis creates feedback loops: quality insights → coaching → behavior change → quality improvement.

Fully realizing Level 4 and advancing to Level 5 requires pairing Level AI with dedicated WFM, real-time automation, and broader workforce engagement platforms.