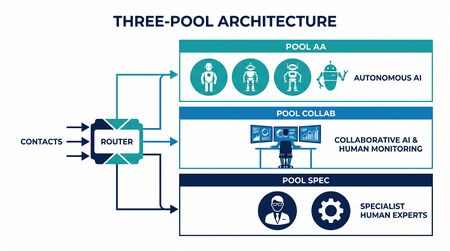

Three-Pool Architecture

The Three-Pool Architecture is the organizing pattern at the center of the Value-Based Planning Model. It replaces the single-pool / single-staffing-model assumption that has governed contact-center planning since Erlang with three structurally different pools, each requiring a different staffing methodology, each consuming a different cost structure, and each connected to the others through escalation cascades.

The architecture is the Level 4 — Advanced (The Ecosystem Emerges) WFM Labs Maturity Model™ articulation of how AI integrates into workforce planning — not as a deflection layer above the operation, but as a workforce pool inside it. Where legacy WFM treats automation as a volume reducer that sits upstream of the staffing equation, the Three-Pool Architecture treats automation as a staffing pool that must be sized, costed, and governed with the same rigor applied to human pools — just with different math.

The architecture is documented in Lango (2026), Value-Based Models for Customer Operations.[1]

The pool concept

A pool is a class of interactions that shares a staffing model. Two interaction types belong to the same pool when (a) they are best handled by the same agent type — fully autonomous AI, AI-supervised human, or specialist human — and (b) the staffing math that sizes that agent type is structurally appropriate to their work.

The three pools are not a typology of automation maturity. They are an architectural decomposition of the workforce that reflects how different work types must be staffed under different mathematical models. The decomposition is exhaustive and mutually exclusive at the planning level — every forecasted contact lands in exactly one pool for staffing purposes, even if the routing decision at execution time is probabilistic.

This distinction matters because it reframes the central question of Agentic AI Workforce Planning. Traditional planning asks: "How many agents do we need?" The Three-Pool Architecture asks: "How much of our work belongs in each pool, what does each pool cost when fully loaded, and where are the boundary dynamics that will shift those proportions over time?"

Why three pools, not two or four

The number three is not arbitrary. It emerges from the intersection of two constraints:

- Agent type. There are exactly three production-grade agent types available today: fully autonomous AI (no human in loop), AI-supervised human (human monitors AI or AI monitors human), and unassisted specialist human. Each type has structurally different throughput, cost, and failure characteristics.

- Staffing math. Each agent type requires a fundamentally different mathematical model for capacity planning. Autonomous AI is sized by cost modeling. Collaborative work is sized by cognitive portfolio theory. Specialist human work is sized by simulation. These three models are not interchangeable.[2]

A hypothetical fourth pool — autonomous specialist AI capable of handling high-complexity, high-judgment work without supervision — may emerge as large language models mature. When it does, the architecture extends naturally by adding a fourth staffing model. But no production implementation of that fourth pool exists as of 2026, and claiming it does is vendor marketing, not architecture.

Pool AA — Autonomous AI

Characteristics

Pool AA handles interactions where AI operates without any human in the loop. The interaction begins with AI, proceeds through AI, and resolves through AI — or fails and escalates. There is no human monitoring in real time. Quality assurance is retrospective (sample audits, drift detection, outcome tracking), not concurrent.

When to use: interactions with high AI Capability (>80%) and low Value Score (≤4) on the Value Routing Model composite. These are typically high-volume, pattern-stable interactions where the cost of a failure is low and the recovery path (escalation) is well-defined.

Typical interaction types

- Password resets, account lookups, balance inquiries

- Order status and tracking

- FAQ and knowledge-base retrieval

- Simple returns and exchanges with standard policies

- Appointment scheduling and rescheduling

- Basic troubleshooting with decision-tree resolution paths

Staffing math: the five-layer cost model

Pool AA is not staffed by headcount; it is sized by cost. The math is a five-layer cost model, not a queuing model:

- Initial investment. Build, integration, content, training data, model selection. This includes the engineering effort to design conversation flows, integrate with backend systems, build testing infrastructure, and validate against production traffic. For enterprise deployments, initial investment typically ranges from $500K to $5M depending on scope and integration complexity.[3]

- Transaction cost. Marginal cost per AI-handled interaction. This is the only layer most vendor business cases show. For LLM-based agents, transaction cost includes API token consumption, orchestration compute, and any third-party service calls. Current range: $0.03–$0.50 per interaction depending on complexity and model tier.

- Escalation cost. Expected cost adjusted for the cascade probability — when AI hands off to Pool Collab or Pool Spec, the full handoff cost is properly attributed. The escalation tax includes: wasted AI processing time, context-transfer overhead, customer frustration from repetition, and the full handling cost in the receiving pool. Critical insight: escalation cost scales superlinearly with escalation rate because high-escalation deployments also produce lower first-contact resolution even for escalated interactions.

- Maintenance cost. Model retraining, content updates, regression testing, drift monitoring. Persistent and underestimated. AI systems degrade as products change, policies shift, and customer language evolves. A responsible Pool AA budget allocates 15-25% of initial investment annually to maintenance — a figure that surprises organizations accustomed to "set and forget" IVR thinking.

- Rebound cost. The capacity required to handle induced demand is part of Pool AA's cost, not Pool Collab's. New volume that AA's existence creates is AA's responsibility. When customers discover they can get instant service, they contact more often. When resolution is partial, they call back. Both effects create volume that would not exist without Pool AA.

The full Pool AA cost is materially higher than transaction cost alone — typically 3-10× higher when escalation, maintenance, and rebound are properly priced. This is the single most important correction the Three-Pool Architecture makes to standard vendor ROI models.

Sizing Pool AA

Pool AA capacity is not expressed in headcount but in concurrent session capacity and annual cost envelope. The sizing process:

- Forecast volume eligible for Pool AA using the routing heuristic

- Estimate containment rate — the fraction that AA resolves without escalation

- Apply the five-layer cost model to contained + escalated volume

- Compare total cost to the counterfactual (handling the same volume in Pool Collab or Pool Spec)

- Set the interior optimum — the containment rate that minimizes total cost, which is not 100%

Pool Collab — Collaborative

Characteristics

Pool Collab is the architectural novelty of the framework. It handles interactions where neither pure AI nor pure human is optimal. Instead, a human and an AI system work the interaction together, with one monitoring the other. The collaboration pattern varies:

- AI-primary, human-monitored: AI handles the interaction while a human watches a dashboard of concurrent sessions and intervenes when confidence drops or anomalies surface. This is the dominant pattern at scale.

- Human-primary, AI-assisted: A human handles the interaction with AI providing real-time suggestions, knowledge retrieval, sentiment analysis, and next-best-action recommendations. This pattern dominates early in the Level 3 → Level 4 transition.

- Handoff-mediated: AI begins the interaction, collects context, and hands to a human with a structured brief. The human resolves the issue, and AI handles post-interaction wrap-up. This pattern suits interactions where the critical judgment moment is sandwiched between routine steps.

When to use: interactions with mid-range AI Capability and Value Score — the "everything else" bucket from the routing heuristic. In practice, Pool Collab often handles 30-50% of total volume in mature deployments, making it the largest pool by interaction count in many operations.

Staffing math: the Cognitive Portfolio Model

Pool Collab staffing uses the Cognitive Portfolio Model (N*). A single human monitors and intervenes across N concurrent AI-handled interactions, where N is solved as a fixed point:

N* = ρ_max / ( λ_int · (E[S_int] + γ(N)) + m(N) )

with γ(N) = γ_0 + γ_1·ln(N) (logarithmic switching cost) and m(N) = m_0·N^α (monitoring overhead). Pool Collab headcount is then volume / (N* × throughput).

The five parameters that govern the model:

- λ_int — intervention arrival rate: how often the AI flags an interaction for human review. Depends on AI confidence thresholds, which are a tunable parameter. Lower thresholds mean more flags, lower N*, and higher headcount.

- E[S_int] — expected intervention service time: how long each human intervention takes. Depends on interaction complexity and tool quality. Ranges from 30 seconds (quick confirmation) to 5 minutes (complex judgment call).

- γ_0, γ_1 — switching cost parameters: the cognitive overhead of context-switching between monitored sessions. γ_0 is the base switching cost; γ_1 governs how switching cost grows logarithmically as the number of simultaneous sessions increases.

- m_0, α — monitoring overhead parameters: the baseline attention cost of passively monitoring sessions even when no intervention is needed. α < 1 implies sublinear growth (monitoring gets more efficient with experience); α > 1 implies superlinear growth (cognitive overload).

- ρ_max — maximum cognitive utilization: the fraction of available cognitive capacity that can be sustainably deployed. Analogous to occupancy in Erlang models but harder to measure. Typical range: 0.75–0.90.
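Because N appears on both sides of the equation, N* has to be found numerically. The following is a minimal sketch, not the white paper's implementation: it solves the fixed point by bisection, using the functional forms for γ(N) and m(N) given above. The parameter values, the time unit (minutes), and the function names are illustrative assumptions only.

```python
import math

def switching_cost(n, gamma0, gamma1):
    """gamma(N) = gamma_0 + gamma_1 * ln(N), as defined in the text."""
    return gamma0 + gamma1 * math.log(n)

def monitoring_overhead(n, m0, alpha):
    """m(N) = m_0 * N**alpha, as defined in the text."""
    return m0 * n ** alpha

def rhs(n, rho_max, lam_int, s_int, gamma0, gamma1, m0, alpha):
    """Right-hand side of the N* equation evaluated at a trial N."""
    per_session_load = lam_int * (s_int + switching_cost(n, gamma0, gamma1))
    return rho_max / (per_session_load + monitoring_overhead(n, m0, alpha))

def solve_n_star(params, lo=1.0, hi=1000.0, tol=1e-9):
    """Find N with N == rhs(N) by bisection on h(N) = N - rhs(N).

    With non-negative gamma_1, m_0 and alpha, rhs(N) does not increase
    as N rises, so h is increasing and has at most one root on [lo, hi].
    """
    def h(n):
        return n - rhs(n, **params)

    if h(lo) > 0 or h(hi) < 0:
        raise ValueError("no fixed point bracketed in [lo, hi]")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if h(mid) < 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

# Hypothetical values chosen only to exercise the solver (time unit: minutes;
# lam_int is interventions per monitored session per minute).
EXAMPLE_PARAMS = dict(rho_max=0.85, lam_int=0.02, s_int=1.5,
                      gamma0=0.2, gamma1=0.1, m0=0.002, alpha=1.0)

n_star = solve_n_star(EXAMPLE_PARAMS)
print(f"N* = {n_star:.2f}")
```

Bisection is used here rather than naive iteration N ← f(N) because the right-hand side falls as N rises, and naive iteration can oscillate for some parameter combinations. Re-running the solver across the expert-estimated range of each parameter is one simple way to carry out the sensitivity analysis recommended below.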

The work pattern in Pool Collab is novel. There is no Erlang C analog. The cognitive constraint, not the arrival rate, determines staffing. This is why Pool Collab cannot be modeled by simply adjusting Erlang parameters — the fundamental bottleneck has changed from telephone line occupancy to human attention capacity.[4]

Calibration of the five parameters is open research; the white paper is explicit that practitioners should use expert-estimated ranges with sensitivity analysis until in-house data accumulates. Organizations entering Pool Collab should plan a 3-6 month calibration period where they run controlled experiments varying N and measuring intervention quality, response time, and error rates.

The supervision ratio question

The most common implementation question for Pool Collab: "What is the right supervision ratio?" The answer is that N* is not a single number — it is a function of interaction complexity, AI confidence calibration, and human expertise. Practical ranges observed in early implementations:

- Simple monitoring (AI handles routine interactions, human confirms): N* = 20–40

- Active collaboration (AI and human co-handle moderate interactions): N* = 5–15

- Complex oversight (human guides AI through difficult interactions): N* = 2–5

These ranges will shift as AI capability improves. The architectural discipline is to measure N* rather than assume it, and to recognize that different interaction types within Pool Collab may have different optimal N* values — creating sub-pools within Pool Collab that each require their own calibration.

See Human AI Supervision and Escalation Frameworks for detailed governance patterns within Pool Collab.

Pool Spec — Specialist

Characteristics

Pool Spec handles the work that AI cannot do well and that collaboration does not improve. These are long, heterogeneous, judgment-intensive interactions where the human is the value — not as a monitor of AI, but as the primary problem-solver using experience, empathy, regulatory knowledge, and creative reasoning that current AI cannot replicate.

When to use: interactions with low AI Capability (<30%) or high Value Score (≥8). The work that concentrates here is the long, heterogeneous, judgment-intensive remainder.

Typical interaction types

- Complex complaints requiring regulatory or legal judgment

- High-value customer retention negotiations

- Multi-system troubleshooting with ambiguous symptoms

- Interactions requiring emotional intelligence (bereavement, hardship, crisis)

- Cross-departmental escalations requiring organizational navigation

- Novel situations with no established playbook

Staffing math: simulation

Pool Spec is staffed by simulation, not closed-form Erlang. The work is too heterogeneous for Erlang C's homogeneous-arrivals / homogeneous-service assumptions to hold. Specialist staffing has been simulation-driven in mature operations for years; what changes at Level 4 is that Pool Spec receives a structurally harder workload than the historical specialist tier, because complexity has been concentrated by deflection.

The key modeling requirements for Pool Spec simulation:

- Non-exponential service times. Pool Spec AHT distributions are log-normal or Pareto-tailed, not exponential. Mean AHT is insufficient; the full distribution shape matters for staffing.

- Skill-based routing within the pool. Pool Spec is not monolithic. It contains sub-specialties (regulatory, technical, retention) that require skill-based assignment. The simulation must model skill availability and routing preferences.

- Arrival process coupling. Pool Spec arrivals are not independent of Pool AA and Pool Collab performance. When AI containment drops (due to model degradation, product launches, or policy changes), Pool Spec volume spikes. The simulation must accept escalation-driven arrival feeds, not just independent Poisson processes.

- Fatigue and quality decay. Extended exposure to concentrated-complexity work produces cognitive fatigue that degrades quality. Simulation models should incorporate utilization-dependent quality functions, not just throughput constraints.

The Complexity Premium applies here. AHT distributions in Pool Spec are wider and right-skewed compared to pre-deflection specialist work. Simulation models must be re-fit on post-deflection data before being used for Pool Spec sizing.
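To make the simulation approach concrete, here is a deliberately small discrete-event sketch of a single first-come-first-served queue with log-normal handle times. It covers only the first modeling requirement above. Arrivals are Poisson purely for brevity, and there is no skill routing, abandonment, or fatigue model; a production model would replace the arrival generator with an escalation-driven feed. The function names and the arrival rate are assumptions; the AHT mean and standard deviation reuse the Pool Spec figures from the worked example later in this article.

```python
import heapq
import math
import random

def lognormal_params(mean, sd):
    """Convert a target mean and standard deviation to lognormal mu, sigma."""
    sigma2 = math.log(1.0 + (sd / mean) ** 2)
    return math.log(mean) - sigma2 / 2.0, math.sqrt(sigma2)

def simulate_service_level(agents, arrivals_per_min, aht_mean, aht_sd,
                           threshold_min=20 / 60, contacts=20000, seed=1):
    """Fraction of contacts answered within threshold_min (FCFS, no abandonment)."""
    rng = random.Random(seed)
    mu, sigma = lognormal_params(aht_mean, aht_sd)
    free_at = [0.0] * agents           # min-heap: time at which each agent frees up
    heapq.heapify(free_at)
    clock, answered_in_time = 0.0, 0
    for _ in range(contacts):
        clock += rng.expovariate(arrivals_per_min)   # next arrival (assumed Poisson)
        service = rng.lognormvariate(mu, sigma)      # handle time in minutes
        earliest = heapq.heappop(free_at)            # first agent to become free
        start = max(clock, earliest)                 # contact waits if nobody is free
        if start - clock <= threshold_min:
            answered_in_time += 1
        heapq.heappush(free_at, start + service)
    return answered_in_time / contacts

# Sweep staffing levels against a hypothetical arrival rate of 1.2 contacts/minute.
for agents in (28, 32, 36):
    sl = simulate_service_level(agents, arrivals_per_min=1.2,
                                aht_mean=22.4, aht_sd=15.8)
    print(agents, round(sl, 3))
```

Sizing then amounts to finding the smallest agent count whose simulated service level meets the target, ideally averaged over several seeds rather than the single run shown here.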

The concentration effect

Pool Spec experiences a phenomenon unique to the Three-Pool Architecture: complexity concentration. When Pool AA and Pool Collab absorb the easier work, Pool Spec's remaining work is not simply "the same specialist work as before." It is harder, because the easy tail of the specialist distribution has been removed.

Concretely: if pre-deflection specialist AHT averaged 12 minutes with σ = 8 minutes, post-deflection specialist AHT may average 18 minutes with σ = 12 minutes — not because individual interactions got harder, but because the easier interactions that pulled the average down are now in Pool Collab. This has direct implications for staffing: Pool Spec needs more capacity per interaction than historical specialist tiers, even though it handles fewer interactions.[5]
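The mechanism can be illustrated with a small synthetic experiment. The sketch below draws handle times from a log-normal distribution with the pre-deflection mean and standard deviation quoted above, then removes the shortest 40% as a stand-in for work that migrates to other pools. Both the log-normal shape and the 40% cutoff are assumptions for illustration, and the sketch demonstrates only the shift in the mean, not the specific post-deflection figures.

```python
import math
import random
import statistics

rng = random.Random(7)

# Lognormal parameters matching mean 12 min and standard deviation 8 min.
sigma2 = math.log(1 + (8 / 12) ** 2)
mu = math.log(12) - sigma2 / 2

pre_deflection = [rng.lognormvariate(mu, math.sqrt(sigma2)) for _ in range(50000)]

# Assumption: the shortest 40% of interactions are the "easy tail" that leaves.
cutoff = sorted(pre_deflection)[int(0.4 * len(pre_deflection))]
post_deflection = [aht for aht in pre_deflection if aht > cutoff]

pre_mean = statistics.mean(pre_deflection)
post_mean = statistics.mean(post_deflection)
print(f"pre-deflection mean AHT:  {pre_mean:.1f} min")
print(f"post-deflection mean AHT: {post_mean:.1f} min")
```

No individual handle time changes in this experiment; the average rises solely because the population being averaged has changed, which is the point the paragraph above makes.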

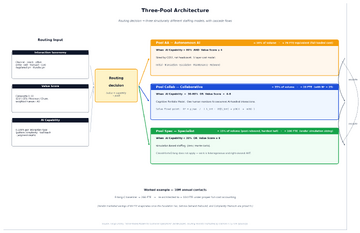

The default routing heuristic

The white paper proposes a default routing rule based on the two most discriminating taxonomy dimensions:

- Pool AA (Autonomous AI): AI Capability > 80% AND Value Score ≤ 4

- Pool Spec (Specialist): AI Capability < 30% OR Value Score ≥ 8

- Pool Collab (Collaborative): everything else

The thresholds are planning decisions, not laws. They are motivated by Cobham's c-μ scheduling rule (serve first the class of work whose product of delay cost and service rate is highest) but lack a closed-form optimality guarantee. Practitioners should treat the defaults as starting points and sweep thresholds against the multi-objective cost / CX / EX surface.
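The default rule is simple enough to express directly. The sketch below is one possible encoding, with the four thresholds exposed as parameters so they can be swept; the class and function names are hypothetical. Pool AA is tested first, which matters only if swept thresholds make the AA and Spec conditions overlap (at the defaults they cannot).

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Thresholds:
    aa_min_capability: float = 80.0    # Pool AA needs AI Capability above this
    aa_max_value: float = 4.0          # and Value Score at or below this
    spec_max_capability: float = 30.0  # Pool Spec if AI Capability is below this
    spec_min_value: float = 8.0        # or Value Score at or above this

def assign_pool(ai_capability, value_score, t=Thresholds()):
    """Return 'AA', 'Spec', or 'Collab' under the default routing heuristic."""
    if ai_capability > t.aa_min_capability and value_score <= t.aa_max_value:
        return "AA"
    if ai_capability < t.spec_max_capability or value_score >= t.spec_min_value:
        return "Spec"
    return "Collab"

print(assign_pool(95, 2))   # high capability, low value
print(assign_pool(22, 9))   # low capability, high value
print(assign_pool(65, 5))   # everything else
print(assign_pool(75, 3, Thresholds(aa_min_capability=70.0)))  # swept threshold
```

Note that the AA comparison is strict: an interaction type scoring exactly 80 on AI Capability stays in Pool Collab under the defaults.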

Threshold sensitivity

Small changes in routing thresholds produce large changes in pool composition. Consider a 1M annual contact operation:

- Moving the AA capability threshold from 80% to 70% might shift 120K additional interactions into Pool AA — increasing Pool AA volume by 25%, raising the escalation rate by 3-5 percentage points, and pushing 5-10K additional escalations into Pool Collab and Pool Spec.

- Moving the Spec value threshold from 8 to 7 might pull 80K interactions from Pool Collab into Pool Spec — increasing Pool Spec headcount requirements by 15-20% while relieving pressure on Pool Collab's supervision ratios.

This sensitivity is why routing thresholds should be treated as portfolio optimization parameters, not fixed configuration. See Multi-Objective Optimization in Contact Center for the optimization methodology.

Boundary dynamics: the migration pattern

The Three-Pool Architecture is not static. Over time, work migrates across pool boundaries in a predictable directional pattern: Spec → Collab → AA. This migration is driven by three forces:

Force 1: AI capability improvement

As AI models improve, interactions that required human judgment become automatable. Work that was Pool Spec (AI Capability < 30%) crosses the threshold into Pool Collab territory. Work that was Pool Collab crosses into Pool AA territory. This is the most visible migration force and the one vendors emphasize — but it is also the slowest, because it depends on external model advances.

Force 2: Organizational learning

As operations accumulate data on AI performance within each pool, they can tune confidence thresholds, improve training data, and refine escalation criteria. This learning makes AI more effective at the current capability frontier, expanding Pool AA and Pool Collab without requiring fundamental model improvements. This force operates on a 6-18 month cycle and is under the organization's direct control.

Force 3: Process redesign

Some interactions are in Pool Spec not because AI cannot handle them, but because the process was designed for humans. Redesigning processes — simplifying policies, standardizing exception handling, creating structured decision frameworks — can shift work from Pool Spec to Pool Collab or even Pool AA. This is the most powerful migration force but requires cross-functional collaboration beyond the contact center.

Migration planning

Mature Three-Pool implementations maintain a migration roadmap that forecasts which interaction types will move between pools over the next 12-24 months, with specific triggers (AI capability milestones, process redesign completions, training data thresholds) for each transition. This roadmap feeds directly into Agentic AI Workforce Planning and Workforce Planning with AI Agents, enabling proactive staffing adjustments rather than reactive scrambling.

The migration pattern also has workforce implications: as work migrates from Spec to Collab to AA, the type of human skill needed changes. Pool Spec needs deep domain experts. Pool Collab needs people comfortable with AI monitoring and rapid context-switching. Pool AA needs AI engineers and data scientists for maintenance. Workforce development planning must anticipate these shifts. See Human AI Blended Staffing Models for transition strategies.

Integration with Erlang models

A common question: "Does the Three-Pool Architecture replace Erlang C?" The answer is nuanced:

- Pool AA: Erlang C is irrelevant. There are no human agents to staff with queuing theory. Cost modeling replaces queuing.

- Pool Collab: Erlang C is structurally wrong. The bottleneck is cognitive capacity, not telephony occupancy. The Cognitive Portfolio Model (N*) replaces Erlang C.

- Pool Spec: Erlang C is approximately applicable for homogeneous sub-queues within Pool Spec, but simulation is preferred because of the heterogeneity and fat-tailed distributions. Organizations that lack simulation capability can use Erlang C as a rough approximation for Pool Spec — but they should apply a complexity premium multiplier (typically 1.15–1.30×) to account for the concentration effect.

The practical implication: organizations do not abandon Erlang. They scope it. Erlang C becomes one tool in a toolkit that also includes cost modeling and cognitive portfolio theory. The Three-Pool Architecture tells you which model to apply where — which is precisely the guidance that traditional WFM frameworks lack.[6]

Integration with the AI Scaffolding Framework

The AI Scaffolding Framework provides the implementation methodology for standing up each pool. The mapping:

- Pool AA requires Level 3+ scaffolding: autonomous execution with monitoring, automated quality gates, and escalation triggers. The scaffolding defines how AI operates independently; the Three-Pool Architecture defines what work it operates on.

- Pool Collab requires Level 2 scaffolding: human-in-the-loop with AI assistance, real-time confidence scoring, and structured handoff protocols. The scaffolding intensity varies with the collaboration pattern (AI-primary vs. human-primary).

- Pool Spec requires Level 1 scaffolding at most: AI provides background information and post-interaction summarization, but the human drives the interaction. Over-scaffolding Pool Spec work risks slowing specialists with irrelevant AI suggestions.

See AI Agent Orchestration for WFM for the technical implementation of multi-pool agent routing.

Real-world implementation guidance

Phase 1: Taxonomy and measurement (Weeks 1-8)

Before implementing the Three-Pool Architecture, an operation must build the measurement infrastructure:

- Interaction taxonomy. Classify all interaction types by AI Capability score and Value Score using the Value Routing Model. This requires sampling interactions, testing AI performance on each type, and estimating value through the composite scoring methodology.

- Baseline measurement. Measure current AHT, FCR, CSAT, and cost per interaction by interaction type, not just in aggregate. These baselines become the counterfactuals against which pool performance is compared.

- Escalation instrumentation. Build the ability to track interactions as they move between pools, including full timing, context-transfer quality, and outcome measurement at each stage.

Phase 2: Pool AA pilot (Weeks 4-16)

Start with Pool AA because it is the most measurable:

- Select 2-3 interaction types with the highest AI Capability scores and lowest Value Scores

- Deploy AI handling with full five-layer cost tracking

- Measure containment rate, escalation rate, and customer outcomes

- Validate or adjust the five-layer cost model with real data

- Expand to additional interaction types only when cost model is validated

Phase 3: Pool Collab pilot (Weeks 12-30)

Pool Collab is the hardest to implement because the staffing model is novel:

- Select interaction types in the Collab zone of the routing heuristic

- Start with low N (3-5 concurrent sessions per human) and measure intervention quality

- Gradually increase N while tracking quality degradation curves

- Calibrate the Cognitive Portfolio Model parameters from observed data

- Establish sustainable N* ranges for each interaction type within Pool Collab

Phase 4: Full architecture deployment (Weeks 24-52)

- Implement the routing heuristic across all interaction types

- Establish Pool Spec with simulation-based staffing on post-deflection data

- Connect the pools through measured escalation cascades

- Begin threshold optimization against the multi-objective surface

- Establish the migration roadmap for ongoing pool boundary management

Common implementation pitfalls

- Declaring Pool AA victory too early. Organizations that measure only containment rate and transaction cost will overstate Pool AA performance. Insist on full five-layer cost accounting before scaling.

- Ignoring the concentration effect in Pool Spec. Using pre-deflection AHT data for post-deflection Pool Spec sizing produces systematic understaffing.

- Treating Pool Collab as "agents with a chatbot." Pool Collab is a fundamentally different work pattern, not traditional agent work with an AI sidebar. It requires different training, different tools, different scheduling, and different performance metrics.

- Static routing thresholds. Thresholds set at launch will be wrong within 6 months as AI capabilities evolve and the operation learns. Build the optimization infrastructure from day one.

Worked example: decomposing a contact center into three pools

Scenario

A mid-size financial services contact center: 10M annual contacts, 18 interaction types, baseline staffing under traditional Erlang-C ≈ 266 FTE. Average AHT 7.2 minutes, blended cost per FTE $65K/year, 80/20 Service Level target.

Step 1: Interaction taxonomy

Each interaction type is scored on AI Capability (0-100) and Value Score (1-10):

| Interaction Type | Annual Volume | AI Capability | Value Score | Pool Assignment |
|---|---|---|---|---|
| Balance inquiry | 1,800,000 | 95 | 2 | AA |
| Password reset | 900,000 | 92 | 1 | AA |
| Order status | 1,200,000 | 88 | 3 | AA |
| Simple returns | 800,000 | 83 | 3 | AA |
| Payment arrangements | 600,000 | 65 | 5 | Collab |
| Product questions | 1,100,000 | 58 | 4 | Collab |
| Account changes | 700,000 | 55 | 5 | Collab |
| Technical support L1 | 500,000 | 50 | 5 | Collab |
| Billing disputes | 400,000 | 45 | 6 | Collab |
| Policy exceptions | 300,000 | 40 | 7 | Collab |
| Fraud claims | 350,000 | 22 | 9 | Spec |
| Regulatory complaints | 200,000 | 15 | 9 | Spec |
| Complex disputes | 250,000 | 18 | 8 | Spec |
| Retention (high-value) | 300,000 | 25 | 9 | Spec |
| Hardship/crisis | 150,000 | 10 | 10 | Spec |
| Multi-product resolution | 200,000 | 28 | 7 | Spec |
| Escalation recovery | 100,000 | 20 | 8 | Spec |
| Executive complaints | 50,000 | 12 | 10 | Spec |

Step 2: Pool volume allocation

- Pool AA: 4,700,000 interactions (47%)

- Pool Collab: 3,600,000 interactions (36%)

- Pool Spec: 1,600,000 interactions (16%)

- Unallocated: 100,000 (the 18 listed interaction types sum to 9.9M against the 10M headline volume)
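The allocation can be reproduced mechanically from the Step 1 table. The sketch below applies the default routing thresholds to each row and totals volume by pool; the data is copied from the table, while the variable and function names are illustrative.

```python
# (interaction type, annual volume, AI Capability, Value Score) from the Step 1 table
TAXONOMY = [
    ("Balance inquiry", 1_800_000, 95, 2),
    ("Password reset", 900_000, 92, 1),
    ("Order status", 1_200_000, 88, 3),
    ("Simple returns", 800_000, 83, 3),
    ("Payment arrangements", 600_000, 65, 5),
    ("Product questions", 1_100_000, 58, 4),
    ("Account changes", 700_000, 55, 5),
    ("Technical support L1", 500_000, 50, 5),
    ("Billing disputes", 400_000, 45, 6),
    ("Policy exceptions", 300_000, 40, 7),
    ("Fraud claims", 350_000, 22, 9),
    ("Regulatory complaints", 200_000, 15, 9),
    ("Complex disputes", 250_000, 18, 8),
    ("Retention (high-value)", 300_000, 25, 9),
    ("Hardship/crisis", 150_000, 10, 10),
    ("Multi-product resolution", 200_000, 28, 7),
    ("Escalation recovery", 100_000, 20, 8),
    ("Executive complaints", 50_000, 12, 10),
]

def default_pool(ai_capability, value_score):
    """Default routing heuristic: AA, then Spec, then Collab as the remainder."""
    if ai_capability > 80 and value_score <= 4:
        return "AA"
    if ai_capability < 30 or value_score >= 8:
        return "Spec"
    return "Collab"

volumes = {"AA": 0, "Collab": 0, "Spec": 0}
for _name, volume, capability, value in TAXONOMY:
    volumes[default_pool(capability, value)] += volume

listed_total = sum(volumes.values())
for pool, volume in volumes.items():
    print(f"Pool {pool}: {volume:,}")
print(f"Listed total: {listed_total:,}")
```

Multi-product resolution is the one row worth a second look: its Value Score of 7 is below the Spec threshold, but its AI Capability of 28 falls under 30, so the OR condition places it in Pool Spec.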

Step 3: Pool AA sizing

Applying the five-layer cost model:

- Containment rate: 82% (measured from pilot)

- Contained interactions: 3,854,000 at $0.12 avg transaction cost = $462K

- Escalated interactions: 846,000 × $18 avg escalation cost = $15.2M

- Initial investment (amortized): $2.5M / 3 years = $833K/year

- Maintenance: $625K/year

- Rebound volume: 8% of contained = 308K additional interactions across all pools = $1.9M allocated cost

Pool AA total annual cost: $19.0M — equivalent to ~$4.04 per interaction or ~29 FTE-equivalent at $65K. Note: a vendor model showing only transaction cost would claim $462K — a 41× understatement.
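The cost build-up is a straightforward sum of the five layers, which makes it easy to check. The sketch below uses only the figures stated in this step; the rebound layer is entered as the stated $1.9M allocation rather than derived, since its per-interaction basis is not given. Variable names are illustrative.

```python
eligible_volume = 4_700_000
containment_rate = 0.82

contained = round(eligible_volume * containment_rate)   # 3,854,000
escalated = eligible_volume - contained                 # 846,000

layers = {
    "initial_investment": 2_500_000 / 3,   # amortized over 3 years
    "transaction": contained * 0.12,       # $0.12 average per contained interaction
    "escalation": escalated * 18.0,        # $18 average per escalated interaction
    "maintenance": 625_000.0,
    "rebound": 1_900_000.0,                # allocated cost as stated in the text
}

total_cost = sum(layers.values())
cost_per_interaction = total_cost / eligible_volume
understatement = total_cost / layers["transaction"]

for name, cost in layers.items():
    print(f"{name:>20}: ${cost:>13,.0f}  ({cost / total_cost:.0%})")
print(f"{'total':>20}: ${total_cost:>13,.0f}")
print(f"per interaction: ${cost_per_interaction:.2f}; "
      f"total / transaction-only = {understatement:.0f}x")
```

Laid out this way, escalation is visibly the dominant layer, accounting for roughly four-fifths of the total, which is why the containment rate matters far more to Pool AA economics than the per-transaction price.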

Step 4: Pool Collab sizing

Calibrated Cognitive Portfolio parameters (from Phase 3 pilot):

- Average N* across interaction types: 22 (range: 8 for billing disputes to 35 for product questions)

- Weighted average throughput per human-hour: 18 interactions

- Required human-hours: 3,600,000 / 18 = 200,000 hours

- At 1,800 productive hours/year: ~28 FTE (with schedule efficiency adjustments: ~32 FTE rostered)

Step 5: Pool Spec sizing

Simulation-based sizing using post-deflection distributions:

- Post-deflection average AHT: 22.4 minutes (vs. 14.1 minutes pre-deflection for similar interaction types)

- AHT distribution: log-normal with σ = 15.8 minutes

- Required capacity at 80/20 service level: ~46 FTE (vs. ~38 FTE if pre-deflection distributions were incorrectly used; the correct requirement is about 21% higher, so the pre-deflection figure understaffs by roughly 17%)

Step 6: Total architecture

- Pool AA: ~29 FTE-equivalent cost

- Pool Collab: ~32 FTE rostered

- Pool Spec: ~46 FTE rostered

- Overhead (routing, monitoring, optimization): ~4 FTE

- Total: ~111 FTE-equivalent — down from 266 FTE baseline

The 266 → 111 reduction is real. The 111 → 84 "savings" that some vendor models project is largely fictional once the escalation tax, rebound, and complexity premium are priced in. Honest accounting produces a 58% reduction, not the 68% that cherry-picked cost models suggest. Still transformative — but the difference between 58% and 68% is the difference between a sustainable business case and one that collapses on contact with reality.[7]

Maturity Model Position

The Three-Pool Architecture is the architectural unit of Level 4 — Advanced (The Ecosystem Emerges) in the WFM Labs Maturity Model™.

- Level 1 — Initial (Emerging Operations) — Architecture is unreachable. There is no taxonomy that would allow pool assignment. Operations lack the data infrastructure to measure AI Capability or Value Scores. The concept of multiple staffing models is foreign.

- Level 2 — Foundational (Traditional WFM Excellence) — Architecture is invisible. Operations run a single pool against a single service-level metric. AI, when deployed, is conceived as a deflection layer above the workforce, not a pool inside it. Erlang C is the only staffing model. AI in Workforce Management is understood as "chatbot reduces volume."

- Level 3 — Progressive (Breaking the Monolith) — Architecture is approachable. Multi-skill routing exists; per-skill staffing exists; but the explicit three-pool partition with three different staffing models is not yet in place. Pool Collab in particular has no analog. Operations at Level 3 may have an AI deployment and a specialist tier, but they model both with Erlang variants — missing the structural insight that each pool requires fundamentally different math.

- Level 4 — Advanced (The Ecosystem Emerges) — Architecture is the operating model. All three pools are explicit, each with its own staffing math. Routing is governed by the explicit heuristic. Cascade and rebound are priced. The operation has moved from "AI as deflection" to "AI as workforce pool."

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — Architecture is closed-loop. Routing thresholds, Cognitive Portfolio parameters, and cascade probabilities are recalibrated automatically from drift signals. The pools are self-tuning: interaction types migrate between pools based on measured capability changes, not manual reassignment.

The Level 3 → Level 4 transition is the first decisive structural change in WFM since multi-skill routing in the 1990s. It requires not just new technology but a new mental model: the workforce is no longer a homogeneous resource pool sized by a single equation. It is a portfolio of structurally different pools, each with its own economics, its own math, and its own optimization surface.[8]

How the pools connect

Pools are not independent. Three connections matter:

Escalation cascades

Interactions can move AA → Collab → Spec, or AA → Spec directly, or Collab → Spec. Each cascade hop adds the escalation tax to expected cost and may degrade CX. Cascade probabilities must be measured per interaction type, not assumed.

The cascade architecture has directionality: work flows toward higher human involvement, never backward. An interaction that reaches Pool Spec does not return to Pool AA or Pool Collab — it completes in Pool Spec. This directionality simplifies the mathematical model but creates an important constraint: Pool Spec must be sized to absorb cascade overflow from both other pools, and that overflow is correlated with system stress (model degradation, unusual volume patterns, product launches).

Rebound flow

Demand rebound generated by Pool AA's existence appears as new volume. Some lands in Pool AA again (R_d), some in adjacent pools (R_i), some as new-category volume distributed across the architecture (R_s). The rebound coefficients are empirically measurable but require 3-6 months of post-deployment data. Operations that fail to account for rebound systematically understaff by 5-15%.

Routing as a coupled decision

The threshold settings for the routing heuristic affect all three pools simultaneously. Lowering the AI Capability threshold for Pool AA from 80% to 70% expands Pool AA, raises the escalation rate, increases load on Pool Collab, and concentrates more complexity into Pool Spec. The decision is a portfolio decision, not three independent ones. This coupling is why optimization must be multi-objective — optimizing any single pool in isolation will degrade the others.

Limitations

The architecture has known limits:

- Routing is heuristic. The default thresholds are not provably optimal. Operations should sweep them against their own multi-objective cost surface.

- Pool boundaries are not crisp. Some interaction types straddle Pool Collab and Pool Spec; the routing decision is a soft probability, not a hard partition. Practitioners should measure boundary stability over time.

- Three pools is a current-decade architecture. If AI capability advances such that a fourth pool (e.g., autonomous specialist AI) becomes meaningful, the architecture extends naturally — but no published implementation of that extension exists today.

- No published large-scale implementation. The architecture is mathematically coherent and operationally implementable, but the empirical evidence base is small. Early adopters will be calibrating in real time.

- Cognitive Portfolio Model parameters are nascent. The N* equation is theoretically grounded but lacks the empirical calibration depth that Erlang models have accumulated over a century. Early implementations should budget for extensive parameter tuning.

- Cultural resistance. The shift from "AI deflects volume" to "AI is a workforce pool" requires a mental model change that many WFM leaders and executives find uncomfortable. Implementation success correlates more with organizational change management than with technical sophistication.
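The first limitation above recommends sweeping routing thresholds against a multi-objective cost surface. One simple way to read such a sweep is to keep only the non-dominated settings (a Pareto front). The sketch below assumes every objective is to be minimized and that each candidate has already been scored; the objective names `cost` and `cx_penalty` and all the numbers are hypothetical.

```python
def pareto_front(candidates, objectives):
    """Keep candidates that no other candidate dominates (all objectives minimized)."""
    def dominates(a, b):
        # a dominates b if it is no worse on every objective and better on at least one.
        return (all(a[k] <= b[k] for k in objectives)
                and any(a[k] < b[k] for k in objectives))

    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]


# Hypothetical scores for four Pool AA threshold settings.
candidates = [
    {"threshold": 0.90, "cost": 100.0, "cx_penalty": 1.0},
    {"threshold": 0.80, "cost": 80.0, "cx_penalty": 2.0},
    {"threshold": 0.70, "cost": 85.0, "cx_penalty": 3.0},
    {"threshold": 0.60, "cost": 70.0, "cx_penalty": 5.0},
]

front = pareto_front(candidates, objectives=["cost", "cx_penalty"])
print([c["threshold"] for c in front])
```

In this invented data the 0.70 setting is dominated by 0.80, which is cheaper and has a lower CX penalty, so it drops out. Choosing among the remaining settings is a business trade-off rather than a computation.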

See Also

- Value-Based Planning Model — the framework the architecture lives inside

- Cognitive Portfolio Model (N*) — Pool Collab's staffing equation

- The Escalation Tax — the cost penalty for cross-pool cascades

- Service Demand Rebound Model — Pool AA's rebound responsibility

- Interior Optimum (containment rate) — sets Pool AA's volume share

- Value Routing Model — the composite Value Score that drives pool assignment

- Discrete-Event vs. Monte Carlo Simulation Models — Pool Spec's staffing basis

- Multi-Objective Optimization in Contact Center — the optimization surface routing thresholds are swept against

- Agentic AI Workforce Planning — strategic workforce planning with AI agents

- Human AI Blended Staffing Models — transition strategies for hybrid workforces

- Workforce Planning with AI Agents — operational planning integration

- AI Containment Rate and Its Workforce Implications — containment rate economics

- AI Scaffolding Framework — implementation methodology per pool

- Erlang C — the traditional model that Pool Spec approximates and Pools AA/Collab replace

- Service Level — the service target that all three pools must collectively achieve

- AI in Workforce Management — broader context for AI in WFM

- AI Agent Orchestration for WFM — technical routing implementation

- Human AI Supervision and Escalation Frameworks — governance within Pool Collab

Interactive tools

- Service Model Simulator (servicemodel.wfmlabs.com). Models in-house, BPO-outsourced, and AI-automated service models side by side, with cost, quality, and scaling profiles for each. It is the closest interactive analogue to a Three-Pool sizing exercise: sweep volume, AHT, agent costs, BPO rates, and AI capability parameters, and observe how the optimal mix shifts. Useful for grounding Pool AA / Pool Collab / Pool Spec sizing intuition before applying the formal architecture.

References

- [1] Lango, T. (2026). Value-Based Models for Customer Operations: From Traditional Queuing to Bottom-Up Value Planning. WFM Labs white paper.

- [2] Gans, N., Koole, G., & Mandelbaum, A. (2003). Telephone call centers: Tutorial, review, and research prospects. Manufacturing & Service Operations Management, 5(2), 79-141.

- [3] Deloitte (2024). Global Contact Center Survey. Deloitte Digital.

- [4] Wickens, C. D. (2008). Multiple resources and mental workload. Human Factors, 50(3), 449-455.

- [5] Aksin, Z., Armony, M., & Mehrotra, V. (2007). The modern call center: A multi-disciplinary perspective on operations management research. Production and Operations Management, 16(6), 665-688.

- [6] Koole, G. (2013). Call Center Optimization. MG Books.

- [7] McKinsey & Company (2023). The economic potential of generative AI: The next productivity frontier. McKinsey Global Institute.

- [8] Garnett, O., Mandelbaum, A., & Reiman, M. I. (2002). Designing a call center with impatient customers. Manufacturing & Service Operations Management, 4(3), 208-227.