Deterministic vs Probabilistic Models

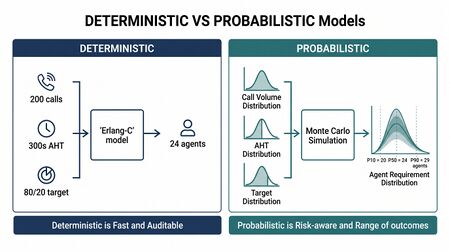

In workforce management (WFM), the distinction between deterministic models and probabilistic models represents a foundational conceptual framework that shapes how analysts approach forecasting, scheduling, capacity planning, and operational decision-making. A deterministic model produces the same output every time it receives the same input; a probabilistic model incorporates randomness, uncertainty, or distributional assumptions to produce a range of possible outcomes, each with an associated likelihood.

Understanding when to apply each approach—and when to combine them—is arguably the most important analytical skill in a WFM practitioner's toolkit. The choice between deterministic and probabilistic methods affects not only the accuracy of operational plans but also the organization's ability to quantify risk, communicate uncertainty to stakeholders, and make defensible decisions under conditions of incomplete information. A WFM analyst who treats every problem as deterministic will systematically underestimate risk; one who applies probabilistic methods to every problem will introduce unnecessary complexity where simple rules suffice.

This article provides a comprehensive treatment of both model types as they appear in contact center and workforce management contexts, including the subtle but critical distinction between a model's execution behavior and the meaning of its outputs. It also offers practical guidance for selecting the appropriate approach based on the nature of the problem, the available data, and the decision being supported.

Deterministic Models in WFM

Main article: Erlang C

A deterministic model is one in which the output is entirely determined by its inputs and internal logic, with no element of randomness. Given the same inputs, a deterministic model will always produce the same result. In mathematical terms, a deterministic model defines a function f such that y = f(x), where y is uniquely determined by x.

Characteristics

Deterministic models share several properties that make them attractive for operational use:

- Reproducibility — identical inputs always yield identical outputs, making results auditable and explainable.

- Computational efficiency — without the need to simulate many scenarios, deterministic models typically run faster than their probabilistic counterparts.

- Transparency — the logic chain from input to output can be traced step by step, which supports compliance requirements and stakeholder communication.

- Precision — outputs are point values rather than distributions, which simplifies downstream consumption by scheduling engines and staffing tools.

WFM Applications

Deterministic models appear throughout the WFM workflow:

Erlang C staffing calculation. The Erlang-C formula computes the probability that a call will wait in queue, the expected waiting time, and the required number of agents for a given arrival rate, average handle time, and service level target. Although it models a probabilistic system (random arrivals following a Poisson process), the formula itself executes deterministically: the same inputs always produce the same staffing requirement. This duality is explored further in the execution-meaning distinction below.

Linear programming for schedule optimization. Given a set of staffing requirements by interval, employee availability constraints, labor rules, and an objective function (such as minimizing cost or maximizing service level), a linear programming (LP) solver produces an optimal schedule deterministically. The solver explores the feasible region defined by the constraints and identifies the vertex that optimizes the objective. Commercial WFM platforms such as NICE, Verint, and Aspect use LP and mixed-integer programming (MIP) solvers for schedule generation.[1]

Business rules and compliance checks. Rules such as "agents must have a minimum 30-minute lunch break between the 4th and 6th hour of a shift" or "no agent may work more than 6 consecutive days" are deterministic: they either pass or fail for a given schedule. These rules form hard constraints in optimization models and validation checks in schedule auditing.
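
As a minimal sketch of how such a rule behaves as a pure pass/fail function, the following checks the consecutive-days rule quoted above. The function name `max_consecutive_days_ok` and the representation of a schedule as a list of booleans (one per calendar day) are assumptions made for illustration, not the interface of any WFM platform.

```python
def max_consecutive_days_ok(worked: list[bool], limit: int = 6) -> bool:
    """Return True if no run of consecutive worked days exceeds `limit`."""
    run = 0
    for day_worked in worked:
        # Extend the current run on a worked day, reset it on a day off.
        run = run + 1 if day_worked else 0
        if run > limit:
            return False
    return True


# Six days on, one day off, six days on: compliant.
compliant = [True] * 6 + [False] + [True] * 6
# Seven days in a row: violates the rule.
violating = [True] * 7 + [False] * 7

print(max_consecutive_days_ok(compliant))
print(max_consecutive_days_ok(violating))
```

The same schedule always produces the same verdict, which is what makes rules of this kind usable as hard constraints.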

Interval-level staffing requirements. The standard WFM process of converting a volume forecast and an AHT assumption into a staffing requirement for each 15- or 30-minute interval is deterministic arithmetic: Required Staff = f(Volume, AHT, Service Level Target, Shrinkage). This step is so routine that its deterministic nature is rarely questioned—but it represents a significant modeling choice, as discussed in the common mistakes section.
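
The arithmetic around the queueing step can be sketched as follows. This is an illustration under stated assumptions: the helper names are hypothetical, the on-phone requirement of 35 agents is taken as given (it would normally come from a queueing formula), and shrinkage is applied with the common "divide by one minus shrinkage" convention, which individual organizations may define differently.

```python
import math


def offered_load_erlangs(volume, aht_seconds, interval_minutes=30):
    """Workload in erlangs: total handle time divided by interval length."""
    return volume * aht_seconds / (interval_minutes * 60)


def scheduled_staff(on_phone_required, shrinkage):
    """Gross up the on-phone requirement for shrinkage (assumed convention)."""
    return math.ceil(on_phone_required / (1 - shrinkage))


# 200 calls of 270 seconds in a 30-minute interval: 200 * 270 / 1800 = 30 erlangs.
load = offered_load_erlangs(volume=200, aht_seconds=270, interval_minutes=30)

# 35 agents needed on the phones with 25% shrinkage: ceil(35 / 0.75) = 47.
staff = scheduled_staff(on_phone_required=35, shrinkage=0.25)

print(load, staff)
```

Every input here is a single number, so any error in the volume or AHT assumption passes straight through to the staffing figure without being visible in the output.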

Limitations

The primary limitation of deterministic models is that they present a single version of reality. When the inputs to a deterministic model are themselves uncertain (as volume forecasts always are), the deterministic output conceals that uncertainty. A staffing plan that shows "42 agents needed at 10:00 AM" offers no information about how sensitive that number is to forecast error. This limitation is precisely what motivates probabilistic approaches.

Probabilistic Models in WFM

Main article: Probabilistic Scheduling

A probabilistic (or stochastic) model explicitly incorporates randomness, producing outputs that are distributions rather than point values. Instead of stating that 42 agents are needed, a probabilistic model might state that the required staffing falls between 38 and 47 agents with 90% confidence, with a median estimate of 42.

Characteristics

- Uncertainty quantification — outputs include measures of spread (variance, confidence intervals, prediction intervals) that convey how much faith to place in the central estimate.

- Risk visibility — decision-makers can evaluate worst-case and best-case scenarios rather than relying on a single plan.

- Distribution-awareness — probabilistic models can capture asymmetric distributions, fat tails, and multimodal patterns that point estimates obscure.

- Computational cost — simulation-based probabilistic methods require many iterations, increasing computation time and resource requirements.

WFM Applications

Monte Carlo simulation. By running thousands of simulated scenarios—each drawing arrival rates, handle times, and other parameters from probability distributions—Monte Carlo methods produce empirical distributions of outcomes such as service level, wait time, abandonment rate, and required staffing. This approach is central to simulation-based capacity planning and is implemented in tools ranging from custom spreadsheet models to dedicated simulation platforms.[2]
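
A minimal sketch of this idea, using only the Python standard library, is shown below. The input distributions (normal volume, log-normal AHT), their parameters, and the 80/20 target are assumptions chosen for illustration; the helper names are hypothetical. Each scenario draws a volume and an AHT, then uses the Erlang C formula to find the smallest agent count meeting the target.

```python
import math
import random


def erlang_c_wait_prob(load, agents):
    """Erlang C probability of waiting; load is in erlangs, agents > load."""
    b = 1.0  # Erlang B blocking with zero servers
    for n in range(1, agents + 1):
        b = load * b / (n + load * b)
    return b / (1 - (load / agents) * (1 - b))


def service_level(load, agents, aht, threshold):
    """Fraction of calls answered within `threshold` seconds (Erlang C)."""
    pw = erlang_c_wait_prob(load, agents)
    return 1 - pw * math.exp(-(agents - load) * threshold / aht)


def required_agents(load, aht, target=0.80, threshold=20):
    """Smallest agent count whose Erlang C service level meets the target."""
    n = math.floor(load) + 1  # smallest count that keeps the queue stable
    while service_level(load, n, aht, threshold) < target:
        n += 1
    return n


rng = random.Random(42)  # fixed seed so the run is repeatable
results = []
for _ in range(2000):
    volume = max(1.0, rng.gauss(200, 20))          # calls per 30-minute interval
    aht = rng.lognormvariate(math.log(270), 0.10)  # seconds
    load = volume * aht / 1800
    results.append(required_agents(load, aht))

results.sort()
p5, p50, p95 = (results[int(q * len(results))] for q in (0.05, 0.50, 0.95))
print(f"required agents: P5={p5}, median={p50}, P95={p95}")
```

The output is a distribution of staffing requirements rather than one number. Fixing the seed makes the simulation itself reproducible, which is a useful habit when results need to be audited.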

Probabilistic forecasting. Rather than producing a single forecast line, probabilistic forecasting generates a distribution of possible future volumes for each interval. Bayesian methods, quantile regression, and ensemble approaches all produce distributional forecasts. These methods are increasingly available in machine learning forecasting tools and are essential for staffing to a percentile rather than the mean.[3]

Stochastic simulation for capacity planning. Long-range capacity planning must account for uncertainty in demand growth, attrition rates, hiring pipeline yields, and training durations. Stochastic models simulate many possible futures to produce distributions of staffing gaps or surpluses, enabling planners to quantify the risk of under- or over-investment in capacity.
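
The following sketch simulates one such capacity question: where does headcount end up after twelve months of random attrition and training outcomes? All parameters (4% monthly attrition, five hires starting each month, 85% training pass rate, a requirement of 100 agents) are hypothetical, and the model deliberately ignores ramp time and demand growth.

```python
import random


def simulate_headcount(rng, start=100, months=12, monthly_attrition=0.04,
                       hires_started=5, training_pass_rate=0.85):
    """Simulate one possible 12-month headcount path and return the ending value."""
    headcount = start
    for _ in range(months):
        # Each agent leaves independently with the monthly attrition probability.
        leavers = sum(rng.random() < monthly_attrition for _ in range(headcount))
        # Each new hire completes training independently with the pass rate.
        graduates = sum(rng.random() < training_pass_rate for _ in range(hires_started))
        headcount += graduates - leavers
    return headcount


rng = random.Random(7)
required = 100
endings = sorted(simulate_headcount(rng) for _ in range(2000))

shortfall_prob = sum(h < required for h in endings) / len(endings)
p10, p50, p90 = (endings[int(q * len(endings))] for q in (0.10, 0.50, 0.90))
print(f"P(ending headcount < {required}) = {shortfall_prob:.2f}")
print(f"P10/P50/P90 ending headcount = {p10}/{p50}/{p90}")
```

The shortfall probability is the quantity a deterministic headcount plan cannot provide: it states how often the same hiring plan would leave the operation short.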

Confidence intervals on performance metrics. When reporting historical performance, probabilistic methods can attach confidence intervals to metrics like service level or average speed of answer, distinguishing genuine performance changes from statistical noise. This is the foundation of variance harvesting—separating signal from noise in operational data.

Limitations

Probabilistic models are not universally superior to deterministic ones. They introduce complexity that may not be warranted when uncertainty is low, when decisions are insensitive to the range of possible outcomes, or when stakeholders lack the statistical literacy to interpret distributional outputs. A Monte Carlo simulation that produces a 90% confidence interval of [41, 43] agents adds little value over a deterministic estimate of 42; the computational and communication overhead is not justified by the narrow uncertainty band.

The Execution-Meaning Distinction

One of the most important and frequently misunderstood concepts in applied modeling is the distinction between how a model executes and what its outputs mean in terms of the system being modeled. This distinction was introduced on the AI Scaffolding Framework page and deserves extended treatment here.

Deterministic Execution, Probabilistic Meaning

The Erlang C formula is the canonical example. Given inputs of arrival rate (λ), average handle time (s), and number of agents (N), the formula deterministically computes the probability that a call will wait in queue (Pw) and the expected wait time (Wq). The calculation involves no random number generation; the same inputs always yield the same outputs.
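
A compact implementation makes the deterministic execution concrete. The sketch below uses the standard Erlang B recursion to obtain Erlang C; the function name and the choice of seconds as the time unit are assumptions for illustration.

```python
def erlang_c(arrival_rate, aht, agents):
    """Return (Pw, Wq) for an M/M/N queue.

    arrival_rate: calls per second (lambda)
    aht:          average handle time in seconds (s)
    agents:       number of agents (N)
    """
    load = arrival_rate * aht  # offered load in erlangs
    if agents <= load:
        raise ValueError("unstable queue: agents must exceed the offered load")
    b = 1.0  # Erlang B blocking with zero servers
    for n in range(1, agents + 1):
        b = load * b / (n + load * b)
    pw = b / (1 - (load / agents) * (1 - b))  # probability a call waits
    wq = pw * aht / (agents - load)           # expected wait in seconds
    return pw, wq


# 200 calls per 30-minute interval, 270-second AHT, 35 agents.
first = erlang_c(arrival_rate=200 / 1800, aht=270, agents=35)
second = erlang_c(arrival_rate=200 / 1800, aht=270, agents=35)

print(first)
print(first == second)  # no randomness is involved, so the results match
```

There is no random number generator anywhere in the function, which is why repeated calls with the same inputs return identical values.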

However, the system being modeled is inherently probabilistic. The Erlang-C formula assumes that call arrivals follow a Poisson process (random arrivals with exponentially distributed inter-arrival times) and that service times are exponentially distributed. The formula's outputs are long-run (steady-state) quantities under these assumptions: Pw is a probability and Wq is an expected value. When the formula reports a service level of 80/20 (80% of calls answered within 20 seconds), it means that on average, over many intervals with these exact conditions, 80% of calls will be answered within 20 seconds. Any individual interval may deviate substantially from this expectation.[4]

This has profound practical implications. A staffing plan built on Erlang-C outputs is deterministic in construction but probabilistic in the reality it describes. The plan represents a single point on a distribution of possible outcomes. When actual results deviate from plan—as they inevitably do—the deviation is not necessarily a failure of execution; it may simply be the natural variance of a stochastic system.
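
The scatter around the expected value can be seen by simulating the queue directly. The sketch below is a simplified first-come, first-served M/M/N simulation with no abandonment, using hypothetical parameters (200 calls per 30-minute interval, 270-second AHT, 35 agents, 20-second threshold). It reports the service level of each simulated interval under identical conditions.

```python
import heapq
import random


def simulate_interval_service_levels(rng, arrival_rate, aht, agents,
                                     threshold=20, interval=1800, n_intervals=200):
    """Simulate an M/M/N queue and return the service level of each interval."""
    free_at = [0.0] * agents  # time at which each agent next becomes free
    heapq.heapify(free_at)
    offered = [0] * n_intervals
    answered_fast = [0] * n_intervals
    horizon = interval * n_intervals
    t = 0.0
    while True:
        t += rng.expovariate(arrival_rate)  # Poisson arrivals
        if t >= horizon:
            break
        # The call is served by whichever agent frees up first.
        start = max(t, heapq.heappop(free_at))
        heapq.heappush(free_at, start + rng.expovariate(1 / aht))
        i = int(t // interval)
        offered[i] += 1
        if start - t <= threshold:
            answered_fast[i] += 1
    return [a / o for a, o in zip(answered_fast, offered) if o > 0]


rng = random.Random(2024)
levels = simulate_interval_service_levels(rng, arrival_rate=200 / 1800,
                                          aht=270, agents=35)
average = sum(levels) / len(levels)
print(f"intervals: {len(levels)}, average SL: {average:.2f}")
print(f"lowest interval: {min(levels):.2f}, highest interval: {max(levels):.2f}")
```

Nothing changes between intervals except the random draws, yet individual intervals land well above and below the average. That spread is the natural variance a deterministic staffing plan does not display.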

Probabilistic Execution, Deterministic Meaning

The reverse case also exists, though it is less common in WFM. A randomized algorithm (such as a genetic algorithm for schedule optimization) uses random number generation internally, and on well-conditioned problems it may converge to the same optimal or near-optimal solution regardless of the random seed; on harder problems, different seeds can return different schedules of comparable quality. The execution is probabilistic, but the meaning of the output is deterministic: the schedule is a specific assignment of agents to shifts and activities. Some metaheuristic solvers used in schedule optimization exhibit this pattern.

Why This Matters for Practitioners

Failure to recognize the execution-meaning distinction leads to two common errors:

- Treating deterministic outputs as certainties. Because the Erlang-C formula always returns the same number, analysts may treat its output as a guarantee rather than an expected value. This leads to under-staffing (no buffer for variance) and surprise when actual results deviate from plan.

- Treating probabilistic outputs as unreliable. Because a Monte Carlo simulation produces a range of results rather than a single number, some stakeholders dismiss it as "not giving a real answer." In fact, the range is the answer; a point estimate that conceals the range is less informative, not more.

The mature analytical approach is to understand both the execution behavior (deterministic or probabilistic) and the semantic meaning (whether the output represents a point value, an expected value, or a distribution), and to communicate the appropriate level of confidence to stakeholders.

When to Use Which

The choice between deterministic and probabilistic approaches depends on several factors. The following framework provides practical guidance for WFM practitioners.

Decision Framework

| Factor | Favors Deterministic | Favors Probabilistic |
|---|---|---|
| Uncertainty in inputs | Low — inputs are well-known or tightly bounded | High — inputs are estimates with significant error bands |
| Sensitivity of decision | Low — outcome changes little across the plausible input range | High — small input changes produce large outcome swings |
| Cost of error | Symmetric — over-staffing and under-staffing are roughly equally costly | Asymmetric — one direction of error is much more costly (e.g., service level penalties) |
| Stakeholder audience | Needs a single number for planning | Can interpret ranges and make risk-adjusted decisions |
| Time horizon | Short — near-term (intraday, next day) where uncertainty is lower | Long — multi-week or quarterly where uncertainty compounds |
| Computational budget | Limited — real-time or near-real-time decisions | Ample — batch planning processes that can run overnight |
| Regulatory/compliance | Rules-based — pass/fail compliance checks | Risk-based — demonstrate that risk has been quantified and managed |

Rules of Thumb

- Intraday management — predominantly deterministic. Real-time reforecasting and staffing adjustments operate on short time horizons where the additional value of distributional information rarely justifies the complexity.

- Weekly/daily scheduling — deterministic with probabilistic inputs. Use probabilistic forecasting to generate inputs, then feed a percentile (e.g., the 70th percentile volume forecast) into a deterministic scheduler. This is the hybrid approach described in the section below.

- Capacity planning — predominantly probabilistic. Long-range decisions about hiring, training, and headcount carry high uncertainty and asymmetric cost; probabilistic models that quantify the risk of shortfall are essential for defensible planning.[5]

- Performance analysis — probabilistic for root cause analysis (distinguishing signal from noise), deterministic for reporting (stakeholders want metrics, not distributions).

- Compliance and labor rules — deterministic. Rules are binary: met or not met.

Common Mistakes

WFM practitioners commonly make several errors related to the deterministic-probabilistic distinction.

Treating Probabilistic Outputs as Point Estimates

When a probabilistic forecast produces a distribution of possible volumes, it is tempting to extract only the mean or median and discard the rest. This collapses a rich distributional output into a single number, losing the uncertainty information that motivated the probabilistic approach in the first place. If the 50th percentile forecast is 500 calls and the 90th percentile is 620 calls, staffing to 500 ignores a 50% chance of being under-staffed. The concept of staffing to a percentile addresses this directly.

Treating Deterministic Outputs as Certainties

An Erlang-C calculation that returns a service level of 80% does not mean that 80% of calls will be answered within the threshold in every interval. It means that in expectation, across many intervals with identical conditions, the service level will average 80%. Individual intervals will scatter around this value. Analysts who treat the 80% as a guarantee and react to every interval that deviates are chasing noise rather than managing performance.

Over-Engineering with Probability

Not every problem requires a probabilistic treatment. If the decision is insensitive to the range of uncertainty—for example, if the staffing requirement is 42 agents regardless of whether volume is 480 or 520 calls—a Monte Carlo simulation adds complexity without value. The appropriate level of analytical sophistication should be matched to the decision's sensitivity and the cost of error.

Ignoring Distributional Shape

When probabilistic methods are used, practitioners sometimes focus exclusively on the mean and standard deviation, implicitly assuming a normal (Gaussian) distribution. Many WFM-relevant distributions are not normal: call volumes often exhibit right-skew (occasional high-volume days), handle times are typically right-skewed with a long tail, and attrition rates may follow a bimodal pattern (new-hire attrition vs. long-tenure attrition). Applying symmetric confidence intervals to asymmetric distributions leads to systematic bias.
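
The effect is easy to reproduce with simulated data. In the sketch below, handle times are drawn from a log-normal distribution with a median of 240 seconds (an assumed, hypothetical shape), and a symmetric "mean plus or minus 1.645 standard deviations" interval is compared with the empirical 5th and 95th percentiles.

```python
import math
import random
import statistics

rng = random.Random(1)
# Hypothetical handle times: log-normal with median 240 s and sigma 0.6.
handle_times = [rng.lognormvariate(math.log(240), 0.6) for _ in range(20000)]

mean = statistics.fmean(handle_times)
median = statistics.median(handle_times)
sd = statistics.stdev(handle_times)

# Symmetric 90% interval, valid only if the data were normally distributed.
z = statistics.NormalDist().inv_cdf(0.95)
sym_low, sym_high = mean - z * sd, mean + z * sd

# Empirical 5th and 95th percentiles, which respect the actual shape.
cuts = statistics.quantiles(handle_times, n=20)  # cut points at 5%, 10%, ..., 95%
emp_low, emp_high = cuts[0], cuts[-1]

print(f"mean {mean:.0f} s, median {median:.0f} s")
print(f"symmetric interval: {sym_low:.0f} to {sym_high:.0f} s")
print(f"empirical interval: {emp_low:.0f} to {emp_high:.0f} s")
```

With right-skewed data the symmetric interval reaches too low (it can extend to implausibly short or even negative handle times) and stops short of the upper tail, which is where long calls consume capacity.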

Conflating Uncertainty and Variability

Uncertainty (epistemic) reflects what is not known—it can be reduced with better data or models. Variability (aleatory) reflects genuine randomness in the system—it cannot be eliminated. A probabilistic model captures both, but they have different implications: uncertainty suggests investment in better measurement, while variability suggests investment in flexibility and buffers.[6]

Hybrid Approaches

Main article: Capacity Planning Methods

In practice, most WFM analytical workflows combine deterministic and probabilistic elements. Several common hybrid patterns appear across the industry.

Probabilistic Forecast, Deterministic Scheduler

The most prevalent hybrid approach feeds a probabilistic forecast into a deterministic scheduling engine. The forecast model produces a distribution of possible volumes for each interval; the planner selects a percentile (e.g., the 70th percentile for a conservative plan or the 50th for a cost-minimizing plan) and passes it to the scheduling optimizer as a single-valued requirement. This approach captures uncertainty at the forecasting stage while maintaining the computational efficiency and interpretability of deterministic scheduling.

The choice of percentile is itself a risk management decision. Staffing to the median accepts a roughly 50% chance of being under-staffed in any given interval (staffing to the mean carries the same risk only when the forecast distribution is symmetric); staffing to the 80th percentile reduces that risk to approximately 20% but increases labor costs. This tradeoff is organization-specific and should be governed by explicit policy rather than analyst discretion.
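
The link between the chosen percentile and the accepted risk can be sketched directly. The forecast distribution below (normal, centered on 500 calls with a standard deviation of 60) is a hypothetical stand-in for whatever a probabilistic forecast model would produce.

```python
import random
import statistics

rng = random.Random(3)
# Hypothetical forecast distribution for one interval, as simulated volumes.
volumes = [rng.gauss(500, 60) for _ in range(10000)]

# With n=100, cuts[k - 1] is the k-th percentile of the simulated volumes.
cuts = statistics.quantiles(volumes, n=100)

risks = {}
for pct in (50, 70, 80, 90):
    level = cuts[pct - 1]
    # Share of scenarios in which actual volume exceeds the planned volume.
    risks[pct] = sum(v > level for v in volumes) / len(volumes)
    print(f"P{pct}: plan for {level:.0f} calls, "
          f"P(volume exceeds plan) = {risks[pct]:.2f}")
```

Each step up in percentile buys a lower chance of under-staffing at the price of a higher planned volume, and therefore more scheduled labor.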

Deterministic Plan, Probabilistic Stress Test

A second hybrid pattern builds a deterministic operational plan (schedule, hiring plan, capacity model) and then stress-tests it against probabilistic scenarios. Monte Carlo simulation or scenario analysis generates hundreds or thousands of possible futures; the deterministic plan is evaluated against each one to produce a distribution of outcomes. This answers questions like "what is the probability that our schedule will deliver below 70% service level?" or "how often will our hiring plan leave us short-staffed?"
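
A minimal sketch of this pattern holds the plan fixed at 35 agents and evaluates it against many simulated volumes. The volume distribution, the 270-second AHT, and the use of Erlang C as the evaluation model are assumptions for illustration; a production stress test would more likely use a full simulation.

```python
import math
import random


def erlang_c_service_level(volume, aht, agents, threshold=20, interval=1800):
    """Erlang C service level for one interval; 0.0 if the plan is overloaded."""
    load = volume * aht / interval  # offered load in erlangs
    if agents <= load:
        return 0.0  # simplification: an overloaded interval counts as a miss
    b = 1.0
    for n in range(1, agents + 1):
        b = load * b / (n + load * b)
    pw = b / (1 - (load / agents) * (1 - b))
    return 1 - pw * math.exp(-(agents - load) * threshold / aht)


planned_agents = 35  # the fixed, deterministic plan
rng = random.Random(11)

# Evaluate the same plan against 5000 possible volumes for the interval.
outcomes = [
    erlang_c_service_level(max(0.0, rng.gauss(200, 20)), 270, planned_agents)
    for _ in range(5000)
]

prob_below_70 = sum(sl < 0.70 for sl in outcomes) / len(outcomes)
print(f"service level at forecast volume: "
      f"{erlang_c_service_level(200, 270, planned_agents):.2f}")
print(f"P(service level < 70%) = {prob_below_70:.2f}")
```

The plan looks adequate at the forecast volume, while the stress test shows how often it would fall short once forecast error is taken into account.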

Variance Harvesting

Variance Harvesting is a hybrid analytical method that applies probabilistic reasoning to deterministic performance metrics. It decomposes observed variance in metrics like service level or occupancy into explainable components (volume variance, AHT variance, staffing variance, schedule adherence variance) and a residual that represents genuine uncertainty. By separating signal from noise, variance harvesting enables more effective root cause analysis and more realistic performance expectations.

Ensemble Methods in Forecasting

Modern forecasting approaches increasingly use ensemble methods that combine multiple deterministic models probabilistically. Each model in the ensemble produces a point forecast; the ensemble combines them using weighted averaging, where the weights may themselves be estimated probabilistically. The result is a distributional forecast that captures both model uncertainty (different models disagree) and parametric uncertainty (individual models are imprecise).[3]
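
The weighted-averaging step can be sketched in a few lines. The model names, point forecasts, and weights below are hypothetical, and the spread computed here is only a rough indicator of how much the members disagree, not a full predictive distribution.

```python
import math

# Hypothetical point forecasts for one interval from three models.
forecasts = {
    "seasonal_naive": 480.0,
    "exponential_smoothing": 510.0,
    "regression": 530.0,
}
# Hypothetical weights (for example, based on past accuracy); they sum to 1.
weights = {
    "seasonal_naive": 0.2,
    "exponential_smoothing": 0.5,
    "regression": 0.3,
}

# Weighted average of the member forecasts.
combined = sum(weights[m] * forecasts[m] for m in forecasts)

# Weighted spread of the members around the combined forecast.
disagreement = math.sqrt(
    sum(weights[m] * (forecasts[m] - combined) ** 2 for m in forecasts)
)

print(f"combined forecast: {combined:.1f}")
print(f"model disagreement (weighted standard deviation): {disagreement:.1f}")
```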

Mathematical Foundations

This section provides the minimal mathematical background needed for a WFM practitioner to work competently with both model types. It is not a substitute for a statistics course but covers the concepts most frequently encountered in operational analytics.

Expected Value and Variance

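The expected value (mean) of a random variable X is the long-run average of its realized values:

$$E[X] = \sum_i x_i \, P(X = x_i) \quad \text{(discrete case)}$$

The variance measures how spread out the values are around the mean:

$$\operatorname{Var}(X) = E\left[(X - E[X])^2\right]$$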

The standard deviation is the square root of the variance and is expressed in the same units as the original variable, making it more interpretable for practitioners.

In WFM, the expected value of call volume in a given interval is the forecast; the variance describes how much actual volume tends to deviate from that forecast. A probabilistic model captures both; a deterministic model captures only the expected value.

Probability Distributions

Several distributions appear frequently in WFM modeling:

- Poisson distribution — models the number of events (calls, chats, emails) arriving in a fixed time interval, given a known average rate. Fundamental to Erlang-C and queueing theory. The key property is that the variance equals the mean, which means relative variability decreases as volume increases (the coefficient of variation is 1/√λ).

- Exponential distribution — models the time between events in a Poisson process and the service time in the simplest queueing models. Memoryless property: the probability of completion in the next moment is independent of how much time has already elapsed.

- Normal (Gaussian) distribution — the bell curve. Useful for modeling aggregate quantities (daily volume totals, weekly AHT averages) via the Central Limit Theorem, even when the underlying individual events are not normally distributed.

- Log-normal distribution — models quantities that are the product of many independent factors, such as handle times. Right-skewed with a long tail, which matches the empirical pattern of most handle time distributions.
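
The Poisson property noted above can be checked numerically. The sketch below computes the mean and variance directly from the probability mass function for three arrival rates and compares the resulting coefficient of variation with 1/√λ. The function names are illustrative, and the sum is truncated far into the upper tail, where the remaining probability is negligible.

```python
import math


def poisson_pmf(k, lam):
    """P(X = k) for a Poisson variable, computed in log space to avoid overflow."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def poisson_moments(lam):
    """Mean and variance computed from the pmf, truncated far into the tail."""
    upper = int(lam + 12 * math.sqrt(lam)) + 20
    ks = range(upper + 1)
    probs = [poisson_pmf(k, lam) for k in ks]
    mean = sum(k * p for k, p in zip(ks, probs))
    var = sum((k - mean) ** 2 * p for k, p in zip(ks, probs))
    return mean, var


for lam in (25, 100, 400):
    mean, var = poisson_moments(lam)
    cv = math.sqrt(var) / mean
    print(f"lambda={lam}: mean={mean:.2f}, variance={var:.2f}, "
          f"CV={cv:.3f}, 1/sqrt(lambda)={1 / math.sqrt(lam):.3f}")
```

Quadrupling the volume halves the relative variability, which is why large queues are proportionally easier to staff than small ones.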

Confidence Intervals and Prediction Intervals

A confidence interval quantifies uncertainty about an estimated parameter (e.g., "we are 95% confident the true mean AHT is between 240 and 260 seconds"). A prediction interval quantifies uncertainty about a future observation (e.g., "we predict tomorrow's volume will fall between 480 and 620 calls with 90% probability"). A prediction interval is wider than a confidence interval computed from the same model at the same confidence level, because it accounts for both parameter uncertainty and random variability.

In WFM, prediction intervals on forecasts are directly actionable: they define the range of staffing requirements a schedule should be robust to. Confidence intervals on performance metrics help distinguish genuine changes from statistical noise.
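
The difference between the two intervals can be illustrated with a small sample. The daily volumes below are hypothetical, and the sketch uses a normal quantile for simplicity; with only twelve observations, a Student's t quantile would give somewhat wider intervals.

```python
import math
import statistics

# Hypothetical daily call volumes for twelve comparable days.
volumes = [512, 498, 530, 475, 505, 521, 489, 540, 495, 510, 483, 526]
n = len(volumes)
mean = statistics.fmean(volumes)
sd = statistics.stdev(volumes)
z = statistics.NormalDist().inv_cdf(0.975)  # two-sided 95%

# Confidence interval: uncertainty about the true mean daily volume.
ci_half = z * sd / math.sqrt(n)

# Prediction interval: uncertainty about a single future day's volume.
pi_half = z * sd * math.sqrt(1 + 1 / n)

print(f"mean: {mean:.1f}")
print(f"95% confidence interval: {mean - ci_half:.1f} to {mean + ci_half:.1f}")
print(f"95% prediction interval: {mean - pi_half:.1f} to {mean + pi_half:.1f}")
```

The confidence interval shrinks as more days are observed, while the prediction interval cannot shrink below the day-to-day variability itself.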

Conditional Probability and Bayes' Theorem

Bayes' theorem provides the mathematical foundation for updating beliefs as new evidence arrives:

$$P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)}$$

In WFM, Bayesian updating is used in real-time reforecasting (updating the day's volume forecast as actual calls arrive), in adaptive scheduling (adjusting break placement based on emerging demand patterns), and in machine learning models that combine prior domain knowledge with observed data.
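
A minimal sketch of intraday updating uses the Gamma-Poisson conjugate pair, one of several ways to implement Bayesian reforecasting. The prior parameters and observed counts below are hypothetical, and the model assumes a constant arrival rate across intervals, which real intraday profiles do not have.

```python
def update_rate(prior_shape, prior_rate, observed_calls, observed_intervals):
    """Update a Gamma(shape, rate) belief about the per-interval arrival rate
    after observing Poisson call counts."""
    return prior_shape + observed_calls, prior_rate + observed_intervals


# Prior centered on the morning forecast of 100 calls per interval:
# prior mean = shape / rate = 200 / 2 = 100.
shape, rate = 200.0, 2.0
prior_mean = shape / rate

# Four intervals into the day, 460 calls have arrived (115 per interval).
shape, rate = update_rate(shape, rate, observed_calls=460, observed_intervals=4)

# Posterior mean = (200 + 460) / (2 + 4) = 110 calls per interval.
posterior_mean = shape / rate

print(f"prior mean: {prior_mean:.0f}, posterior mean: {posterior_mean:.0f}")
```

The updated estimate sits between the original forecast and the observed run rate, and it moves closer to the observations as more intervals accumulate.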

Tools and Implementation

Spreadsheet Approaches

Many WFM teams operate primarily in spreadsheets, which can support both deterministic and basic probabilistic modeling:

- Deterministic: Erlang-C calculators (widely available as Excel add-ins or VBA macros), staffing requirement calculations, schedule coverage analysis.

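- Probabilistic: Monte Carlo simulation via repeated random draws, typically by passing RAND() to an inverse-CDF function such as NORM.INV(). POISSON.DIST() returns probabilities rather than random draws, so Poisson samples require a lookup against its cumulative output or, at higher volumes, a normal approximation. Data tables or VBA loops can generate thousands of scenarios. While not as efficient as purpose-built tools, spreadsheet Monte Carlo is accessible and often sufficient for capacity planning scenarios.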

Python Libraries

Python's scientific computing ecosystem provides robust support for both modeling paradigms:

- NumPy — random number generation, array operations, and basic statistical functions. The numpy.random module provides generators for all standard distributions.

- SciPy — optimization solvers (scipy.optimize.linprog for linear programming), statistical distributions (scipy.stats), and hypothesis testing.

- SimPy — a discrete-event simulation library for building custom contact center simulations that model queues, agent pools, skill-based routing, and other operational complexities.

- PyMC — a Bayesian modeling library for probabilistic forecasting, parameter estimation with uncertainty, and hierarchical models.

- Pandas — data manipulation and analysis, essential for preparing input data for both deterministic and probabilistic models.

Simulation Platforms

Dedicated simulation platforms provide graphical interfaces for building and running probabilistic models without programming. These range from general-purpose tools (Arena, AnyLogic, Simul8) to contact-center-specific solutions that incorporate WFM domain knowledge such as skill-based routing, schedule adherence, and multi-channel interaction handling.

WFM Platform Capabilities

Most enterprise WFM platforms (NICE, Verint, Calabrio, Genesys) operate primarily in the deterministic paradigm: they produce point forecasts, compute staffing requirements via Erlang-C or similar formulas, and generate schedules via mathematical optimization. Some newer platforms and modules are beginning to incorporate probabilistic elements, particularly in forecasting (prediction intervals) and "what-if" scenario analysis. Understanding the underlying model type helps practitioners interpret their platform's outputs correctly and identify when supplementary probabilistic analysis is needed.

Relationship to AI and Machine Learning

Main article: Artificial Intelligence Fundamentals

The deterministic-probabilistic distinction is central to understanding AI and machine learning in WFM. Most machine learning models are statistical in nature: a neural network for volume forecasting learns a mapping from input features to predictions from noisy historical data, so its outputs are estimates whose uncertainty may be reported explicitly (for example, as prediction intervals) or left hidden behind a single point forecast.

The AI Scaffolding Framework proposes that effective AI deployment in WFM requires understanding which components should be deterministic (business rules, compliance checks, schedule constraints) and which should be probabilistic (forecasting, anomaly detection, demand classification). Conflating the two—applying deterministic rules where probabilistic judgment is needed, or introducing probabilistic uncertainty where deterministic rules should govern—is a common source of AI implementation failure.

Machine learning models themselves span the spectrum. A trained decision tree executes deterministically (same input, same leaf node), while random forests introduce randomness during training through bootstrap sampling and feature randomization, so two forests fitted to the same data with different seeds can give slightly different predictions. Understanding where a model falls on this spectrum helps practitioners calibrate their trust in its outputs and design appropriate human-in-the-loop oversight.

See Also

- Erlang C

- Forecasting Methods

- Probabilistic Forecasting

- Probabilistic Scheduling

- AI Scaffolding Framework

- AI in Workforce Management

- Discrete-Event vs. Monte Carlo Simulation Models

- Simulation Software

- Schedule Optimization

- Schedule Generation

- Variance Harvesting

- Capacity Planning Methods

- Machine Learning for Volume Forecasting

- Staffing to Percentile vs Mean Forecast

- Artificial Intelligence Fundamentals

- Machine Learning Concepts

References

- [1] "Staff scheduling and rostering: A review of applications, methods and models". European Journal of Operational Research. 153(1): 3–27. 2004. doi:10.1016/S0377-2217(03)00095-X.

- [2] Law, Averill M. Simulation Modeling and Analysis. McGraw-Hill Education. 2015. ISBN 978-0-07-340132-4.

- [3] Forecasting: Principles and Practice. OTexts. 2021. ISBN 978-0-9875071-3-6.

- [4] Koole, Ger. Call Center Optimization. MG Books. 2013. ISBN 978-90-820179-0-5.

- [5] "Telephone Call Centers: Tutorial, Review, and Research Prospects". Manufacturing & Service Operations Management. 5(2): 79–141. 2003. doi:10.1287/msom.5.2.79.16071.

- [6] Uncertainty: A Guide to Dealing with Uncertainty in Quantitative Risk and Policy Analysis. Cambridge University Press. 1990. ISBN 978-0-521-42744-4.