Workforce Clustering and Segmentation

Workforce Clustering and Segmentation applies unsupervised learning to group agents, queues, demand patterns, or schedule preferences into meaningful segments for differentiated management. Instead of treating all agents as interchangeable resources or all demand days as identical, clustering reveals natural groupings that inform targeted coaching, hierarchical forecasting, and preference-based schedule design. The goal is not prediction — it is structure discovery.

Overview

A 500-agent contact center contains multitudes. Some agents handle calls quickly but sacrifice quality. Others are slow but thorough. Some are consistent; others are volatile. Treating them identically — same coaching, same targets, same schedule options — ignores information that clustering makes actionable.

Similarly, demand patterns vary. Mondays look different from Wednesdays. January looks different from July. But within these variations, there are clusters of similar patterns — groups of days that share a shape. Identifying these clusters enables model selection: fit one forecast model per cluster rather than one model for all days.

Clustering is fundamentally different from supervised learning. There is no "right answer" to validate against. The value of a clustering solution is measured by its utility — do the segments produce different and actionable management strategies? A 4-cluster agent segmentation that leads to 4 distinct coaching interventions is more valuable than a 12-cluster solution that the organization cannot differentiate.

Mathematical Foundation

Distance Metrics

Clustering requires a notion of similarity (or distance) between observations. For WFM data:

Euclidean distance (most common):

$$d(\mathbf{x}, \mathbf{y}) = \sqrt{\sum_{j=1}^{p} (x_j - y_j)^2}$$

where $p$ is the number of features. Appropriate when features are on comparable scales. For WFM metrics (AHT in seconds, quality on a 1-5 scale, adherence as a percentage), standardization is required before computing Euclidean distance.

Manhattan distance:

$$d(\mathbf{x}, \mathbf{y}) = \sum_{j=1}^{p} \lvert x_j - y_j \rvert$$

Less sensitive to outliers than Euclidean distance, because each difference enters linearly rather than squared. Useful when WFM data contains extreme values (for example, agents whose mean AHT is inflated by a few very long calls).
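Here is a small numeric check of why squaring matters. It uses two hypothetical, already-standardized agents that differ by 6 standard units on AHT and by 1 unit on two other metrics. Under Euclidean distance, the AHT gap accounts for about 95% of the squared distance. Under Manhattan distance, it accounts for 75% of the total. The helper names are illustrative only.

```python
import numpy as np

def euclidean(x: np.ndarray, y: np.ndarray) -> float:
    """Square root of the summed squared feature differences."""
    return float(np.sqrt(np.sum((x - y) ** 2)))

def manhattan(x: np.ndarray, y: np.ndarray) -> float:
    """Sum of absolute feature differences (city-block distance)."""
    return float(np.sum(np.abs(x - y)))

# Hypothetical standardized agents: [AHT, quality, adherence] in z-score units
a = np.array([0.0, 0.0, 0.0])
b = np.array([6.0, 1.0, 1.0])  # extreme AHT gap, modest gaps elsewhere

diff = b - a
aht_share_euclid = diff[0] ** 2 / np.sum(diff ** 2)        # AHT share of the squared distance
aht_share_manhattan = abs(diff[0]) / np.sum(np.abs(diff))  # AHT share of the total distance

print(f"Euclidean {euclidean(a, b):.3f}, AHT share {aht_share_euclid:.0%}")
print(f"Manhattan {manhattan(a, b):.3f}, AHT share {aht_share_manhattan:.0%}")
```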

Dynamic Time Warping (DTW): For comparing demand curves that may be similar in shape but shifted in time. Two days with the same volume pattern but offset by 30 minutes are "close" under DTW but "far" under Euclidean distance. Valuable for demand pattern clustering.
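To make the DTW idea concrete, here is a minimal sketch of the classic dynamic-programming recurrence in plain NumPy. It uses an absolute-difference local cost and no warping window. The two 96-interval demand curves are hypothetical: identical bell-shaped peaks, one shifted by two 15-minute intervals. Libraries such as tslearn (listed under Key libraries) provide optimized DTW implementations for real workloads.

```python
import numpy as np

def dtw_distance(x: np.ndarray, y: np.ndarray) -> float:
    """Classic DTW: cheapest cumulative |x_i - y_j| cost over all monotone alignments."""
    n, m = len(x), len(y)
    cost = np.full((n + 1, m + 1), np.inf)
    cost[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(x[i - 1] - y[j - 1])
            # Extend the cheapest path: a diagonal match, or stretch one series by repeating a point
            cost[i, j] = d + min(cost[i - 1, j - 1], cost[i - 1, j], cost[i, j - 1])
    return float(cost[n, m])

# Hypothetical intraday shapes over 96 fifteen-minute intervals, each normalized to sum to 1
t = np.arange(96)
day_a = np.exp(-0.5 * ((t - 40) / 4.0) ** 2)
day_b = np.exp(-0.5 * ((t - 42) / 4.0) ** 2)  # same peak, 30 minutes later
day_a /= day_a.sum()
day_b /= day_b.sum()

euclid = float(np.sqrt(np.sum((day_a - day_b) ** 2)))
dtw = dtw_distance(day_a, day_b)
print(f"Euclidean: {euclid:.4f}  DTW: {dtw:.2e}")
```

The shifted day is essentially identical to the original under DTW, while Euclidean distance reports a clear difference. The quadratic double loop is fine for a few hundred days but scales poorly to large archives.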

Feature Standardization

WFM metrics operate on wildly different scales:

- AHT: 200-600 seconds

- Quality score: 1.0-5.0

- Adherence: 70-100%

- FCR: 50-95%

- Calls handled per day: 20-80

Without standardization, AHT (range of about 400 seconds) dominates any Euclidean distance, while quality score (range of about 4 points) is effectively ignored. Standard approaches:

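Z-score standardization: $z = \dfrac{x - \mu}{\sigma}$. Centers each feature at 0 with unit variance. Preferred for K-means.

Min-max scaling: $x' = \dfrac{x - x_{\min}}{x_{\max} - x_{\min}}$. Scales each feature to [0, 1]. Preferred when the original range is meaningful.

Robust scaling: $x' = \dfrac{x - \operatorname{median}(x)}{\operatorname{IQR}(x)}$. Resistant to outliers. Recommended for WFM data with extreme values.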
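The three scalings can be compared side by side on a handful of hypothetical agents, one of whom is an AHT outlier. This is a NumPy-only sketch; the helper names are illustrative. Scikit-learn's `StandardScaler`, `MinMaxScaler` and `RobustScaler` implement the same ideas.

```python
import numpy as np

# Hypothetical agents: column 0 = mean AHT (seconds), column 1 = quality score (1-5).
# The last agent is an AHT outlier.
X = np.array([
    [300.0, 4.1],
    [350.0, 3.8],
    [420.0, 4.4],
    [380.0, 3.2],
    [950.0, 3.9],
])

def z_score(X: np.ndarray) -> np.ndarray:
    """Center each column at its mean and divide by its standard deviation."""
    return (X - X.mean(axis=0)) / X.std(axis=0)

def min_max(X: np.ndarray) -> np.ndarray:
    """Rescale each column to [0, 1] using its observed minimum and maximum."""
    lo, hi = X.min(axis=0), X.max(axis=0)
    return (X - lo) / (hi - lo)

def robust(X: np.ndarray) -> np.ndarray:
    """Center each column at its median and divide by its interquartile range."""
    q1, med, q3 = np.percentile(X, [25, 50, 75], axis=0)
    return (X - med) / (q3 - q1)

print("raw ranges:", X.max(axis=0) - X.min(axis=0))  # AHT spread dwarfs quality spread
for name, scale in [("z-score", z_score), ("min-max", min_max), ("robust", robust)]:
    print(name, np.round(scale(X), 2).tolist())
```

In the min-max output, the four typical agents are squeezed between 0 and roughly 0.18 on AHT because the outlier sets the maximum. Robust scaling keeps them on a scale comparable to quality and places the outlier at about 8.1 IQRs above the median.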

Method

K-Means Clustering

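K-means partitions $n$ observations into $k$ clusters $C_1, \dots, C_k$ by minimizing the within-cluster sum of squares:

$$\underset{C_1, \dots, C_k}{\arg\min} \sum_{i=1}^{k} \sum_{\mathbf{x} \in C_i} \lVert \mathbf{x} - \boldsymbol{\mu}_i \rVert^2$$

where $\boldsymbol{\mu}_i$ is the centroid of cluster $C_i$.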

Algorithm:

1. Choose $k$ (number of clusters).
2. Initialize $k$ centroids (K-means++ initialization is standard).
3. Assign each observation to the nearest centroid.
4. Recompute centroids as the mean of assigned observations.
5. Repeat steps 3-4 until convergence.
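The five steps above can be sketched in plain NumPy as Lloyd's algorithm with K-means++ seeding. Like scikit-learn's `n_init`, the sketch runs several restarts and keeps the one with the lowest inertia. The function names and the three-blob data set are hypothetical. In practice, `sklearn.cluster.KMeans` (used in the Implementation section) does this with more safeguards.

```python
import numpy as np

def _sq_dists(X: np.ndarray, C: np.ndarray) -> np.ndarray:
    """Squared Euclidean distance from every row of X to every row of C."""
    return ((X[:, None, :] - C[None, :, :]) ** 2).sum(axis=2)

def _kmeans_pp(X: np.ndarray, k: int, rng: np.random.Generator) -> np.ndarray:
    """Step 2 (K-means++): each new centroid is sampled with probability proportional
    to its squared distance from the nearest centroid chosen so far."""
    centroids = [X[rng.integers(len(X))]]
    for _ in range(1, k):
        d2 = _sq_dists(X, np.array(centroids)).min(axis=1)
        centroids.append(X[rng.choice(len(X), p=d2 / d2.sum())])
    return np.array(centroids)

def _lloyd(X, k, max_iter, rng):
    centroids = _kmeans_pp(X, k, rng)
    for _ in range(max_iter):
        labels = _sq_dists(X, centroids).argmin(axis=1)         # step 3: nearest centroid
        new = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                        for j in range(k)])                     # step 4: recompute means
        if np.allclose(new, centroids):                         # step 5: centroids stopped moving
            break
        centroids = new
    d2 = _sq_dists(X, centroids)
    labels = d2.argmin(axis=1)
    inertia = float(d2[np.arange(len(X)), labels].sum())        # within-cluster sum of squares
    return labels, centroids, inertia

def kmeans(X: np.ndarray, k: int, n_init: int = 10, max_iter: int = 100, seed: int = 0):
    """Run Lloyd's algorithm from n_init seedings and keep the lowest-inertia run."""
    rng = np.random.default_rng(seed)
    runs = [_lloyd(X, k, max_iter, rng) for _ in range(n_init)]
    return min(runs, key=lambda run: run[2])

# Hypothetical standardized data: three well-separated groups of 50 points
rng = np.random.default_rng(42)
centers = np.array([[-5.0, 0.0], [0.0, 5.0], [5.0, 0.0]])
X = np.vstack([c + rng.normal(scale=0.5, size=(50, 2)) for c in centers])
labels, centroids, inertia = kmeans(X, k=3)
print(np.round(centroids, 2), round(inertia, 1))
```

The restarts matter: a single unlucky seeding can place two centroids in one group and get stuck in a local optimum, which is why both this sketch and scikit-learn keep the best of several runs.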

Choosing k:

- Elbow method: Plot the within-cluster sum of squares against $k$. Look for the "elbow" where adding another cluster provides diminishing returns. For agent performance clustering, this typically lands at $k$ between 3 and 5.

- Silhouette score: Measures how similar each observation is to its own cluster vs. the nearest other cluster. Ranges from -1 (wrong cluster) to 1 (well-clustered). Average silhouette above 0.4 indicates reasonable structure; above 0.6 is strong.

- Business constraint: The organization can realistically differentiate management for 3-5 groups. More clusters may be statistically justified but operationally impractical.
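For reference, here is the per-observation silhouette described above, written out directly. Let $a(i)$ be the mean distance from point $i$ to the other members of its own cluster, and $b(i)$ the smallest mean distance from $i$ to the members of any other cluster. Then $s(i) = (b(i) - a(i)) / \max(a(i), b(i))$, with singleton clusters assigned 0. This NumPy sketch uses a hypothetical helper name and toy one-dimensional data; scikit-learn's `silhouette_score` reports the mean of these values.

```python
import numpy as np

def silhouette_values(X: np.ndarray, labels: np.ndarray) -> np.ndarray:
    """Per-observation silhouette s(i) = (b - a) / max(a, b) using Euclidean distance."""
    D = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=2))  # pairwise distances
    clusters = np.unique(labels)
    s = np.zeros(len(X))
    for i in range(len(X)):
        own = labels == labels[i]
        n_own = own.sum()
        if n_own == 1:
            continue  # singleton cluster: silhouette is defined as 0
        a = D[i, own].sum() / (n_own - 1)  # mean distance to other members (D[i, i] is 0)
        b = min(D[i, labels == c].mean() for c in clusters if c != labels[i])  # nearest other cluster
        s[i] = (b - a) / max(a, b)
    return s

X = np.array([[0.0], [1.0], [10.0], [11.0]])
good = silhouette_values(X, np.array([0, 0, 1, 1]))  # natural grouping
bad = silhouette_values(X, np.array([0, 1, 0, 1]))   # deliberately wrong grouping
print(good.round(3), round(float(good.mean()), 3))
print(bad.round(3), round(float(bad.mean()), 3))
```

The natural grouping scores about 0.9 (strong structure), while the deliberately wrong one is negative for every point.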

Hierarchical Clustering

Hierarchical clustering builds a tree (dendrogram) of nested clusters, allowing the analyst to choose the number of clusters after seeing the full hierarchy.

Agglomerative (bottom-up): Start with each observation as its own cluster. Repeatedly merge the two closest clusters. Linkage criteria:

- Ward's method: Merges clusters that minimize the increase in total within-cluster variance. Tends to produce compact, equally-sized clusters. Recommended for most WFM applications.

- Complete linkage: Uses the maximum pairwise distance between points in the two clusters. Produces tight clusters but is sensitive to outliers.

- Average linkage: Uses the mean pairwise distance between points in the two clusters. A compromise between Ward's method and complete linkage.
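The three linkage criteria differ only in how they score a candidate merge of two clusters. This sketch computes each score for one hypothetical pair of small clusters. The Ward score is written in its closed form $\frac{n_A n_B}{n_A + n_B} \lVert \boldsymbol{\mu}_A - \boldsymbol{\mu}_B \rVert^2$, and the code checks that it equals the increase in within-cluster sum of squares. The helper names are illustrative; `scipy.cluster.hierarchy.linkage` applies these rules repeatedly to build the dendrogram.

```python
import numpy as np

def pairwise(A: np.ndarray, B: np.ndarray) -> np.ndarray:
    """Euclidean distance between every point of A and every point of B."""
    return np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=2))

def complete_linkage(A, B) -> float:
    return float(pairwise(A, B).max())    # farthest cross-cluster pair

def average_linkage(A, B) -> float:
    return float(pairwise(A, B).mean())   # mean over all cross-cluster pairs

def ward_cost(A, B) -> float:
    """Increase in total within-cluster sum of squares if A and B are merged."""
    na, nb = len(A), len(B)
    diff = A.mean(axis=0) - B.mean(axis=0)
    return float(na * nb / (na + nb) * (diff @ diff))

def sse(P) -> float:
    """Within-cluster sum of squares of one cluster."""
    return float(((P - P.mean(axis=0)) ** 2).sum())

A = np.array([[0.0, 0.0], [0.0, 2.0]])
B = np.array([[4.0, 0.0], [4.0, 2.0]])

print(complete_linkage(A, B), average_linkage(A, B))
print(ward_cost(A, B), sse(np.vstack([A, B])) - sse(A) - sse(B))  # both 16.0
```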

The dendrogram visualization is particularly useful for WFM — it reveals whether clusters are clearly separated or if the data has no natural grouping.

DBSCAN

DBSCAN identifies clusters as dense regions separated by sparse regions. Unlike K-means, it:

- Does not require specifying $k$ in advance

- Can find clusters of arbitrary shape

- Identifies noise points (outliers) explicitly

Parameters: $\varepsilon$ (neighborhood radius) and MinPts (minimum number of points to form a dense region). For WFM agent clustering, MinPts = 5-10 is typical. $\varepsilon$ can be selected from the k-distance plot: plot each point's distance to its k-th nearest neighbor, sorted in ascending order, and the elbow suggests $\varepsilon$.

DBSCAN is valuable when the data contains agents who do not fit any natural cluster — these outliers may need individual management rather than segment-based strategies.
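A minimal sketch of the k-distance procedure followed by DBSCAN, using scikit-learn on hypothetical standardized data: two dense groups of agents plus three isolated ones. The rule used here to read $\varepsilon$ off the curve is an assumption for illustration, not a standard rule. It takes the value just before the largest jump in the sorted k-distances, plus a 5% margin. With real data the elbow is usually inspected visually.

```python
import numpy as np
from sklearn.cluster import DBSCAN
from sklearn.neighbors import NearestNeighbors

rng = np.random.default_rng(0)
# Hypothetical standardized agent features: two dense groups plus three isolated agents
X = np.vstack([
    rng.normal([0.0, 0.0], 0.3, size=(60, 2)),
    rng.normal([3.0, 3.0], 0.3, size=(60, 2)),
    [[8.0, -4.0], [-6.0, 7.0], [9.0, 9.0]],
])

min_pts = 5
# k-distance: distance to the min_pts-th nearest neighbor, where querying the training
# points returns each point itself first (scikit-learn's min_samples also counts the point)
dist, _ = NearestNeighbors(n_neighbors=min_pts).fit(X).kneighbors(X)
k_dist = np.sort(dist[:, -1])

# Assumed elbow heuristic: value just before the largest jump in the sorted curve, plus 5%
eps = 1.05 * k_dist[np.argmax(np.diff(k_dist))]

labels = DBSCAN(eps=eps, min_samples=min_pts).fit_predict(X)
n_clusters = len(set(labels)) - (1 if -1 in labels else 0)
print(f"eps={eps:.2f}, clusters={n_clusters}, noise agents={np.sum(labels == -1)}")
```

Points labeled `-1` are DBSCAN's noise points: in WFM terms, the agents who fit no segment and are candidates for individual management.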

Gaussian Mixture Models (GMM)

GMMs model the data as a mixture of Gaussian distributions. Each observation has a probability of belonging to each cluster (soft assignment), unlike K-means (hard assignment).

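$$p(\mathbf{x}) = \sum_{i=1}^{k} \pi_i \, \mathcal{N}(\mathbf{x} \mid \boldsymbol{\mu}_i, \boldsymbol{\Sigma}_i)$$

where $\pi_i$ is the mixing weight, $\boldsymbol{\mu}_i$ is the mean, and $\boldsymbol{\Sigma}_i$ is the covariance matrix of component $i$.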

Advantages for WFM:

- Soft assignments: "Agent A is 70% likely Cluster 1, 30% Cluster 2" — useful for agents on the boundary between performance tiers.

- Each cluster can have its own shape (covariance structure), unlike K-means, which assumes spherical clusters.

- Model selection via BIC (Bayesian Information Criterion) provides a principled method for choosing $k$.
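BIC-based selection of $k$ can be sketched with scikit-learn's `GaussianMixture.bic`. The data are hypothetical: three elongated (correlated) groups, the kind of non-spherical structure a full-covariance GMM handles more naturally than K-means. The range of $k$ tried and the `n_init=3` restarts are arbitrary choices for the sketch.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

rng = np.random.default_rng(1)
# Hypothetical standardized data: three elongated (correlated) groups of 150 points each
cov = 0.2 * np.array([[1.0, 0.8], [0.8, 1.0]])
means = [[0.0, 0.0], [4.0, 0.0], [2.0, 4.0]]
X = np.vstack([rng.multivariate_normal(m, cov, size=150) for m in means])

# Fit k = 1..6 full-covariance mixtures and record BIC (lower is better)
bics = {}
for k in range(1, 7):
    gmm = GaussianMixture(n_components=k, covariance_type='full', n_init=3, random_state=0)
    bics[k] = gmm.fit(X).bic(X)
best_k = min(bics, key=bics.get)

# Refit at the chosen k and read the soft assignments
gmm = GaussianMixture(n_components=best_k, covariance_type='full', n_init=3, random_state=0).fit(X)
proba = gmm.predict_proba(X)                 # one row per observation, one column per component
uncertain = int(np.sum(proba.max(axis=1) < 0.6))  # boundary cases, 0.6 cutoff as in the Implementation code
print(best_k, {k: round(v, 1) for k, v in bics.items()}, uncertain)
```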

WFM Applications

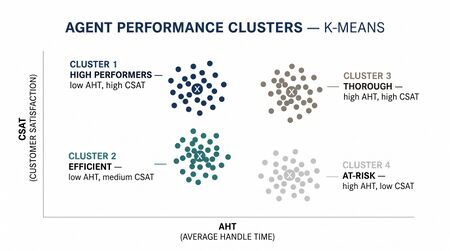

Agent Performance Clustering

Features: AHT, quality score, schedule adherence, FCR, calls per hour, after-call work time.

Typical results (4-cluster solution):

| Cluster | Profile | Size | Coaching Strategy |
|---|---|---|---|
| 1: Speed Stars | Low AHT, moderate quality, high volume | ~25% | Quality enrichment coaching — slow down for thoroughness |
| 2: Quality Champions | High quality, high FCR, moderate AHT | ~30% | Efficiency coaching — maintain quality while reducing handle time |
| 3: Steady Performers | Near-average on all metrics | ~30% | Targeted improvement — identify one metric for focused development |
| 4: Struggling | High AHT, low quality, low adherence | ~15% | Intensive coaching or performance management — address root causes |

This segmentation replaces generic coaching with differentiated interventions. A Speed Star does not need the same coaching as a Struggling agent. The coaching investment per agent is better allocated when the segment is known.

Demand Pattern Clustering

Features: Represent each day as a vector of 96 interval-level volumes (15-minute intervals over 24 hours), normalized to percentage of daily total.

Typical results (5-cluster solution):

- Cluster A — Standard weekday: Ramp from 8 AM, peak at 10-11 AM, lunch dip, afternoon plateau, decline from 4 PM. Monday-Thursday dominant.

- Cluster B — Monday surge: Similar shape to A but with 15-20% higher morning peak. Monday-specific.

- Cluster C — Friday light: Lower overall volume, flatter shape, earlier decline. Friday dominant.

- Cluster D — Weekend: Later start, lower peak, more uniform distribution. Saturday/Sunday.

- Cluster E — Anomalous: Holidays, outage days, marketing event days — no consistent pattern.

Application: Fit a separate forecast model per cluster. The Monday model learns Monday's shape; the Friday model learns Friday's. This produces better forecasts than a single model that averages across patterns. See Time Series Feature Engineering for WFM for related feature construction.
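As a minimal sketch of the per-cluster idea, the simplest possible "model" for a cluster is its average intraday shape, scaled by a daily volume forecast produced elsewhere. The helper names, the toy 4-interval shapes (standing in for 96 intervals), and the daily total of 1,000 contacts are hypothetical. A production setup would fit a proper forecasting model per cluster, and would also need a rule (for example day of week or a holiday flag) to decide which cluster a future day belongs to.

```python
import numpy as np

def cluster_profiles(shares: np.ndarray, labels: np.ndarray) -> dict:
    """Mean intraday shape per cluster; rows of `shares` are days normalized to sum to 1."""
    return {int(c): shares[labels == c].mean(axis=0) for c in np.unique(labels)}

def interval_forecast(daily_total: float, profile: np.ndarray) -> np.ndarray:
    """Distribute a daily volume forecast across intervals using a cluster's shape."""
    return daily_total * profile

# Toy example with 4 intervals instead of 96: two front-loaded days and two flatter days
shares = np.array([
    [0.40, 0.30, 0.20, 0.10],
    [0.44, 0.28, 0.18, 0.10],
    [0.20, 0.30, 0.30, 0.20],
    [0.22, 0.28, 0.30, 0.20],
])
labels = np.array([1, 1, 2, 2])

profiles = cluster_profiles(shares, labels)
print(interval_forecast(1000.0, profiles[1]))  # front-loaded shape
print(interval_forecast(1000.0, profiles[2]))  # flatter shape
```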

Schedule Preference Segmentation

Features: Preferred start time, tolerance for split shifts, weekend availability, overtime willingness, shift swap frequency, time-off request patterns.

Typical results (3-cluster solution):

- Early birds: Prefer 6-7 AM start, available weekends, low swap frequency. Often parents with school-age children.

- Flexible midday: Accept 9 AM-1 PM start range, moderate weekend availability, high swap activity. Often younger agents valuing social schedule flexibility.

- Late shift preferred: Prefer 2-6 PM start, low weekend availability, high overtime willingness. Often agents with secondary employment or education commitments.

Application: Design shift templates that align with each preference cluster. Offering preferred schedules to each segment improves satisfaction and reduces voluntary attrition (see Survival Analysis for Workforce Attrition for measuring this effect).

Queue Family Grouping

Features: Volume correlation between queues, AHT similarity, skill overlap, seasonal pattern similarity.

Application: Group queues into families for Hierarchical Forecasting. Forecast at the family level, then disaggregate to individual queues. This improves accuracy when individual queues have sparse data but the family has sufficient volume for reliable patterns.
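The "volume correlation between queues" feature can be turned into a distance and fed to hierarchical clustering. This minimal sketch assumes six hypothetical queues whose daily volumes are driven by two synthetic demand signals. It uses correlation distance 1 - r and average linkage; Ward's method is avoided because it assumes Euclidean distances. Only the volume-correlation feature is shown. AHT similarity or skill overlap would need to be combined into the distance separately.

```python
import numpy as np
from scipy.cluster.hierarchy import linkage, fcluster
from scipy.spatial.distance import squareform

rng = np.random.default_rng(7)
days = np.arange(120)
# Two hypothetical underlying demand signals (weekly cycle vs. roughly monthly cycle)
signal_a = 100 + 20 * np.sin(2 * np.pi * days / 7)
signal_b = 80 + 15 * np.sin(2 * np.pi * days / 30)

# Six hypothetical queues: 0-2 follow signal_a, 3-5 follow signal_b, each with its own noise
volumes = np.column_stack(
    [signal_a + rng.normal(0, 3, days.size) for _ in range(3)]
    + [signal_b + rng.normal(0, 3, days.size) for _ in range(3)]
)

corr = np.corrcoef(volumes, rowvar=False)  # 6 x 6 queue-by-queue Pearson correlation
dist = 1.0 - corr                          # correlation distance: 0 means perfectly co-moving
np.fill_diagonal(dist, 0.0)

Z = linkage(squareform(dist, checks=False), method='average')
families = fcluster(Z, t=2, criterion='maxclust')  # cut the tree into two queue families
print(families)
```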

Worked Example

A 500-agent technical support center segments agents for differentiated coaching using K-means on performance metrics.

Data preparation: Extract 90 days of agent-level metrics. For each agent, compute:

- Mean AHT (seconds)

- Mean quality score (1-5 scale)

- Schedule adherence (%)

- First call resolution rate (%)

- Calls handled per scheduled hour

- Mean after-call work time (seconds)

Exclude agents with <30 days of data (insufficient for stable metrics). Final dataset: 468 agents, 6 features.

Standardization: Apply Z-score standardization to all features. Verify no single feature dominates the variance.

Choosing k:

- Elbow method: inflection at k=4 (within-cluster SS drops steeply from k=1 to k=4, then flattens).

- Silhouette score: k=4 scores 0.42, k=3 scores 0.39, k=5 scores 0.38. k=4 scores highest.

- Business input: coaching team confirms they can differentiate 4 strategies. If they could only handle 3, k=3 is acceptable.

Results:

| Metric | Cluster 1 (n=112) | Cluster 2 (n=148) | Cluster 3 (n=136) | Cluster 4 (n=72) |
|---|---|---|---|---|
| AHT (sec) | 348 | 442 | 405 | 518 |
| Quality | 3.7 | 4.3 | 3.9 | 3.2 |
| Adherence (%) | 92.1 | 90.8 | 91.5 | 83.4 |
| FCR (%) | 68 | 82 | 74 | 61 |
| Calls/hour | 7.2 | 5.8 | 6.1 | 4.6 |
| ACW (sec) | 32 | 58 | 44 | 72 |

Cluster profiles:

- Cluster 1 — Efficient Resolvers: Fast handling, moderate quality, high throughput. Risk: rushing customers. Coaching: quality depth, empathy, thoroughness verification.

- Cluster 2 — Quality Anchors: Highest quality and FCR, longer handle times. The center's backbone. Coaching: efficiency techniques without sacrificing quality. Consider as mentors for Cluster 4.

- Cluster 3 — Developing Performers: Near-average on all metrics. Largest growth potential. Coaching: identify each agent's weakest metric for targeted improvement.

- Cluster 4 — At-Risk: Longest handle times, lowest quality, worst adherence. Root cause analysis needed: are they undertrained, assigned to the wrong queue, or disengaged? Coaching: intensive support plan with weekly check-ins.

Validation:

- Silhouette plot: most agents have positive silhouette scores. ~12% of agents (mostly on cluster boundaries) have scores near zero — these could reasonably belong to either adjacent cluster. Use GMM for probabilistic assignment if boundary agents need special handling.

- Temporal stability: re-run clustering on the subsequent 90 days. 78% of agents remain in the same cluster. 22% shift — mostly between adjacent clusters (Cluster 3 ↔ Cluster 1 or 2). This indicates reasonable stability.

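- Outcome validation: after 6 months of differentiated coaching, overall quality improved 0.2 points and AHT decreased 12 seconds. Cluster 4 showed the largest improvement (quality +0.4, AHT −35 seconds). This is consistent with targeted intervention working, although without a comparison group part of the gain in the lowest-performing cluster may reflect regression to the mean.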
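Because K-means label IDs are arbitrary, "remaining in the same cluster" across two runs can only be measured after the labels of one run are matched to the other. This minimal sketch assumes the same agents appear in both periods in the same order, with labels 0..k-1. It matches labels by maximizing agreement in the cross-tabulation with SciPy's `linear_sum_assignment`. The helper name and toy labels are hypothetical.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment

def cluster_stability(labels_t1, labels_t2, k: int):
    """Share of agents whose cluster is unchanged after aligning run-2 label IDs to run 1.
    Assumes both arrays cover the same agents in the same order, with labels 0..k-1."""
    labels_t1 = np.asarray(labels_t1)
    labels_t2 = np.asarray(labels_t2)
    table = np.zeros((k, k), dtype=int)
    np.add.at(table, (labels_t1, labels_t2), 1)              # run-1 cluster x run-2 cluster counts
    rows, cols = linear_sum_assignment(-table)                # matching that maximizes agreement
    mapping = {int(c): int(r) for r, c in zip(rows, cols)}    # run-2 ID -> run-1 ID
    aligned = np.array([mapping[int(b)] for b in labels_t2])
    return float(np.mean(aligned == labels_t1)), aligned

# Toy example: run 2 permuted the IDs, and the agent at index 5 genuinely moved clusters
run1 = [0, 0, 1, 1, 2, 2, 3, 3]
run2 = [3, 3, 0, 0, 1, 2, 2, 2]
share, aligned = cluster_stability(run1, run2, k=4)
print(share, aligned)
```

An alternative design keeps the first period's scaler and fitted `KMeans` model and calls `predict` on the new period. That avoids label matching, but it measures drift against a fixed reference rather than against a fresh clustering.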

Implementation

```python
import pandas as pd
import matplotlib.pyplot as plt
from sklearn.cluster import KMeans
from sklearn.mixture import GaussianMixture
from sklearn.preprocessing import StandardScaler, RobustScaler
from sklearn.metrics import silhouette_score
from scipy.cluster.hierarchy import dendrogram, linkage, fcluster

# Load agent performance data (one row per agent)
agents = pd.read_csv('agent_metrics.csv')
features = ['aht', 'quality_score', 'adherence', 'fcr', 'calls_per_hour', 'acw']
X = agents[features].values

# Standardize. RobustScaler (median/IQR) is robust to outliers;
# use StandardScaler() instead to reproduce the Z-score setup of the worked example.
scaler = RobustScaler()
X_scaled = scaler.fit_transform(X)

# --- Choosing k: elbow (inertia) and silhouette ---
inertias = []
sil_scores = []
K_range = range(2, 9)
for k in K_range:
    km = KMeans(n_clusters=k, n_init=10, random_state=42)
    labels = km.fit_predict(X_scaled)
    inertias.append(km.inertia_)  # within-cluster sum of squares
    sil_scores.append(silhouette_score(X_scaled, labels))

fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(12, 4))
ax1.plot(K_range, inertias, 'bo-')
ax1.set_xlabel('k'); ax1.set_ylabel('Inertia'); ax1.set_title('Elbow Method')
ax2.plot(K_range, sil_scores, 'ro-')
ax2.set_xlabel('k'); ax2.set_ylabel('Silhouette Score'); ax2.set_title('Silhouette Score')
plt.tight_layout()
plt.savefig('cluster_selection.png', dpi=150)

# --- Final K-Means ---
k_final = 4
km = KMeans(n_clusters=k_final, n_init=20, random_state=42)
agents['cluster'] = km.fit_predict(X_scaled)

# Cluster profiles in original units, plus cluster sizes
profile = agents.groupby('cluster')[features].mean().round(1)
print(profile)
print(agents['cluster'].value_counts().sort_index())

# --- Gaussian Mixture Model (soft assignments) ---
# Note: GMM component IDs are not aligned with the K-means cluster IDs above.
gmm = GaussianMixture(n_components=k_final, covariance_type='full', random_state=42)
gmm.fit(X_scaled)
agents['gmm_cluster'] = gmm.predict(X_scaled)
agents['gmm_proba_max'] = gmm.predict_proba(X_scaled).max(axis=1)

# Agents with low max probability are boundary cases
boundary_agents = agents[agents['gmm_proba_max'] < 0.6]
print(f"Boundary agents (uncertain cluster): {len(boundary_agents)}")

# --- Demand Pattern Clustering ---
# Hypothetical input file with columns: date, interval, volume (one row per date and interval)
interval_df = pd.read_csv('interval_volumes.csv')

# Each row = one day, columns = interval volumes; missing intervals count as zero
daily_patterns = interval_df.pivot_table(
    index='date', columns='interval', values='volume', fill_value=0
)
# Normalize each day to its share of the daily total (drop zero-volume days to avoid division by zero)
daily_patterns = daily_patterns[daily_patterns.sum(axis=1) > 0]
daily_patterns = daily_patterns.div(daily_patterns.sum(axis=1), axis=0)

Z = linkage(daily_patterns.values, method='ward')
fig, ax = plt.subplots(figsize=(14, 6))
dendrogram(Z, truncate_mode='lastp', p=30, ax=ax)
ax.set_title('Demand Pattern Dendrogram')
plt.savefig('demand_dendrogram.png', dpi=150)

# Cut at 5 clusters; keep the labels separate from the interval columns
day_clusters = pd.Series(
    fcluster(Z, t=5, criterion='maxclust'), index=daily_patterns.index, name='cluster'
)
```

Key libraries:

- scikit-learn — K-means, DBSCAN, GMM, silhouette analysis, preprocessing

- scipy.cluster.hierarchy — hierarchical clustering and dendrograms

- tslearn — time series clustering with DTW distance

- yellowbrick — visualization for cluster evaluation (elbow, silhouette)

Maturity Model Position

| Level | Capability | Clustering Application |
|---|---|---|
| Level 1 — Reactive | No segmentation | All agents managed identically, single forecast model for all days |
| Level 2 — Managed | Manual grouping | Performance tiers by single metric (e.g., AHT quartiles) |
| Level 3 — Proactive | Basic clustering | K-means on 2-3 metrics, queue grouping by volume |
| Level 4 — Advanced | Multivariate segmentation | Multi-metric clustering with validation, demand pattern families, preference segmentation |
| Level 5 — Optimized | Dynamic segmentation | Clusters updated continuously, personalized management per segment, automated coaching routing |

See Also

- Survival Analysis for Workforce Attrition — analyzing attrition differences across performance clusters

- Time Series Feature Engineering for WFM — feature construction for demand pattern clustering

- Hierarchical Forecasting — using queue clusters as hierarchy levels

- Scikit-learn for WFM — implementation of clustering algorithms

- Schedule Optimization — preference-based schedule design using preference segments

References

- Jain, A.K. (2010). "Data clustering: 50 years beyond K-means." Pattern Recognition Letters, 31(8), 651-666. State of the field overview.

- Rousseeuw, P.J. (1987). "Silhouettes: A graphical aid to the interpretation and validation of cluster analysis." Journal of Computational and Applied Mathematics, 20, 53-65. Silhouette score methodology.

- Ester, M., Kriegel, H.P., Sander, J., & Xu, X. (1996). "A density-based algorithm for discovering clusters in large spatial databases with noise." Proceedings of KDD, 226-231. DBSCAN.

- McLachlan, G.J. & Peel, D. (2000). Finite Mixture Models. Wiley. Theory of Gaussian mixture models.

- Aghabozorgi, S., Shirkhorshidi, A.S., & Wah, T.Y. (2015). "Time-series clustering — A decade review." Information Systems, 53, 16-38. Review of time series clustering methods including DTW.