Time Series Feature Engineering for WFM

Time Series Feature Engineering for WFM is the practice of constructing informative input variables (features) from raw temporal data to improve forecast model performance. The difference between a mediocre WFM forecast and a strong one is often not the model — it is the features. A well-engineered feature set captures the calendar rhythms, external events, cross-queue dynamics, and temporal patterns that drive contact center demand. This page covers the feature categories, encoding methods, selection techniques, and practical pitfalls of feature engineering for WFM forecasting.

Overview

Classical WFM forecasting relies on time series decomposition: extract trend, seasonality, and residual. Models like ARIMA, ETS, and Holt-Winters learn these components implicitly from the target series. This works well when the demand pattern is stable and driven primarily by internal rhythms.

Modern ML forecasting models — XGBoost, LightGBM, random forests, neural networks — do not inherently understand time. They see a tabular dataset where each row is a prediction target (volume at a specific interval) and columns are features. The model learns a function from features to target. If the features do not encode the temporal structure, the model cannot learn it.

This creates both an obligation and an opportunity. The obligation: you must explicitly construct features that capture calendar effects, lagged values, and external drivers. The opportunity: you can encode information that classical models cannot incorporate — marketing campaigns, system outages, weather events, cross-queue relationships — as features that the model exploits.

The payoff can be substantial. As an illustrative range, a baseline ML model with only day-of-week and hour-of-day features might achieve 10-12% MAPE. Adding lag features, rolling statistics, and calendar features typically brings this to 7-9%. Adding external regressors (marketing events, known outages) can push it to 5-7%. For most teams, feature engineering is the highest-leverage activity in WFM ML forecasting.

Mathematical Foundation

The Supervised Learning Frame

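For interval-level volume forecasting, the prediction problem is:

$$\hat{y}_t = f\left(\mathbf{x}_t^{\text{lag}},\ \mathbf{x}_t^{\text{cal}},\ \mathbf{x}_t^{\text{ext}}\right)$$

where:

- $\hat{y}_t$ is the predicted volume at interval $t$

- $\mathbf{x}_t^{\text{lag}}$ are lagged volume values

- $\mathbf{x}_t^{\text{cal}}$ are calendar features (day-of-week, hour, holiday)

- $\mathbf{x}_t^{\text{ext}}$ are external features (marketing flag, weather, website traffic)

- $f$ is the model (gradient boosting, neural network, etc.)

The feature engineering task is constructing the right $\mathbf{x}_t^{\text{lag}}$, $\mathbf{x}_t^{\text{cal}}$, and $\mathbf{x}_t^{\text{ext}}$ vectors.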

Information Content of Features

A feature is useful if it reduces uncertainty about the target. Formally, feature $X$ is informative about target $Y$ if the mutual information is positive:

$$I(X; Y) = H(Y) - H(Y \mid X) > 0$$

where $H(Y)$ is the entropy of the target and $H(Y \mid X)$ is the conditional entropy given the feature. In practice, we approximate this through feature importance scores (permutation importance, SHAP values) and forecast accuracy with vs. without the feature.
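The idea of ranking features by how much they tell us about the target can be sketched with scikit-learn's `mutual_info_regression`, which estimates mutual information from samples. The data below is synthetic and the column names (`lag_1w`, `noise`) are illustrative assumptions, not part of any real dataset.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_regression

rng = np.random.default_rng(0)
n = 2000

# Synthetic data: volume depends on last week's volume, not on the noise column
lag_1w = rng.normal(100, 20, n)
noise = rng.normal(0, 1, n)
volume = lag_1w + rng.normal(0, 5, n)

X = np.column_stack([lag_1w, noise])
# Nearest-neighbour estimate of mutual information (in nats) per column
mi = mutual_info_regression(X, volume, random_state=0)
mi_by_feature = dict(zip(["lag_1w", "noise"], mi))
print(mi_by_feature)
```

The informative lag column should score well above the noise column, whose estimate should sit near zero. Estimates of this kind are noisy on small samples, so they are best treated as a screening tool alongside importance scores.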

Method

Category 1: Calendar Features

Calendar features encode the deterministic temporal structure.

Basic calendar:

- Day of week (0-6 or one-hot encoded)

- Hour of day (0-23)

- Month (1-12)

- Interval within day (1-96 for 15-minute intervals)

- Quarter (1-4)

- Week of year (1-53; ISO years can have 53 weeks)

Extended calendar:

- Day of month (1-31) — captures pay-period effects

- Is weekend (binary)

- Is month-end (last 3 business days)

- Is month-start (first 3 business days)

- Business day of month (1st, 2nd, ... business day)

Holiday features:

- Is holiday (binary)

- Days before holiday (1, 2, 3, ... or 0)

- Days after holiday (1, 2, 3, ... or 0)

- Holiday type (national, regional, company)

- Is bridge day (working day between holiday and weekend)

Holiday effects are asymmetric — the day before Thanksgiving drives higher volume than the day after. Encoding "days before" and "days after" as separate features lets the model learn this asymmetry.
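A minimal sketch of the separate "days before" and "days after" encoding, assuming daily dates and a known holiday list. The function name `holiday_distance_features` and the `max_days` cutoff are hypothetical choices for illustration.

```python
import pandas as pd

def holiday_distance_features(dates, holidays, max_days=3):
    """Build is_holiday, days_before_holiday and days_after_holiday.

    A distance of 0 means the date is not within `max_days` of a holiday
    on that side (the holiday itself also gets 0 for both distances).
    """
    dates = pd.DatetimeIndex(pd.to_datetime(dates)).normalize()
    holidays = pd.DatetimeIndex(pd.to_datetime(holidays)).normalize()

    out = pd.DataFrame(index=dates)
    out["is_holiday"] = dates.isin(holidays).astype(int)
    out["days_before_holiday"] = 0
    out["days_after_holiday"] = 0

    # Go from the largest offset down so the nearest holiday wins
    for k in range(max_days, 0, -1):
        before = dates.isin(holidays - pd.Timedelta(days=k))
        after = dates.isin(holidays + pd.Timedelta(days=k))
        out.loc[before, "days_before_holiday"] = k
        out.loc[after, "days_after_holiday"] = k
    return out

# Example: the week around US Thanksgiving 2024 (28 November)
feats = holiday_distance_features(
    pd.date_range("2024-11-25", "2024-12-01"), ["2024-11-28"]
)
print(feats)
```

Because the two distances are separate columns, a tree model can learn a different effect for the day before a holiday than for the day after.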

Pay-period features:

- Is payday (binary — if known)

- Days since payday (0, 1, 2, ...)

- Days until next payday

For financial services and utilities contact centers, payday effects can drive 10-15% volume swings.
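The pay-period features can be sketched as follows, assuming the payday calendar is known in advance. The function name `payday_features` and the biweekly example dates are hypothetical.

```python
import numpy as np
import pandas as pd

def payday_features(dates, paydays):
    """Build is_payday, days_since_payday and days_until_payday per date."""
    dates = pd.DatetimeIndex(pd.to_datetime(dates)).normalize()
    paydays = pd.DatetimeIndex(pd.to_datetime(paydays)).normalize().sort_values()
    d = dates.values.astype("datetime64[D]")
    p = paydays.values.astype("datetime64[D]")
    one_day = np.timedelta64(1, "D")

    # Most recent payday on or before each date, and the next one after it
    prev_idx = np.searchsorted(p, d, side="right") - 1
    next_idx = prev_idx + 1
    has_prev = prev_idx >= 0
    has_next = next_idx < len(p)

    # NaN where no earlier / later payday exists in the supplied calendar
    days_since = np.full(len(d), np.nan)
    days_until = np.full(len(d), np.nan)
    days_since[has_prev] = (d[has_prev] - p[prev_idx[has_prev]]) / one_day
    days_until[has_next] = (p[next_idx[has_next]] - d[has_next]) / one_day

    return pd.DataFrame({
        "is_payday": dates.isin(paydays).astype(int),
        "days_since_payday": days_since,
        "days_until_payday": days_until,
    }, index=dates)

# Example with a hypothetical biweekly Friday payroll
pay_feats = payday_features(
    pd.date_range("2024-03-01", "2024-03-20"),
    ["2024-03-01", "2024-03-15", "2024-03-29"],
)
print(pay_feats.head())
```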

Category 2: Lag Features

Lag features capture autocorrelation — the relationship between current volume and past volume.

Same-interval lags:

- Volume at same interval, 1 week ago: $y_{t-672}$ (for 15-min intervals, 672 intervals per week)

- Volume at same interval, 2 weeks ago

- Volume at same interval, 4 weeks ago

- Volume at same interval, 1 year ago (for annual seasonality)

Same-interval, same-day-of-week lags are the single most predictive feature category for WFM forecasting. Monday 10:00 AM volume is strongly correlated with last Monday 10:00 AM volume.

Daily lags:

- Total daily volume, 1 day ago

- Total daily volume, 1 week ago

- Total daily volume, 1 year ago

Daily lags capture day-level trends that interval lags may miss.

Adjacent interval lags:

- Volume at previous interval: $y_{t-1}$

- Volume at 2 intervals ago: $y_{t-2}$

These capture intraday momentum — if 10:00 AM is running hot, 10:15 AM is likely hot too. Only available for intraday reforecasting (not day-ahead forecasts).
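Which lags are usable depends on the forecast horizon: a lag is only available if its value has already been observed when the forecast is produced. A small sketch of that rule, assuming horizons and lags are both counted in 15-minute intervals and that a day-ahead forecast is issued at the end of the previous day (the helper `usable_lags` is hypothetical):

```python
INTERVALS_PER_DAY = 96            # 15-minute intervals
INTERVALS_PER_WEEK = 7 * 96       # 672

def usable_lags(candidate_lags, horizon):
    """Keep only lags whose values are already observed at forecast time.

    A lag of k intervals relative to the target is known only if k >= horizon.
    """
    return [k for k in candidate_lags if k >= horizon]

candidates = [1, 2, INTERVALS_PER_DAY, INTERVALS_PER_WEEK, 2 * INTERVALS_PER_WEEK]

intraday = usable_lags(candidates, horizon=1)                    # reforecast next interval
day_ahead = usable_lags(candidates, horizon=INTERVALS_PER_DAY)   # up to 96 intervals ahead
week_ahead = usable_lags(candidates, horizon=INTERVALS_PER_WEEK)

print(intraday)    # [1, 2, 96, 672, 1344]
print(day_ahead)   # [96, 672, 1344]
print(week_ahead)  # [672, 1344]
```

Training a model with lags that will not exist at prediction time is a common source of optimistic backtest results, so the same filter should be applied in both training and scoring.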

Category 3: Rolling Statistics

Rolling features smooth out noise and capture local trends.

Rolling means:

- 4-week rolling mean of same-interval volume

- 4-week rolling mean of daily volume

- 13-week rolling mean (quarterly trend)

Rolling standard deviations:

- 4-week rolling SD of same-interval volume — captures demand volatility

- Coefficient of variation (rolling SD / rolling mean) — useful as a model input for prediction interval width

Rolling quantiles:

- 4-week rolling 25th and 75th percentile of same-interval volume

- These capture the distributional range, not just the center

Trend indicators:

- Difference between 4-week and 13-week rolling means — positive indicates growth, negative indicates decline

- Percentage change from same interval 4 weeks ago
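The rolling quantiles, coefficient of variation, and percentage change listed above can be sketched with pandas on a single same-interval series (one value per week). The eight weekly values are made up for illustration, and the percentage change here compares the most recent observed week with the value four weeks before it, which is one possible reading of that feature.

```python
import pandas as pd

# Hypothetical history: Monday 10:00 volume for 8 consecutive weeks
s = pd.Series([100, 110, 90, 120, 130, 125, 140, 150], dtype=float)

# shift(1) keeps the current week's value out of its own features
hist = s.shift(1)

feats = pd.DataFrame({
    "roll_mean_4w": hist.rolling(4).mean(),
    "roll_std_4w": hist.rolling(4).std(),
    "roll_q25_4w": hist.rolling(4).quantile(0.25),
    "roll_q75_4w": hist.rolling(4).quantile(0.75),
})
# Coefficient of variation: volatility relative to level
feats["roll_cv_4w"] = feats["roll_std_4w"] / feats["roll_mean_4w"]
# Change of the latest observed week versus four weeks before it
feats["pct_change_4w"] = hist / hist.shift(4) - 1

print(feats.round(3))
```

For week index 4 the window covers the first four weeks (100, 110, 90, 120), giving a rolling mean of 105 and quartiles of 97.5 and 112.5.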

Category 4: External Regressors

External features encode information outside the contact volume time series.

Marketing and business events:

- Marketing campaign active (binary per campaign type)

- Days since campaign launch (captures decay of campaign-driven volume)

- Campaign channel (email, social, TV — different channels drive different contact patterns)

- Product launch date flags

- Known system outage flags

Digital signals:

- Website traffic (hourly or daily)

- App downloads (daily)

- Social media mention count (daily or hourly)

- Support page views (hourly — leading indicator of contact volume)

The relationship is typically: website visit → FAQ page → support page → contact. Support page views lead contact volume by 15-60 minutes depending on the channel.
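One way to estimate how far a digital signal leads contact volume is to correlate the target with the signal shifted by a range of lags and pick the strongest. The sketch below uses synthetic data in which contacts follow page views by exactly 3 intervals; the helper name `best_lead` and the 10% conversion rate are assumptions for illustration.

```python
import numpy as np
import pandas as pd

def best_lead(leading, target, max_lag=8):
    """Return (lag, correlation) for the shift of `leading` that best matches `target`."""
    corrs = {k: target.corr(leading.shift(k)) for k in range(max_lag + 1)}
    best = max(corrs, key=corrs.get)
    return best, corrs[best]

rng = np.random.default_rng(1)
page_views = pd.Series(rng.poisson(200, 500).astype(float))
# Synthetic contacts: 10% of page views become contacts 3 intervals later
contacts = 0.1 * page_views.shift(3) + rng.normal(0, 1, 500)

lag, corr = best_lead(page_views, contacts)
print(lag, round(corr, 2))

# The resulting feature is the signal shifted by the estimated lead
support_views_lagged = page_views.shift(lag)
```

With 15-minute intervals, a lag of 3 corresponds to 45 minutes. On real data the lead time should be estimated on training data only and re-checked periodically, since it can drift by channel.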

Weather:

- Temperature, precipitation for insurance, utilities, travel contact centers

- Severe weather alerts (binary)

Economic indicators:

- Unemployment rate (monthly — for financial services)

- Consumer confidence index (monthly)

External regressors are high-value but high-maintenance. Each requires a data pipeline, and the feature is only useful for forecasting if future values are known or predictable. Marketing campaigns are known in advance (use them). Weather forecasts are available 7 days out (useful for short-horizon). Social media mentions are only available in real time (useful for intraday reforecasting, not day-ahead).

Category 5: Cross-Queue Features

Contact centers with multiple queues often exhibit cross-queue dynamics.

Lagged cross-queue volume:

- When technical support queue spikes, billing queue follows 30-60 minutes later (customers call back about charges related to the technical issue).

- When IVR self-service completion rate drops, agent queue volume increases 15-30 minutes later.

Queue proportion features:

- Proportion of total volume going to each queue — shifts in this proportion indicate routing changes or demand composition changes.

Cross-queue correlation:

- Compute rolling correlation between queues. High-correlation queue pairs may share demand drivers that can be jointly modeled.
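A rough sketch of the rolling correlation and a lagged cross-queue feature, assuming a wide DataFrame with one column per queue and one row per 15-minute interval. The queue names and the shared demand driver are synthetic.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(2)
n = 400
shared = rng.normal(0, 10, n)  # hypothetical demand driver shared by two queues

wide = pd.DataFrame({
    "tech_support": 100 + shared + rng.normal(0, 3, n),
    "billing": 80 + shared + rng.normal(0, 3, n),
    "sales": 60 + rng.normal(0, 10, n),
})

window = 96  # one day of 15-minute intervals
corr_tech_billing = wide["tech_support"].rolling(window).corr(wide["billing"])
corr_tech_sales = wide["tech_support"].rolling(window).corr(wide["sales"])

# Lagged cross-queue feature: tech support volume 2 intervals (30 min) earlier
wide["tech_support_lag2"] = wide["tech_support"].shift(2)

print(round(corr_tech_billing.dropna().mean(), 2),
      round(corr_tech_sales.dropna().mean(), 2))
```

If the rolling correlation itself is used as a model input, it should be shifted by at least the forecast horizon so that it only reflects intervals already observed.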

Feature Encoding

Cyclical encoding for periodic features:

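Hour-of-day and day-of-week are cyclical: hour 23 is close to hour 0, Saturday is close to Sunday. One-hot encoding does not capture this proximity. Sine/cosine encoding does:

$$x_{\sin} = \sin\left(\frac{2\pi x}{P}\right), \qquad x_{\cos} = \cos\left(\frac{2\pi x}{P}\right)$$

where $x$ is the raw value and $P$ is the period.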

For hour of day (period = 24):

- Hour 0: sin=0.00, cos=1.00

- Hour 6: sin=1.00, cos=0.00

- Hour 12: sin=0.00, cos=−1.00

- Hour 23: sin=−0.26, cos=0.97 (close to hour 0, as desired)
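The hour-of-day values listed above can be reproduced with a few lines of NumPy. The helpers `cyclical_encode` and `encoded_distance` are hypothetical names used only for this sketch.

```python
import numpy as np

def cyclical_encode(value, period):
    """Map a periodic value onto the unit circle as (sin, cos)."""
    angle = 2 * np.pi * np.asarray(value, dtype=float) / period
    return np.sin(angle), np.cos(angle)

def encoded_distance(a, b, period=24):
    """Euclidean distance between two values in the encoded space."""
    sa, ca = cyclical_encode(a, period)
    sb, cb = cyclical_encode(b, period)
    return float(np.hypot(sa - sb, ca - cb))

hours = np.array([0, 6, 12, 23])
hour_sin, hour_cos = cyclical_encode(hours, period=24)
for h, s, c in zip(hours, hour_sin, hour_cos):
    print(f"hour {h:2d}: sin={s:+.2f}, cos={c:+.2f}")

# Hour 23 is as close to hour 0 as hour 1 is, and far closer than hour 12
print(encoded_distance(23, 0), encoded_distance(0, 1), encoded_distance(0, 12))
```

Both components are needed: the sine alone cannot distinguish hour 0 from hour 12, and the cosine alone cannot distinguish hour 6 from hour 18.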

One-hot encoding for categorical features: Day-of-week, holiday type, campaign type. Creates binary columns.

Target encoding for high-cardinality categoricals: Agent ID, supervisor ID — too many levels for one-hot. Replace with the mean target value for that level (with regularization to prevent overfitting on small groups).
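A minimal sketch of target encoding with regularization, using additive smoothing toward the global mean. The smoothing weight `m`, the function name, and the toy data are assumptions; other regularization schemes (for example out-of-fold encoding) are also common.

```python
import pandas as pd

def smoothed_target_encode(train, cat_col, target_col, m=10.0):
    """Encode each level as a shrunken mean: (n * level_mean + m * global_mean) / (n + m)."""
    global_mean = train[target_col].mean()
    stats = train.groupby(cat_col)[target_col].agg(["mean", "count"])
    encoding = (stats["count"] * stats["mean"] + m * global_mean) / (stats["count"] + m)
    return encoding, global_mean

# Toy training data: level A has 50 observations, level B only 2
train = pd.DataFrame({
    "agent_id": ["A"] * 50 + ["B"] * 2,
    "volume": [100.0] * 50 + [200.0] * 2,
})
encoding, global_mean = smoothed_target_encode(train, "agent_id", "volume", m=10.0)

# Apply to new rows; unseen levels fall back to the global mean
new_rows = pd.Series(["A", "B", "C"])
encoded = new_rows.map(encoding).fillna(global_mean)
print(encoded.round(2).tolist())
```

The small group B is pulled strongly toward the global mean, while the well-supported group A barely moves. The encoding must be fitted on training rows only, otherwise the target leaks into the feature.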

Feature Selection

More features are not always better. Overfitting is the primary risk: the model learns noise in the training set rather than signal. Signs of overfitting:

- Training MAPE much lower than test MAPE (e.g., 3% vs. 9%)

- Performance degrades when adding features that should be irrelevant

- Unstable forecasts that change substantially when retrained on slightly different data

Selection methods:

Permutation importance: Train the model, then randomly shuffle each feature one at a time and measure the accuracy drop. Features that cause large drops when shuffled are important; features with no effect are candidates for removal.

SHAP values: Shapley Additive Explanations quantify each feature's contribution to each prediction. Aggregate SHAP values across observations to rank features by overall importance.

Recursive Feature Elimination (RFE): Train the model, remove the least important feature, retrain, repeat. Stop when removing features degrades accuracy.
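Recursive elimination can be sketched with scikit-learn's `RFECV`, a cross-validated variant that keeps the feature count with the best validation score instead of stopping at the first degradation. The data is synthetic, the feature names are illustrative, and a linear model stands in for the gradient boosting model to keep the example fast.

```python
import numpy as np
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import TimeSeriesSplit

rng = np.random.default_rng(3)
n = 600
feature_names = ["lag_1w", "rolling_mean_4w", "noise_a", "noise_b", "noise_c"]
X = rng.normal(size=(n, len(feature_names)))
# Only the first two columns drive the synthetic target
y = 3.0 * X[:, 0] + 2.0 * X[:, 1] + rng.normal(scale=0.5, size=n)

selector = RFECV(
    estimator=LinearRegression(),
    step=1,                              # drop one feature per round
    cv=TimeSeriesSplit(n_splits=4),      # respect temporal order
    scoring="neg_mean_absolute_error",
)
selector.fit(X, y)

selected = [name for name, keep in zip(feature_names, selector.support_) if keep]
print(selected)
```

The two informative columns should always survive elimination; whether any noise columns are also kept depends on the cross-validation scores, which is why a held-out check of the reduced set remains useful.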

Practical rule: Start with a rich feature set (50-100 features), use SHAP or permutation importance to identify the top 15-25, and verify that the reduced set matches full-set accuracy on held-out data. For most WFM forecasting problems, 15-25 well-chosen features capture 95%+ of achievable accuracy.

WFM Applications

ML Forecasting Model Feature Set

A complete feature set for an XGBoost volume forecast model:

| Category | Features | Count |
|---|---|---|
| Calendar | DOW (one-hot), hour (sin/cos), month (sin/cos), is_weekend, is_holiday, days_before_holiday, days_after_holiday, is_monthend, is_payday | ~15 |
| Lag | Same-interval 1w, 2w, 4w, same-day 1w, 2w, 4w ago | 6 |
| Rolling | 4-week rolling mean, 4-week rolling std, 13-week rolling mean, trend (4w - 13w) | 4 |
| External | Marketing campaign flags (by type), website traffic (daily), app downloads (daily) | 5-8 |
| Cross-queue | Total center volume, queue proportion, correlated queue lag | 3-4 |
| Total | | ~35 |

Prophet Regressor Features

Prophet accepts external regressors as additive or multiplicative effects. Suitable features:

- Marketing campaign binary flags (additive — adds volume)

- Holiday indicators (Prophet handles holidays natively, but custom business events need manual features)

- Known outage flags (additive — typically large positive impact on volume)

- Weather severity index (additive for relevant industries)

See Prophet for regressor configuration.

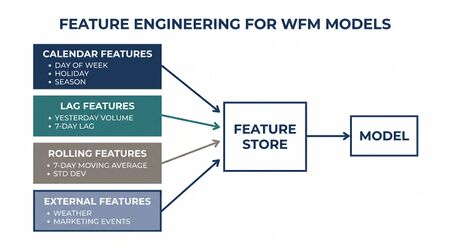

Feature Store for WFM

Mature organizations maintain a feature store — a centralized repository of pre-computed features available for any downstream model. The feature store ensures:

- Consistent feature definitions across models (same "4-week rolling mean" everywhere)

- No data leakage (each feature value is computed only from data that was available at prediction time)

- Reproducibility (features versioned alongside models)

- Efficiency (compute once, use in multiple models)

This is a Level 5 capability — most WFM teams compute features ad hoc in model training scripts.

Worked Example

A 6-queue customer service center with 50,000 contacts per week builds a LightGBM forecast model. Baseline model (day-of-week + hour only) achieves 10.4% MAPE on a 4-week holdout.

Feature engineering rounds:

| Round | Features Added | MAPE | Improvement |
|---|---|---|---|
| Baseline | DOW (one-hot), hour (sin/cos) | 10.4% | n/a |
| + Lags | Same-interval 1w, 2w, 4w lag | 7.8% | −2.6 pp |
| + Rolling | 4-week rolling mean, rolling std | 7.2% | −0.6 pp |
| + Calendar | Holiday flags, days before/after, month-end, payday | 6.9% | −0.3 pp |
| + External | Marketing campaign flags (3 campaign types) | 6.1% | −0.8 pp |
| + Cross-queue | Total center volume, queue proportion | 5.9% | −0.2 pp |

Key findings:

- Lag features provide the largest single improvement (−2.6 pp). Same-interval, same-DOW lag 1 week is the most important individual feature.

- Marketing campaign features provide the second-largest improvement (−0.8 pp). Without them, the model systematically underpredicts during active campaigns.

- Rolling standard deviation is important not for point forecast accuracy but for prediction interval calibration — intervals with high rolling SD should have wider prediction bands.

- Cross-queue features provide modest improvement for individual queue forecasts but substantially improve aggregate center-level accuracy.

SHAP analysis (top 10 features by importance):

1. Same-interval volume, 1 week ago

2. Same-interval volume, 4 weeks ago

3. 4-week rolling mean

4. Hour of day (sin component)

5. Day of week: Monday indicator

6. Marketing campaign: email blast flag

7. Same-interval volume, 2 weeks ago

8. 4-week rolling standard deviation

9. Is holiday flag

10. Day of week: Friday indicator

Overfitting check: Training MAPE 4.2%, test MAPE 5.9%. Gap of 1.7 pp is acceptable (less than 2 pp is a common rule of thumb). No evidence of severe overfitting.

Final model: 28 features, LightGBM with 200 trees, max depth 6, learning rate 0.05. Retrained weekly with the most recent 52 weeks of data. MAPE improved from 10.4% (baseline) to 5.9% (final) — a 43% relative improvement.
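The summary figures in this example follow from simple arithmetic, sketched below together with a MAPE helper. The `mape` function skips zero-volume intervals, which is one common convention and an assumption here; the MAPE values are the ones reported in the worked example.

```python
import numpy as np

def mape(actual, forecast):
    """Mean absolute percentage error in percent, skipping zero-volume intervals."""
    actual = np.asarray(actual, dtype=float)
    forecast = np.asarray(forecast, dtype=float)
    mask = actual != 0
    return float(np.mean(np.abs((actual[mask] - forecast[mask]) / actual[mask])) * 100)

# Figures reported in the worked example
baseline_mape, final_mape, train_mape = 10.4, 5.9, 4.2

relative_improvement = (baseline_mape - final_mape) / baseline_mape * 100
overfit_gap_pp = final_mape - train_mape

print(f"relative improvement: {relative_improvement:.1f}%")     # 43.3%
print(f"train/test gap: {overfit_gap_pp:.1f} pp")               # 1.7 pp
print(f"toy MAPE: {mape([100, 200], [90, 220]):.1f}%")          # 10.0%
```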

Implementation

```python
import lightgbm as lgb
import numpy as np
import pandas as pd
import shap
from sklearn.inspection import permutation_importance
from sklearn.model_selection import TimeSeriesSplit


def build_wfm_features(df, target_col='volume', queue_col='queue_id'):
    """Build feature set for WFM interval-level forecasting.

    Args:
        df: DataFrame with columns: datetime, interval, volume, queue_id.
            Each queue must have one row per 15-minute interval with no
            gaps, because lags are taken as fixed row offsets.
    """
    df = df.copy()
    df['datetime'] = pd.to_datetime(df['datetime'])
    # Order rows by time within each queue so row shifts are time shifts
    df = df.sort_values([queue_col, 'datetime']).reset_index(drop=True)

    # --- Calendar features ---
    df['hour'] = df['datetime'].dt.hour
    df['dow'] = df['datetime'].dt.dayofweek  # Monday=0 ... Sunday=6
    df['month'] = df['datetime'].dt.month
    df['is_weekend'] = (df['dow'] >= 5).astype(int)
    df['day_of_month'] = df['datetime'].dt.day
    # Calendar month-end only; "last 3 business days" needs a business calendar
    df['is_monthend'] = df['datetime'].dt.is_month_end.astype(int)

    # Cyclical encoding
    df['hour_sin'] = np.sin(2 * np.pi * df['hour'] / 24)
    df['hour_cos'] = np.cos(2 * np.pi * df['hour'] / 24)
    df['dow_sin'] = np.sin(2 * np.pi * df['dow'] / 7)
    df['dow_cos'] = np.cos(2 * np.pi * df['dow'] / 7)
    df['month_sin'] = np.sin(2 * np.pi * df['month'] / 12)
    df['month_cos'] = np.cos(2 * np.pi * df['month'] / 12)

    # One-hot DOW (tree models prefer this to cyclical for DOW)
    dow_dummies = pd.get_dummies(df['dow'], prefix='dow', dtype=int)
    df = pd.concat([df, dow_dummies], axis=1)

    # --- Lag features (per queue, so one queue never reads another's history) ---
    by_queue = df.groupby(queue_col)[target_col]
    intervals_per_week = 7 * 96  # 15-min intervals
    df['lag_1w'] = by_queue.shift(intervals_per_week)
    df['lag_2w'] = by_queue.shift(2 * intervals_per_week)
    df['lag_4w'] = by_queue.shift(4 * intervals_per_week)

    # Same interval on the previous day
    intervals_per_day = 96
    df['lag_1d'] = by_queue.shift(intervals_per_day)

    # --- Rolling statistics (same queue, same interval, same DOW) ---
    # Group by interval-of-week for proper same-context rolling stats
    df['interval_of_week'] = (df['dow'] * 96 + df['hour'] * 4
                              + df['datetime'].dt.minute // 15)
    rolling_group = df.groupby([queue_col, 'interval_of_week'])[target_col]
    # shift(1) excludes the current week so the target never enters its own feature
    df['rolling_mean_4w'] = rolling_group.transform(
        lambda x: x.shift(1).rolling(4, min_periods=2).mean()
    )
    df['rolling_std_4w'] = rolling_group.transform(
        lambda x: x.shift(1).rolling(4, min_periods=2).std()
    )
    df['rolling_mean_13w'] = rolling_group.transform(
        lambda x: x.shift(1).rolling(13, min_periods=4).mean()
    )
    df['trend'] = df['rolling_mean_4w'] - df['rolling_mean_13w']

    # --- Holiday features ---
    # Assume holidays_df has 'date' and 'holiday_name' columns
    # df = merge_holiday_features(df, holidays_df)

    # --- External regressors ---
    # Merge marketing campaign flags, website traffic, etc.
    # df = df.merge(marketing_df, on='date', how='left')

    return df


def add_cross_queue_features(df, lag_col='lag_1w'):
    """Add cross-queue features: total center volume and queue proportion.

    Both are built from a lagged column. The same-interval target is not
    known at forecast time, so using it here would leak the answer.
    """
    df = df.copy()
    total_vol = df.groupby('datetime')[lag_col].transform('sum')
    df['total_center_volume'] = total_vol
    df['queue_proportion'] = df[lag_col] / total_vol.clip(lower=1)
    return df


# --- Feature importance with SHAP ---
# `df` is assumed to be the output of build_wfm_features. Drop rows without
# full lag history and order by time so every split trains on the past.
df = df.dropna(subset=['lag_4w']).sort_values('datetime').reset_index(drop=True)

feature_cols = [c for c in df.columns if c not in
                ['datetime', 'volume', 'queue_id', 'interval_of_week']]

# Time series split (no random splitting for temporal data!)
tscv = TimeSeriesSplit(n_splits=4)
for train_idx, test_idx in tscv.split(df):
    X_train = df.iloc[train_idx][feature_cols]
    y_train = df.iloc[train_idx]['volume']
    X_test = df.iloc[test_idx][feature_cols]
    y_test = df.iloc[test_idx]['volume']

    model = lgb.LGBMRegressor(
        n_estimators=200, max_depth=6, learning_rate=0.05,
        subsample=0.8, subsample_freq=1,  # row subsampling is ignored unless freq > 0
        colsample_bytree=0.8
    )
    model.fit(X_train, y_train)

# The analyses below use the model and test set from the last (most recent) split

# SHAP analysis
explainer = shap.TreeExplainer(model)
X_sample = X_test.sample(min(500, len(X_test)), random_state=42)
shap_values = explainer.shap_values(X_sample)
shap.summary_plot(shap_values, X_sample)

# Permutation importance
perm_imp = permutation_importance(model, X_test, y_test, n_repeats=10, random_state=42)
sorted_idx = perm_imp.importances_mean.argsort()[::-1]
for i in sorted_idx[:15]:
    print(f"{feature_cols[i]}: {perm_imp.importances_mean[i]:.4f}")
```

Key libraries:

- pandas — feature computation, lag/rolling operations

- LightGBM / XGBoost — gradient boosting models that consume tabular features

- SHAP — feature importance and interpretation

- scikit-learn — preprocessing, feature selection, time series cross-validation

- Prophet — external regressor integration for Bayesian forecasting

- Feature-engine — feature engineering transformations with scikit-learn API

Maturity Model Position

| Level | Capability | Feature Engineering Application |
|---|---|---|
| Level 1: Reactive | No feature engineering | Manual forecast adjustments based on intuition |
| Level 2: Managed | Basic calendar features | DOW and holiday adjustments in classical models |
| Level 3: Proactive | Lag and rolling features | Same-interval lags, rolling means feeding ML models |
| Level 4: Advanced | External regressors and cross-queue | Marketing events, digital signals, queue interactions as model inputs |
| Level 5: Optimized | Feature store and automated selection | Centralized feature repository, automated feature importance evaluation, continuous feature discovery |

See Also

- Forecasting — forecasting methodology that consumes engineered features

- Prophet — time series model with external regressor support

- Scikit-learn for WFM — ML framework for feature selection and model training

- Anomaly Detection in WFM Operations — anomaly flags as features for robust forecasting

- Workforce Clustering and Segmentation — demand pattern clustering informed by feature analysis

- Pandas for WFM — data manipulation for feature computation

References

- Hyndman, R.J. & Athanasopoulos, G. (2021). Forecasting: Principles and Practice. 3rd ed. OTexts. Chapter 4 (time series features) and Chapter 7 (time series regression models).

- Lundberg, S.M. & Lee, S.I. (2017). "A unified approach to interpreting model predictions." Advances in Neural Information Processing Systems, 4765-4774. SHAP methodology.

- Ke, G., Meng, Q., et al. (2017). "LightGBM: A highly efficient gradient boosting decision tree." Advances in Neural Information Processing Systems, 3146-3154.

- Christ, M., Braun, N., Neuffer, J., & Kempa-Liehr, A.W. (2018). "Time series feature extraction on basis of scalable hypothesis tests (tsfresh)." Neurocomputing, 307, 72-77. Automated feature extraction.

- Januschowski, T., et al. (2020). "Criteria for classifying forecasting methods." International Journal of Forecasting, 36(1), 167-177. Framework for evaluating forecasting approaches.