Anomaly Detection in WFM Operations

Anomaly Detection in WFM Operations applies statistical and machine learning methods to identify unusual patterns in contact center data before they escalate into operational crises. A 12% AHT increase in a single queue, an unexpected volume spike 40% above forecast, or a sudden adherence drop across a team — these anomalies are signals that something has changed. The faster they are detected, the faster the response. This page covers detection methods ranging from classical statistical process control to machine learning approaches, with emphasis on the operational workflow that turns detection into action.

Overview

Contact centers generate anomalies constantly. Most are benign — random variation in a noisy system. A few are operationally significant: a system outage driving call volume, a process change increasing handle time, a supervisor absence causing adherence degradation, or a quality scoring drift masking real performance issues. The challenge is not detecting all unusual points — that produces alert fatigue. The challenge is detecting the right anomalies at the right time with enough confidence to trigger action.

Anomaly detection in WFM operates at multiple timescales:

- Real-time (intraday): Detecting volume spikes, AHT increases, or adherence drops within minutes. Enables immediate reallocation, callback activation, or escalation.

- Daily: Identifying days where metrics deviated significantly from expected values. Feeds into root cause analysis and forecast recalibration.

- Trend (weekly/monthly): Detecting slow drifts — AHT creep, gradual quality decline, forecast bias accumulation — that do not trigger daily alerts but indicate structural changes.

Each timescale requires different methods, different sensitivity settings, and different response workflows.

Mathematical Foundation

Statistical Anomaly Definition

An observation $x_t$ is anomalous if it is improbable under the assumed data-generating process with parameters $\theta$:

$$p(x_t \mid \theta) < \epsilon$$

For a normal distribution with mean $\mu$ and standard deviation $\sigma$, the Z-score measures deviation:

$$z_t = \frac{x_t - \mu}{\sigma}$$

A common threshold is $|z_t| > 3$ (0.27% probability under normality). But WFM data is rarely normal: call volumes follow Poisson-like distributions, AHT is right-skewed, and metrics exhibit strong autocorrelation. Methods must account for these properties.
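To make the normality caveat concrete, the sketch below compares the normal tail implied by a Z-score with the exact Poisson tail for a hypothetical small queue (forecast 20 calls, 34 observed; both numbers are invented for illustration). It assumes interval volume is Poisson with mean equal to the forecast, so the standard deviation is the square root of the mean.

```python
from scipy import stats

# Hypothetical small queue: forecast 20 calls in an interval, 34 observed
forecast, observed = 20, 34

# Normal approximation: Poisson-like counts have variance close to the mean
z = (observed - forecast) / forecast ** 0.5
p_normal = stats.norm.sf(z)                          # one-sided upper tail

# Exact Poisson upper tail: P(X >= observed) = sf(observed - 1)
p_poisson = stats.poisson.sf(observed - 1, forecast)

# Two-sided tail beyond |z| = 3 under normality (the 0.27% figure)
p_three_sigma = 2 * stats.norm.sf(3)

print(f"z = {z:.2f}, normal tail = {p_normal:.5f}, Poisson tail = {p_poisson:.5f}")
```

Here z exceeds 3, yet the Poisson tail probability is roughly three times the normal one. For right-skewed count data, a $|z| > 3$ rule therefore fires more often than the nominal 0.27% suggests, most noticeably on low-volume queues.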

Types of Anomalies

- Point anomalies: A single observation that is extreme. Example: interval volume of 380 when the forecast is 220.

- Contextual anomalies: An observation that is normal in one context but anomalous in another. Example: 150 calls at 2 AM is extreme for a domestic queue but normal for a 24/7 technical support line.

- Collective anomalies: A sequence of observations that is anomalous as a group, even if individual points are not extreme. Example: AHT that is 5% above baseline for 15 consecutive intervals — each interval within normal bounds, but the run is statistically improbable.
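A collective anomaly like the AHT run above can be checked with a simple runs rule. The sketch below counts the longest streak of intervals above baseline. Under the simplifying assumption that intervals are independent and equally likely to land above or below baseline, a streak of length n has probability 0.5^n, so 15 in a row is about 3 in 100,000. The AHT values are hypothetical.

```python
import numpy as np

def longest_run_above(values, baseline):
    """Length of the longest streak of consecutive intervals above baseline."""
    above = np.asarray(values, dtype=float) > np.asarray(baseline, dtype=float)
    longest = current = 0
    for flag in above:
        current = current + 1 if flag else 0
        longest = max(longest, current)
    return longest

# Hypothetical AHT: 24 intervals alternating around a 445 s baseline,
# then 15 intervals at 467 s (each only about 5% high), then one at 440 s
baseline = np.full(40, 445.0)
aht = np.concatenate([445 + np.tile([4.0, -6.0], 12), np.full(15, 467.0), [440.0]])

run = longest_run_above(aht, baseline)
print(run, 0.5 ** run)   # probability of such a streak under independence
```

Real interval data are autocorrelated, so 0.5^n overstates how surprising a streak is; SPC practice instead uses fixed run rules such as eight or nine consecutive points on one side of the center line.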

Method

Method 1: Rolling Z-Score with Adaptive Windows

The simplest effective approach for WFM time series. For each new observation:

1. Compute the rolling mean and standard deviation over a trailing window (e.g., the same interval on the same day-of-week for the past 8 weeks).

2. Calculate the Z-score against this rolling baseline.

3. Flag observations exceeding the threshold.

Why same-interval, same-day-of-week? WFM data has strong daily and weekly seasonality. Monday 10:00 AM volume is not comparable to Sunday 10:00 AM volume. The comparison must be against the distribution of the same context.

Adaptive windows improve robustness:

- Use an 8-week trailing window by default

- Weight recent weeks more heavily (exponential weighting)

- Exclude previously flagged anomalies from the baseline (anomalies should not pollute the reference distribution)

- Expand the window to 12 weeks when data is sparse (new queues, new intervals)
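The adaptive-window rules above can be combined into a single per-slot baseline. This is a minimal sketch, assuming a weekly history for one interval/day-of-week slot plus a parallel list of past anomaly flags; the decay factor of 0.8 per week and the minimum of 6 usable weeks before widening to 12 weeks are hypothetical settings, not values from this page.

```python
import numpy as np

def slot_baseline(history, flagged, window=8, sparse_window=12,
                  min_points=6, decay=0.8):
    """Exponentially weighted mean and std for one interval/day-of-week slot.

    history: this slot's weekly values, oldest first (e.g. every Monday 10:00).
    flagged: True where that week's value was previously flagged as anomalous.
    """
    history = np.asarray(history, dtype=float)
    flagged = np.asarray(flagged, dtype=bool)

    def clean(weeks):
        vals, bad = history[-weeks:], flagged[-weeks:]
        ages = np.arange(len(vals))[::-1]        # 0 = most recent week
        return vals[~bad], ages[~bad]            # drop flagged anomalies

    values, ages = clean(window)                  # default: 8 weeks
    if len(values) < min_points:                  # sparse slot: widen to 12 weeks
        values, ages = clean(sparse_window)
    weights = decay ** ages                       # recent weeks count more
    mean = np.average(values, weights=weights)
    std = np.sqrt(np.average((values - mean) ** 2, weights=weights))
    return mean, std

history = [98, 102, 100, 99, 101, 100, 97, 103, 100, 99, 250, 101]
flagged = [False] * 10 + [True, False]
mean, std = slot_baseline(history, flagged)
z = (131 - mean) / std
print(f"baseline {mean:.1f} +/- {std:.1f}, z for 131 = {z:.1f}")
```

The previously flagged 250 is dropped before the baseline is computed, so it cannot inflate the standard deviation and mask the next spike.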

Method 2: Isolation Forest

Isolation Forest is an unsupervised anomaly detection algorithm based on a simple insight: anomalies are easier to isolate than normal points. The algorithm builds random trees by selecting random features and random split values. Anomalous points, being rare and different, are isolated in fewer splits (at shallower depths in the trees) than normal points.
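The isolation intuition can be seen in one dimension. The sketch below is a simplified, single-feature version of one isolation tree's path, not scikit-learn's implementation: it repeatedly picks a random split between the current minimum and maximum, keeps only the side containing the target point, and counts splits until the point is alone. The volume sample is synthetic.

```python
import numpy as np

def isolation_depth(x, data, rng, max_depth=50):
    """Random splits needed to isolate value x (which must be in data), 1-D."""
    pool = np.asarray(data, dtype=float)
    depth = 0
    while len(pool) > 1 and depth < max_depth:
        lo, hi = pool.min(), pool.max()
        if lo == hi:                        # only duplicates of x remain
            break
        split = rng.uniform(lo, hi)
        # keep the side of the split that contains x
        pool = pool[pool < split] if x < split else pool[pool >= split]
        depth += 1
    return depth

rng = np.random.default_rng(7)
# Synthetic interval volumes around 220, plus one spike at 380
volumes = np.append(rng.normal(220, 15, size=500), 380.0)
typical = np.sort(volumes)[250]            # the median interval

def mean_depth(x, trials=200):
    return np.mean([isolation_depth(x, volumes, rng) for _ in range(trials)])

spike_depth, typical_depth = mean_depth(380.0), mean_depth(typical)
print(f"spike: {spike_depth:.1f} splits, typical: {typical_depth:.1f} splits")
```

Averaged over many random trees, the spike is isolated in a small fraction of the splits a typical interval needs; Isolation Forest converts that average path length into its anomaly score.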

For WFM applications, construct feature vectors from multiple metrics simultaneously:

- Current interval: volume, AHT, ASA, abandonment rate, adherence

- Context: hour-of-day, day-of-week, holiday flag

- Deltas: change from previous interval, change from same interval last week

The isolation forest scores each interval[1]. Low scores indicate anomalies — intervals where the combination of metrics is unusual, even if no single metric is individually extreme.

Advantages over Z-score:

- Detects multivariate anomalies (AHT up and quality down and volume normal — suggests a system issue, not a demand issue)

- No distributional assumptions

- Handles high-dimensional data naturally

Disadvantages:

- Less interpretable — the algorithm identifies what is anomalous but not why

- Requires tuning the contamination parameter (expected fraction of anomalies)
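To see what the contamination parameter actually does, the sketch below fits scikit-learn's IsolationForest to synthetic standardized features that contain no planted anomalies. With a numeric contamination, the fitted model sets offset_ at that percentile of the training scores, so predict labels roughly that share of the training data as anomalous regardless of how many true anomalies exist.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 3))            # synthetic standardized interval features

model = IsolationForest(n_estimators=200, contamination=0.02, random_state=42).fit(X)
scores = model.score_samples(X)           # lower = more anomalous
labels = model.predict(X)                 # -1 = anomaly, 1 = normal

# offset_ is the 2nd percentile of training scores; points below it get -1
print("threshold:", round(model.offset_, 3))
print("share flagged:", np.mean(labels == -1))
```

A contamination set too high therefore guarantees false positives on clean data, and one set too low hides real incidents; the alert disposition data described under sensitivity tuning is one way to calibrate it.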

Method 3: Prophet Anomaly Detection

Facebook's Prophet decomposes time series into trend[2], seasonality, and residuals. Anomalies are observations where the residual exceeds an uncertainty interval:

1. Fit a Prophet model on historical data (with daily and weekly seasonality for WFM data)

2. Generate forecasts with uncertainty intervals (80% or 95%)

3. Flag observations outside the uncertainty interval

This approach is attractive for WFM because Prophet handles multiple seasonalities, holiday effects, and missing data natively. The anomaly detection inherits these capabilities — a volume spike that is unusual for a Tuesday will be detected even if it would be normal for a Monday.

See Prophet for model configuration details.

Method 4: DBSCAN for Pattern Anomalies

DBSCAN (Density-Based Spatial Clustering of Applications with Noise[3]) identifies anomalies as points in low-density regions of feature space. Unlike isolation forest, DBSCAN explicitly distinguishes between core points (in dense clusters), border points, and noise points (anomalies).

For WFM, DBSCAN is useful for detecting anomalous days or intervals based on their demand shape. Represent each day as a vector of interval-level volumes. DBSCAN clusters normal days (weekday patterns, weekend patterns, holiday patterns) and identifies days that do not fit any cluster — these are demand shape anomalies that a single-metric Z-score would miss.

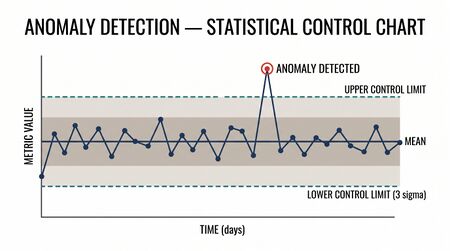
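A minimal sketch of demand-shape clustering with scikit-learn's DBSCAN, assuming hourly profiles rather than 15-minute intervals and synthetic data: 8 weeks of weekday and weekend days with 3% noise, plus one weekday with a surge in the first two business hours. Each day is divided by its total so DBSCAN compares shape rather than level. The eps and min_samples values are tuned to this toy data and would need tuning on real profiles.

```python
import numpy as np
from sklearn.cluster import DBSCAN

rng = np.random.default_rng(0)

# Hypothetical hourly demand shapes (24 values per day)
weekday = np.array([5] * 8 + [100] * 10 + [30] * 6, dtype=float)
weekend = np.array([5] * 8 + [40] * 10 + [40] * 6, dtype=float)

days = []
for _ in range(8):
    for dow in range(7):
        base = weekday if dow < 5 else weekend
        days.append(base * (1 + rng.normal(0, 0.03, size=24)))

# One anomalous weekday: a surge in the first two business hours (08:00-10:00)
surge = weekday.copy()
surge[8:10] = 300
days.append(surge * (1 + rng.normal(0, 0.03, size=24)))

volumes = np.array(days)
shapes = volumes / volumes.sum(axis=1, keepdims=True)   # compare shape, not level

labels = DBSCAN(eps=0.05, min_samples=5).fit_predict(shapes)
print("noise days:", np.flatnonzero(labels == -1))
print("clusters:", sorted(set(labels) - {-1}))
```

DBSCAN labels noise points -1. Here only the surge day is noise, while weekdays and weekends form two clusters without the number of clusters being specified in advance.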

Method 5: Statistical Process Control

Statistical Process Control (SPC) provides a well-established framework for monitoring metrics over time. Control charts (X-bar, R-chart, CUSUM, EWMA) are specifically designed to distinguish common-cause variation (normal) from special-cause variation (anomalous).

The CUSUM (cumulative sum) chart is particularly valuable for detecting small, persistent shifts — the AHT creep that increases 2 seconds per week for 8 weeks. Individual observations stay within normal bounds, but the CUSUM accumulates the deviation and triggers an alarm.

The EWMA (exponentially weighted moving average) chart provides a middle ground between the responsiveness of Z-scores and the cumulative memory of CUSUM.
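The EWMA chart tracks $w_t = \lambda x_t + (1 - \lambda) w_{t-1}$ against limits $\mu_0 \pm L\sigma\sqrt{\frac{\lambda}{2-\lambda}\left[1 - (1-\lambda)^{2t}\right]}$. The sketch below implements that textbook form in NumPy; $\lambda = 0.2$ and $L = 3$ are common choices, and the AHT series and $\sigma = 10$ seconds are hypothetical.

```python
import numpy as np

def ewma_chart(values, target, sigma, lam=0.2, L=3.0):
    """EWMA control chart: returns the statistic, the limits, and alarm indices."""
    values = np.asarray(values, dtype=float)
    w = np.empty_like(values)
    prev = target                      # start the statistic at the in-control mean
    for i, x in enumerate(values):
        prev = lam * x + (1 - lam) * prev
        w[i] = prev
    t = np.arange(1, len(values) + 1)
    # Exact limits widen toward target +/- L*sigma*sqrt(lam / (2 - lam))
    width = L * sigma * np.sqrt(lam / (2 - lam) * (1 - (1 - lam) ** (2 * t)))
    upper, lower = target + width, target - width
    alarms = np.flatnonzero((w > upper) | (w < lower))
    return w, upper, lower, alarms

# Hypothetical daily AHT: in control at 445 s, then a 15 s (1.5 sigma) step on day 30
aht = np.array([445.0] * 30 + [460.0] * 20)
w, upper, lower, alarms = ewma_chart(aht, target=445.0, sigma=10.0)
print("first alarm at index", alarms[0])
```

The 1.5-sigma step never produces a single-day Z-score above 3, but the EWMA statistic crosses its limit five days after the shift (index 34).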

See Statistical Process Control for full SPC methodology.

WFM Applications

Volume Spike Detection

Problem: A product recall drives a 60% volume spike 3 hours before it appears in news alerts. The WFM team needs to know immediately.

Method: Rolling Z-score on 15-minute interval volumes. Threshold: Z > 3 for single-interval alert, Z > 2 for 3 consecutive intervals. Compare against same-day-of-week, same-interval baseline from past 8 weeks.

Response: Automated alert to real-time analyst → activate callback queue → request overtime for next 4 hours → notify capacity planning.
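The two-part alert rule in the Method above can be expressed directly on a series of interval Z-scores. A minimal sketch, assuming the Z-scores were already computed against the same-interval, same-day-of-week baseline; the example values are hypothetical.

```python
import numpy as np

def spike_alerts(z, single=3.0, sustained=2.0, run=3):
    """True where z > single, or where z > sustained for `run` consecutive intervals."""
    z = np.asarray(z, dtype=float)
    alerts = z > single
    streak = 0
    for i, value in enumerate(z):
        streak = streak + 1 if value > sustained else 0
        if streak >= run:
            alerts[i] = True    # fires on the third (and later) interval of the run
    return alerts

# Hypothetical 15-minute volume Z-scores
z = [0.4, 3.6, 1.0, 2.2, 2.5, 2.1, 0.3]
print(np.flatnonzero(spike_alerts(z)))   # [1 5]
```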

AHT Creep Detection

Problem: A new CRM interface was deployed 6 weeks ago. AHT has increased 18 seconds (4.3%) but it happened gradually — no single day triggered a standard alert.

Method: CUSUM chart on daily AHT by queue. The CUSUM accumulates positive deviations beyond a reference value (commonly 0.5 standard deviations) and triggers when the cumulative sum exceeds the decision interval (typically 4-5 standard deviations of daily AHT).

Response: Alert to operations manager → compare AHT by call segment to isolate which interaction phase increased → CRM team investigation.
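A sketch of the AHT creep scenario, assuming a baseline of about 418 seconds (so that 18 seconds is roughly 4.3%) and a hypothetical daily standard deviation of 8 seconds. The creep is a noise-free linear ramp over 42 days (6 weeks), which isolates the mechanism: no single day crosses Z = 3, but an upper CUSUM with k = 0.5 and h = 5 accumulates the excess.

```python
import numpy as np

baseline_aht, sigma = 418.0, 8.0    # hypothetical baseline AHT and daily std (seconds)
# 14 stable days, then an 18-second linear creep spread over 42 days (6 weeks)
aht = np.concatenate([np.full(14, baseline_aht),
                      baseline_aht + np.linspace(0, 18, 42)])

z = (aht - baseline_aht) / sigma
print("largest daily z:", z.max())  # 2.25, so no single-day Z > 3 alert

k, h = 0.5, 5.0                     # reference value and decision interval (sigma units)
s, alarm_day = 0.0, None
for day, zt in enumerate(z):
    s = max(0.0, s + zt - k)        # upper CUSUM: accumulate excess above k
    if s > h:
        alarm_day = day
        break
print("CUSUM alarm on day", alarm_day)
```

In this series the alarm fires on day 37, 23 days into the creep, when the daily deviation is only about 10 seconds.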

Adherence Degradation Monitoring

Problem: A team of 15 agents drops from 91% to 84% adherence over 2 weeks. No individual agent has adherence below the 80% escalation threshold.

Method: Isolation forest on team-level metrics (mean adherence, adherence variance, out-of-adherence event count, average non-adherence duration). The team's vector is anomalous because multiple metrics shifted simultaneously.

Response: Alert to team supervisor → investigation reveals supervisor absence (out on leave, temporary supervisor not enforcing adherence) → assign experienced interim supervisor.
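The team-level feature vector in the Method above could be assembled from agent-level adherence records with a pandas groupby. The column names and values below are hypothetical; each resulting row would be one input vector for the isolation forest.

```python
import pandas as pd

# Hypothetical agent-day adherence records for two teams
records = pd.DataFrame({
    "team": ["A", "A", "A", "B", "B", "B"],
    "adherence": [0.90, 0.92, 0.91, 0.86, 0.83, 0.82],
    "ooa_events": [2, 1, 2, 5, 6, 7],            # out-of-adherence events
    "ooa_minutes": [10.0, 4.0, 8.0, 30.0, 42.0, 49.0],
})

team_features = records.groupby("team").agg(
    mean_adherence=("adherence", "mean"),
    adherence_var=("adherence", "var"),
    ooa_event_count=("ooa_events", "sum"),
    ooa_minutes=("ooa_minutes", "sum"),
)
# Average non-adherence duration per out-of-adherence event
team_features["avg_ooa_duration"] = (
    team_features["ooa_minutes"] / team_features["ooa_event_count"]
)
print(team_features.round(3))
```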

Quality Score Drift Detection

Problem: Quality scores appear stable at 4.1/5.0, but the distribution has changed — fewer 5s, more 3s. The mean is stable but the composition shifted, indicating evaluator calibration drift or genuine quality degradation masked by averaging.

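Method: Monitor not just the mean but the full distribution. Compare this month's quality score distribution to the trailing 3-month baseline with a chi-squared test on score counts or a Kolmogorov-Smirnov test. For discrete rubric scores the chi-squared test is the better fit, since the Kolmogorov-Smirnov test assumes continuous data and becomes conservative with many ties. Flag significant distribution shifts even when means are stable.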

Response: QA calibration review → re-score a sample under original rubric → determine if scoring or performance changed.
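A sketch of the chi-squared comparison using scipy.stats.chi2_contingency. The counts are hypothetical: the trailing 3-month baseline and this month have nearly identical means (about 4.14 and 4.10), but this month has fewer 5s and more 3s. Scores 1 and 2 are merged so every expected cell count is at least 5, a common rule of thumb for the chi-squared approximation.

```python
import numpy as np
from scipy.stats import chi2_contingency

scores = np.arange(1, 6)
# Hypothetical score counts (scores 1-5): trailing 3 months vs this month
baseline = np.array([6, 24, 90, 240, 240])
current = np.array([0, 0, 80, 200, 120])

baseline_mean = scores @ baseline / baseline.sum()   # about 4.14
current_mean = scores @ current / current.sum()      # about 4.10

# Merge scores 1 and 2 so every expected cell count is at least 5
table = np.array([
    [baseline[:2].sum(), *baseline[2:]],
    [current[:2].sum(), *current[2:]],
])
chi2, p_value, dof, expected = chi2_contingency(table)
print(f"means {baseline_mean:.2f} vs {current_mean:.2f}, chi2 = {chi2:.1f}, p = {p_value:.1e}")
```

The test rejects equality of the two distributions (p far below 0.01) even though the means differ by only 0.04.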

Alert Fatigue and Sensitivity Tuning

The most common failure mode of anomaly detection systems is alert fatigue — too many alerts, most of which are false positives or operationally irrelevant. Analysts learn to ignore the system, defeating its purpose.

Tuning guidelines:

- Aim for 1-3 actionable alerts per day per analyst. If the system produces 20 alerts per day, the threshold is too sensitive.

- Tier alerts by severity: Critical (immediate action required), Warning (investigate within 1 hour), Informational (review in daily debrief).

- Use composite scores: An anomaly that spans multiple metrics (volume up AND AHT up AND quality down) is more likely actionable than a single-metric outlier.

- Track false positive rate: If >50% of alerts investigated turn out to be benign variation, tighten the threshold.

- Seasonal adjustment: Widen thresholds during known high-variability periods (holidays, promotion windows).

Feedback loop: Every alert should be dispositioned — "true anomaly, action taken," "true anomaly, no action needed," or "false positive." This disposition data trains the next iteration of threshold tuning.
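A minimal sketch of the disposition feedback loop, assuming each alert is logged with its rule name and one of the three dispositions above (the labels true_action, true_no_action, and false_positive, and the rule names, are hypothetical encodings). It applies the >50% false-positive guideline per rule.

```python
from collections import Counter

# Hypothetical disposition log: (alert rule, disposition)
log = [
    ("volume_z", "true_action"), ("volume_z", "false_positive"),
    ("volume_z", "true_action"), ("aht_cusum", "true_no_action"),
    ("adherence_iforest", "false_positive"), ("adherence_iforest", "false_positive"),
    ("adherence_iforest", "true_action"),
]

def rules_to_tighten(log, max_fp_rate=0.5):
    """Map each alert rule whose false-positive share exceeds max_fp_rate to that share."""
    totals = Counter(rule for rule, _ in log)
    false_pos = Counter(rule for rule, outcome in log if outcome == "false_positive")
    rates = {rule: false_pos[rule] / totals[rule] for rule in totals}
    return {rule: rate for rule, rate in rates.items() if rate > max_fp_rate}

print(rules_to_tighten(log))   # {'adherence_iforest': 0.6666666666666666}
```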

Worked Example

A multi-queue contact center runs an isolation forest model on 15-minute interval data to detect multivariate anomalies.

Feature engineering: For each 15-minute interval, construct a feature vector:

| Feature | Source | Purpose |
|---|---|---|
| Volume | ACD | Demand level |
| Volume delta (vs. same interval last week) | Calculated | Demand change |
| AHT | ACD | Handle time level |
| AHT delta (vs. 4-week rolling mean) | Calculated | Handle time change |
| ASA | ACD | Service responsiveness |
| Abandonment rate | ACD | Customer patience signal |
| Adherence | WFM platform | Agent compliance |
| Hour of day | Clock | Contextual |
| Day of week | Calendar | Contextual |
| Holiday flag | Calendar | Contextual |
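A sketch of how the two calculated features in the table might be derived with pandas, assuming a DataFrame with a timestamp column ds plus volume and aht at 15-minute granularity (column names are hypothetical and match the Implementation section below). The week-over-week delta aligns on timestamps rather than row offsets, so gaps from closed hours do not misalign it. "4-week rolling mean" is interpreted here, as an assumption, as the mean of the same interval and day of week over the previous four weeks.

```python
import numpy as np
import pandas as pd

def build_features(df):
    """Add volume_delta_wow, aht_delta_rolling, hour and dow to interval data.

    df: one row per 15-minute interval with columns ds (timestamp), volume, aht.
    """
    df = df.sort_values("ds").set_index("ds")
    volume = df["volume"]
    # Same timestamp one week earlier, matched by time rather than row position
    df["volume_delta_wow"] = volume - volume.shift(freq="7D").reindex(df.index)
    # Mean AHT of the same interval and weekday over the previous 4 weeks
    slot = [df.index.dayofweek, df.index.time]
    prior_mean = df.groupby(slot)["aht"].transform(
        lambda s: s.shift(1).rolling(4).mean()
    )
    df["aht_delta_rolling"] = df["aht"] - prior_mean
    df["hour"] = df.index.hour
    df["dow"] = df.index.dayofweek
    return df.reset_index()

# Five weeks of synthetic 15-minute data; week 5 volume is 10 calls higher
ds = pd.date_range("2024-01-01", periods=5 * 672, freq="15min")
volume = np.tile(np.arange(672, dtype=float), 5)
volume[-672:] += 10
features = build_features(pd.DataFrame({"ds": ds, "volume": volume, "aht": 445.0}))
```

Rows without a full history (the first week for the volume delta, the first four weeks for the AHT delta) come out as NaN and should be dropped before model training.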

Model training: Train the isolation forest on 12 weeks of historical interval data (~4,000 intervals per queue). Set contamination parameter to 0.02 (expect 2% of intervals to be anomalous). Fit one model per queue group (queues with similar characteristics share a model).

Detection event: On a Tuesday at 10:15 AM, the billing queue triggers an anomaly alert. The interval's anomaly score is −0.72 (scores below −0.5 are flagged). Investigation reveals:

- Volume: 195 (expected: 180) — slightly elevated but within ±1 SD

- AHT: 502 seconds (expected: 445) — 12.8% above baseline

- ASA: 48 seconds (expected: 22) — elevated due to longer handle times

- Quality score: 3.8 (expected: 4.1) — slightly depressed

- Adherence: 89% (expected: 91%) — normal range

No single metric would have triggered a univariate alert. The isolation forest flagged the combination as anomalous: elevated AHT and ASA while volume and adherence are near-normal. The depressed quality score, found during investigation (it is not one of the model's features), fits the same picture. This pattern suggests a system or process issue, not a demand issue.

Root cause: The CRM knowledge base update deployed at 9:45 AM broke search functionality in the billing module. Agents are spending additional time navigating without search. The anomaly was detected 30 minutes after deployment — standard daily reporting would not have surfaced it until the next morning.

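Impact of early detection: CRM team rolled back the update by 10:45 AM. Estimated savings: avoiding 90 minutes of elevated AHT across 40 agents covers 60 agent-hours of handling (90 min × 40 agents). The capacity actually preserved is the excess handle time within those hours, roughly 60 × 57/502 ≈ 6.8 agent-hours, assuming agents were fully occupied on calls.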

Implementation

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import IsolationForest
from sklearn.preprocessing import StandardScaler

# --- Rolling Z-Score ---

def rolling_zscore(series, window=8, threshold=3.0):
    """Z-score against a same-interval, same-DOW trailing window.

    `series` holds one slot's history (e.g. every Monday 10:00 value), one
    observation per week in time order; call it once per (dow, interval) slot.
    """
    # shift(1) keeps the current observation out of its own baseline
    baseline = series.shift(1).rolling(window=window)
    rolling_mean = baseline.mean()
    rolling_std = baseline.std()
    z_scores = (series - rolling_mean) / rolling_std
    anomalies = z_scores.abs() > threshold
    return z_scores, anomalies

# --- Isolation Forest ---

def detect_anomalies_iforest(df, feature_cols, contamination=0.02):
    """Multivariate anomaly detection with Isolation Forest."""
    # Isolation Forest is insensitive to feature scale; standardizing is harmless
    X = StandardScaler().fit_transform(df[feature_cols])
    model = IsolationForest(
        n_estimators=200,
        contamination=contamination,
        random_state=42,
    )
    df = df.copy()
    df['anomaly_label'] = model.fit_predict(X)        # -1 = anomaly, 1 = normal
    df['anomaly_raw_score'] = model.score_samples(X)  # lower = more anomalous
    return df

# Apply to WFM data: interval_df has one row per 15-minute interval
feature_cols = ['volume', 'volume_delta_wow', 'aht', 'aht_delta_rolling',
                'asa', 'abandon_rate', 'adherence', 'hour', 'dow', 'holiday']
# Drop rows whose deltas are undefined (e.g. the first week of history)
model_df = interval_df.dropna(subset=feature_cols)
results = detect_anomalies_iforest(model_df, feature_cols, contamination=0.02)
anomalies = results[results['anomaly_label'] == -1]
print(f"Detected {len(anomalies)} anomalous intervals out of {len(results)}")

# --- CUSUM for drift detection ---

def cusum(values, target, sigma, threshold=5.0, drift=0.5):
    """Two-sided tabular CUSUM on standardized deviations from target.

    threshold is the decision interval h and drift the reference value k,
    both in units of sigma (h = 4-5, k = 0.5 are common choices).
    """
    values = np.asarray(values, dtype=float)
    cusum_pos = np.zeros(len(values))
    cusum_neg = np.zeros(len(values))
    alerts = []
    s_hi = s_lo = 0.0
    for i, x in enumerate(values):
        z = (x - target) / sigma
        s_hi = max(0.0, s_hi + z - drift)   # accumulates upward shifts
        s_lo = max(0.0, s_lo - z - drift)   # accumulates downward shifts
        cusum_pos[i], cusum_neg[i] = s_hi, s_lo
        if s_hi > threshold or s_lo > threshold:
            alerts.append(i)
    return cusum_pos, cusum_neg, alerts

# Detect AHT drift: estimate target and sigma from the same stable period
baseline = daily_aht['aht'].iloc[:30]  # first 30 days assumed in control
cusum_up, cusum_down, alert_indices = cusum(
    daily_aht['aht'].values, target=baseline.mean(), sigma=baseline.std(), threshold=5.0
)

# --- Prophet anomaly detection ---

from prophet import Prophet

def prophet_anomaly_detection(df, interval_width=0.95):
    """Detect anomalies as observations outside Prophet's uncertainty interval.

    df needs Prophet's standard columns: 'ds' (timestamp) and 'y' (value).
    This scores the training data itself; for live monitoring, fit on
    history and predict the new intervals instead.
    """
    model = Prophet(
        daily_seasonality=True,
        weekly_seasonality=True,
        interval_width=interval_width,
    )
    model.fit(df[['ds', 'y']])
    forecast = model.predict(df[['ds']])
    merged = df.merge(forecast[['ds', 'yhat', 'yhat_lower', 'yhat_upper']], on='ds')
    merged['anomaly'] = (merged['y'] < merged['yhat_lower']) | (merged['y'] > merged['yhat_upper'])
    return merged
```

Key libraries:

- scikit-learn — Isolation Forest, DBSCAN, preprocessing

- Prophet — time series decomposition and anomaly detection

- scipy.stats — Z-scores, distribution tests

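- statsmodels, for CUSUM-based structural-break tests (e.g., breaks_cusumolsresid) and exponential smoothing; SPC-style CUSUM and EWMA charts like those above are simple to implement directly in NumPy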

- PyOD — comprehensive outlier detection library (20+ algorithms)

Maturity Model Position

| Level | Capability | Anomaly Detection Application |
|---|---|---|
| Level 1: Reactive | Manual observation | "I noticed the board looks red"; human pattern recognition only |
| Level 2: Managed | Threshold alerts | Static thresholds on volume and service level (e.g., SL < 70% → alert) |
| Level 3: Proactive | Statistical anomaly detection | Rolling Z-scores, control charts, forecast-vs-actual deviation monitoring |
| Level 4: Advanced | Multivariate ML detection | Isolation forest, Prophet anomalies, root cause triage workflow |
| Level 5: Optimized | Autonomous detection and response | Self-tuning models, automated root cause classification, auto-remediation triggers |

See Also

- Statistical Process Control — control chart methodology, the classical approach to anomaly detection

- Prophet — time series model used for forecast-based anomaly detection

- Real-Time Operations — the operational context where anomaly alerts are consumed

- Forecasting — anomaly detection as a complement to forecast accuracy monitoring

- Scikit-learn for WFM — implementation of isolation forest and DBSCAN

References

- [1] Liu, F.T., Ting, K.M., & Zhou, Z.-H. (2008). "Isolation Forest." Proceedings of the 8th IEEE International Conference on Data Mining (ICDM), 413-422. Original isolation forest paper.

- [2] Taylor, S.J., & Letham, B. (2018). "Forecasting at Scale." The American Statistician, 72(1), 37-45. Prophet methodology.

- [3] Ester, M., et al. (1996). "A Density-Based Algorithm for Discovering Clusters in Large Spatial Databases with Noise." Proceedings of the 2nd International Conference on Knowledge Discovery and Data Mining (KDD-96), 226-231.

- Page, E.S. (1954). "Continuous inspection schemes." Biometrika, 41(1/2), 100-115. The CUSUM method.

- Chandola, V., Banerjee, A., & Kumar, V. (2009). "Anomaly detection: A survey." ACM Computing Surveys, 41(3), 1-58. Comprehensive survey of anomaly detection methods.

- Montgomery, D.C. (2019). Introduction to Statistical Quality Control. 8th ed. Wiley. SPC and control chart theory.