The AI-Native WFM Function

The AI-native WFM function describes a workforce management organization redesigned from first principles for environments where artificial intelligence handles a majority of customer interactions. Unlike incremental adoption models that bolt AI capabilities onto existing WFM processes, the AI-native approach treats the blended human-AI workforce as the default operating model and restructures roles, metrics, planning cycles, and technology architecture accordingly. As contact centers approach and exceed 50% AI containment rates, the fundamental assumptions underpinning traditional WFM — that capacity planning means planning human schedules, that staffing calculations assume homogeneous human agents, and that planning occurs in batch cycles — become structurally inadequate. This article examines what Workforce Management looks like when redesigned for a workforce that is more machine than human, drawing on emerging practice from early adopters and projecting the organizational design implications for the 2027–2030 planning horizon.

Defining AI-Native WFM

The distinction between "WFM with AI" and "AI-native WFM" is not semantic — it reflects a fundamentally different design philosophy. Most organizations in 2025–2026 operate in the first mode: they have added AI-powered tools to existing WFM workflows. Forecasting models may use machine learning. Scheduling engines may incorporate AI optimization. Chatbots may deflect a portion of contacts. But the organizational structure, role definitions, planning cadence, and success metrics remain rooted in a human-centric model designed decades ago.

AI-native WFM inverts this. Rather than asking "how do we add AI to our WFM process?", it asks: "if we were designing a WFM function today, knowing that AI agents handle most routine work, what would we build?" The answer differs in nearly every dimension.

Davenport and Ronanki (2018) identified three categories of AI application in business: process automation, cognitive insight, and cognitive engagement.[1] Traditional WFM AI adoption maps to the first two categories — automating scheduling tasks and generating forecasting insights. AI-native WFM operates in a fourth category that has since emerged: AI as workforce participant. In this model, AI agents are not tools used by the workforce; they are the workforce, alongside humans. The WFM function's job is to orchestrate both populations toward shared service outcomes.

The WFM Labs Maturity Model™ positions AI-native WFM at Level 5, where the organization has moved beyond pilot programs and incremental automation to a state where AI capacity is the primary planning variable and human capacity is the secondary, specialized complement. At this level, the traditional WFM technology stack — WFM software, time tracking, schedule adherence — represents only a fraction of the WFM function's operational footprint.

The Shift in WFM Scope

Traditional WFM scope can be summarized in a single phrase: plan human schedules to meet forecasted demand at target service levels. Every process, tool, and role in a traditional WFM organization serves this objective. Forecasting predicts how many contacts will arrive. Capacity planning determines how many humans are needed. Scheduling assigns those humans to shifts. Real-time management adjusts when reality deviates from plan.

AI-native WFM expands this scope to: orchestrate work across human and AI capacity to optimize service quality, cost, and customer experience simultaneously. This is not a minor expansion. It requires the WFM function to:

- Manage AI capacity as a planning input. AI agent capacity is not infinite — it is bounded by platform concurrency limits, integration latency, licensing costs, and containment capability by contact type. These constraints must be modeled, forecasted, and optimized alongside human capacity. The Three-Pool Architecture provides a structural framework for this, segmenting work into AI-only, human-only, and blended pools.

- Own the containment boundary. The line between what AI handles and what humans handle is not static. It shifts with AI model updates, seasonal contact mix changes, product launches, and regulatory changes. In an AI-native model, the WFM function owns the analysis and recommendation of where this boundary sits — a responsibility that in most current organizations falls to the technology team or is not explicitly owned at all. AI Agent Orchestration for WFM describes the routing and escalation frameworks that operationalize this boundary.

- Optimize for total cost of resolution, not just staffing cost. Traditional WFM optimizes for the cheapest way to cover human schedules at target service levels. AI-native WFM optimizes for the cheapest way to resolve each contact at acceptable quality, which may mean routing some contacts to more expensive human agents when AI resolution quality is marginal and routing others to AI when it produces equivalent or better outcomes. This requires visibility into blended staffing economics that most organizations lack today.

- Integrate with AI operations. WFM must coordinate with AI engineering on model deployments, performance monitoring, and capacity changes. A new model release that improves containment by 5 percentage points has immediate staffing implications. A model degradation event requires an immediate human capacity surge. These operational interdependencies do not exist in traditional WFM.
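The first responsibility in the list above, managing AI capacity as a bounded planning input, can be sketched with Little's law (average concurrency = arrival rate × average session duration). The function names, the 1,200 contacts per hour, the 180-second session length, and the 50-session concurrency limit are illustrative assumptions, not figures from any specific platform. The sketch treats load as a steady average and ignores burstiness, so it probably understates peak overflow.

```python
def concurrent_sessions_needed(arrivals_per_hour: float, avg_session_seconds: float) -> float:
    """Little's law: average concurrency = arrival rate x average time in system."""
    return arrivals_per_hour * avg_session_seconds / 3600


def overflow_share(needed: float, concurrency_limit: float) -> float:
    """Share of AI-bound load that exceeds the platform limit (0.0 if it fits)."""
    if needed <= concurrency_limit:
        return 0.0
    return (needed - concurrency_limit) / needed


# Hypothetical inputs: 1,200 AI-bound contacts per hour, 3-minute sessions, 50-session limit.
needed = concurrent_sessions_needed(arrivals_per_hour=1200, avg_session_seconds=180)
share = overflow_share(needed, concurrency_limit=50)
print(f"{needed:.0f} concurrent sessions needed, {share:.1%} of load overflows to humans")
```

With these assumed inputs the platform would need about 60 concurrent sessions, so roughly one sixth of the AI-bound load would spill over to human agents and would have to appear in the human staffing plan.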

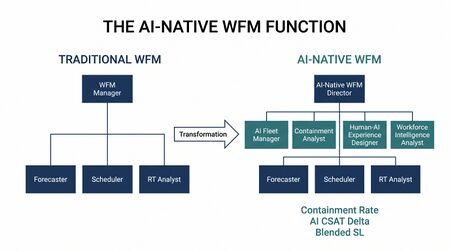

New Roles in AI-Native WFM

The role structures defined in WFM Roles — workforce planners, real-time analysts, scheduling specialists — remain necessary in an AI-native WFM function but are insufficient. Several new roles emerge that have no precedent in traditional WFM organizations.

AI Fleet Manager

The AI Fleet Manager is responsible for the capacity planning, performance monitoring, and operational management of AI agent systems. This role bridges the gap between WFM and AI engineering, translating platform capabilities into planning inputs. Key responsibilities include:

- Maintaining AI capacity models (concurrent session limits, throughput by contact type, degradation characteristics under load)

- Coordinating AI platform maintenance windows with WFM scheduling to ensure human coverage during downtime

- Monitoring AI agent performance metrics and flagging containment shifts that affect human staffing requirements

- Managing the AI agent "roster" — which AI models are deployed, which contact types they handle, and at what quality thresholds

This role is analogous to a fleet operations manager in logistics — managing a fleet of AI systems rather than a fleet of vehicles. Willcocks, Lacity, and Craig (2015) anticipated this class of role when they described "automation management" as a distinct organizational capability separate from both IT management and operations management.[2] The AI Fleet Manager extends this concept to conversational AI agents operating at enterprise scale.

Containment Analyst

The Containment Analyst focuses on analyzing, forecasting, and optimizing AI containment rates across contact types, channels, and customer segments. While traditional WFM analysts focus on volume and handle time, the Containment Analyst adds a third dimension: what proportion of contacts can AI resolve without human intervention, and how is that proportion changing?

Responsibilities include:

- Building and maintaining containment forecasting models by contact type and complexity tier

- Analyzing escalation patterns to identify containment improvement opportunities (feeding recommendations to AI engineering)

- Modeling the downstream impact of containment changes on human staffing requirements, accounting for the escalation enrichment effect described in Agentic AI Workforce Planning

- Developing scenario analyses for containment trajectory planning (what happens to human headcount if containment moves from 55% to 70% over 12 months?)
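The containment scenario question above (55% to 70% over 12 months) can be approximated with a simple workload calculation. This is a minimal sketch under stated assumptions: the 100,000 monthly contacts, 600-second handle time, and 120 productive hours per FTE per month are hypothetical, the containment path is assumed linear, and handle time is held constant. A fuller model would raise handle time as containment grows to reflect the escalation enrichment effect, and would add queueing and shrinkage.

```python
def human_fte_required(monthly_contacts, containment, aht_seconds, productive_hours_per_fte):
    """Workload-based FTE estimate for contacts that reach human agents."""
    human_contacts = monthly_contacts * (1 - containment)
    workload_hours = human_contacts * aht_seconds / 3600
    return workload_hours / productive_hours_per_fte


def containment_trajectory(start, end, months):
    """Linear path from start to end containment, one value per month (inclusive)."""
    step = (end - start) / months
    return [start + step * m for m in range(months + 1)]


# Hypothetical scenario: containment moves from 55% to 70% over 12 months.
trajectory = containment_trajectory(0.55, 0.70, 12)
for month, c in enumerate(trajectory):
    fte = human_fte_required(100_000, c, aht_seconds=600, productive_hours_per_fte=120)
    print(f"month {month:2d}: containment {c:.1%} -> {fte:5.1f} FTE")
```

Under these assumptions the human requirement falls from about 62.5 FTE to about 41.7 FTE, which gives planners a starting envelope before enrichment effects are layered in.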

Human-AI Experience Designer

The Human-AI Experience Designer ensures that handoffs between AI and human agents preserve customer experience quality. This role sits at the intersection of WFM, quality management, and customer experience design. Traditional WFM does not address interaction design — it plans capacity. In an AI-native model, how work flows between AI and humans directly affects both customer satisfaction and workforce efficiency.

Key focus areas include:

- Designing escalation protocols that minimize customer friction during AI-to-human handoffs

- Defining context transfer requirements (what information must the AI pass to the human agent to avoid customer repetition)

- Analyzing customer satisfaction differentials between AI-handled and human-handled contacts to inform routing optimization

- Collaborating with the AI Fleet Manager and Containment Analyst to adjust routing boundaries based on experience data

Workforce Intelligence Analyst

The Workforce Intelligence Analyst synthesizes data across the entire blended workforce to generate actionable intelligence for WFM leadership. This role goes beyond traditional reporting to build the analytical foundation for strategic workforce decisions — hiring plans, AI investment cases, organizational design changes, and skill development priorities.

This role operates the unified analytics layer described in the technology architecture section, producing insights such as:

- Cost per resolution trends by channel and resolution type (AI, human, blended)

- Workforce composition optimization models (what is the optimal human-to-AI ratio by contact type?)

- Predictive models for skill demand evolution as AI containment expands

- ROI analysis of AI capacity investments versus human hiring

New Metrics

Traditional WFM metrics — Service Level, Average Handle Time, occupancy, adherence, Shrinkage — were designed for a purely human workforce. An AI-native WFM function requires a fundamentally different measurement framework that captures the performance of the blended workforce and the interactions between its components.

Containment Rate

The percentage of contacts resolved by AI without human intervention, segmented by contact type, channel, and complexity tier. This is the single most consequential metric in AI-native WFM because it directly determines human staffing requirements. At scale, a one-percentage-point change in containment rate can represent dozens of FTE in staffing impact. Unlike traditional metrics, containment rate is both a WFM metric and an AI engineering metric, which requires cross-functional ownership.
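A minimal sketch of the segmented calculation, assuming each contact record carries a contact type and a flag for whether AI resolved it without a human. The record shape and the sample data are hypothetical. Channel and complexity tier could be added as further keys in the same way.

```python
from collections import defaultdict


def containment_by_type(contacts):
    """contacts: iterable of (contact_type, resolved_by_ai) pairs.

    Returns {contact_type: share of contacts resolved by AI without a human}.
    """
    totals = defaultdict(int)
    contained = defaultdict(int)
    for contact_type, resolved_by_ai in contacts:
        totals[contact_type] += 1
        if resolved_by_ai:
            contained[contact_type] += 1
    return {t: contained[t] / totals[t] for t in totals}


# Hypothetical sample: four billing contacts and two complaints.
sample = [
    ("billing", True), ("billing", True), ("billing", False), ("billing", True),
    ("complaint", False), ("complaint", True),
]
rates = containment_by_type(sample)
print(rates)
```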

AI CSAT Delta

The difference in customer satisfaction between AI-resolved and human-resolved contacts, measured at the contact type level. A negative delta (AI satisfaction lower than human) signals contact types where AI routing may be damaging customer relationships. A positive delta (AI satisfaction higher than human, typically in simple transactional contacts where speed matters most) confirms appropriate routing. This metric drives routing optimization decisions.
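The delta can be computed per contact type as the mean AI-resolved score minus the mean human-resolved score. The sketch below assumes a 1 to 5 survey scale and uses made-up scores. The contact type names and the rule of flagging any negative delta for routing review are illustrative choices, not a prescribed threshold.

```python
from statistics import mean


def csat_delta(ai_scores, human_scores):
    """AI CSAT delta: mean AI-resolved score minus mean human-resolved score."""
    return mean(ai_scores) - mean(human_scores)


# Hypothetical 1-5 survey scores per contact type: (AI-resolved, human-resolved).
scores = {
    "password_reset": ([5, 4, 5, 5], [4, 4, 5, 4]),
    "billing_dispute": ([2, 3, 3, 2], [4, 4, 3, 5]),
}
deltas = {t: csat_delta(ai, human) for t, (ai, human) in scores.items()}

# Contact types where AI satisfaction trails human satisfaction.
review_routing = [t for t, d in deltas.items() if d < 0]
print(deltas, review_routing)
```

In practice the comparison would also need enough survey responses per segment for the difference to be meaningful, which this sketch does not check.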

Cost Per Resolution by Channel

The fully loaded cost to resolve a contact, calculated separately for AI-only, human-only, and blended (escalated) resolutions. This metric replaces the traditional cost-per-call metric by incorporating AI platform costs, licensing, and the hidden cost of escalation (contacts that start with AI but require human intervention typically cost more than contacts routed directly to humans due to duplicated effort). McKinsey's 2024 analysis of AI economics in service operations found that organizations tracking this metric made materially better routing decisions than those using aggregate cost metrics.[3]
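The three resolution paths can be costed separately, as in the sketch below. All field names and figures are hypothetical. The model assumes an escalated contact pays for both the AI attempt and the subsequent human handling, with a slightly longer handle time standing in for duplicated effort.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    ai_cost_per_contact: float      # platform + licensing, per AI-handled contact
    human_cost_per_minute: float    # fully loaded agent cost
    human_aht_minutes: float        # handle time for a direct-to-human contact
    escalated_aht_minutes: float    # handle time after an AI escalation


def cost_per_resolution(c: CostInputs) -> dict:
    """Cost per resolution for each of the three resolution paths."""
    return {
        "ai_only": c.ai_cost_per_contact,
        "human_only": c.human_cost_per_minute * c.human_aht_minutes,
        # An escalated contact pays for the AI attempt and the human handling.
        "blended": c.ai_cost_per_contact + c.human_cost_per_minute * c.escalated_aht_minutes,
    }


# Hypothetical inputs: $0.40 per AI contact, $1.00 per agent minute.
costs = cost_per_resolution(CostInputs(0.40, 1.00, 6.0, 6.5))
print(costs)
```

With these assumed inputs the escalated path costs more than routing the contact straight to a human, which is the hidden escalation cost the metric is meant to expose.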

Blended Service Level

A composite service level metric that weights AI response time and human response time according to the containment split. Traditional Service Level assumes all contacts enter a single human-served queue. Blended service level reflects the customer's actual experience: contacts handled by AI are typically resolved in seconds, while contacts reaching human agents follow traditional queuing dynamics. The blended metric provides a more accurate picture of customer experience than reporting AI and human service levels separately.
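The article does not give a formula, so the following is one plausible weighting: the share of contacts handled by AI multiplied by the AI service level, plus the remaining share multiplied by the human service level. The function name and the input values are assumptions for illustration.

```python
def blended_service_level(containment, ai_sl, human_sl):
    """Containment-weighted service level across AI and human handling."""
    if not 0.0 <= containment <= 1.0:
        raise ValueError("containment must be between 0 and 1")
    return containment * ai_sl + (1 - containment) * human_sl


# Hypothetical interval: 60% containment, 99% AI service level, 80% human service level.
blended = blended_service_level(containment=0.60, ai_sl=0.99, human_sl=0.80)
print(f"blended service level: {blended:.1%}")
```

For these inputs the blended figure is about 91.4%, noticeably higher than the 80% that the human queue alone would report.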

Human Utilization Rate

The proportion of human agent time spent on contacts that require human judgment, empathy, or complex problem-solving — work that AI cannot currently perform. As AI handles more routine work, human utilization rate should increase because humans should spend less time on work below their capability. A declining human utilization rate in an AI-native environment signals a routing problem: humans are handling work that AI should be handling.

This metric replaces traditional occupancy as the primary human efficiency measure. Occupancy measures whether agents are busy; human utilization rate measures whether they are busy with the right work.
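The contrast between the two measures can be shown in a few lines. This sketch assumes each handled contact has already been classified as requiring a human or not. That classification, the `requires_human` flag, and the sample shift are hypothetical, and how the flag is derived is the hard part in practice.

```python
def occupancy(handling_seconds, available_seconds):
    """Traditional occupancy: share of logged-in time spent handling contacts."""
    return handling_seconds / (handling_seconds + available_seconds)


def human_utilization_rate(handled):
    """handled: iterable of (handle_seconds, requires_human) pairs.

    Returns the share of handling time spent on contacts that needed a human.
    """
    handled = list(handled)
    total = sum(seconds for seconds, _ in handled)
    if total == 0:
        return 0.0
    needed_human = sum(seconds for seconds, requires_human in handled if requires_human)
    return needed_human / total


# Hypothetical shift: 2,000 seconds of handling, 500 seconds idle.
shift = [(600, True), (300, False), (900, True), (200, False)]
busy = occupancy(handling_seconds=2000, available_seconds=500)
right_work = human_utilization_rate(shift)
print(f"occupancy {busy:.0%}, human utilization rate {right_work:.0%}")
```

Here the agent is 80% occupied, but only 75% of that handling time went to work that needed a human.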

The Planning Cycle Reimagined

From Batch to Continuous

Traditional WFM operates on batch planning cycles: annual capacity plans, monthly forecast updates, weekly schedule generation, daily real-time adjustments. These cycles assume that the supply side of the equation (human agents) changes slowly — hiring takes weeks to months, training takes days to weeks, schedule changes take a pay period.

AI capacity does not follow this cadence. Within platform concurrency and licensing limits, AI systems can be scaled up in minutes, updated in hours, and reconfigured in real time. This asymmetry breaks the batch planning model. An AI-native WFM function moves toward continuous planning, where:

- Capacity models update continuously as AI containment data arrives

- Human scheduling adjusts dynamically based on real-time AI performance

- Staffing plans incorporate rolling containment forecasts rather than point-in-time snapshots

- Real-Time Schedule Adjustment becomes the primary operating mode rather than an exception handler

Deloitte's 2025 workforce management technology assessment described this as the shift from "plan-execute-measure" to "sense-respond-optimize" — a continuous loop where planning and execution blur together.[4]

Real-Time Capacity Rebalancing

In a blended workforce, real-time management becomes significantly more complex and more consequential. When an AI platform experiences degraded performance — increased latency, declining containment, or outright failure — human capacity must absorb the overflow immediately. Conversely, when AI performance exceeds expectations, human agents may find themselves underutilized.

AI-native WFM implements real-time capacity rebalancing: automated rules and decision logic that adjust routing, staffing, and scheduling in response to AI performance signals. This requires:

- Real-time API integration between the WFM platform and AI orchestration layer

- Predefined escalation protocols with automatic trigger thresholds

- Flexible scheduling models that allow rapid human capacity surge (e.g., on-call pools, flex scheduling, voluntary overtime triggers)

- Automated communication systems that notify human agents of schedule changes driven by AI capacity shifts
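The trigger-threshold idea in the list above can be sketched as a small rule function. The signal fields, the five-point containment gaps, the 4,000 ms latency ceiling, and the action names are all hypothetical. A real deployment would read these signals from the AI orchestration layer and tune the thresholds per contact type.

```python
from dataclasses import dataclass


@dataclass
class AiSignal:
    forecast_containment: float   # containment assumed in the staffing plan
    actual_containment: float     # containment observed in the current interval
    p95_latency_ms: float         # AI response latency


def rebalance_action(s: AiSignal, surge_gap=0.05, release_gap=0.05, max_latency_ms=4000):
    """Map AI performance signals to a coarse staffing action."""
    gap = s.forecast_containment - s.actual_containment
    if s.p95_latency_ms > max_latency_ms or gap >= surge_gap:
        return "surge_humans"       # e.g. trigger on-call pool or voluntary overtime
    if -gap >= release_gap:
        return "release_humans"     # AI is outperforming plan; humans risk underutilization
    return "hold"


# Hypothetical interval: plan assumed 60% containment, AI delivered 50%.
print(rebalance_action(AiSignal(0.60, 0.50, 1200)))
```

Keeping the rule as a pure function of the signal makes it straightforward to replay against historical intervals before letting it drive live schedule changes.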

AI Capacity as Elastic Variable

The most consequential change in planning cycle design is treating AI capacity as an elastic variable rather than a fixed input. Traditional planning treats human headcount as semi-fixed (slow to change) and optimizes scheduling within that constraint. AI-native planning can treat AI capacity as rapidly adjustable and optimize the human-AI mix continuously.

This creates a new optimization problem: at any given moment, what is the optimal allocation of work between AI and human agents, considering quality, cost, customer experience, and human agent utilization? This optimization runs continuously, not in batch cycles, and drives both routing decisions and staffing recommendations.
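A heavily simplified version of this allocation problem can be written as a greedy split. The sketch assumes each contact consumes one unit of AI capacity per interval, treats quality as a hard eligibility threshold, and ignores customer experience and human utilization, so it illustrates the shape of the problem without being a production optimizer. All names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContactType:
    name: str
    volume: int          # contacts in the interval
    ai_cost: float       # cost per AI resolution
    human_cost: float    # cost per human resolution
    ai_quality: float    # expected AI resolution quality, 0 to 1


def allocate(types, ai_capacity, min_quality=0.8):
    """Greedy split: AI capacity goes first to eligible types with the largest saving per contact."""
    plan = {t.name: {"ai": 0, "human": t.volume} for t in types}
    eligible = [t for t in types if t.ai_quality >= min_quality and t.ai_cost < t.human_cost]
    for t in sorted(eligible, key=lambda t: t.human_cost - t.ai_cost, reverse=True):
        to_ai = min(t.volume, ai_capacity)
        plan[t.name] = {"ai": to_ai, "human": t.volume - to_ai}
        ai_capacity -= to_ai
    return plan


# Hypothetical interval with 600 units of AI capacity.
types = [
    ContactType("order_status", 500, 0.30, 4.00, 0.95),
    ContactType("billing", 300, 0.50, 6.00, 0.85),
    ContactType("complaint", 200, 0.60, 8.00, 0.60),
]
plan = allocate(types, ai_capacity=600)
print(plan)
```

With unit capacity per contact, taking the largest per-contact saving first minimizes cost among eligible types. Complaints stay with humans here because their assumed AI quality falls below the threshold, even though the cost saving would be the largest.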

Technology Architecture

AI-native WFM requires a technology architecture that integrates three layers that are typically separate in traditional organizations.

WFM Platform Layer

The traditional WFM platform — forecasting, scheduling, real-time adherence, reporting — remains the foundation but is no longer sufficient on its own. The WFM platform must expose APIs that enable real-time integration with the AI orchestration layer and accept inputs (containment forecasts, AI capacity models) that traditional WFM platforms were not designed to consume. Most current WFM platforms require significant customization or middleware to operate in an AI-native architecture.

AI Orchestration Layer

The AI orchestration layer manages AI agent deployment, routing, performance monitoring, and capacity management. In an AI-native architecture, this layer feeds real-time performance data to the WFM platform and accepts routing optimization signals in return. The orchestration layer controls:

- Which AI models handle which contact types

- Concurrent session allocation and load balancing

- Real-time containment monitoring and escalation triggers

- AI model version management and rollback capabilities

Unified Analytics Layer

The unified analytics layer aggregates data from both the WFM platform and AI orchestration layer to produce the blended metrics described above. Traditional WFM reporting and AI platform reporting are typically separate, making it difficult to answer questions like "what is our true cost per resolution?" or "how did today's AI model update affect human agent utilization?" The unified analytics layer resolves this by creating a single data model that spans both workforce populations.

The AI Scaffolding Framework provides a structured approach to building this technology architecture incrementally, allowing organizations to evolve from bolt-on AI integration to a fully native architecture over time.

Organizational Design

Where the AI-native WFM function sits in the organizational hierarchy is a strategic decision with significant implications for effectiveness.

Under Operations

Placing AI-native WFM under the operations function (typically under a VP of Operations or COO) maintains alignment with service delivery but may create tension with technology teams that control AI platforms. This model works when the operations leader has sufficient technical fluency to manage the AI capacity dimension and when the organization has strong cross-functional coordination mechanisms.

Under Technology

Placing AI-native WFM under the technology function aligns AI capacity management with AI engineering but risks disconnecting workforce planning from operational reality. Technology leaders may optimize for AI performance metrics without sufficient attention to human workforce dynamics, customer experience implications, or operational cost structures.

Under a New Function

Some forward-looking organizations are creating a new function — variously called "Workforce Intelligence," "Blended Operations," or "Capacity & AI Operations" — that reports directly to the C-suite and owns the full scope of blended workforce orchestration. This model avoids the compromises of placing AI-native WFM under either operations or technology but requires executive sponsorship and organizational appetite for structural change.

The Organizational Change Management for AI Workforce Transitions literature suggests that the optimal design depends on organizational maturity: operations-aligned placement works at earlier maturity levels, while dedicated function placement becomes advantageous as AI containment exceeds 50% and the complexity of blended orchestration warrants specialized leadership.

Transition Roadmap

Moving from traditional WFM to AI-native WFM is not a single transformation — it is a multi-phase evolution that must be sequenced carefully to avoid operational disruption.

Phase 1: Instrumentation (Months 1–6)

Build the measurement foundation. Implement containment tracking by contact type. Begin measuring cost per resolution across channels. Establish baseline AI CSAT delta metrics. Create visibility into AI platform capacity and performance. No organizational changes yet — this phase is about generating the data that will inform all subsequent decisions.

Phase 2: Integration (Months 6–18)

Connect WFM and AI operations. Build API integrations between WFM platform and AI orchestration layer. Begin incorporating containment forecasts into staffing models. Pilot real-time capacity rebalancing in a limited scope. Create the Containment Analyst role (often by evolving an existing senior WFM analyst). Begin cross-training WFM staff on AI platform fundamentals.

Phase 3: Redesign (Months 18–30)

Restructure the WFM function for AI-native operation. Implement the full new role structure (AI Fleet Manager, Human-AI Experience Designer, Workforce Intelligence Analyst). Migrate from batch to continuous planning for at least the AI-impacted portion of the workforce. Deploy the unified analytics layer. Shift primary metrics from traditional to blended measures. This is the phase where organizational design decisions (placement under operations, technology, or a new function) must be made.

Phase 4: Optimization (Months 30+)

Operate the AI-native WFM function at full capability. Continuously optimize the human-AI work allocation. Expand AI capacity planning to include emerging AI capabilities (multimodal, proactive outreach). Develop predictive models for containment trajectory and workforce composition evolution. At this phase, the WFM function is operating in the "sense-respond-optimize" continuous loop rather than traditional batch cycles.

Autor (2015) noted that the history of technology adoption in labor markets follows a consistent pattern: initial displacement anxiety, followed by task restructuring, followed by the emergence of new complementary roles and capabilities.[5] The transition roadmap above reflects this pattern applied specifically to the WFM function.

Risks and Resistance

Job Displacement Fears

The most immediate source of resistance to AI-native WFM is the fear — held by both frontline agents and WFM professionals — that AI will eliminate their jobs. This fear is not unfounded: as AI containment increases, fewer human agents are needed for routine contacts, and some traditional WFM analyst tasks (basic forecasting, schedule generation) are increasingly automated. AI and Employment research suggests that the net effect is role transformation rather than elimination, but this is cold comfort to individuals whose specific tasks are being automated.

Effective change management requires honest communication: some roles will change substantially, some will be eliminated, and new roles will be created. The transition roadmap's phased approach allows the organization to retrain and redeploy staff rather than executing mass displacement. Organizations that attempt to implement AI-native WFM without a credible workforce transition plan will face resistance that can delay or derail the transformation.

Skill Gaps

AI-native WFM requires skills that most current WFM professionals do not possess: AI platform management, data science, API integration, real-time systems thinking. Closing these skill gaps requires sustained investment in training and, in many cases, hiring from adjacent fields (data engineering, AI operations, product management). The AI Scaffolding Framework addresses this by providing structured capability-building paths that parallel the technology evolution.

Organizational Inertia

Many contact center organizations have operated with essentially the same WFM model for 20+ years. The processes, tools, vendor relationships, and organizational structures are deeply embedded. Shifting to AI-native WFM requires changing not just technology but mental models — how leaders think about capacity, how planners think about their scope, how the organization measures success. Bessen (2019) documented that organizational adjustment to new technology typically lags the technology itself by 5–10 years, suggesting that the primary bottleneck in AI-native WFM adoption will be organizational, not technical.[6]

Regulatory and Ethical Considerations

As AI handles a larger proportion of customer interactions, regulatory scrutiny increases. Financial services, healthcare, and government sectors face compliance requirements around AI transparency, data privacy, and human oversight that constrain how aggressively organizations can pursue AI-native WFM. The WFM function must incorporate regulatory compliance into its planning — not as an afterthought, but as a core planning constraint alongside service level and cost targets.

See Also

- AI in Workforce Management

- Agentic AI Workforce Planning

- Workforce Planning with AI Agents

- AI Agent Orchestration for WFM

- Human AI Blended Staffing Models

- Three-Pool Architecture

- AI Scaffolding Framework

- WFM Roles

- WFM Labs Maturity Model™

- Organizational Change Management for AI Workforce Transitions

- AI and Employment

References

[1] Davenport, T. H., & Ronanki, R. (2018). Artificial intelligence for the real world. Harvard Business Review, 96(1), 108–116.

[2] Willcocks, L. P., Lacity, M. C., & Craig, A. (2015). The IT Function and Robotic Process Automation. The London School of Economics and Political Science.

[3] McKinsey & Company. (2024). The Economics of AI in Customer Service Operations. McKinsey Digital.

[4] Deloitte. (2025). Workforce Management Technology Assessment: The Shift to Continuous Planning. Deloitte Insights.

[5] Autor, D. H. (2015). Why are there still so many jobs? The history of workplace automation. Journal of Economic Perspectives, 29(3), 3–30.

[6] Bessen, J. (2019). AI and Jobs: The Role of Demand. NBER Working Paper No. 24235. National Bureau of Economic Research.