Rostering

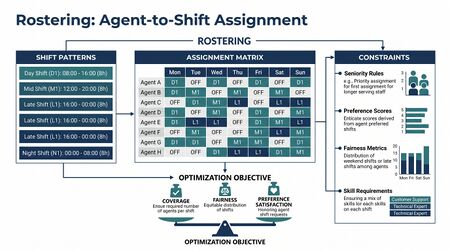

Rostering is the assignment of specific named employees to the shift slots produced by Schedule Generation. Schedule generation answers "how many agents on which shifts?" Rostering answers "who, by name, on which shift?" The two problems are related but mathematically distinct: schedule generation is a coverage-and-cost optimization over an anonymous pool; rostering is a bipartite assignment problem over identified individuals with preferences, fairness obligations, and contractual entitlements[1].

The distinction matters operationally. WFM teams that conflate the two never actually solve the rostering problem: they produce schedules that satisfy aggregate coverage but burden specific agents unfairly. Koole's Call Center Optimization (chapter 7) treats them as separate stages for exactly this reason: solve coverage first, then solve assignment.

What practitioners build

A rostering process produces three deliverables every planning period:

- The roster itself — for each shift slot generated by schedule generation, the named employee assigned to that slot for the planning period (typically a week, sometimes two or four weeks).

- An unrosterable list — shift slots that the rostering algorithm could not fill given the available employee pool, the constraints, and the preferences. Each unrosterable shift triggers an escalation path: voluntary overtime offer, temporary staff request, or coverage gap acceptance.

- A fairness report — distribution metrics across the workforce showing how undesirable shifts (weekends, nights, holidays, premium-pay-but-unwanted slots) were allocated. Without this report, fairness drift is invisible until it surfaces as a grievance.

The roster is the artifact employees actually see. Schedule generation lives in the WFM team's spreadsheets; the roster is what shows up in the agent's portal Monday morning.

Math: the bipartite assignment formulation

Let $E$ be the set of employees and $S$ the set of shift slots produced by schedule generation. Define:

- $x_{es}$: binary variable, 1 if employee $e$ is assigned to slot $s$, 0 otherwise

- $p_{es}$: preference score, how much employee $e$ values being assigned to slot $s$ (positive for desired, negative for undesired)

- $f_{es}$: fairness score, how much assigning slot $s$ to employee $e$ deviates from their fair share of undesirable shifts (positive when it corrects historical unfairness, negative when it compounds it)

- $\alpha, \beta$: weights balancing preference vs fairness

Maximize:

$$\sum_{e \in E} \sum_{s \in S} \left( \alpha\, p_{es} + \beta\, f_{es} \right) x_{es}$$

Subject to:

- One employee per slot: $\sum_{e \in E} x_{es} = 1$ for every $s \in S$ (or $\sum_{e \in E} x_{es} \le 1$ if some slots may go unfilled)

- Slot count per employee: $\sum_{s \in S} x_{es} = n_e$ for every $e \in E$, where $n_e$ is the number of shifts the employee's contract requires

- Skill match: $x_{es} = 0$ when employee $e$ lacks the skills slot $s$ requires

- Maximum consecutive shifts: encoded as a constraint over consecutive days

- Mandatory rest periods: minimum hours between successive shifts (typically 8-11 hours, jurisdiction-dependent)

- Availability: $x_{es} = 0$ when employee $e$ is on approved leave during the slot
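The two constraints stated only in words above (maximum consecutive shifts and mandatory rest) can be written out explicitly. The following is one common way to linearize them, offered as a sketch rather than as the formulation of any cited source. It writes $x_{es}$ for the binary variable that is 1 when employee $e$ is assigned to slot $s$, and it introduces three symbols that are assumptions for illustration: $S_d$, the slots that fall on day $d$; $K$, the maximum number of consecutive working days; and $C$, the set of slot pairs $(s, s')$ whose gap is shorter than the required rest period. The first inequality also assumes at most one shift per employee per day.

$$\sum_{d'=d}^{d+K} \; \sum_{s \in S_{d'}} x_{es} \le K \qquad \text{for every employee } e \text{ and every day } d$$

$$x_{es} + x_{es'} \le 1 \qquad \text{for every employee } e \text{ and every pair } (s, s') \in C$$

The first inequality says that in any window of $K+1$ consecutive days an employee works at most $K$ of them. The second says that two slots too close together cannot both go to the same person. Both are linear in $x_{es}$, so the model stays an integer program.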

This is an integer program. For typical contact-center scale (hundreds of employees, hundreds of slots per week) it solves in seconds with modern solvers. The structure — bipartite, mostly transportation-like — is favorable; off-the-shelf assignment algorithms apply when the additional constraints are mild, and integer programming with branch-and-bound handles the heavily constrained version.
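To make the objective concrete, here is a minimal sketch that solves a three-employee, three-slot instance by exhaustive search. It is not how a production solver works (that would be an assignment algorithm or an integer-programming solver); it only shows the weighted preference-plus-fairness objective and a hard eligibility filter in action. The function name `solve_roster`, the employee and slot names, and all scores are hypothetical, and the sketch assumes each employee takes at most one slot.

```python
from itertools import permutations

def solve_roster(employees, slots, pref, fair, eligible, alpha=0.5, beta=0.5):
    """Brute-force the best one-slot-per-employee roster (toy sizes only)."""
    best_score, best_roster = float("-inf"), None
    # Each ordering assigns the k-th listed employee to the k-th slot.
    for order in permutations(employees, len(slots)):
        pairs = list(zip(order, slots))
        # Hard constraints (skill match, availability) are encoded in `eligible`.
        if any((e, s) not in eligible for e, s in pairs):
            continue
        score = sum(alpha * pref[e, s] + beta * fair[e, s] for e, s in pairs)
        if score > best_score:
            best_score, best_roster = score, {s: e for e, s in pairs}
    return best_roster, best_score

# Hypothetical data: one row of scores per employee, in slot order.
employees = ["ana", "ben", "cy"]
slots = ["mon_am", "mon_pm", "sat_night"]
pref_rows = {"ana": [2, 1, -2], "ben": [2, 0, -1], "cy": [1, 2, -2]}
fair_rows = {"ana": [0, 0, 1], "ben": [0, 0, -1], "cy": [0, 0, 0]}
pref = {(e, s): v for e, row in pref_rows.items() for s, v in zip(slots, row)}
fair = {(e, s): v for e, row in fair_rows.items() for s, v in zip(slots, row)}
# cy lacks the skill needed for the Saturday night slot.
eligible = {(e, s) for e in employees for s in slots} - {("cy", "sat_night")}

balanced, balanced_score = solve_roster(employees, slots, pref, fair, eligible)
pref_only, pref_only_score = solve_roster(
    employees, slots, pref, fair, eligible, alpha=1.0, beta=0.0
)
print(balanced, balanced_score)
print(pref_only, pref_only_score)
```

With preference weight only, the Saturday night slot goes to ben, who dislikes it least. With equal weights it goes to ana, because ben's negative fairness score (he has carried more than his share recently) outweighs the small preference difference.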

The classical academic literature treats variants of this as the Nurse Rostering Problem (Burke et al., 2004; the field's reference review). Nurse rostering generalizes contact-center rostering: more skill dimensions, longer planning horizons, harder regulatory constraints. The math is the same family.

Practitioner playbook

- Define preference elicitation. How will employees express which shifts they want? Typical mechanisms: ranked bidding (top-N preferred shifts), ordinal preferences (rank all shifts), categorical preferences (preferred days, preferred start time, preferred days off). Whichever mechanism is chosen, the elicitation must produce values the optimizer can consume. Capturing preference badly is the most common rostering failure mode.

- Define fairness metrics. At minimum, track: weekend distribution, night distribution, holiday distribution, undesirable-time-slot distribution, premium-pay distribution. Compute a rolling-window fairness score per employee. The fairness score becomes the fairness coefficient in the optimization objective.

- Set the preference-fairness weight. How much weight the objective puts on preference versus fairness is a values decision. Too much preference weight produces drift toward "lucky" employees who get their preferences early and stay favored. Too much fairness weight ignores employee voice. The competent default is roughly equal weights with periodic recalibration.

- Run the optimization. Modern WFM software (NICE IEX, Verint, Calabrio, Alvaria) supports rostering optimization natively; specialized tools (PlanShift, Quinyx) emphasize it. Open-source: Google OR-Tools handles bipartite assignment with constraints out of the box.

- Review the unrosterable list. Every shift the optimizer couldn't fill is a decision point. Voluntary overtime? Temp request? Manager-discretion fill? Accept the gap and re-forecast service level? Document the escalation path so it doesn't become a one-off scramble.

- Publish the fairness report. Show employees and operations leadership the distribution of undesirable shifts. Transparency is itself a fairness mechanism — invisible unfairness compounds; visible unfairness self-corrects through complaint and renegotiation.

- Capture preference outcomes. For each employee, log whether the roster gave them their preferred shifts. Use this data in the next period's preference weighting and to identify drift.
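The first two playbook steps both end in numbers the optimizer can consume, and a small sketch may help show what that means. The code below assumes one possible convention, not a standard: a top-N ranked bid is scored N for the first choice down to 1 for the last, unranked slots get a fixed penalty, and the fairness score is the workforce's average undesirable-shift count minus the employee's own count over a rolling window. The function names, the `unranked` penalty, and the sample data are all hypothetical. The fairness score here is per employee; in an optimizer it would apply to undesirable slots only and be zero elsewhere.

```python
from collections import Counter

def bid_scores(ranked_bids, all_slots, unranked=-1.0):
    """Turn a top-N ranked bid into scores: first choice gets N, last choice gets 1."""
    scores = {slot: unranked for slot in all_slots}
    for rank, slot in enumerate(ranked_bids):
        scores[slot] = float(len(ranked_bids) - rank)
    return scores

def fairness_scores(employees, history, window):
    """Fair share minus actual undesirable-shift count over the last `window` periods."""
    recent = history[-window:]
    # One entry per undesirable shift worked, so repeats count more than once.
    counts = Counter(e for period in recent for e in period)
    fair_share = sum(counts.values()) / len(employees)
    # Positive: the employee is under their share, so an undesirable slot
    # corrects past unfairness. Negative: it would compound it.
    return {e: fair_share - counts[e] for e in employees}

slots = ["mon_am", "mon_pm", "sat_night"]
ana_pref = bid_scores(["mon_am", "mon_pm"], slots)

# Each inner list names who worked an undesirable shift in that period.
history = [["cy", "cy"], ["ben", "ben"], ["ben", "ana"], ["cy", "ben"]]
fairness = fairness_scores(["ana", "ben", "cy"], history, window=3)
print(ana_pref)
print(fairness)
```

The rolling window is what makes the score forgiving: the oldest period in `history` falls outside the three-period window and no longer counts against cy.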

Centralized vs distributed rostering

The classical formulation above is centralized: the WFM team runs the optimization and publishes the result. The alternative is self-rostering (see Self-Scheduling and Flexible Workforce Models), where employees claim slots directly through a marketplace mechanism (auction, FCFS, lottery).

Trade-offs:

- Centralized rostering produces mathematically optimal assignments under the chosen objective. It scales to large operations and handles complex constraints. It treats employee preferences as inputs rather than as agency.

- Self-rostering gives employees direct control. It tends to produce higher reported satisfaction. It does not produce mathematically optimal coverage and requires fallback rules for unclaimed slots. It works best when the workforce is predominantly experienced and the constraint set is mild.

The competent practice is hybrid: centralized rostering for the bulk of slots; a self-service window after the centralized pass where employees can swap, trade, or claim unfilled slots. The hybrid captures most of the optimization benefit while preserving meaningful agent voice.
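A self-service window still has to respect the hard constraints the centralized pass enforced. As a rough sketch of that gate, the function below approves a two-way swap only if each employee has the skills for the other's slot and is not on leave for it. It deliberately ignores rest-period and consecutive-day rules, which a real check would also need. All names (`can_cover`, `swap_allowed`, the employees, skills, and slots) are hypothetical.

```python
def can_cover(employee, slot, skills, required, on_leave):
    """True if the employee has every required skill and is not on leave for the slot."""
    has_skills = required[slot] <= skills[employee]  # subset test on sets
    return has_skills and slot not in on_leave.get(employee, set())

def swap_allowed(roster, a, b, skills, required, on_leave):
    """True if employees a and b can trade slots without breaking a hard constraint."""
    return (can_cover(a, roster[b], skills, required, on_leave)
            and can_cover(b, roster[a], skills, required, on_leave))

# Hypothetical published roster and constraint data.
roster = {"ana": "sat_night", "ben": "mon_am", "cy": "mon_pm"}
skills = {"ana": {"sales", "billing"}, "ben": {"sales", "billing"}, "cy": {"billing"}}
required = {"sat_night": {"sales"}, "mon_am": {"billing"}, "mon_pm": {"billing"}}
on_leave = {"ben": {"sat_night"}}

print(swap_allowed(roster, "ben", "cy", skills, required, on_leave))   # both qualify
print(swap_allowed(roster, "ana", "cy", skills, required, on_leave))   # cy lacks sales
print(swap_allowed(roster, "ana", "ben", skills, required, on_leave))  # ben is on leave
```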

Common failure modes

- Treating rostering as data entry. WFM teams sometimes hand-assign employees to slots in a spreadsheet without any optimization or fairness logic. The result satisfies coverage but distributes pain unevenly; the most-junior or most-recently-hired employees absorb the worst shifts.

- Ignoring fairness drift. Without explicit fairness tracking, the same employees are repeatedly assigned undesirable shifts. The drift is silent; it surfaces as attrition, grievances, or sudden refusal.

- Conflating skill match with skill ranking. Slot requires Sales skill; multiple agents have it; the optimizer picks one. If the optimizer uses tenure, raw preference score, or first-fit ordering without considering skill proficiency, the most-skilled agent ends up assigned to the busiest queue every time. That's a different fairness failure — over-utilizing high-skill agents — and it requires a soft-constraint adjustment.

- Hard-constraining preferences. Treating "Mary prefers mornings" as a hard constraint produces infeasibility when many employees prefer the same shifts. Preferences belong in the soft objective; only legally protected accommodations belong in hard constraints.

- No escalation path for unrosterable shifts. The optimizer cannot fill every shift in a constrained workforce. A team without a defined voluntary-overtime / temp-fill / accept-the-gap protocol scrambles every week, and the scramble itself burns out the WFM team.

- Skipping the fairness report. Without a published distribution, employees believe the roster is unfair regardless of actual data. Publishing the data is cheap; not publishing it is expensive in trust.
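A fairness report does not need to be elaborate to be useful. The sketch below, under assumed data shapes, counts undesirable shifts per employee in each category and reports the gap between the most and least burdened employee. The function name `fairness_report`, the category labels, and the sample roster are hypothetical; the max-minus-min spread is one simple choice of metric among several, and the code assumes every rostered employee appears in `employees`.

```python
def fairness_report(assignments, categories, employees):
    """Per-category counts of undesirable shifts and the max-min spread across employees."""
    report = {}
    for category in sorted(set(categories.values())):
        counts = {e: 0 for e in employees}
        for employee, slot in assignments:
            # Slots missing from `categories` are treated as ordinary shifts.
            if categories.get(slot) == category:
                counts[employee] += 1
        spread = max(counts.values()) - min(counts.values())
        report[category] = {"counts": counts, "spread": spread}
    return report

# Hypothetical roster as (employee, slot) pairs, plus the undesirable-slot labels.
assignments = [
    ("ana", "sat_am"), ("ben", "sat_pm"), ("ben", "sun_am"),
    ("cy", "mon_am"), ("ben", "tue_night"), ("ana", "wed_night"),
]
categories = {
    "sat_am": "weekend", "sat_pm": "weekend", "sun_am": "weekend",
    "tue_night": "night", "wed_night": "night",
}
report = fairness_report(assignments, categories, ["ana", "ben", "cy"])
for category, row in report.items():
    print(category, row["counts"], "spread:", row["spread"])
```

A spread that grows period over period is the early signal of the fairness drift described above, visible well before it turns into a grievance.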

Maturity Model Position

In the WFM Labs Maturity Model™, rostering practice progresses from informal manual assignment to optimized fairness-aware allocation:

- Level 1 — Initial (Emerging Operations) — rostering is ad-hoc; the supervisor writes names on a printed schedule grid; no preference elicitation; no fairness tracking; favoritism and resentment are routine.

- Level 2 — Foundational (Traditional WFM Excellence) — basic rostering inside the WFM software; tenure or first-fit assignment; some preference capture but limited weight in the algorithm; fairness assessed informally if at all; centralized model only.

- Level 3 — Progressive (Breaking the Monolith) — formalized preference elicitation; explicit fairness metrics tracked per employee on a rolling window; preference-fairness weighting tuned to organizational values; published fairness reports; defined escalation path for unrosterable shifts; hybrid centralized-plus-self-service window.

- Level 4 — Advanced (The Ecosystem Emerges) — rostering co-optimized with skill utilization and the pool architecture; predictive fairness scoring incorporates attrition risk; rostering decisions trigger and consume signals from the analytical engine; preferences captured continuously rather than via periodic surveys.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — rostering is part of an integrated supply-demand orchestration layer where employee preferences, fairness obligations, demand variance, skill development, and career-path goals are jointly optimized; agent voice has full mathematical standing in the decision.

The cluster's pivotal lift is from Level 2 to Level 3 — the introduction of explicit fairness metrics as first-class optimization inputs. Most operations that report rostering "problems" are stuck at Level 2, attempting to solve a Level 3 problem with Level 2 tooling.

References

- Koole, G. Call Center Optimization. ccmath/book.pdf MG Books, 2013. Chapter 7 covers rostering and shift assignment; primary source for this page.

- Burke, E. K., De Causmaecker, P., Vanden Berghe, G., & Van Landeghem, H. "The state of the art of nurse rostering." Journal of Scheduling 7(6), 2004. The reference review of nurse rostering, which generalizes contact-center rostering.

- Ernst, A. T., Jiang, H., Krishnamoorthy, M., & Sier, D. "Staff scheduling and rostering: A review of applications, methods and models." European Journal of Operational Research 153(1), 2004. Comprehensive review of staff scheduling and rostering across industries.

- Cheang, B., Li, H., Lim, A., & Rodrigues, B. "Nurse rostering problems — a bibliographic survey." European Journal of Operational Research 151(3), 2003.

Tools

- Erlang Suite — supplies the per-interval staffing requirements that schedule generation transforms into shift slots; rostering operates on those slots.

- Staffing Gap Optimizer — handles the unrosterable-shift escalation: when rostering produces a coverage gap, this tool models the overtime-vs-temp trade-off.

See Also

- Scheduling Methods — overview of the scheduling cluster

- Schedule Generation — produces the slots that rostering fills

- Multi-Skill Scheduling — skill-aware coverage that rostering must respect

- Self-Scheduling and Flexible Workforce Models — distributed alternative to centralized rostering

- Schedule Maintenance — handling roster changes mid-period

- Schedule Quality Metrics — metrics that evaluate rostering output

- Adherence and Conformance — measurement of agent behavior against the roster

- Capacity Planning Methods — capacity inputs to rostering

- Probabilistic Scheduling — distributional approach to coverage planning

- Multi-Objective Optimization in Contact Center — multi-objective formulation underlying preference-fairness trade-offs

- Schedule Bidding and Preference Based Scheduling

- [1] Koole, G. (2013). "Call Center Mathematics". MG Books.