Reinforcement Learning in Workforce Operations

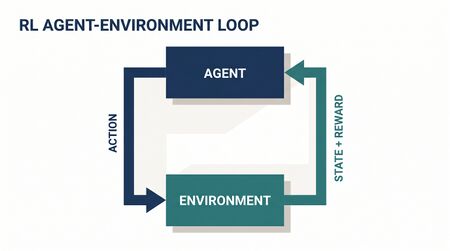

Reinforcement learning (RL) is the branch of machine learning concerned with sequential decision-making under uncertainty — an agent takes actions in an environment, observes rewards, and learns a policy that maximizes cumulative reward over time. This is precisely the structure of real-time workforce management: a routing engine assigns interactions to agents moment by moment, a scheduling system sequences decisions across planning horizons, and the outcomes of each decision depend on future arrivals that are unknown at decision time.

This page expands on the fundamentals of RL and develops its WFM applications in depth, covering Markov Decision Process formulations, value-based and policy-based methods, multi-armed bandits, deep RL, and the practical challenges of deploying RL in production contact center environments.

Overview

Traditional WFM optimization assumes a known model: forecast demand, compute requirements, solve a mathematical program. RL relaxes this assumption. The agent (in RL terminology — distinct from a contact center agent) learns from interaction with the environment rather than from a pre-specified model. This makes RL particularly suited to:

- Real-time routing — where the optimal assignment depends on the current queue state, agent availability, and anticipated future arrivals

- Schedule optimization — where policies must balance competing objectives across long horizons

- A/B testing and experimentation — where multi-armed bandits adaptively allocate traffic to the best-performing treatment

- Adaptive workforce management — where the system improves its own decision rules over time without manual recalibration

RL is not a replacement for classical optimization. It is a complement — strongest where the model is partially unknown, the environment is non-stationary, or the state space is too large for exact solution.

Mathematical Foundation

Markov Decision Process

The foundation of RL is the Markov Decision Process (MDP), defined by the tuple $(\mathcal{S}, \mathcal{A}, P, R, \gamma)$:

- $\mathcal{S}$: state space (e.g., current queue lengths, available agents by skill, time of day)

- $\mathcal{A}$: action space (e.g., assign to agent k, hold in queue, route to overflow)

- $P(s' \mid s, a)$: transition probability; given state $s$ and action $a$, the probability of reaching state $s'$

- $R(s, a)$: immediate reward (e.g., negative cost: penalties for wait time, idle time, skill mismatch)

- $\gamma \in [0, 1)$: discount factor, weighting immediate vs. future rewards

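A policy $\pi(a \mid s)$ maps states to action probabilities. The objective is to find the policy $\pi^*$ that maximizes expected discounted cumulative reward:

$$\pi^* = \arg\max_{\pi} \; \mathbb{E}_{\pi}\left[\sum_{t=0}^{\infty} \gamma^t R(s_t, a_t)\right]$$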

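The optimal value function $V^*$ satisfies the Bellman optimality equation:

$$V^*(s) = \max_{a \in \mathcal{A}} \left[ R(s, a) + \gamma \sum_{s' \in \mathcal{S}} P(s' \mid s, a)\, V^*(s') \right]$$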
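The following sketch makes the Bellman optimality equation concrete. It solves the equation by value iteration when $P$ and $R$ are known. That is the classical dynamic-programming setting, which RL relaxes. The two-state queue MDP (short vs. long queue, hold vs. route to overflow) and all of its numbers are hypothetical and chosen only for illustration.

```python
import numpy as np


def value_iteration(P, R, gamma=0.9, tol=1e-8, max_iter=10_000):
    """Iterate V(s) <- max_a [R(s,a) + gamma * sum_s' P(s'|s,a) V(s')] to a fixed point.

    P: array of shape (S, A, S), P[s, a, s2] = probability of moving from s to s2 under a.
    R: array of shape (S, A), expected immediate reward for taking a in s.
    Returns the approximate optimal values V* and a greedy policy (one action per state).
    """
    V = np.zeros(P.shape[0])
    for _ in range(max_iter):
        Q = R + gamma * (P @ V)      # shape (S, A): one-step lookahead values
        V_new = Q.max(axis=1)        # Bellman optimality backup
        converged = np.max(np.abs(V_new - V)) < tol
        V = V_new
        if converged:
            break
    policy = (R + gamma * (P @ V)).argmax(axis=1)
    return V, policy


# Hypothetical 2-state, 2-action example: state 0 = short queue, 1 = long queue;
# action 0 = hold in queue, action 1 = route to overflow (costly but drains the queue).
P = np.array([
    [[0.80, 0.20], [0.95, 0.05]],   # from short queue: hold, overflow
    [[0.30, 0.70], [0.80, 0.20]],   # from long queue: hold, overflow
])
R = np.array([
    [0.0, -2.0],    # short queue: holding costs nothing, overflow costs 2
    [-5.0, -3.0],   # long queue: holding costs 5 (wait penalty), overflow costs 3
])
V_star, policy = value_iteration(P, R)
print(V_star, policy)
```

The returned `policy` is greedy with respect to $V^*$, which is how an optimal policy is read off once the value function is known.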

Q-Learning

When transition probabilities are unknown (the typical case in live WFM systems), Q-learning learns the state-action value function $Q(s, a)$ directly from experience:

$$Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right]$$

where $\alpha$ is the learning rate, $r$ is the observed reward, and $s'$ is the next state. Q-learning is model-free (no explicit transition model needed) and off-policy (it learns about the optimal policy while following an exploratory one).

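Convergence to the optimal Q-function is guaranteed under standard conditions: every state-action pair must be visited infinitely often, and the learning rate must decay appropriately ($\sum_t \alpha_t = \infty$, $\sum_t \alpha_t^2 < \infty$). Even so, convergence can be slow when the state space is large, which it typically is in WFM.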
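Below is a minimal tabular Q-learning sketch. It runs on a hypothetical toy queue (queue length 0 to 4; actions hold or route to overflow), not a real contact center. For simplicity it uses a constant learning rate $\alpha$ and fixed $\epsilon$-greedy exploration. This is common in practice but does not meet the decaying-learning-rate condition for guaranteed convergence. The `step` function and its parameters are assumptions made up for the example.

```python
import numpy as np


def step(state, action, rng, max_queue=4, p_arrival=0.6, overflow_cost=1.5):
    """Hypothetical toy queue used only for illustration.

    Each step: Binomial(2, p_arrival) arrivals, one interaction served by the base team,
    plus one more served if the action is 1 (route to overflow).
    Reward = -(queue length after the step) - overflow_cost * action.
    """
    arrivals = int(rng.binomial(2, p_arrival))
    served = 1 + action
    next_state = min(max(state + arrivals - served, 0), max_queue)
    reward = -float(next_state) - overflow_cost * action
    return next_state, reward


def q_learning(n_steps=50_000, alpha=0.1, gamma=0.9, epsilon=0.1, max_queue=4, seed=0):
    rng = np.random.default_rng(seed)
    Q = np.zeros((max_queue + 1, 2))   # Q[s, a] for queue length s and action a
    state = 0
    for _ in range(n_steps):
        # Behaviour policy: epsilon-greedy (exploratory)
        if rng.random() < epsilon:
            action = int(rng.integers(2))
        else:
            action = int(Q[state].argmax())
        next_state, reward = step(state, action, rng, max_queue=max_queue)
        # Off-policy target: bootstrap from the greedy (max) action in the next state
        td_target = reward + gamma * Q[next_state].max()
        Q[state, action] += alpha * (td_target - Q[state, action])
        state = next_state
    return Q


Q = q_learning()
print("greedy action per queue length:", Q.argmax(axis=1))
```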

Policy Gradient Methods

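Instead of learning a value function and deriving a policy from it, policy gradient methods directly parameterize the policy $\pi_\theta(a \mid s)$. They optimize the parameters $\theta$ by gradient ascent on $J(\theta) = \mathbb{E}_{\pi_\theta}\left[\sum_{t=0}^{T-1} \gamma^t r_t\right]$, where $r_t = R(s_t, a_t)$:

$$\nabla_\theta J(\theta) = \mathbb{E}_{\pi_\theta}\left[\sum_{t=0}^{T-1} \gamma^t\, G_t\, \nabla_\theta \log \pi_\theta(a_t \mid s_t)\right], \qquad G_t = \sum_{k=t}^{T-1} \gamma^{k-t} r_k$$

Estimating this expectation from sampled episodes gives the REINFORCE estimator (Williams, 1992). Key advantages for WFM: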

- Handles continuous action spaces naturally (e.g., fractional agent allocation across skill groups)

- Can enforce constraints through the policy parameterization (e.g., policies that never violate labor rules)

- Works with stochastic policies, which provide built-in exploration
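The REINFORCE update can be sketched for the simplest case, one-step episodes, where $G_0$ is just the observed reward and $\gamma^0 = 1$. Several pieces are illustrative assumptions:

- the softmax policy over three hypothetical routing strategies;
- their success probabilities;
- the running-average baseline. This is a simple variance-reduction device, which actor-critic methods generalize with a learned critic.

```python
import numpy as np


def softmax(z):
    z = z - z.max()              # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def reinforce_one_step(success_probs, n_episodes=5_000, lr=0.1, seed=0):
    """REINFORCE with a softmax policy on one-step episodes (so G_0 is just the reward).

    success_probs: hypothetical probability that each action (e.g. a routing strategy)
    yields a resolved interaction, which earns reward 1 (otherwise 0).
    """
    rng = np.random.default_rng(seed)
    theta = np.zeros(len(success_probs))   # policy parameters: one logit per action
    baseline = 0.0                         # running mean reward, subtracted to reduce variance
    for _ in range(n_episodes):
        pi = softmax(theta)
        a = int(rng.choice(len(pi), p=pi))           # sample from the stochastic policy
        G = float(rng.random() < success_probs[a])   # episode return
        grad_log_pi = -pi                            # grad of log pi(a) = onehot(a) - pi
        grad_log_pi[a] += 1.0
        theta += lr * (G - baseline) * grad_log_pi   # stochastic gradient ascent step
        baseline += 0.01 * (G - baseline)
    return softmax(theta)


probs = reinforce_one_step([0.2, 0.8, 0.4])
print(np.round(probs, 3))
```

Subtracting the baseline leaves the gradient estimate unbiased (its expectation is unchanged) while shrinking its variance.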

The actor-critic architecture combines both: the actor is a policy gradient model; the critic is a value function that reduces variance in the gradient estimate. Proximal Policy Optimization (PPO) and Soft Actor-Critic (SAC) are the workhorses of modern policy gradient RL.

Multi-Armed Bandits

The multi-armed bandit (MAB) is a simplified RL problem: a single state, multiple actions, and the goal of maximizing cumulative reward over T rounds. This maps directly to WFM experimentation: which routing strategy, queue configuration, or script variant performs best?

Thompson Sampling: Maintain a posterior distribution over each arm's reward. At each round, sample from each posterior and play the arm with the highest sample. This naturally balances exploration (uncertain arms get sampled occasionally) and exploitation (high-reward arms get sampled frequently).

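For Bernoulli rewards (e.g., FCR success/failure), each arm's unknown success probability $\theta_k$ is given a Beta posterior:

$$\theta_k \sim \mathrm{Beta}(\alpha_k, \beta_k)$$

After observing success on arm $k$: $\alpha_k \leftarrow \alpha_k + 1$. After failure: $\beta_k \leftarrow \beta_k + 1$.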
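The Beta-Bernoulli update above is short enough to sketch in full. The sketch assumes a uniform $\mathrm{Beta}(1, 1)$ prior. It simulates FCR outcomes from hypothetical true rates for three treatments; in production, those outcomes would come from resolved interactions instead.

```python
import numpy as np


def thompson_sampling(true_fcr, n_rounds=10_000, seed=0):
    """Beta-Bernoulli Thompson Sampling starting from a uniform Beta(1, 1) prior per arm.

    true_fcr: hypothetical FCR rate of each treatment, used only to simulate outcomes.
    """
    rng = np.random.default_rng(seed)
    k = len(true_fcr)
    alpha = np.ones(k)                  # 1 + observed successes per arm
    beta = np.ones(k)                   # 1 + observed failures per arm
    for _ in range(n_rounds):
        draws = rng.beta(alpha, beta)   # one sample from each arm's posterior
        arm = int(draws.argmax())       # play the arm with the highest sample
        if rng.random() < true_fcr[arm]:
            alpha[arm] += 1             # success: alpha_k <- alpha_k + 1
        else:
            beta[arm] += 1              # failure: beta_k <- beta_k + 1
    return alpha, beta


alpha, beta = thompson_sampling([0.70, 0.85, 0.75])
pulls = alpha + beta - 2
print("pulls per arm:", pulls, "posterior means:", np.round(alpha / (alpha + beta), 3))
```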

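Upper Confidence Bound (UCB): At round $t$, play the arm that maximizes:

$$\hat{\mu}_k + c \sqrt{\frac{\ln t}{n_k}}$$

where $\hat{\mu}_k$ is the empirical mean reward of arm $k$, $n_k$ is the number of times it has been played, and $c$ controls the exploration bonus. Setting $c = \sqrt{2}$ gives UCB1 (Auer et al., 2002). UCB is deterministic and easy to implement. It also has strong regret bounds: for UCB1, expected regret grows only logarithmically in $T$.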
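Here is a sketch of UCB on simulated Bernoulli outcomes, with $c = \sqrt{2}$ (the UCB1 setting) as the default. Each arm is played once first so that $n_k > 0$ before the bonus is computed. The true means are hypothetical.

```python
import math
import random


def ucb(true_means, n_rounds=10_000, c=math.sqrt(2), seed=0):
    """UCB with bonus c * sqrt(ln t / n_k); c = sqrt(2) corresponds to UCB1.

    true_means: hypothetical Bernoulli success rates, used only to simulate rewards.
    Returns how many times each arm was played.
    """
    rng = random.Random(seed)
    k = len(true_means)
    counts = [0] * k       # n_k
    sums = [0.0] * k       # total reward per arm
    for t in range(1, n_rounds + 1):
        if t <= k:
            arm = t - 1    # play every arm once so that n_k > 0
        else:
            arm = max(
                range(k),
                key=lambda i: sums[i] / counts[i] + c * math.sqrt(math.log(t) / counts[i]),
            )
        reward = 1.0 if rng.random() < true_means[arm] else 0.0
        counts[arm] += 1
        sums[arm] += reward
    return counts


counts = ucb([0.70, 0.85, 0.75])
print("pulls per arm:", counts)
```

Arm selection involves no sampling. The only randomness here is the simulated reward, which is what "deterministic" refers to above.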

Deep Reinforcement Learning

When the state space is too large for tabular methods (typical in WFM — consider all combinations of queue lengths, agent states, time features), neural networks approximate the value function or policy:

- Deep Q-Network (DQN): A neural network approximates the Q-function. Experience replay and target networks stabilize training.

- Deep policy gradient: Neural networks parameterize $\pi_\theta(a \mid s)$ directly. PPO clips the policy update to prevent destructive large steps.

- Model-based deep RL: A neural network learns the environment dynamics $P(s' \mid s, a)$, enabling planning within a learned model. Particularly relevant for WFM, where the "environment" (arrival processes, agent behavior) has learnable structure.
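PPO's clipping can be shown in isolation. The function below is a sketch of the clipped surrogate objective from Schulman et al. (2017), computed for a batch of log-probabilities and advantage estimates. It omits the value-function and entropy terms. A real implementation would compute it inside an autodiff framework so that it can be differentiated with respect to the policy parameters.

```python
import numpy as np


def ppo_clip_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """PPO clipped surrogate objective (maximized during training), averaged over a batch.

    ratio = pi_new(a|s) / pi_old(a|s); the objective is
    mean(min(ratio * A, clip(ratio, 1 - eps, 1 + eps) * A)).
    """
    ratio = np.exp(np.asarray(logp_new) - np.asarray(logp_old))
    advantages = np.asarray(advantages, dtype=float)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantages
    return float(np.mean(np.minimum(unclipped, clipped)))


# An action whose probability rose from 0.5 to 0.9 with positive advantage:
# the ratio 1.8 is clipped to 1.2, so the objective gives no extra credit beyond that.
print(ppo_clip_objective(np.log([0.9]), np.log([0.5]), [1.0]))
```

Taking the minimum makes the objective a pessimistic bound. Large policy moves earn no extra credit when the advantage is positive, and still count fully against the update when it is negative.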

WFM Applications

Real-Time Routing as MDP

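State: $s_t = (\mathbf{q}_t, \mathbf{u}_t, h_t)$, where $\mathbf{q}_t$ holds the queue length of each skill group, $\mathbf{u}_t$ the availability/status of each agent, and $h_t$ the current time interval.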

Actions: Assign arriving interaction to agent j, hold in queue, route to overflow, offer callback.

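Reward: $r_t = -\left(w_{\text{wait}} W_t + w_{\text{idle}} I_t + w_{\text{skill}} M_t\right)$, where $W_t$, $I_t$, and $M_t$ are the customer wait time, agent idle time, and skill mismatches incurred in interval $t$. The weights $w$ encode their relative business cost.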

A Q-learning or actor-critic agent can learn routing policies that outperform static rules (longest-idle, most-skilled) by adapting to real-time conditions. The key advantage is that RL routing accounts for downstream effects: assigning a bilingual agent to an English call now may leave Spanish callers waiting later.
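The sketch below illustrates a negative-cost routing reward of the kind introduced in the MDP definition (penalties for wait time, idle time, and skill mismatch) by scoring one interval's outcome. The `IntervalOutcome` fields and the weights are hypothetical. In practice these weights are where WFM priorities get encoded.

```python
from dataclasses import dataclass


@dataclass
class IntervalOutcome:
    """Hypothetical per-interval quantities observed after routing decisions."""
    wait_seconds: float     # W_t: total customer wait accrued in the interval
    idle_seconds: float     # I_t: total agent idle time in the interval
    skill_mismatches: int   # M_t: interactions handled by a less-suited skill group


def routing_reward(o: IntervalOutcome, w_wait=1.0, w_idle=0.2, w_skill=30.0) -> float:
    """Negative weighted cost; the weights are illustrative, not recommended values."""
    return -(w_wait * o.wait_seconds + w_idle * o.idle_seconds + w_skill * o.skill_mismatches)


print(routing_reward(IntervalOutcome(wait_seconds=60.0, idle_seconds=100.0, skill_mismatches=1)))
```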

Schedule Optimization

Shift scheduling as RL: each "episode" is a planning period. The agent sequentially assigns shifts to employees, observing the evolving coverage profile and constraint satisfaction. The reward penalizes understaffing, overstaffing, and constraint violations.

Policy gradient methods are natural here because the action space (shift assignment for each employee) is large and structured. The policy can be parameterized to respect hard constraints (labor law, consecutive working days) by construction.
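One common way to respect hard constraints by construction is action masking. Infeasible actions receive a logit of $-\infty$, so the policy assigns them probability exactly zero. The sketch assumes two hypothetical inputs: a feasibility mask computed elsewhere (for example, from labor rules) and `logits` produced by some policy network.

```python
import numpy as np


def masked_shift_policy(logits, feasible):
    """Softmax over candidate shifts where infeasible shifts get probability exactly 0.

    logits:   scores from a (hypothetical) policy network, one per candidate shift.
    feasible: booleans, False where the shift would break a hard rule
              (e.g. a labor-law rest period or a consecutive-working-day limit).
    """
    logits = np.asarray(logits, dtype=float)
    feasible = np.asarray(feasible, dtype=bool)
    if not feasible.any():
        raise ValueError("no feasible shift for this employee")
    masked = np.where(feasible, logits, -np.inf)
    masked = masked - masked[feasible].max()   # stabilize exp(); -inf stays -inf
    probs = np.exp(masked)                     # exp(-inf) == 0.0
    return probs / probs.sum()


p = masked_shift_policy([1.0, 2.0, 3.0], [True, False, True])
print(np.round(p, 3))
```

Because the mask is applied inside the policy, sampling can never produce a rule-breaking assignment. The reward therefore does not need to penalize such violations for these particular constraints.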

A/B Testing with Bandits

Traditional A/B testing fixes the traffic split (typically 50/50) in advance and waits for statistical significance. Bandits adapt the split as evidence accumulates:

| Method | Exploration Strategy | Best For |
|---|---|---|
| Fixed A/B test | Equal allocation | Clean causal estimates |
| Thompson Sampling | Posterior sampling | Rapid convergence with uncertainty |
| UCB | Optimistic estimates | Strong worst-case guarantees |
| Epsilon-greedy | Random exploration | Simple implementation |

WFM applications: testing new IVR menus, routing algorithms, hold music, callback offer timing, or coaching interventions. Bandits minimize the "regret" — the cost of traffic allocated to inferior treatments during the experiment.
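The regret comparison can be sketched numerically. The code below computes expected (pseudo-)regret, $\sum_k n_k(\mu^* - \mu_k)$, under two allocation schemes: a fixed 50/50 split, and a simple $\epsilon$-greedy bandit. Both serve two hypothetical treatments with FCR rates of 70% and 85%. The rates, the horizon, and $\epsilon = 0.1$ are assumptions for illustration.

```python
import numpy as np


def pseudo_regret(pulls, true_means):
    """Expected regret of an allocation: sum_k n_k * (mu_best - mu_k)."""
    true_means = np.asarray(true_means, dtype=float)
    return float(np.sum(np.asarray(pulls) * (true_means.max() - true_means)))


def epsilon_greedy(true_means, n_rounds=10_000, epsilon=0.1, seed=0):
    """Epsilon-greedy allocation; true_means are hypothetical and only simulate outcomes."""
    rng = np.random.default_rng(seed)
    k = len(true_means)
    counts = np.zeros(k, dtype=int)
    sums = np.zeros(k)
    for _ in range(n_rounds):
        if counts.min() == 0 or rng.random() < epsilon:
            arm = int(rng.integers(k))            # explore: uniform random arm
        else:
            arm = int(np.argmax(sums / counts))   # exploit: best empirical mean so far
        counts[arm] += 1
        sums[arm] += float(rng.random() < true_means[arm])
    return counts


means = [0.70, 0.85]   # hypothetical FCR of control vs. new IVR menu
fixed = pseudo_regret([5_000, 5_000], means)
adaptive = pseudo_regret(epsilon_greedy(means), means)
print(f"fixed 50/50 regret: {fixed:.0f}, epsilon-greedy regret: {adaptive:.0f}")
```

The fixed split sends half of all traffic to the inferior treatment for the whole experiment. The bandit shifts most traffic to the better arm once the evidence favors it.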

Exploration-Exploitation in Production

The central tension: exploring suboptimal actions generates information but costs service quality. In a live contact center, poor routing decisions create real customer wait times.

Mitigation strategies:

- Constrained exploration: Only explore when queue depth is below a safety threshold

- Batch exploration: Collect data during off-peak periods, update policies before peak

- Transfer learning: Pre-train on historical data or simulation, fine-tune with limited live exploration

- Conservative policy updates: PPO-style clipping ensures the new policy stays close to the proven baseline
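Constrained exploration can be as simple as gating the exploration step on queue state. This sketch assumes an $\epsilon$-greedy policy and a hypothetical queue-depth threshold. `greedy_action` stands in for whatever the current learned policy recommends.

```python
import random


def choose_action(greedy_action, candidate_actions, queue_depth, rng,
                  epsilon=0.05, safety_threshold=10):
    """Constrained epsilon-greedy: explore only while the queue is below a safety threshold.

    epsilon and safety_threshold are hypothetical tuning values.
    """
    if queue_depth < safety_threshold and rng.random() < epsilon:
        return rng.choice(candidate_actions)   # explore (queue is shallow enough)
    return greedy_action                       # exploit the current best policy


rng = random.Random(42)
actions = ["billing", "technical", "general", "hold"]
print(choose_action("general", actions, queue_depth=3, rng=rng))
```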

Worked Example

Problem: A contact center has 3 skill groups (billing, technical, general) and wants to learn a routing policy that minimizes average wait time while maintaining FCR above 80%.

Setup:

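- State: $s_t = (\mathbf{q}_t, \mathbf{u}_t, h_t)$. Here $\mathbf{q}_t$ gives the queue length and $\mathbf{u}_t$ the number of available agents for each of the three skill groups, and $h_t$ is the current time interval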

- Actions: Route to {billing specialist, technical specialist, general agent, hold}

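- Reward: $r_t = -W_t - \lambda \max\left(0,\ 0.80 - \widehat{\mathrm{FCR}}_t\right)$. Here $W_t$ is the wait time incurred at step $t$, $\widehat{\mathrm{FCR}}_t$ is the running FCR rate, and $\lambda > 0$ sets the penalty for falling below the 80% target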

Simulation training:

- Build a discrete-event simulator calibrated to historical arrival patterns, AHT distributions, and transfer rates

- Train a DQN agent for 500,000 episodes in simulation

- Evaluate against the production rule (longest-idle routing) over 10,000 simulated days

Results:

| Metric | Longest-Idle | RL Policy | Change |
|---|---|---|---|
| Avg wait time | 48 sec | 37 sec | −23% |
| FCR rate | 81% | 84% | +3pp |
| Agent utilization | 78% | 76% | −2pp (acceptable) |
| Transfer rate | 14% | 9% | −5pp |

Deployment: Shadow mode for 2 weeks (RL policy recommends, production rule executes, outcomes logged). Then gradual rollout: 10% → 25% → 50% → 100% with automatic rollback if wait time exceeds baseline + 10%.
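The rollout and rollback rule above can be written as a small control loop. The sketch assumes average wait is measured once per stage. The per-stage measurements are hypothetical; the 48-second baseline comes from the longest-idle column of the results table.

```python
def staged_rollout(baseline_wait, observed_wait, stages=(0.10, 0.25, 0.50, 1.00), tolerance=0.10):
    """Advance the RL policy's traffic share stage by stage; roll back on a wait-time breach.

    observed_wait[i] is the average wait (seconds) measured while at stages[i].
    Returns (final traffic share for the RL policy, whether a rollback happened).
    """
    limit = baseline_wait * (1.0 + tolerance)      # baseline + 10%
    share = 0.0
    for stage, wait in zip(stages, observed_wait):
        if wait > limit:
            return 0.0, True                       # automatic rollback to the production rule
        share = stage
    return share, False


# Baseline 48 s from the longest-idle rule; stage measurements below are hypothetical.
print(staged_rollout(48.0, [39.0, 38.5, 37.2, 37.0]))   # every stage stays under the limit
print(staged_rollout(48.0, [39.0, 54.0]))               # 54 s exceeds the 52.8 s limit at 25%
```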

Practical Challenges

- Sample efficiency: Deep RL methods often need millions of interactions to converge, while a contact center generates thousands of interactions per day, not millions. Simulation pre-training is therefore essential.

- Sim-to-real gap: Simulators simplify agent behavior, abandonment, and arrival correlations. Policies trained in simulation may underperform in production. Domain randomization (varying simulator parameters) partially mitigates this.

- Reward shaping: The reward function encodes business priorities. A misspecified reward (e.g., optimizing utilization without a wait-time penalty) produces a policy that technically maximizes reward but destroys service quality. Reward design requires WFM domain expertise, not just ML expertise.

- Non-stationarity: Contact center dynamics change — new products launch, agent skills evolve, customer behavior shifts. RL policies need continuous retraining or meta-learning approaches that adapt rapidly to distributional shifts.

- Explainability: A neural network policy that routes calls cannot explain why it chose agent 47 for this interaction. In regulated environments or union shops, this opacity may be unacceptable. Attention mechanisms and SHAP-based post-hoc explanations partially address this.
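Domain randomization, mentioned under the sim-to-real gap, amounts to drawing fresh simulator parameters for each training episode. The parameter names and ranges below are hypothetical placeholders. Real ranges would come from the historical variability of arrivals, AHT, abandonment, and transfers.

```python
import random


def sample_simulator_params(rng):
    """Draw one randomized simulator configuration (ranges are hypothetical).

    Training each episode on a fresh draw exposes the policy to a spread of
    conditions instead of a single calibrated point estimate.
    """
    return {
        "arrival_rate_multiplier": rng.uniform(0.8, 1.2),   # scale on the forecast arrival rate
        "aht_multiplier": rng.uniform(0.9, 1.15),            # scale on handle-time distributions
        "mean_patience_sec": rng.uniform(60.0, 240.0),       # abandonment patience
        "transfer_rate": rng.uniform(0.08, 0.18),            # share of interactions transferred
    }


rng = random.Random(7)
episode_configs = [sample_simulator_params(rng) for _ in range(3)]
for cfg in episode_configs:
    print({name: round(value, 3) for name, value in cfg.items()})
```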

Maturity Model Position

- Level 2 (Developing): Rules-based routing with manual tuning (longest-idle, most-skilled-first)

- Level 3 (Advanced): Data-driven rule selection; basic A/B testing of routing strategies

- Level 4 (Leading): Bandit-based adaptive experimentation; RL-trained routing policies deployed via simulation pre-training

- Level 5 (Innovating): End-to-end deep RL routing with continuous online learning; meta-RL that adapts to new queue types without retraining; RL-driven schedule optimization integrated with real-time routing

See Also

- Markov Chains and Decision Processes in WFM

- Dynamic Programming for WFM

- Artificial Intelligence in WFM

- Machine Learning for Workforce Forecasting

- Simulation in Workforce Management

- Operations Research in Workforce Management

References

- Sutton, R.S. & Barto, A.G. (2018). Reinforcement Learning: An Introduction. 2nd ed. MIT Press.

- Mnih, V. et al. (2015). "Human-level control through deep reinforcement learning." Nature, 518(7540), 529-533.

- Schulman, J. et al. (2017). "Proximal Policy Optimization Algorithms." arXiv:1707.06347.

- Russo, D. et al. (2018). "A Tutorial on Thompson Sampling." Foundations and Trends in Machine Learning, 11(1), 1-96.

- Williams, R.J. (1992). "Simple statistical gradient-following algorithms for connectionist reinforcement learning." Machine Learning, 8(3-4), 229-256.

- Auer, P., Cesa-Bianchi, N. & Fischer, P. (2002). "Finite-time Analysis of the Multiarmed Bandit Problem." Machine Learning, 47(2-3), 235-256.