Markov Chains and Decision Processes in WFM

Markov Chains and Decision Processes in WFM develops the mathematics of memoryless stochastic systems and their application to workforce management. Markov chains model systems that transition between states with probabilities depending only on the current state — not the history. This property, deceptively simple, underlies queueing theory, attrition modeling, skill progression tracking, and the real-time routing decisions that determine moment-by-moment service quality.

The critical insight for WFM practitioners: every Erlang C calculation you run is a Markov chain computation. The M/M/c queue is a birth-death process — a specific type of continuous-time Markov chain. Understanding this connection transforms Erlang from a black-box calculator into a transparent model whose assumptions, limitations, and extensions become visible.

Overview

A Markov chain is a sequence of random states where the probability of transitioning to the next state depends only on the current state, not on how the system arrived there. This is the Markov property (or memorylessness):

For WFM, this means: the future evolution of a queue depends on how many calls are in the system right now — not on how they got there.

This property is both a simplification and a powerful modeling tool. It makes analysis tractable. It also forces the modeler to define "state" carefully — if history matters, the state definition must encode the relevant history.

Mathematical Foundation

Discrete-Time Markov Chains (DTMCs)

A DTMC with states is characterized by its transition matrix P, where:

Each row sums to 1 (the system must go somewhere). The k-step transition probabilities are given by the matrix power .

Example: An agent in a contact center cycles through states: Available (A), On Call (C), After-Call Work (W), On Break (B). Transition probabilities per 1-minute intervals:

| From \ To | Available | On Call | ACW | Break |

|---|---|---|---|---|

| Available | 0.70 | 0.25 | 0.00 | 0.05 |

| On Call | 0.00 | 0.75 | 0.25 | 0.00 |

| ACW | 0.60 | 0.00 | 0.30 | 0.10 |

| On Call → Break | — | — | — | — |

| Break | 0.20 | 0.00 | 0.00 | 0.80 |

This matrix encodes the operational reality: an available agent has a 25% chance of receiving a call in the next minute, a 5% chance of going on break, and a 70% chance of remaining available.

Steady-State Distribution

If a Markov chain is irreducible (every state is reachable from every other) and aperiodic (the system doesn't cycle with a fixed period), it converges to a unique steady-state distribution satisfying:

The steady-state distribution tells us the long-run fraction of time the system spends in each state. For the agent state model above, solving yields the fraction of time each agent spends available, on call, in ACW, and on break — which directly determines productive utilization and occupancy.

Continuous-Time Markov Chains (CTMCs)

In continuous time, the system can transition at any moment. Instead of a transition matrix, we have a rate matrix (or generator matrix) Q, where:

- for (transition rates between states)

- (rows sum to zero)

The time spent in state i before transitioning is exponentially distributed with rate . The steady-state satisfies .

The M/M/c Queue as a Birth-Death Process

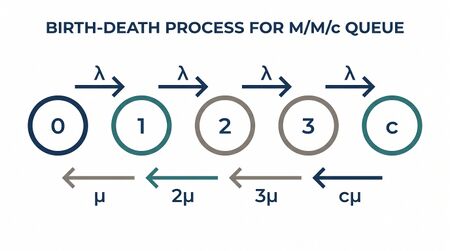

This is the connection that matters most for WFM. The M/M/c queue — the mathematical model behind Erlang C — is a birth-death process, a CTMC where transitions occur only between adjacent states.

States represent the number of customers in the system: .

- Birth rate (arrival): (constant, Poisson arrivals)

- Death rate (service completion): where c is the number of servers and μ is the per-server service rate

The rate diagram:

When , each additional customer adds one busy server, increasing the service rate. When , all servers are busy and additional customers queue — the service rate plateaus at .

Solving for the steady-state distribution of this birth-death process yields the Erlang C formula. The probability that all servers are busy and a new arrival must wait is:

where is offered load. This is not a separate formula — it is the steady-state probability of the birth-death Markov chain being in state .

Why this matters: Understanding Erlang C as a Markov chain makes its assumptions visible. The Poisson arrival assumption means the birth rate is constant and memoryless. The exponential service time assumption means the death rate from each server is memoryless. Violations of these assumptions (non-Poisson arrivals during peak ramps, heavy-tailed handle times, agent heterogeneity) are violations of the Markov chain structure, and understanding this points directly to the appropriate extensions (Erlang-A for abandonment, simulation for non-Markovian cases).

WFM Applications

Attrition Modeling with Markov Chains

Agent attrition is not a single rate — it varies by tenure. New agents (0–90 days) leave at much higher rates than tenured agents (2+ years). This is naturally modeled as a Markov chain.

States: Tenure bands — Nesting (0–30 days), Ramp (31–90 days), Developing (91–180 days), Proficient (181–365 days), Tenured (365+ days), Separated (absorbing state).

Monthly transition matrix:

| From \ To | Nesting | Ramp | Developing | Proficient | Tenured | Separated |

|---|---|---|---|---|---|---|

| Nesting | 0.00 | 0.82 | 0.00 | 0.00 | 0.00 | 0.18 |

| Ramp | 0.00 | 0.00 | 0.88 | 0.00 | 0.00 | 0.12 |

| Developing | 0.00 | 0.00 | 0.00 | 0.93 | 0.00 | 0.07 |

| Proficient | 0.00 | 0.00 | 0.00 | 0.00 | 0.96 | 0.04 |

| Tenured | 0.00 | 0.00 | 0.00 | 0.00 | 0.97 | 0.03 |

| Separated | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 1.00 |

From this matrix:

- Expected time to separation from each state can be computed using the fundamental matrix , where Q is the transient portion of the transition matrix.

- Probability of reaching tenured status from initial hiring: multiply through the survival probabilities: 0.82 × 0.88 × 0.93 × 0.96 = 0.67. Only 67% of hires reach the Tenured state.

- Headcount projection: Starting with 50 new hires, multiply the initial state vector by to project the distribution across tenure bands at month k.

See: Annual Attrition, Speed to Proficiency Curve, Onboarding Costs

Skill Proficiency Progression

The Speed to Proficiency Curve can be modeled as a Markov chain where states represent proficiency levels and transitions represent competency development.

States: Novice (handles 60% of full workload), Intermediate (80%), Proficient (95%), Expert (100%), Disengaged (absorbing — agent stops developing due to boredom or burnout).

Transition probabilities depend on coaching intensity, call complexity, and individual learning rate. The model answers: Given a cohort of 30 new agents, how many months until 80% reach Proficient? What coaching investment changes the transition probabilities enough to accelerate this?

Queue State Evolution

At the interval level, the number of calls in the system follows a Markov chain. The state at the start of each 15-minute interval — (calls in queue, agents available, agents in ACW) — determines the service level distribution for that interval.

This is the mathematical foundation of Real-Time Operations: the ROC analyst watching the wallboard is observing the state of a Markov chain and making decisions based on the current state.

Markov Decision Processes for Real-Time Routing

A Markov Decision Process (MDP) extends a Markov chain with actions and rewards:

- States: Queue conditions, agent availability, time of day

- Actions: Route call to agent A, route to agent B, hold in queue, offer callback

- Transition probabilities: — the probability of reaching state given state and action

- Rewards: — service quality score, cost, or composite metric

The optimal policy maximizes the expected cumulative reward:

where is a discount factor (how much the system values immediate vs. future performance).

WFM application: A routing engine faces a call. Three agents are available: Agent 1 (fast but low quality), Agent 2 (average), Agent 3 (slow but high quality). The MDP-optimal policy considers not just this call but the downstream impact — routing to Agent 3 ties up a slow agent, increasing wait times for subsequent calls. The optimal action depends on the current queue depth, the time of day (peak vs. off-peak), and the forecast for the next 30 minutes.

This is exactly the problem that Next Generation Routing and skill-based routing engines attempt to solve. The MDP framework provides the rigorous mathematical foundation.

Worked Example: Agent State Model and Utilization

Setup: A center has 100 agents. Each agent cycles through 4 states with continuous-time rates:

- Available → On Call: rate 12/hr (average 5 minutes between calls)

- On Call → ACW: rate 15/hr (average 4 minutes per call)

- ACW → Available: rate 30/hr (average 2 minutes ACW)

- Available → Break: rate 1/hr (average 1 break per hour of available time)

- Break → Available: rate 4/hr (average 15-minute breaks)

Rate matrix Q:

| State | Available | On Call | ACW | Break |

|---|---|---|---|---|

| Available | −13 | 12 | 0 | 1 |

| On Call | 0 | −15 | 15 | 0 |

| ACW | 30 | 0 | −30 | 0 |

| Break | 4 | 0 | 0 | −4 |

Solve with :

From the equations:

- →

- →

- →

Normalizing: → →

| State | Steady-State Probability | Agents (of 100) |

|---|---|---|

| Available | 0.408 | 40.8 |

| On Call | 0.327 | 32.7 |

| ACW | 0.163 | 16.3 |

| Break | 0.102 | 10.2 |

Interpretation:

- Productive utilization (On Call + ACW) = 49.0%. This means 49 of 100 agents are handling contacts at any moment.

- Occupancy (On Call + ACW) / (Available + On Call + ACW) = 49.0% / 89.8% = 54.6%. Occupancy excludes break time from the denominator.

- Available pool = 40.8 agents ready for the next call. This is the supply side of the Erlang C calculation.

If management wants to increase occupancy to 70%, the model shows what must change: increase the arrival rate (calls per available hour) from 12 to a higher value, or decrease ACW time. The Markov chain quantifies the exact relationship.

Maturity Model Position

Markov chain concepts map to the WFM Labs Maturity Model:

- Level 1 (Reactive): Markov structure is invisible — practitioners use Erlang calculators without knowing they are solving Markov chains.

- Level 2 (Established): Practitioners understand agent state models and can interpret utilization/occupancy through the lens of state distributions.

- Level 3 (Advanced): Attrition and proficiency modeled as explicit Markov chains with transition matrices estimated from data. Queue dynamics understood as birth-death processes.

- Level 4 (Optimized): MDP-based routing decisions. Stochastic state models inform intraday staffing adjustments. Transition probabilities updated with Bayesian methods.

- Level 5 (Autonomous): Reinforcement learning agents operate on MDP formulations, learning routing and staffing policies from data. Continuous model updating and policy improvement.

See Also

- Operations Research in Workforce Management

- Erlang C

- Erlang B

- Erlang-A

- Poisson Process in Contact Centers

- Traffic Intensity and Server Utilization

- Annual Attrition

- Speed to Proficiency Curve

- Real-Time Operations

- Next Generation Routing

- Dynamic Programming for WFM

- WFM Labs Maturity Model

References

- Koole, G. Call Center Optimization. MG Books, 2013. — Chapters on queueing as Markov chains, MDP-based routing.

- Puterman, M.L. Markov Decision Processes: Discrete Stochastic Dynamic Programming. Wiley, 2005.

- Hillier, F.S. and Lieberman, G.J. Introduction to Operations Research, 11th ed. McGraw-Hill, 2021. — Chapters on Markov chains and queueing theory.

- Gross, D. et al. Fundamentals of Queueing Theory, 5th ed. Wiley, 2018.

- Gans, N., Koole, G., and Mandelbaum, A. "Telephone Call Centers: Tutorial, Review, and Research Prospects." Manufacturing & Service Operations Management 5(2), 2003. — Bridges queueing theory and call center operations.