Dynamic Programming for WFM

Dynamic Programming for WFM applies Richard Bellman's principle of optimality to workforce management problems where decisions are made sequentially over time. Unlike single-period optimization (solve one schedule, deploy it), dynamic programming (DP) recognizes that today's decisions constrain tomorrow's options. Every WFM operation with a planning horizon — multi-day scheduling, intraday staffing adjustments, VTO decisions, overtime allocation — is fundamentally a sequential decision problem.

Overview

Contact center operations unfold over time. A schedule built for Monday affects what is feasible on Tuesday (agents who worked a closing shift cannot open the next morning). A VTO decision at 10:00 AM changes what is possible at 2:00 PM. An overtime offer today affects the overtime budget for the rest of the week.

Most WFM platforms treat these as independent decisions — optimize Monday's schedule, then Tuesday's, then Wednesday's — ignoring the coupling between stages. Dynamic programming provides the mathematical framework to optimize the entire sequence jointly.

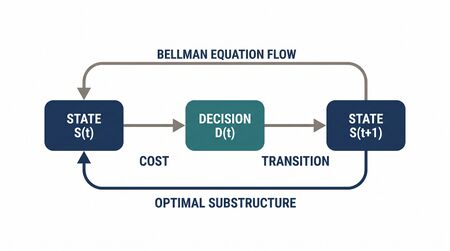

Bellman formalized this in 1957 with the principle of optimality: An optimal policy has the property that, whatever the initial state and initial decision, the remaining decisions constitute an optimal policy with regard to the state resulting from the first decision. This sounds abstract, but the operational implication is concrete: solve backward from the end of the planning horizon, and at each stage make the decision that minimizes the cost of that stage plus the optimal future cost.

Mathematical Foundation

The Sequential Decision Framework

A dynamic programming problem has five components:

- Stages (): The time periods over which decisions are made. In WFM, stages might be days in a scheduling horizon, intervals in an intraday plan, or decision points in a real-time process.

- States (): The information needed to make the decision at stage t. In WFM, states encode current staffing levels, agent fatigue/hours worked, queue conditions, and budget remaining.

- Decisions (): The actions available at stage t given state . In WFM: assign shifts, offer VTO, activate overtime, adjust routing.

- Transition function (): How the state evolves given the current state and decision. Deterministic DP has exact transitions; stochastic DP has probabilistic transitions.

- Cost function (): The immediate cost (or reward) at stage t for making decision in state .

Bellman's Equation

The value function represents the minimum total cost from stage t to the end, starting in state s:

with terminal condition:

Backward induction solves this by starting at the last stage and working backward:

- Set for all states.

- For : compute for every state s by evaluating all feasible decisions and selecting the one with minimum total cost.

The result is a complete optimal policy: for every stage and every possible state, the optimal decision is known.

Stochastic Dynamic Programming

When transitions are probabilistic (demand is uncertain, agent availability is random), Bellman's equation becomes:

The expectation is taken over the random state transition. This is the foundation of Markov Decision Processes (see Markov Chains and Decision Processes in WFM).

WFM Applications

Multi-Day Schedule Optimization

Problem: Build a 7-day schedule for 150 agents that minimizes total labor cost while meeting service level targets in every interval. Agent constraints couple the days: minimum 11-hour rest between shifts, maximum 5 consecutive working days, weekend rotation requirements.

DP formulation:

- Stages: Days 1 through 7.

- States: For each agent — hours worked so far this week, consecutive days worked, last shift end time. Aggregate state: coverage surplus/deficit carried forward.

- Decisions: Shift assignment for each agent on each day.

- Transition: State updates with new hours, new consecutive count, new shift end time.

- Cost: Labor cost for assignments plus penalty for coverage shortfalls.

Why DP helps: A greedy approach (optimize each day independently) produces solutions that violate multi-day constraints or incur unnecessary overtime on later days. DP considers the full week jointly.

In practice, the state space for 150 agents is too large for exact DP. Approximate methods — rolling horizon DP, state aggregation, or column generation with DP pricing — make it tractable. Column generation, specifically, uses DP to price individual agent schedules (see Column Generation in Scheduling).

Optimal Stopping: When to Pull Agents from Training

Problem: 20 agents are in a 3-day training program. Midway through Day 2, call volume surges. Pulling agents from training saves the service level today but delays their proficiency by a week (see Speed to Proficiency Curve). When is it optimal to interrupt training?

DP formulation:

- Stages: Decision intervals during the training period (e.g., every 30 minutes).

- States: (current queue state, training completion percentage, number of agents still in training).

- Decisions: Pull k agents from training (where ) or continue.

- Transition: Queue state evolves stochastically. Training completion advances deterministically. Pulled agents join the floor at reduced proficiency.

- Cost: Immediate service level penalty (if agents stay in training) + future proficiency cost (if agents are pulled).

The optimal policy takes the form: Pull agents from training if and only if the queue state exceeds a threshold that depends on how close training is to completion. Near the end of training, the threshold is high (almost done — don't pull). Early in training, the threshold is lower (less sunk cost in training hours).

Intertemporal VTO Decisions

Problem: At 8:00 AM, the intraday forecast shows 12 excess agent-hours across the day. The WFM analyst can offer Voluntary Time Off (VTO) now (cheaper — agents prefer morning VTO) or wait to see if demand materializes differently. Offering too much VTO early risks understaffing if demand spikes later. Offering too little wastes labor cost.

DP formulation:

- Stages: Decision intervals (e.g., hourly from 8 AM to 5 PM).

- States: (current staffing level, realized demand so far, VTO hours already offered, demand forecast for remaining intervals).

- Decisions: Number of VTO hours to offer in this interval.

- Transition: Staffing decreases by VTO offered. Demand in next interval is revealed (stochastic).

- Cost: Labor cost for excess staff + service level penalty for understaffing + VTO cost (typically lower than regular pay).

This is a stochastic DP because future demand is uncertain. The optimal policy balances the certainty of current excess against the uncertainty of future demand. It typically yields a non-obvious result: offer VTO aggressively in early intervals for the most predictable excess hours, but hold a staffing buffer for uncertain later intervals.

Dynamic Pricing of Overtime

Problem: The center needs 40 overtime hours for Saturday. Standard overtime pays 1.5x. If not enough agents volunteer at 1.5x, the rate must increase. How should the overtime premium escalate over time to fill the requirement at minimum total cost?

DP formulation:

- Stages: Days before Saturday (e.g., Wednesday, Thursday, Friday).

- States: (overtime hours filled so far, premium rate offered).

- Decisions: Premium rate for this round of offers.

- Transition: Agent acceptance probability depends on premium rate (higher rate → more volunteers, with diminishing returns).

- Cost: Premium rate × hours filled in this round.

Backward induction reveals: start with a moderate premium (1.5x), accept whatever fills, then escalate in later rounds only for the remaining gap. The optimal escalation path depends on the agent response curve — which can be estimated from historical acceptance data.

Worked Example: 3-Day Schedule Optimization

A small center has 10 agents and needs coverage over 3 days (Mon–Wed) across 2 shifts per day: Morning (M: 8 AM–4 PM) and Evening (E: 4 PM–12 AM). Each day requires 5 agents on Morning and 5 on Evening. An agent who works Evening cannot work Morning the next day (11-hour rest rule). Each agent works exactly 2 of the 3 days.

Greedy approach (optimize day by day):

Monday: Assign agents 1–5 to Morning, agents 6–10 to Evening. Cost: 10 agent-shifts.

Tuesday: Agents 6–10 worked Monday Evening, so they cannot work Tuesday Morning. Only agents 1–5 are available for Tuesday Morning. Assign agents 1–5 to Tuesday Morning again. But agents 1–5 have now worked 2 days — they are done for the week. Assign agents 6–10 to Tuesday Evening.

Wednesday: Agents 1–5 are exhausted (2 days worked). Agents 6–10 worked Tuesday Evening, so they cannot work Wednesday Morning. No agents are available for Wednesday Morning. The greedy approach fails.

DP approach:

Stage 3 (Wednesday): For every possible state (which agents are available, who worked Evening on Tuesday), compute the minimum-cost assignment.

Stage 2 (Tuesday): For every possible state after Monday, compute the assignment that minimizes Tuesday's cost plus the optimal Wednesday cost.

Stage 1 (Monday): Choose the Monday assignment that minimizes total 3-day cost.

The DP solution might assign: Monday — agents 1–5 Morning, agents 6–10 Evening. Tuesday — agents 6–10 Morning (they can, because 16 hours elapsed since Monday Evening ended at midnight), agents 1–5 Evening. Wednesday — agents 1–5 Morning (16 hours since Tuesday Evening), agents 6–10 Evening. Total: all agents work 2 of 3 days, rest rule is never violated, all coverage targets met.

The key insight: DP's backward induction detects the Wednesday constraint violation before making the Monday decision, while the greedy approach discovers it too late.

Connection to Reinforcement Learning

Reinforcement learning (RL) is often described as "dynamic programming without a model." Where DP requires knowing the transition function and cost function exactly, RL learns optimal policies by interacting with the environment and observing outcomes.

The connection is direct:

- DP value function ↔ RL value function

- Bellman's equation ↔ Bellman expectation/optimality equations in RL

- Backward induction ↔ Temporal difference learning, Q-learning

For WFM, this means: if you can build a simulator of your contact center (arrival patterns, handle times, agent behavior), you can train RL agents to discover optimal policies for routing, staffing adjustments, and intraday decisions — policies that would be intractable to compute via exact DP because the state space is too large.

See: Markov Chains and Decision Processes in WFM

Maturity Model Position

Dynamic programming concepts map to the WFM Labs Maturity Model as follows:

- Level 2 (Established): Practitioners recognize that scheduling decisions couple across days and understand why greedy approaches fail. Manual coordination substitutes for formal DP.

- Level 3 (Advanced): Scheduling engines use DP-based column generation to produce shift patterns. Analysts understand the forward-backward structure.

- Level 4 (Optimized): Stochastic DP informs intraday decisions — VTO optimization, overtime allocation, training interruption policies.

- Level 5 (Autonomous): RL systems trained via DP principles make real-time decisions autonomously, learning optimal policies from operational data.

See Also

- Operations Research in Workforce Management

- Schedule Optimization

- Column Generation in Scheduling

- Markov Chains and Decision Processes in WFM

- Speed to Proficiency Curve

- Real-Time Operations

- WFM Labs Maturity Model

References

- Bellman, R. Dynamic Programming. Princeton University Press, 1957. — The foundational text.

- Bertsekas, D.P. Dynamic Programming and Optimal Control, Vols. I and II, 4th ed. Athena Scientific, 2017. — The modern standard; rigorous treatment of deterministic, stochastic, and approximate DP.

- Puterman, M.L. Markov Decision Processes: Discrete Stochastic Dynamic Programming. Wiley, 2005. — Bridges DP and MDP theory.

- Koole, G. Call Center Optimization. MG Books, 2013. — Chapter on dynamic staffing and intraday decisions.

- Sutton, R.S. and Barto, A.G. Reinforcement Learning: An Introduction, 2nd ed. MIT Press, 2018. — The DP-RL connection, accessible treatment.