Bayesian Methods for Workforce Forecasting

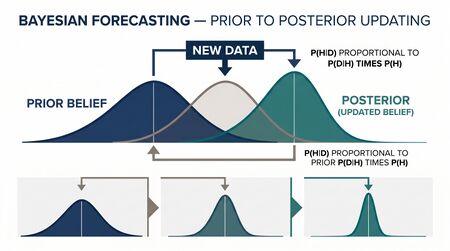

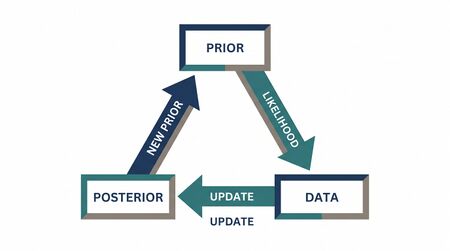

Bayesian Methods for Workforce Forecasting applies the Bayesian framework — start with prior beliefs, observe data, update to posterior beliefs — to demand forecasting, parameter estimation, and experimental design in workforce management. Where classical (frequentist) forecasting treats parameters as fixed unknowns, Bayesian forecasting treats them as random variables with distributions that sharpen as data accumulates. This distinction matters operationally: Bayesian methods produce probability distributions over forecasts, not point estimates, enabling staffing decisions grounded in calibrated uncertainty.

Overview

Every WFM forecast carries uncertainty. Classical methods handle this with confidence intervals and prediction intervals, but these are often misinterpreted and cannot easily incorporate prior knowledge. The 80% prediction interval around Tuesday's 10 AM volume doesn't account for the fact that marketing just announced a product launch, or that this queue is only 3 weeks old and has sparse history.

Bayesian forecasting addresses these limitations:

- Prior knowledge incorporation: A new queue has only 15 days of history. Classical methods struggle. Bayesian methods combine this sparse data with informative priors — perhaps derived from similar queues with longer histories.

- Sequential updating: As intraday data arrives, the forecast updates in real time. The 10 AM actual volume was 15% above forecast — what does this imply for 11 AM, noon, and the rest of the day?

- Principled uncertainty quantification: Bayesian credible intervals have a direct probability interpretation. A 90% credible interval for Tuesday 10 AM volume of [180, 220] means: given the model and data, there is a 90% probability that the true volume falls in this range.

- Hierarchical modeling: Multiple queues share structural patterns (weekly seasonality, holiday effects) while differing in scale and specifics. Hierarchical Bayesian models "borrow strength" across queues — small queues get regularized by the group pattern.

Mathematical Foundation

Bayes' Theorem

The foundation is elementary but powerful:

$$P(\theta \mid D) = \frac{P(D \mid \theta)\,P(\theta)}{P(D)}$$

where:

- $P(\theta)$ is the prior: what we believe about parameter θ before seeing data

- $P(D \mid \theta)$ is the likelihood: the probability of observing data D given parameter θ

- $P(\theta \mid D)$ is the posterior: updated belief about θ after seeing data

- $P(D)$ is the marginal likelihood (normalizing constant)

Intuition: Start with what you believe. Observe reality. Update proportionally to how well each possible parameter value explains what you observed. Prior beliefs that are consistent with the data survive; those that aren't get down-weighted.
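The update rule can be made concrete with a small grid approximation. The sketch below is illustrative only: the three candidate arrival rates, the flat prior, and the observed count of 112 are hypothetical values chosen for the example, and the Poisson likelihood is an assumption about the data model.

```python
import math

def poisson_pmf(count, rate):
    """P(count | rate) for a Poisson model, computed in log space for stability."""
    return math.exp(count * math.log(rate) - rate - math.lgamma(count + 1))

# Hypothetical candidate arrival rates (theta) and a flat prior over them.
candidate_rates = [80, 100, 120]
prior = [1 / 3, 1 / 3, 1 / 3]
observed_count = 112  # hypothetical data D: arrivals in one interval

# Likelihood of the data under each candidate rate.
likelihood = [poisson_pmf(observed_count, rate) for rate in candidate_rates]

# Bayes' theorem: posterior is prior times likelihood, divided by the evidence.
unnormalized = [p * lik for p, lik in zip(prior, likelihood)]
evidence = sum(unnormalized)  # P(D), the normalizing constant
posterior = [u / evidence for u in unnormalized]

for rate, post in zip(candidate_rates, posterior):
    print(f"rate={rate}: posterior={post:.3f}")
```

Candidate rates that explain the observed count poorly (here, 80) end up with almost no posterior weight, which is the down-weighting described in the intuition.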

Conjugate Priors

When the prior and posterior belong to the same distribution family, computation simplifies dramatically. Key conjugate pairs for WFM:

| Data Model | Conjugate Prior | Posterior | WFM Application |
|---|---|---|---|
| Poisson (call arrivals) | Gamma | Gamma | Arrival rate estimation |
| Normal (AHT) | Normal-Inverse-Gamma | Normal-Inverse-Gamma | Handle time distribution |
| Binomial (abandonment) | Beta | Beta | Abandonment rate |
| Exponential (patience) | Gamma | Gamma | Customer patience time |

Example (Poisson-Gamma): Call arrivals in a 15-minute interval follow Poisson(λ). The prior on λ is Gamma(α₀, β₀), with shape α₀ and rate β₀. After observing n intervals with total count Y:

$$\lambda \mid Y \sim \text{Gamma}(\alpha_0 + Y,\ \beta_0 + n)$$

The posterior mean is $(\alpha_0 + Y)/(\beta_0 + n)$, a weighted average of the prior mean $\alpha_0/\beta_0$ and the data mean $Y/n$. With more data, the posterior concentrates around the data mean. With sparse data, the prior pulls the estimate toward a sensible baseline.
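A minimal sketch of the Poisson-Gamma update follows, using the shape/rate parameterization. The prior Gamma(2, 0.01) and the observed counts are hypothetical; the function name `gamma_poisson_update` is introduced here for illustration. The last lines check numerically that the posterior mean equals the weighted average of prior mean and data mean, with weight β₀/(β₀ + n) on the prior.

```python
def gamma_poisson_update(alpha0, beta0, total_count, n_intervals):
    """Posterior (shape, rate) for a Poisson rate under a Gamma(shape, rate) prior."""
    return alpha0 + total_count, beta0 + n_intervals

alpha0, beta0 = 2.0, 0.01           # hypothetical weak prior, mean 200
total_count, n_intervals = 460, 4   # hypothetical: 460 arrivals over 4 intervals

alpha_n, beta_n = gamma_poisson_update(alpha0, beta0, total_count, n_intervals)
posterior_mean = alpha_n / beta_n

# Same quantity written as a weighted average of prior mean and data mean.
prior_weight = beta0 / (beta0 + n_intervals)
weighted_average = (prior_weight * (alpha0 / beta0)
                    + (1 - prior_weight) * (total_count / n_intervals))

print(f"posterior mean = {posterior_mean:.2f}, prior weight = {prior_weight:.4f}")
```

With a prior rate parameter this small, the prior carries the weight of only a hundredth of an interval, so four observed intervals almost fully determine the estimate.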

Prior Elicitation

Choosing the prior is the distinctive feature (and frequent criticism) of Bayesian methods. For WFM:

- Weakly informative priors: Encode only basic domain knowledge. "Arrival rate is positive and probably between 50 and 500 per interval" → Gamma(2, 0.01).

- Empirical Bayes: Estimate priors from data across similar queues. If 20 queues have average arrival rates ranging from 80 to 300, use this distribution as the prior for a new 21st queue.

- Informative priors: Incorporate specific knowledge. "This queue's volume is typically 80% of Queue A's volume" → prior centered at 0.8 × Queue A's current estimate.
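For the empirical Bayes option, one simple way to turn peer-queue rates into a Gamma prior is the method of moments. The sketch below assumes a shape/rate Gamma and uses a hypothetical list of peer arrival rates; other fitting methods (for example maximum likelihood) would be equally defensible.

```python
import statistics

def gamma_prior_from_moments(mean, variance):
    """Method-of-moments Gamma(shape, rate) matching a given mean and variance."""
    shape = mean ** 2 / variance
    rate = mean / variance
    return shape, rate

# Hypothetical average arrival rates from established peer queues.
peer_rates = [80, 95, 120, 150, 180, 210, 260, 300]

peer_mean = statistics.mean(peer_rates)
peer_variance = statistics.variance(peer_rates)  # sample variance across queues
alpha0, beta0 = gamma_prior_from_moments(peer_mean, peer_variance)

print(f"prior for a new queue: Gamma(shape={alpha0:.2f}, rate={beta0:.4f})")
```

By construction the fitted prior has mean `alpha0 / beta0` equal to the peer mean and variance `alpha0 / beta0**2` equal to the peer variance.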

Bayesian Updating Mechanics

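For a Normal model with known variance (simplified for exposition):

Prior: $\mu \sim N(\mu_0, \sigma_0^2)$

Data: Observe n data points with sample mean $\bar{x}$ and known variance $\sigma^2$.

Posterior: $\mu \mid \text{data} \sim N(\mu_n, \sigma_n^2)$

where:

$$\mu_n = \frac{\mu_0/\sigma_0^2 + n\bar{x}/\sigma^2}{1/\sigma_0^2 + n/\sigma^2}, \qquad \sigma_n^2 = \left(\frac{1}{\sigma_0^2} + \frac{n}{\sigma^2}\right)^{-1}$$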

Intuition: The posterior mean is a precision-weighted average of the prior mean and the data mean, where precision = 1/variance. More precise information (whether prior or data) gets more weight. The posterior variance is always smaller than both the prior variance and the variance of the sample mean (the data variance divided by n): combining information reduces uncertainty.
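The precision-weighted update for a Normal mean with known variance can be sketched in a few lines. The function name `normal_update` and the AHT figures (prior mean 300 s, prior sd 30 s, 25 calls averaging 330 s, known sd 100 s) are hypothetical values for illustration.

```python
import math

def normal_update(prior_mean, prior_sd, sample_mean, data_sd, n):
    """Posterior mean and sd for a Normal mean with known data variance."""
    prior_precision = 1 / prior_sd ** 2
    data_precision = n / data_sd ** 2   # precision of the sample mean
    post_var = 1 / (prior_precision + data_precision)
    post_mean = post_var * (prior_precision * prior_mean
                            + data_precision * sample_mean)
    return post_mean, math.sqrt(post_var)

# Hypothetical AHT example, all values in seconds.
post_mean, post_sd = normal_update(prior_mean=300.0, prior_sd=30.0,
                                   sample_mean=330.0, data_sd=100.0, n=25)

print(f"posterior mean = {post_mean:.1f} s, posterior sd = {post_sd:.1f} s")
```

The posterior mean lands between the prior mean and the sample mean, closer to whichever source has the higher precision, and the posterior sd is below both the prior sd and the standard error of the sample mean.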

WFM Applications

Adaptive Demand Forecasting

Problem: The forecast for Wednesday is 2,000 calls. By 10 AM, 600 calls have arrived, about 7% above the 560 calls the forecast implies for that point in the day (28% of 2,000). Should the afternoon staffing plan change?

Classical approach: Compare actual to forecast. If deviation exceeds a threshold, reforecast or make an ad hoc adjustment. No formal framework for how much to adjust.

Bayesian approach: The forecast is the prior. Intraday actuals are the data. The posterior is the updated forecast.

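Model: Total Wednesday volume $V \sim N(\mu_0, \sigma_0^2)$ where $\mu_0 = 2{,}000$ and $\sigma_0 = 200$ (reflecting forecast uncertainty).

By 10 AM, we observe a partial realization. Let $F(t)$ be the cumulative fraction of daily volume expected by time t. At 10 AM, $F(10\text{ AM}) = 0.28$ (28% of daily volume typically arrives by 10 AM). Observed volume by 10 AM: 600 calls. Implied daily total: 600 / 0.28 ≈ 2,143.

Using the Bayesian update with the implied total $\hat{V} \approx 2{,}143$ as a single noisy observation with variance $\sigma_{\text{obs}}^2$:

$$\mu_n = \frac{\mu_0/\sigma_0^2 + \hat{V}/\sigma_{\text{obs}}^2}{1/\sigma_0^2 + 1/\sigma_{\text{obs}}^2}, \qquad \sigma_n^2 = \left(\frac{1}{\sigma_0^2} + \frac{1}{\sigma_{\text{obs}}^2}\right)^{-1}$$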

The posterior mean shifts from 2,000 to 2,057 — a 2.9% increase. The posterior uncertainty narrows from σ₀ = 200 to σₙ = 170. As more data arrives through the day, the posterior tightens further.

Operational use: Feed the posterior distribution into Erlang C to compute staffing requirements. Instead of one staffing number, generate a distribution of staffing needs. Staff to the 85th percentile of the posterior for risk-adjusted coverage.
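The intraday update and the percentile-based staffing input can be sketched as follows. The text does not state the noise attached to the implied daily total, so `obs_sd = 245` is an assumption, chosen because it roughly reproduces a posterior mean of 2,057. The posterior sd it produces (about 155) will not match every figure quoted above, so treat the numbers as illustrative rather than as the article's own calculation.

```python
import math
from statistics import NormalDist

prior_mean, prior_sd = 2000.0, 200.0   # the day-ahead forecast acts as the prior
observed_by_10am = 600.0
fraction_by_10am = 0.28                # typical share of daily volume by 10 AM
obs_sd = 245.0                         # ASSUMPTION: noise sd of the implied total

implied_total = observed_by_10am / fraction_by_10am

# Precision-weighted Normal update with a single noisy observation.
prior_precision = 1 / prior_sd ** 2
obs_precision = 1 / obs_sd ** 2
post_var = 1 / (prior_precision + obs_precision)
post_mean = post_var * (prior_precision * prior_mean
                        + obs_precision * implied_total)
post_sd = math.sqrt(post_var)

# 85th percentile of the posterior daily volume, as a risk-adjusted planning input.
volume_p85 = NormalDist(post_mean, post_sd).inv_cdf(0.85)

print(f"posterior: mean={post_mean:.0f}, sd={post_sd:.0f}, p85={volume_p85:.0f}")
```

If the other staffing inputs are held fixed, required headcount does not decrease as volume rises, so passing `volume_p85` through an Erlang C calculation is one reasonable way to obtain an 85th-percentile staffing level.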

Hierarchical Models Across Queue Families

Problem: A contact center operates 12 queues spanning 3 product families. Queue volumes range from 50/day (niche product) to 1,500/day (flagship). Forecasting the small queues is difficult — high variance, sparse data. Can information from large queues help?

Hierarchical Bayesian model:

Level 1 (observation): $y_{q,t} \sim p(y \mid \theta_q)$ for queue q at time t.

Level 2 (queue parameters): $\theta_q \sim N(\mu_{f(q)}, \sigma_{f(q)}^2)$

Level 3 (family hyperparameters): $(\mu_{f(q)}, \sigma_{f(q)}) \sim p(\mu, \sigma)$, where $f(q)$ is queue q's product family.

The family-level hyperparameters $\mu_f$ and $\sigma_f$ encode the shared structure within each product family. Small queues borrow strength from larger siblings: their parameter estimates are "shrunk" toward the family mean, reducing variance at the cost of small bias.

Result: The niche queue with 50 calls/day gets a forecast that combines its own sparse data with the seasonal pattern of its 1,500-call sibling. Forecast accuracy for small queues improves substantially.
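The shrinkage effect can be illustrated with a simplified Normal-Normal calculation in which the family mean and spread are treated as known. This is only a sketch: a full hierarchical model would estimate the family hyperparameters jointly with the queue parameters (typically by MCMC), and every number below, including the "Monday index" being estimated, is hypothetical.

```python
def shrink_toward_family(queue_estimate, queue_noise_sd, n_obs,
                         family_mean, family_sd):
    """Partial pooling: blend a queue's own estimate with its family mean."""
    sampling_var = queue_noise_sd ** 2 / n_obs
    # Weight on the queue's own data grows as its estimate becomes more precise.
    weight_on_queue = family_sd ** 2 / (family_sd ** 2 + sampling_var)
    pooled = family_mean + weight_on_queue * (queue_estimate - family_mean)
    return pooled, weight_on_queue

# Hypothetical family-level Monday index (Monday volume / weekly average).
family_mean, family_sd = 1.20, 0.05

# Niche queue: 4 noisy Mondays. Flagship queue: 52 Mondays with low noise.
small_est, small_weight = shrink_toward_family(1.45, 0.30, 4, family_mean, family_sd)
large_est, large_weight = shrink_toward_family(1.18, 0.08, 52, family_mean, family_sd)

print(f"niche queue:    weight on own data={small_weight:.2f}, estimate={small_est:.3f}")
print(f"flagship queue: weight on own data={large_weight:.2f}, estimate={large_est:.3f}")
```

The niche queue's noisy estimate is pulled most of the way to the family mean, while the flagship queue's estimate barely moves. Working on a scale-free quantity such as a day-of-week index is one way to share patterns across queues whose raw volumes differ widely.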

Empirical Bayes for New Channels

Problem: The center launches a webchat channel. After 2 weeks, only 10 business days of data exist. How to forecast?

Empirical Bayes approach:

- Estimate the distribution of forecasting parameters (seasonality, trend, day-of-week effects) across all existing channels with long history.

- Use this distribution as the prior for the new channel's parameters.

- Update with the 10 days of webchat data.

The webchat forecast combines the 10 days of channel-specific data with the typical patterns observed across all channels. As webchat accumulates more history, the data dominates and the prior fades.

This is far more principled than the common practice of "assume the new channel looks like the phone channel" — it uses the distribution of parameters across channels, not a single channel's parameters.

Bayesian A/B Testing for Routing Rules

Problem: Two routing rules are under test. Rule A routes by longest-available-agent. Rule B routes by predicted-best-match (skill-weighted). After 5 days, Rule B shows 3% lower AHT. Is this real or noise?

Frequentist approach (p-value): Compute a t-test. If p < 0.05, declare significance. Problem: p-values don't answer the operational question ("what is the probability that Rule B is better?").

Bayesian approach:

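Prior: $\delta \sim N(0, \sigma_0^2)$ with large $\sigma_0$ (no prior preference, wide uncertainty), where $\delta$ is the AHT reduction of Rule B relative to Rule A, in seconds.

Likelihood: Observed AHT difference $\hat{\delta}$ with standard error $s$ from the data: $\hat{\delta} \mid \delta \sim N(\delta, s^2)$.

Posterior: $\delta \mid \text{data} \sim N(12.5,\ 8.3^2)$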

Interpretation: There is a 93% probability that Rule B produces lower AHT than Rule A. The expected improvement is 12.5 seconds. A 95% credible interval for the improvement is [−3.8, 28.8] seconds.

Operational decision: This directly answers the question management cares about: "How confident are we that Rule B is better, and by how much?" Unlike a p-value, the posterior probability has a direct probability interpretation.
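The quoted summary figures can be reproduced from a Normal posterior on the AHT improvement. The posterior sd of 8.3 seconds used below is inferred from the quoted 95% interval rather than stated in the text, and the 5-second threshold is a hypothetical "worth deploying" cutoff added for illustration.

```python
from statistics import NormalDist

# Posterior on the AHT improvement of Rule B over Rule A, in seconds.
# The sd of 8.3 is inferred from the quoted 95% credible interval.
posterior = NormalDist(mu=12.5, sigma=8.3)

prob_b_better = 1 - posterior.cdf(0.0)            # P(improvement > 0)
ci_low = posterior.inv_cdf(0.025)                 # 95% credible interval bounds
ci_high = posterior.inv_cdf(0.975)
prob_at_least_5s = 1 - posterior.cdf(5.0)         # hypothetical business threshold

print(f"P(B better) = {prob_b_better:.3f}")
print(f"95% credible interval = [{ci_low:.1f}, {ci_high:.1f}] s")
print(f"P(improvement >= 5 s) = {prob_at_least_5s:.3f}")
```

The same posterior answers any threshold question directly, which is often more useful for a rollout decision than the probability of any improvement at all.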

Credible Intervals vs. Confidence Intervals for Staffing

A 95% confidence interval (frequentist) means: if we repeated this analysis many times, 95% of such intervals would contain the true value. It does not mean there is a 95% probability the true value is in this specific interval.

A 95% credible interval (Bayesian) means: given the model and data, there is a 95% probability the true value is in this interval. This is what WFM practitioners actually want when making staffing decisions.

For staffing: "There is a 95% probability that Tuesday 10 AM volume is between 180 and 220" is actionable. "If we repeated this forecast process many times, 95% of intervals would contain the true volume" is not actionable for the one Tuesday you're staffing.

Worked Example: Bayesian Arrival Rate Estimation

Setup: A new queue (webchat support) has been live for 5 days. Observed daily volumes: 85, 92, 78, 103, 88 (mean = 89.2, sample variance = 85.7).

From 15 established queues, average daily volumes range from 50 to 500 with overall mean 180 and standard deviation 120.

Empirical Bayes prior: Model daily volume as Poisson with rate λ. The prior on λ is Gamma with parameters chosen to match the established queue distribution. Using method of moments: Gamma(α₀ = 2.25, β₀ = 0.0125), giving prior mean = 180 and prior variance = 14,400.

Likelihood: 5 days with total count Y = 446. Posterior: Gamma(α₀ + Y, β₀ + n) = Gamma(448.25, 5.0125).

Posterior mean: 448.25 / 5.0125 = 89.4 calls/day. Posterior 90% credible interval: approximately [82.6, 96.5] calls/day.

With only 5 days of data, the data already dominates, because 446 observed contacts are very informative about a Poisson rate. The prior pulled the estimate only slightly, from 89.2 to 89.4. The prior's remaining contribution is to the uncertainty quantification: it keeps the rate positive and down-weights values far outside the range seen on established queues, although with this much data its effect on the credible interval is also small.

If the queue had only 1 day of data (Y = 85), the posterior mean would be (2.25 + 85) / (0.0125 + 1) = 86.2. The prior has more influence here, pulling the estimate toward the established-queue average, but only slightly, since even 1 day of Poisson data with count 85 is moderately informative.
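The worked example can be checked with a short script. The posterior parameters follow the text directly. The credible interval uses the Wilson-Hilferty approximation to Gamma quantiles, which is a substitution made here to stay within the standard library; an exact quantile function from a statistics package would serve the same purpose. The helper name `gamma_quantile_wh` is introduced for illustration.

```python
import math
from statistics import NormalDist

def gamma_quantile_wh(shape, rate, p):
    """Approximate Gamma(shape, rate) quantile via the Wilson-Hilferty transform."""
    z = NormalDist().inv_cdf(p)
    c = 1 / (9 * shape)
    return shape * (1 - c + z * math.sqrt(c)) ** 3 / rate

daily_volumes = [85, 92, 78, 103, 88]
alpha0, beta0 = 2.25, 0.0125            # empirical Bayes prior: mean 180, sd 120

# Conjugate update: add total count to the shape, number of days to the rate.
alpha_n = alpha0 + sum(daily_volumes)
beta_n = beta0 + len(daily_volumes)
posterior_mean = alpha_n / beta_n

# Approximate equal-tailed 90% credible interval.
interval_90 = (gamma_quantile_wh(alpha_n, beta_n, 0.05),
               gamma_quantile_wh(alpha_n, beta_n, 0.95))

# Same prior with only the first day of data.
one_day_mean = (alpha0 + daily_volumes[0]) / (beta0 + 1)

print(f"posterior: Gamma({alpha_n}, {beta_n}), mean = {posterior_mean:.1f}")
print(f"90% interval = [{interval_90[0]:.1f}, {interval_90[1]:.1f}]")
print(f"posterior mean after one day = {one_day_mean:.1f}")
```

The approximation is accurate when the shape parameter is large, as it is here; for small shapes an exact quantile routine is the safer choice.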

Contrast with Frequentist Approach

| Dimension | Frequentist | Bayesian |
|---|---|---|
| Parameters | Fixed unknowns | Random variables with distributions |
| Prior knowledge | Not formally incorporated | Encoded in prior distribution |
| Uncertainty | Confidence intervals (coverage probability) | Credible intervals (posterior probability) |
| Small samples | Wide intervals, low power | Priors regularize estimates |
| Sequential updating | Must adjust for multiple comparisons | Natural via posterior updating |
| Interpretation | Technical (long-run frequency) | Intuitive (probability of this value) |
| Computation | Usually closed-form or simple optimization | May require MCMC sampling |
| Subjectivity | In model choice | In model choice AND prior choice |

Neither approach is universally superior. For well-behaved problems with abundant data, they converge to the same answers. Bayesian methods shine when data is sparse, prior knowledge is strong, or sequential updating is needed — all common in WFM.

Maturity Model Position

Bayesian methods map to the WFM Labs Maturity Model:

- Level 1 (Reactive): No formal uncertainty quantification. Point forecasts only.

- Level 2 (Established): Prediction intervals from classical methods. Awareness that forecasts have uncertainty.

- Level 3 (Advanced): Bayesian updating for intraday forecast adjustment. Credible intervals used for staffing decisions. Empirical Bayes for new queues.

- Level 4 (Optimized): Hierarchical Bayesian models across queue families. Bayesian A/B testing for routing and scheduling experiments. Full posterior distributions feed stochastic scheduling.

- Level 5 (Autonomous): Online Bayesian learning systems that continuously update demand models. Automated regime detection via posterior monitoring. Bayesian optimization for hyperparameter tuning in ML forecasting models.

See Also

- Operations Research in Workforce Management

- Forecasting

- Exponential Smoothing

- ARIMA Models

- Hierarchical Forecasting

- Forecast Accuracy Metrics

- Erlang C

- WFM Labs Maturity Model
