WFM Labs Risk Score™

The WFM Labs Risk Score™ is a proprietary framework developed by Ted Lango for quantifying the operational risk embedded in any capacity plan. It produces a single composite indicator — scaled 0 to 100 — that communicates the balance between service-level protection, employee experience, and cost control in business terms rather than as a point-estimate FTE figure.

Most contact-center capacity plans are built on deterministic assumptions: one forecast, one shrinkage rate, one handle-time estimate. When reality deviates — and it always does — the plan either overspends or misses service targets. The Risk Score exists to quantify how likely the plan is to break and how badly it will break when it does.[1]

As contact centers move up the WFM Labs Maturity Model™ curve, risk-informed capacity planning becomes a core capability — shifting the operation from fragile, single-point staffing estimates to resilient, probability-based workforce strategies. The Risk Score is a Level 3–4 capability in that maturity progression.

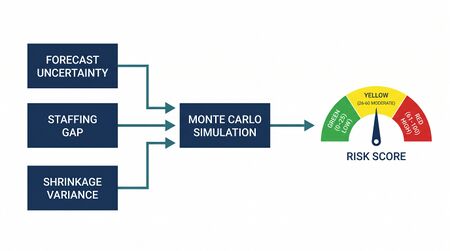

Architecture: Three Analytical Pillars

The Risk Score combines three analytical components, each targeting a different dimension of capacity-plan fragility:

- Monte Carlo Simulation — models demand variability and staffing uncertainty across thousands of scenarios.

- Staffing Gap Analysis — measures the structural distance between planned capacity and required capacity at the interval level.

- Shrinkage Variance Contribution — isolates the risk introduced by unstable or poorly understood shrinkage behavior.

These three pillars feed a weighted composite formula that produces the final score. The architecture is deliberately modular: each component can be inspected independently, making the score auditable and actionable rather than a black-box number.

How the Risk Score Is Calculated

The composite Risk Score is a weighted sum of three normalized sub-scores:

Risk Score = (w₁ × MCS) + (w₂ × SGA) + (w₃ × SVC)

Where:

- MCS = Monte Carlo Simulation sub-score (0–100)

- SGA = Staffing Gap Analysis sub-score (0–100)

- SVC = Shrinkage Variance Contribution sub-score (0–100)

- w₁, w₂, w₃ = component weights (default: 0.50, 0.30, 0.20; must sum to 1.0)

Default weights reflect the relative explanatory power each component has shown in back-testing across multi-site contact center operations. Monte Carlo simulation carries the heaviest weight because it captures the broadest set of interacting uncertainties. Staffing gap analysis captures structural planning errors that simulation alone may miss. Shrinkage variance, while narrower in scope, is the single most common source of plan failure in operations below Level 3 maturity.[2]

Weights are configurable. Operations with highly volatile shrinkage (e.g., seasonal BPO environments with 80%+ annual attrition) may increase w₃ to 0.30 and reduce w₁ to 0.40 to compensate. Two constraints apply: the weights must sum to 1.0, and no single weight may exceed 0.60, which prevents any one component from dominating the composite.
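The composite formula and its weight constraints can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the function name `composite_risk_score` is hypothetical, and rejecting negative weights is an added assumption the text does not state.

```python
from math import isclose

DEFAULT_WEIGHTS = (0.50, 0.30, 0.20)  # (w1, w2, w3) defaults from the text
MAX_WEIGHT = 0.60                     # cap on any single weight, per the text


def composite_risk_score(mcs, sga, svc, weights=DEFAULT_WEIGHTS):
    """Weighted sum of the three sub-scores, each expected in [0, 100]."""
    if not isclose(sum(weights), 1.0, abs_tol=1e-9):
        raise ValueError("weights must sum to 1.0")
    # Assumption: weights are also required to be non-negative.
    if any(w < 0 or w > MAX_WEIGHT for w in weights):
        raise ValueError("each weight must lie in [0, 0.60]")
    for name, score in (("MCS", mcs), ("SGA", sga), ("SVC", svc)):
        if not 0 <= score <= 100:
            raise ValueError(f"{name} must be in [0, 100]")
    w1, w2, w3 = weights
    return w1 * mcs + w2 * sga + w3 * svc


# Sub-scores of 58, 44 and 62 under default and shrinkage-heavy weights.
print(round(composite_risk_score(58, 44, 62), 1))
print(round(composite_risk_score(58, 44, 62, weights=(0.40, 0.30, 0.30)), 1))
```

Validating the weights before scoring keeps a mis-configured weight set from silently producing a score outside the 0 to 100 range.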

Component 1: Monte Carlo Simulation (MCS)

Purpose

Traditional capacity plans produce a single staffing number for each planning interval. Monte Carlo simulation replaces this point estimate with a probability distribution of outcomes, answering the question: "Given everything we know about the variability of our inputs, what is the range of plausible staffing outcomes and how likely is each one?"[3]

Input Distributions

The simulation draws from probability distributions fitted to historical data for each key input:

- Contact volume — typically modeled with a Poisson or negative binomial distribution at the interval level, with parameters estimated from 8–12 weeks of historical data.

- Average handle time (AHT) — modeled with a log-normal distribution, capturing the right-skewed nature of handle times where a small number of complex contacts extend the tail.

- Agent shrinkage — modeled with a beta distribution bounded between 0 and 1, with shape parameters derived from observed daily shrinkage rates.

- Occupancy — constrained by the Erlang C relationship between offered load and agent count, but allowed to vary as volume and AHT shift.

- Attrition / turnover — modeled as a binomial process where each agent has a probability of departure in each planning period, calibrated to trailing 90-day attrition rates.
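As a rough sketch of how one scenario might be drawn from the distributions listed above, the following uses only Python's standard library. Every parameter value (mean volume, AHT median and spread, beta shape parameters, headcount, attrition probability) is an illustrative assumption rather than a calibrated figure, and the helper names are hypothetical. Occupancy is not sampled because the text treats it as a derived quantity.

```python
import math
import random


def sample_poisson(rng, lam):
    """Poisson draw via Knuth's method; adequate for modest interval means."""
    threshold = math.exp(-lam)
    count, product = 0, 1.0
    while True:
        product *= rng.random()
        if product <= threshold:
            return count
        count += 1


def sample_inputs(rng, mean_volume=40.0, aht_median_s=300.0, aht_sigma=0.4,
                  shrink_alpha=30.0, shrink_beta=70.0,
                  headcount=60, attrition_prob=0.02):
    """Draw one scenario. All default parameters are illustrative assumptions."""
    return {
        # Contacts offered in the interval (Poisson).
        "volume": sample_poisson(rng, mean_volume),
        # Handle time in seconds (log-normal, right-skewed).
        "aht_s": rng.lognormvariate(math.log(aht_median_s), aht_sigma),
        # Shrinkage rate in (0, 1); Beta(30, 70) has mean 0.30.
        "shrinkage": rng.betavariate(shrink_alpha, shrink_beta),
        # Departures in the planning period (binomial as a sum of Bernoulli draws).
        "departures": sum(rng.random() < attrition_prob for _ in range(headcount)),
    }


rng = random.Random(42)
print(sample_inputs(rng))
```

In practice the distribution parameters would be fitted to the 8 to 12 weeks of history mentioned above rather than typed in as constants.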

Simulation Process

For each planning interval (typically 15- or 30-minute intervals across the planning horizon):

- Draw a random sample from each input distribution.

- Calculate required staffing using the Erlang C (or WFM Labs Erlang-O™) model with the sampled inputs.

- Record the resulting service level, occupancy, and FTE requirement.

- Repeat 10,000 times per interval to build a stable output distribution.

The result is a probability distribution of staffing outcomes for every interval in the plan. From this distribution, several risk metrics are extracted:

- P(miss) — the probability that the planned headcount will fail to meet the service-level target.

- Expected shortfall — the average FTE gap in scenarios where the plan fails (analogous to Conditional Value at Risk in finance).[4]

- Tail severity — the magnitude of the worst 5% of simulated outcomes, measuring catastrophic downside.
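The loop and the three risk metrics can be sketched for a single interval as follows. This is a simplified illustration under stated assumptions: standard Erlang C (not Erlang-O), an 80/20 target, independent inputs, made-up distribution parameters, and tail severity defined here as the mean gap across the worst 5% of scenarios. The text does not pin down that last definition, so treat it as one plausible reading.

```python
import math
import random


def sample_poisson(rng, lam):
    """Poisson draw via Knuth's method."""
    threshold, count, product = math.exp(-lam), 0, 1.0
    while True:
        product *= rng.random()
        if product <= threshold:
            return count
        count += 1


def erlang_c_service_level(agents, load, aht_s, target_s):
    """Share of contacts answered within target_s under Erlang C."""
    if agents <= load:
        return 0.0  # unstable queue
    blocking = 1.0  # Erlang B, built up recursively
    for k in range(1, agents + 1):
        blocking = load * blocking / (k + load * blocking)
    p_wait = agents * blocking / (agents - load * (1.0 - blocking))
    return 1.0 - p_wait * math.exp(-(agents - load) * target_s / aht_s)


def required_agents(volume, aht_s, interval_s=1800, sl_target=0.80, target_s=20):
    """Smallest agent count that meets the service-level target."""
    load = volume * aht_s / interval_s  # offered load in erlangs
    agents = max(1, math.ceil(load))
    while erlang_c_service_level(agents, load, aht_s, target_s) < sl_target:
        agents += 1
    return agents


def simulate_interval(planned_fte, n_iter=10_000, seed=0, mean_volume=100.0,
                      aht_median_s=300.0, aht_sigma=0.15,
                      shrink_alpha=30.0, shrink_beta=70.0):
    """Return (P(miss), expected shortfall, tail severity) for one interval."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(n_iter):
        volume = sample_poisson(rng, mean_volume)
        aht_s = rng.lognormvariate(math.log(aht_median_s), aht_sigma)
        shrinkage = rng.betavariate(shrink_alpha, shrink_beta)
        need_fte = required_agents(volume, aht_s) / (1.0 - shrinkage)
        gaps.append(need_fte - planned_fte)  # positive means understaffed
    shortfalls = [g for g in gaps if g > 0]
    p_miss = len(shortfalls) / n_iter
    expected_shortfall = sum(shortfalls) / len(shortfalls) if shortfalls else 0.0
    worst = sorted(gaps)[int(0.95 * n_iter):]  # worst 5% of scenarios
    tail_severity = max(0.0, sum(worst) / len(worst))
    return p_miss, expected_shortfall, tail_severity


# Smaller run for a quick demo; the text uses 10,000 iterations per interval.
print(simulate_interval(planned_fte=30, n_iter=2_000))
```

A full implementation would repeat this for every interval in the horizon and aggregate the metrics before normalization.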

Converting to the MCS Sub-Score

The MCS sub-score combines these metrics into a single 0–100 value:

MCS = 40 × normalize(P(miss)) + 35 × normalize(Expected Shortfall) + 25 × normalize(Tail Severity)

Normalization maps each metric to [0, 1] using empirically calibrated reference ranges, so the weighted sum (coefficients 40 + 35 + 25 = 100) lands on the 0–100 scale. A plan where P(miss) < 10%, expected shortfall < 2 FTE, and tail severity < 5 FTE would score near 0 (very low risk). A plan where P(miss) > 50% with expected shortfall > 10 FTE would score near 100 (extreme risk).
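One way to read the sub-score formula is sketched below. It assumes each metric is scaled linearly to a 0–1 fraction and clamped, so that the 40/35/25 coefficients produce a 0–100 result. The reference ranges reuse the thresholds quoted above where they exist. The upper bound for tail severity (20 FTE) is a placeholder assumption, and the real calibration may not be linear at all.

```python
def normalize(value, low, high):
    """Linear min-max scaling, clamped to [0, 1]."""
    if high <= low:
        raise ValueError("high must exceed low")
    return min(1.0, max(0.0, (value - low) / (high - low)))


# (low, high) reference ranges. The tail-severity upper bound is hypothetical.
MCS_RANGES = {
    "p_miss": (0.10, 0.50),
    "expected_shortfall": (2.0, 10.0),
    "tail_severity": (5.0, 20.0),
}


def mcs_sub_score(p_miss, expected_shortfall, tail_severity, ranges=MCS_RANGES):
    """Combine the three simulation metrics into a 0-100 sub-score."""
    return (
        40 * normalize(p_miss, *ranges["p_miss"])
        + 35 * normalize(expected_shortfall, *ranges["expected_shortfall"])
        + 25 * normalize(tail_severity, *ranges["tail_severity"])
    )


print(mcs_sub_score(0.05, 1.0, 3.0))    # everything below the low thresholds
print(mcs_sub_score(0.60, 12.0, 25.0))  # everything above the high thresholds
```

Because the ranges here are assumed rather than calibrated, the output will not in general match sub-scores produced with the framework's own reference ranges.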

Component 2: Staffing Gap Analysis (SGA)

Purpose

Monte Carlo simulation captures stochastic risk — the risk from random variation in inputs. Staffing Gap Analysis captures structural risk — systematic misalignment between planned capacity and required capacity that exists even before any randomness is introduced.[5]

Methodology

The SGA component performs interval-by-interval comparison between two quantities:

- Planned capacity — the number of productive agent-hours available after accounting for scheduled shrinkage (training, meetings, breaks, PTO).

- Required capacity — the agent-hours needed to meet the service-level target, calculated from the base forecast using Erlang C or WFM Labs Erlang-O™.

For each interval i:

Gap(i) = Planned(i) − Required(i)

Negative gaps indicate understaffing; positive gaps indicate overstaffing. Both directions carry risk: understaffing risks service-level misses, while systematic overstaffing signals wasted budget that will eventually trigger headcount reductions, creating future fragility.

Gap Metrics

From the vector of interval-level gaps, the SGA component extracts:

- Deficit frequency — the percentage of intervals where Gap(i) < 0. High deficit frequency means the plan is structurally thin.

- Deficit magnitude — the average size of negative gaps, measured in FTE-hours. Large deficits mean even a small demand increase will overwhelm the plan.

- Deficit clustering — the degree to which negative gaps cluster in consecutive intervals (measured using run-length encoding). Clustered deficits are harder to recover from than isolated ones because intraday levers like break optimization lose effectiveness when the gap persists for hours.

- Surplus efficiency — the ratio of surplus intervals used for productive off-phone activities versus idle surplus. Operations that convert surplus into training or coaching have lower effective risk.
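The four gap metrics can be computed from interval-level vectors roughly as follows. This is a sketch with assumptions: the clustering index is defined here as the share of deficit intervals that sit in runs of two or more consecutive intervals (the text only says run-length encoding is used), and surplus efficiency is taken as the share of surplus intervals flagged as productively used via a hypothetical `surplus_used` list.

```python
from itertools import groupby


def gap_metrics(planned, required, surplus_used):
    """Interval-level gap metrics; inputs are equal-length sequences."""
    if not planned or not (len(planned) == len(required) == len(surplus_used)):
        raise ValueError("inputs must be non-empty and of equal length")
    gaps = [p - r for p, r in zip(planned, required)]  # Gap(i) = Planned - Required
    deficits = [-g for g in gaps if g < 0]
    # Run-length encode the deficit flags: lengths of consecutive-deficit runs.
    runs = [len(list(group))
            for is_deficit, group in groupby(g < 0 for g in gaps) if is_deficit]
    surplus_flags = [used for g, used in zip(gaps, surplus_used) if g > 0]
    return {
        "deficit_frequency": len(deficits) / len(gaps),
        "deficit_magnitude": sum(deficits) / len(deficits) if deficits else 0.0,
        # Assumed index: share of deficit intervals in runs of length >= 2.
        "deficit_clustering": (sum(r for r in runs if r >= 2) / sum(runs)
                               if runs else 0.0),
        # Reported as 0.0 when there is no surplus to convert (a convention).
        "surplus_efficiency": (sum(surplus_flags) / len(surplus_flags)
                               if surplus_flags else 0.0),
    }


planned = [10, 10, 10, 10, 10, 10]
required = [12, 11, 9, 10, 13, 8]
surplus_used = [False, False, True, False, False, False]
print(gap_metrics(planned, required, surplus_used))
```

In this toy data, three of six intervals are in deficit, two of them back to back, and one of the two surplus intervals is used productively.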

Converting to the SGA Sub-Score

SGA = 30 × normalize(Deficit Frequency) + 30 × normalize(Deficit Magnitude) + 25 × normalize(Deficit Clustering) + 15 × (1 − normalize(Surplus Efficiency))

A plan with no structural deficits and efficient surplus utilization scores near 0. A plan with 40%+ deficit intervals clustered during peak hours scores near 100.

Component 3: Shrinkage Variance Contribution (SVC)

Why Shrinkage Gets Its Own Component

Shrinkage is the single largest source of capacity-plan error in most contact centers. A plan built on 30% shrinkage that actually experiences 35% sees productive time fall from 70% to 65% of paid hours, a loss of roughly one in every fourteen planned productive hours (about 7%). Unlike volume variance, which WFM teams actively monitor, shrinkage variance often goes undetected until the damage is done.

The SVC component isolates shrinkage-specific risk because:

- Shrinkage is controllable in ways that volume and AHT are not — it responds to scheduling policy, attendance management, and training cadence.

- Shrinkage variance is asymmetric — it almost always deviates upward from plan (more shrinkage than expected, rarely less).

- Shrinkage is the variable most likely to be assumed constant in traditional plans, making it a hidden fragility.

Methodology

The SVC component measures three dimensions of shrinkage risk:

- Shrinkage volatility — the coefficient of variation (CV) of daily actual shrinkage rates over the trailing 30 days. A CV above 0.15 indicates unstable shrinkage behavior.

- Plan-vs-actual bias — the systematic gap between planned shrinkage rate and observed shrinkage rate. A persistent positive bias (actuals > plan) means the plan is systematically over-counting productive hours.

- Shrinkage composition risk — the concentration of shrinkage in unplanned categories (unplanned absence, system downtime, unexplained aux time). Unplanned shrinkage is harder to predict and adjust for than planned shrinkage (training, meetings, breaks).
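The three shrinkage measures could be derived from daily data along these lines. The sketch assumes daily shrinkage is expressed as a rate, that scheduled hours are roughly equal across days (so rates can be averaged directly), and that the sample standard deviation is the intended spread measure. The function and argument names are hypothetical.

```python
from statistics import mean, stdev


def shrinkage_metrics(daily_actual, daily_unplanned, planned_rate):
    """Volatility (CV), plan-vs-actual bias (pp) and unplanned share."""
    if len(daily_actual) != len(daily_unplanned) or len(daily_actual) < 2:
        raise ValueError("need two equal-length series with at least 2 days")
    average = mean(daily_actual)
    return {
        # Coefficient of variation of daily actual shrinkage.
        "volatility_cv": stdev(daily_actual) / average,
        # Positive bias means actual shrinkage runs above plan.
        "bias_pp": (average - planned_rate) * 100,
        # Share of total shrinkage coming from unplanned categories.
        "composition_risk": sum(daily_unplanned) / sum(daily_actual),
    }


actual = [0.30, 0.34, 0.32, 0.36, 0.28]     # total shrinkage rate per day
unplanned = [0.15, 0.18, 0.16, 0.20, 0.15]  # unplanned portion per day
metrics = shrinkage_metrics(actual, unplanned, planned_rate=0.30)
print({name: round(value, 3) for name, value in metrics.items()})
print("unstable" if metrics["volatility_cv"] > 0.15 else "stable")
```

A real implementation would use the trailing 30 days named above and weight each day by scheduled hours if those vary materially.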

Converting to the SVC Sub-Score

SVC = 35 × normalize(Shrinkage Volatility) + 35 × normalize(Plan-vs-Actual Bias) + 30 × normalize(Composition Risk)

An operation with stable, well-understood shrinkage that matches plan scores near 0. An operation with volatile, bias-prone, unplanned-heavy shrinkage scores near 100.

Interpreting the Risk Score

The composite Risk Score maps to four risk bands:

| Score Range | Risk Band | Interpretation | Typical Action |
|---|---|---|---|
| 0–25 | Low | Capacity plan is resilient across most plausible scenarios. High confidence in service-level attainment. | Monitor normally. Use surplus for development activities. |
| 26–50 | Moderate | Plan holds under normal conditions but is sensitive to demand spikes or shrinkage increases. | Ensure intraday flexibility levers are available. Pre-approve overtime triggers. |
| 51–75 | Elevated | Plan is fragile. Service-level misses are likely in 1–3 weeks of the planning period. Cost of recovery (overtime, vendor staffing) will be significant. | Revise plan. Add buffer FTEs or reduce planned shrinkage. Activate contingency staffing. |
| 76–100 | Critical | Plan will almost certainly fail. Multiple compounding risks present. | Escalate to leadership. Delay non-essential off-phone activities. Emergency hiring or vendor activation. |

What constitutes a "good" score depends on risk appetite. A startup BPO with thin margins may accept a score of 45 as a deliberate cost-optimization choice. A healthcare payer during open enrollment may require scores below 20. The Risk Score does not prescribe a universal threshold — it makes the risk visible so decision-makers can choose deliberately rather than discover risk through service failures.
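Mapping a composite score to the four bands is a simple threshold lookup. The sketch below assumes that fractional scores falling between the integer band edges (for example 25.4) belong to the higher band, which is the more conservative reading. The table itself does not say how such values are treated.

```python
def risk_band(score):
    """Return the risk band name for a composite score in [0, 100]."""
    if not 0 <= score <= 100:
        raise ValueError("score must be in [0, 100]")
    # Assumption: anything above an integer edge rolls into the next band.
    if score <= 25:
        return "Low"
    if score <= 50:
        return "Moderate"
    if score <= 75:
        return "Elevated"
    return "Critical"


for example in (20, 45, 54.6, 80):
    print(example, risk_band(example))
```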

Worked Example: Scoring a Q3 Capacity Plan

Consider a 200-seat customer service operation planning for Q3. The WFM team has produced a capacity plan and wants to assess its risk profile before submitting it for approval.

Step 1: Monte Carlo Simulation

The simulation runs 10,000 iterations per interval across the 13-week Q3 horizon. Key results:

- P(miss) = 32% — in roughly one-third of simulated scenarios, the plan fails to meet the 80/20 service-level target.

- Expected shortfall = 6.2 FTE — when the plan fails, the average gap is about 6 agents.

- Tail severity = 14 FTE — in the worst 5% of scenarios, the gap reaches 14 agents.

After normalization: MCS = 58

Step 2: Staffing Gap Analysis

Interval-level comparison reveals:

- Deficit frequency = 22% — about one in five intervals is structurally understaffed.

- Deficit magnitude = 3.1 FTE-hours — modest individual gaps.

- Deficit clustering = 0.65 (moderate) — deficits concentrate in Monday/Tuesday morning peaks.

- Surplus efficiency = 0.40 — only 40% of surplus intervals are used for productive off-phone work.

After normalization: SGA = 44

Step 3: Shrinkage Variance

Analysis of trailing 30-day shrinkage data shows:

- Shrinkage volatility (CV) = 0.18 — above the 0.15 threshold, indicating unstable behavior.

- Plan-vs-actual bias = +2.3 percentage points — actual shrinkage consistently exceeds plan (32.3% actual vs. 30.0% planned).

- Composition risk = 0.55 — over half of shrinkage comes from unplanned categories.

After normalization: SVC = 62

Step 4: Composite Score

Using default weights (0.50, 0.30, 0.20):

Risk Score = (0.50 × 58) + (0.30 × 44) + (0.20 × 62) = 29.0 + 13.2 + 12.4 = 54.6

Result: 54.6 — Elevated Risk

The plan falls in the Elevated band. Shrinkage variance has the highest sub-score (SVC = 62), followed by simulation-revealed demand sensitivity (MCS = 58), which contributes the most points to the composite (29.0 of 54.6) because of its 0.50 weight. The structural gap is moderate (SGA = 44).
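When reading a composite, it can help to separate each component's raw sub-score from the points it actually contributes after weighting. The following sketch does that for the worked example; the helper name `weighted_contributions` is hypothetical.

```python
def weighted_contributions(sub_scores, weights):
    """Return ({name: (points, share_of_total)}, total) for a composite."""
    points = {name: weights[name] * score for name, score in sub_scores.items()}
    total = sum(points.values())
    if total == 0:
        return {name: (0.0, 0.0) for name in points}, 0.0
    return {name: (pts, pts / total) for name, pts in points.items()}, total


sub_scores = {"MCS": 58, "SGA": 44, "SVC": 62}
weights = {"MCS": 0.50, "SGA": 0.30, "SVC": 0.20}
breakdown, total = weighted_contributions(sub_scores, weights)
for name, (pts, share) in breakdown.items():
    print(f"{name}: {pts:.1f} points ({share:.0%} of {total:.1f})")
```

Ranking by sub-score highlights where the operation is weakest, while ranking by weighted points shows which component moves the composite most.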

Recommended actions:

- Correct the shrinkage bias — adjust planned shrinkage from 30% to 32.5% to eliminate the systematic under-estimation.

- Address shrinkage composition — investigate the unplanned absence and aux-time drivers contributing to volatility.

- Add 4–6 FTE buffer for the Monday/Tuesday peak cluster identified in the gap analysis.

- Re-run the Risk Score after adjustments. Target: below 40.

Sensitivity Analysis

The Risk Score supports sensitivity analysis by varying one input at a time while holding others constant. This reveals which inputs the plan is most sensitive to and where risk-reduction effort will have the highest return.

Common sensitivity tests:

- Volume sensitivity — increase forecast volume by 5%, 10%, and 15% and observe Risk Score movement. If a 5% volume increase pushes the score from Moderate to Elevated, the plan has thin volume margins.

- Shrinkage sensitivity — increase planned shrinkage by 2 and 5 percentage points. Operations where a 2pp shrinkage increase causes a 15+ point Risk Score jump have dangerous shrinkage exposure.

- AHT sensitivity — increase AHT by 10% and 20%. Plans built on recent AHT trends that are declining may be at risk if the trend reverses.

- Attrition sensitivity — model the impact of losing 5% and 10% of the agent population mid-quarter. This test is particularly important for BPO operations with volatile tenure profiles.
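A one-at-a-time sensitivity run can be sketched as a loop that perturbs a single input and records the change in score. The `toy_risk_score` function below is purely a stand-in so the example runs: it is not the Risk Score, and its 85% occupancy and linear pressure mapping are arbitrary assumptions. In practice the full scoring pipeline would be passed in as `score_fn`.

```python
def toy_risk_score(volume, aht_s, shrinkage, planned_fte,
                   interval_s=1800, occupancy=0.85):
    """Stand-in scorer (NOT the Risk Score): staffing pressure mapped to 0-100."""
    required_fte = volume * aht_s / interval_s / occupancy / (1.0 - shrinkage)
    pressure = required_fte / planned_fte
    return 100.0 * min(1.0, max(0.0, (pressure - 0.8) / 0.4))


def one_at_a_time(score_fn, baseline, tests):
    """Change one input per test, hold the rest at baseline, report the delta."""
    base_score = score_fn(**baseline)
    results = []
    for label, overrides in tests:
        scenario = {**baseline, **overrides}
        results.append((label, score_fn(**scenario) - base_score))
    return base_score, results


baseline = {"volume": 100, "aht_s": 300, "shrinkage": 0.30, "planned_fte": 30}
tests = [
    ("volume +5%", {"volume": 105}),
    ("volume +10%", {"volume": 110}),
    ("shrinkage +2pp", {"shrinkage": 0.32}),
    ("AHT +10%", {"aht_s": 330}),
    ("attrition: lose 5% of agents", {"planned_fte": 28.5}),
]
base_score, results = one_at_a_time(toy_risk_score, baseline, tests)
print(f"baseline score: {base_score:.1f}")
for label, delta in sorted(results, key=lambda row: row[1], reverse=True):
    print(f"{label}: {delta:+.1f}")
```

Sorting by delta gives a simple tornado-style ranking of which input deserves mitigation effort first.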

Sensitivity analysis transforms the Risk Score from a static assessment into a dynamic planning tool. It answers: "Where should I invest my next dollar of risk mitigation — in hiring buffer, in shrinkage management, or in forecast accuracy improvement?"

Integration with Capacity Planning Workflow

The Risk Score is not a standalone artifact — it integrates into the capacity planning cycle at specific decision points:

- Plan Draft — after the WFM team produces the initial capacity plan, the Risk Score is calculated as a quality gate before submission to leadership.

- Plan Review — leadership reviews the Risk Score alongside the plan, using it to inform approval decisions and budget allocation.

- Scenario Planning — alternative plans (aggressive hiring, conservative hiring, vendor supplement) are each scored, enabling apples-to-apples risk comparison.

- Mid-Cycle Recalibration — the Risk Score is recalculated monthly (or more frequently) as actual performance data updates the input distributions. A rising Risk Score mid-quarter triggers early intervention.

- Post-Mortem — after the planning period ends, the predicted Risk Score is compared against actual outcomes. This back-testing loop calibrates the model and refines the normalization parameters over time.

Using the Risk Score in Decision-Making

The Risk Score changes the conversation between WFM and leadership from "how many FTEs do we need?" to "how much risk are we willing to accept?"

Budget conversations: Instead of defending a single FTE number, the WFM team presents a risk curve — "at 195 FTE the Risk Score is 62 (Elevated), at 205 FTE it drops to 38 (Moderate), at 215 FTE it reaches 22 (Low)." Leadership can then make an informed cost-risk tradeoff.[6]

Vendor decisions: When evaluating whether to supplement with vendor staffing, the Risk Score quantifies the risk reduction. If adding 20 vendor FTEs moves the score from 65 to 35, leadership can weigh that 30-point risk reduction against the vendor cost.

Operational investments: Investments in cross-skilling, schedule flexibility, or real-time automation can be justified by their Risk Score impact. If implementing a real-time adherence tool reduces the SVC component by 15 points, that improvement translates directly to the composite score.

Percentile staffing: The Risk Score naturally supports staffing to a percentile rather than the mean forecast. The simulation component makes explicit what percentile the current plan targets — staffing to the 50th percentile of simulated demand produces a very different Risk Score than staffing to the 75th percentile.
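To make the percentile idea concrete, the sketch below takes a list of simulated FTE requirements and reports both the staffing level at a chosen percentile and the percentile a given plan implicitly targets. The simulated values are synthetic (normally distributed around 200 FTE) purely for illustration, and nearest-rank is just one of several percentile conventions.

```python
import math
import random


def percentile(values, pct):
    """Nearest-rank percentile for 0 < pct <= 100."""
    if not values or not 0 < pct <= 100:
        raise ValueError("need data and 0 < pct <= 100")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def implied_percentile(values, planned_fte):
    """Share of simulated scenarios (in %) that the plan would cover."""
    return 100.0 * sum(v <= planned_fte for v in values) / len(values)


rng = random.Random(7)
simulated_required = [rng.gauss(200, 8) for _ in range(10_000)]  # synthetic data

p50 = percentile(simulated_required, 50)
p75 = percentile(simulated_required, 75)
print(f"staff to P50: {p50:.1f} FTE, staff to P75: {p75:.1f} FTE")
print(f"a 205 FTE plan covers {implied_percentile(simulated_required, 205):.0f}% "
      "of simulated scenarios")
```

The gap between the two percentiles is the buffer that moving from mean-based to percentile-based staffing would add.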

Relationship to Other WFM Labs Frameworks

The Risk Score does not operate in isolation. It connects to several other WFM Labs frameworks and wiki topics:

- WFM Labs Maturity Model™ — the Risk Score is a Level 3–4 capability. Organizations at Level 1–2 typically lack the data infrastructure to calculate it reliably.

- WFM Labs Erlang-O™ — plans built using Erlang-O (which incorporates operational overhead) consistently produce lower Risk Scores than plans built on traditional Erlang C, because Erlang-O already accounts for a portion of the variance that would otherwise appear as risk.

- Deterministic vs Probabilistic Models — the Risk Score is fundamentally a probabilistic tool. It cannot function meaningfully on top of purely deterministic planning assumptions.

- Simulation Tools for WFM — the Monte Carlo component requires simulation infrastructure, whether spreadsheet-based or purpose-built.

- Workforce Cost Modeling — the Risk Score's output connects directly to cost modeling by quantifying the expected cost of plan failure (overtime, vendor premium, lost revenue from missed service levels).

- Variance Harvesting — the Level 3 operating principle that turns variance into a planning input, providing the statistical foundation the Risk Score depends on.

Limitations and Caveats

The Risk Score is a model, and all models have boundaries:

- Data quality dependency — the score is only as reliable as the input distributions. Operations with fewer than 8 weeks of clean historical data should treat the score as directional rather than precise.

- Correlation assumptions — the default simulation treats input variables as independent. In reality, volume spikes and AHT increases often co-occur (e.g., during system outages). Advanced implementations should model correlations explicitly.
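One common way to introduce correlation between inputs is to draw correlated standard normals and transform them. The sketch below does this for volume and AHT, under the simplifying assumption that both are log-normally distributed around their forecasts, which departs from the Poisson volume model described earlier. The correlation value and spreads are placeholders.

```python
import math
import random


def sample_correlated(rng, rho, forecast_volume=100.0, volume_sigma=0.10,
                      aht_median_s=300.0, aht_sigma=0.15):
    """Draw (volume, aht_s) whose logs have correlation rho."""
    if not -1.0 <= rho <= 1.0:
        raise ValueError("rho must be in [-1, 1]")
    z_volume = rng.gauss(0.0, 1.0)
    # Mix in an independent normal so that corr(z_volume, z_aht) = rho.
    z_aht = rho * z_volume + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
    volume = forecast_volume * math.exp(volume_sigma * z_volume)
    aht_s = aht_median_s * math.exp(aht_sigma * z_aht)
    return volume, aht_s


rng = random.Random(11)
for _ in range(3):
    volume, aht_s = sample_correlated(rng, rho=0.6)
    print(f"volume={volume:.1f}, AHT={aht_s:.0f}s")
```

With positive correlation, high-volume scenarios tend to arrive together with long handle times, which fattens the upper tail of required staffing compared with independent draws.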

- Static weights — the default 50/30/20 weighting is empirically grounded but not universal. Operations should validate and potentially recalibrate weights through back-testing against their own historical outcomes.[7]

- Does not replace judgment — the Risk Score informs decisions but does not make them. A score of 60 in a stable, mature operation may be more manageable than a score of 40 in a chaotic, low-maturity one. Context always matters.

References

1. Hubbard, D.W. (2014). How to Measure Anything: Finding the Value of "Intangibles" in Business. 3rd ed. Wiley. ISBN 978-1-118-53927-9.

2. Cleveland, B. (2012). Call Center Management on Fast Forward. 3rd ed. ICMI Press.

3. Vose, D. (2008). Risk Analysis: A Quantitative Guide. 3rd ed. Wiley. ISBN 978-0-470-51284-5.

4. Jorion, P. (2006). Value at Risk: The New Benchmark for Managing Financial Risk. 3rd ed. McGraw-Hill.

5. Reynolds, P. (2015). Call Center Staffing: The Complete, Practical Guide to Workforce Management. The Call Center School Press.

6. Taleb, N.N. (2012). Antifragile: Things That Gain from Disorder. Random House. ISBN 978-1-4000-6782-4.

7. Silver, N. (2012). The Signal and the Noise: Why So Many Predictions Fail — but Some Don't. Penguin Press.

See also

- WFM Labs Maturity Model™ — the five-level operating-maturity progression in which the Risk Score is a Level 3–4 capability.

- WFM Labs Erlang-O™ — the interval-staffing model that feeds the Risk Score.

- Variance Harvesting — the Level 3 operating principle that turns variance into a planning input.

- Capacity Planning Methods — overview of capacity planning approaches in WFM.

- Probabilistic Planning in WFM — the broader paradigm the Risk Score operates within.

- Simulation Tools for WFM — tooling options for running Monte Carlo simulations.

- Shrinkage — the single most common source of capacity-plan fragility.

- Service Level — the primary target the Risk Score protects.

- Staffing to Percentile vs Mean Forecast — the percentile-based staffing approach the simulation component enables.

- Scenario Planning and Contingency Staffing — using Risk Scores to compare alternative capacity scenarios.

- Erlang C — the foundational staffing model underlying the simulation component.

- Deterministic vs Probabilistic Models — the analytical paradigm shift the Risk Score represents.

- Workforce Cost Modeling — translating Risk Score outputs into financial terms.