Multi-Channel and Blended Operations

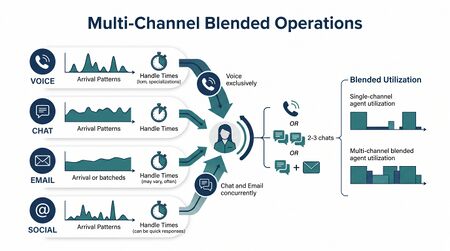

Multi-Channel and Blended Operations is the operational discipline of running a contact center where agents handle more than one channel of work — voice, chat, email, messaging, social, back-office tasks — concurrently or sequentially. The blending decision (dedicated agents per channel vs cross-channel agents) is one of the most consequential staffing-and-routing choices a Level 2-to-Level 4 operation makes, and the math behind it is materially different from single-channel staffing.

The core complication: channels have different real-time properties. Voice is synchronous and serial: one call at a time. Chat is synchronous but concurrent: an agent can handle 2-3 simultaneous chats. Email and back-office work are asynchronous: service level is measured in hours or days, not seconds, and the work can be interrupted and resumed. Treating these as substitutable units of agent time produces plans that fail in operation.

What practitioners build

A multi-channel operation has four design artifacts:

- Channel mix model. Forecast volume by channel; demand-to-FTE conversion that respects each channel's real-time properties.

- Blending policy. Which agents are dedicated to one channel vs cross-channel-eligible. Often varies by tenure, skill, and shift.

- Concurrency rule. For chat and concurrent-channel work, how many simultaneous interactions an agent may carry. The chat-concurrency parameter is to multi-channel what skill-mix is to single-channel — the operational-capacity dial.

- Cross-channel routing rule. How channels are prioritized when an agent is eligible for multiple channels — voice-first, chat-first, batch-priority, async-deferred. Often runtime-tunable in modern routing platforms.

The integrated artifact is a per-channel staffing plan that simultaneously respects each channel's service-level target, the blending policy, and the concurrency rule.

Math: capacity per channel

The single most important math lesson in multi-channel: agent capacity per channel is not a constant.

For voice, an agent handling AHT of H minutes contributes 60/H interactions per hour at full occupancy. Standard Erlang-C / Erlang-A applies cleanly.

For chat with concurrency k, the picture changes. An agent can handle k simultaneous chats; each chat has individual AHT but the agent's wall-clock minutes are shared. Effective per-chat handle time as experienced by the customer is approximately:

- AHT_effective ≈ AHT_solo · (1 + δ(k))

where δ(k) is the fractional handle-time inflation from context switching (δ(1) = 0), typically 10-25% per additional concurrent chat according to cognitive-load research.[1][2]

The agent's hourly contact capacity is approximately k · 60 / AHT_effective — but only if the agent is actually saturated with k simultaneous chats. At lower load, the practical concurrency is lower, and the staffing math needs to use a load-dependent effective capacity rather than a flat k.
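A minimal Python sketch of the capacity arithmetic above. It assumes a linear context-switch term δ(k) = s · (k − 1) with a hypothetical s = 0.15, inside the 10-25% range. The function names and the `avg_concurrency` input are illustrative and do not come from any WFM tool.

```python
def effective_aht(aht_solo: float, k: float, switch_cost: float = 0.15) -> float:
    """Per-chat handle time (minutes) at concurrency k.

    Assumes delta(k) = switch_cost * (k - 1), so delta(1) = 0 and each
    additional concurrent chat inflates handle time by switch_cost.
    """
    delta = switch_cost * max(k - 1, 0)
    return aht_solo * (1 + delta)


def saturated_capacity(aht_solo: float, k: int, switch_cost: float = 0.15) -> float:
    """Chats per agent-hour when the agent always carries k chats: k * 60 / AHT_effective."""
    return k * 60 / effective_aht(aht_solo, k, switch_cost)


def load_dependent_capacity(aht_solo: float, k: int, avg_concurrency: float,
                            switch_cost: float = 0.15) -> float:
    """Chats per agent-hour when the agent averages fewer than k open chats.

    avg_concurrency is a hypothetical measured input (mean open chats per
    agent in the interval). It is capped at k, and handle-time inflation
    follows the concurrency actually carried rather than the cap.
    """
    c = min(avg_concurrency, k)
    return c * 60 / effective_aht(aht_solo, c, switch_cost)


if __name__ == "__main__":
    for k in (1, 2, 3):
        print(f"k={k}: {saturated_capacity(10.0, k):.2f} chats/hour")
    print(f"k=3 cap at 1.5 average open chats: {load_dependent_capacity(10.0, 3, 1.5):.2f}")
```

With a 10-minute solo AHT, k = 2 gives about 10.4 chats per hour rather than the naive 12, and an agent averaging 1.5 open chats under a k = 3 cap delivers about 8.4, well short of the saturated figure of about 13.8.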

For email and back-office, the math degenerates further. The "queue" is hours-to-days deep; service level is measured by completion-time percentile rather than answer-time percentile; the staffing problem is workload-shaping over a long horizon, not interval-by-interval queueing. Erlang doesn't apply at all; the right tool is a deterministic capacity-vs-aging model.

Math: the chat-concurrency parameter (and why it isn't just k)

Chat concurrency is a quality-and-throughput trade-off. Higher k increases throughput per agent but degrades the customer experience (chats sit waiting for an agent who is multi-tasking) and increases agent cognitive load.

The same cognitive-portfolio constraint that drives the N* equation in Pool Collab applies to chat agents: there is a sustainable-utilization ceiling on cognitive capacity that no amount of practice raises beyond a structural ~80-85%. Chat concurrency above k = 2-3 typically pushes agents past the sustainable cognitive-utilization line, producing measurable quality erosion in resolution rates and customer satisfaction.

Practitioner heuristic for chat concurrency: start at k = 2, validate quality and customer-wait-time metrics, raise to k = 3 only if both hold. Higher k is rarely defensible without strong evidence on quality.
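The start-at-2 heuristic can be written down as an explicit review rule, so that every concurrency change is tied to evidence. This is a sketch: the boolean inputs stand in for whatever quality and customer-wait thresholds the operation already tracks, and stepping down one level after a failed review is an assumption rather than part of the heuristic as stated.

```python
START_K = 2  # practitioner starting point for chat concurrency


def next_concurrency(current_k, quality_holds, wait_holds, strong_quality_evidence=False):
    """Return the chat concurrency to run in the next validation window.

    current_k               -- concurrency in force now (None if not yet set)
    quality_holds           -- resolution/CSAT thresholds held at current_k
    wait_holds              -- customer wait-time thresholds held at current_k
    strong_quality_evidence -- required before moving beyond k = 3
    """
    if current_k is None:
        return START_K
    if not (quality_holds and wait_holds):
        # Assumption: back off one step, never below single-chat handling.
        return max(1, current_k - 1)
    if current_k < 3:
        return current_k + 1
    # k >= 3: hold unless there is strong evidence that quality survives more.
    return current_k + 1 if strong_quality_evidence else current_k
```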

Math: blending and the pooling benefit

When the same agent is eligible for voice and chat, the operation gains a pooling benefit across channels in addition to the within-channel pooling. Voice and chat arrival fluctuations are only partially correlated, so an agent who flexes between them absorbs a surge in either channel.

But the blending pooling benefit is partially offset by the channel-asymmetry effect: voice is synchronous and pre-empts chat in most blending policies, so a chat agent handling 2 chats at the moment a voice call arrives must either pause the chats (degrading chat) or refuse the voice (degrading voice). The realized blending benefit is materially less than the theoretical pooling math suggests.

Practical sizing approach: use Erlang-A per channel at the channel-specific service target, then apply a blending-credit multiplier (typically 0.85-0.95) that captures the realized pooling benefit net of channel-asymmetry cost. The credit is empirical — measured against the actual blending policy in operation, not assumed from theory.
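A rough sketch of this sizing approach. It swaps in Erlang-C for Erlang-A because Erlang-C needs only the standard library; Erlang-C ignores abandonment, so it tends to overstate staff when abandonment is material. Chat is approximated by sizing concurrent "slots" at the inflated AHT and dividing by k, which is itself a simplification. All volumes, AHTs, the 1.15 inflation, and the 0.90 credit are hypothetical inputs.

```python
import math


def erlang_c(n: int, a: float) -> float:
    """Probability an arrival waits in an M/M/n queue with offered load a (Erlangs)."""
    if n <= a:
        return 1.0
    b = 1.0  # Erlang-B via the stable recursion B(i) = a*B(i-1) / (i + a*B(i-1))
    for i in range(1, n + 1):
        b = a * b / (i + a * b)
    return n * b / (n - a * (1 - b))


def service_level(n: int, a: float, aht: float, target: float) -> float:
    """Share answered within `target` (same time unit as aht) under Erlang-C."""
    if n <= a:
        return 0.0
    return 1 - erlang_c(n, a) * math.exp(-(n - a) * target / aht)


def servers_needed(per_hour: float, aht_sec: float, target_sec: float, sl_goal: float) -> int:
    """Smallest server count meeting sl_goal (e.g. 0.80 within target_sec)."""
    a = per_hour * aht_sec / 3600
    n = max(1, math.ceil(a))
    while service_level(n, a, aht_sec, target_sec) < sl_goal:
        n += 1
    return n


def blended_headcount(voice_agents: int, chat_agents: int, credit: float) -> int:
    """Apply an empirical blending credit to the sum of dedicated requirements."""
    return math.ceil(credit * (voice_agents + chat_agents))


if __name__ == "__main__":
    voice = servers_needed(300, 360, 20, 0.80)                   # 80/20 voice
    k, inflation = 2, 1.15                                        # hypothetical chat settings
    chat_slots = servers_needed(240, 600 * inflation, 45, 0.80)  # 80/45 chat
    chat = math.ceil(chat_slots / k)
    print("dedicated:", voice + chat, "blended:", blended_headcount(voice, chat, 0.90))
```

Keeping the credit as a separate, named input makes it easy to replace with the ratio measured under the operation's actual blending policy instead of leaving it at a typical value.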

Math: async channels and the SLA shape

Email and back-office channels' service-level math is fundamentally different. The standard chat/voice SL form — X% answered in Y seconds — does not apply. Async SL takes the form:

- X% completed within Y hours/days

where Y is typically 24 hours (consumer email), 4 business hours (premium support), or longer. The capacity question becomes: what daily processing rate prevents the aging tail from breaching the SLA percentile?

This is a deterministic flow problem when arrivals are stable and a queueing-discipline problem when arrival variance is high. Little's Law in its long-run form, L = λ · W (average open backlog equals arrival rate times average time in system), sets the average inventory the operation must clear; the SL percentile sets the worst-case clearance discipline.
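The Little's Law arithmetic is simple enough to sketch directly. The figures are hypothetical, and the catch-up rate is a plain flow balance (arrivals plus the backlog reduction spread over the catch-up window). Neither says anything about the percentile tail; that needs an aging model.

```python
def average_backlog(arrivals_per_day: float, avg_days_in_system: float) -> float:
    """Little's Law, L = lambda * W: long-run average open inventory."""
    return arrivals_per_day * avg_days_in_system


def implied_days_in_system(open_backlog: float, arrivals_per_day: float) -> float:
    """Little's Law rearranged, W = L / lambda (long-run average only)."""
    return open_backlog / arrivals_per_day


def catch_up_rate(arrivals_per_day: float, backlog_now: float,
                  backlog_target: float, days: float) -> float:
    """Daily processing rate that absorbs arrivals and shrinks the backlog to target in `days`."""
    return arrivals_per_day + (backlog_now - backlog_target) / days


if __name__ == "__main__":
    lam = 400.0                                # emails per day (hypothetical)
    target = average_backlog(lam, 0.5)         # half-day average time in system
    print(target, implied_days_in_system(600, lam), catch_up_rate(lam, 600, target, 2))
```

With 400 emails a day, a half-day average time in system implies about 200 open items; a backlog of 600 implies an average of 1.5 days, and returning to 200 within two days requires processing 600 a day.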

Async work fits naturally into voice/chat slack time: agents who are available but carrying no real-time interaction can process async work productively. The operational design lever is to schedule async work into voice/chat troughs to recover otherwise idle capacity. This is the most common Level 3 multi-channel optimization and one of the highest-leverage capacity moves available.
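A greedy sketch of scheduling async work into synchronous troughs. It assumes the per-interval `required` counts come from the voice/chat staffing model, and `usable_share` is a hypothetical discount for slack that cannot actually be used (short gaps, interruptions by pre-empting voice).

```python
def fill_troughs(staffed, required, interval_min, async_aht_min, backlog, usable_share=1.0):
    """Assign async items to intervals where synchronous staffing exceeds need.

    staffed[i], required[i] -- agents scheduled vs agents the voice/chat model needs
    interval_min            -- interval length in minutes (e.g. 30)
    async_aht_min           -- average handle time of one async item in minutes
    backlog                 -- async items waiting
    Returns (items planned per interval, items left over).
    """
    plan = []
    for s, r in zip(staffed, required):
        slack_min = max(s - r, 0) * interval_min * usable_share
        fits = int(slack_min // async_aht_min)
        take = min(fits, backlog)
        plan.append(take)
        backlog -= take
    return plan, backlog


if __name__ == "__main__":
    # Morning peak, then a mid-morning trough (hypothetical half-hour intervals).
    print(fill_troughs([10, 10, 10], [10, 8, 6], interval_min=30, async_aht_min=6, backlog=25))
```

Items left over after the troughs are filled are the signal to schedule dedicated async time rather than relying on slack alone.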

Practitioner playbook

- Forecast per channel. Volume, AHT, and arrival pattern per channel. Channels have different seasonality, day-of-week, and intraday curves; one channel's forecast does not predict another's.

- Set service-level targets per channel. Customer expectations differ by channel. Voice is typically tightest (80/20, meaning 80% answered within 20 seconds, or similar); chat slightly looser (80/45); email and other async work by the percentile completed within a set number of hours.

- Decide the blending policy. Dedicated, blended-with-priority, or fully-flexed. Blending policy depends on tenure (typically only proficient agents handle multiple channels well), skill density, and tooling support.

- Calibrate concurrency. Set chat k based on quality and wait-time metrics, not on max-throughput math. Validate empirically.

- Stage the routing. Voice usually pre-empts chat by default. Tune the priority rule and any cooldown logic to balance channel SLs.

- Schedule async into slack. Map async-channel capacity into voice/chat troughs. Track async-aging discipline daily.

- Validate the staffing math. Re-run Erlang-A per channel with the blending credit and concurrency-adjusted capacity. The calculated FTE is the floor; planning often adds margin for the channel-asymmetry effect.

Common failure modes

- Setting concurrency without validating it. Chat k is set at 3 or 4 because the platform supports it, with no validation that quality holds. Customer satisfaction silently erodes.

- Treating channels as substitutable units of agent time. "We have 50 agent-hours; we have 50 hours of demand; we're staffed." Ignores channel-specific real-time properties. Async demand and synchronous demand are not interchangeable units.

- Ignoring async SLAs. Async-channel aging is invisible day to day, and SLA breaches surface only at percentile-reporting time. Treat async SLAs as first-class measurement; do not let them become the trailing indicator that surprises leadership.

- Voice pre-emption assumed always-on. Some platforms allow voice to interrupt chat; some do not. The blending policy in the routing config determines reality. Audit explicitly.

- Mis-tuned concurrency ramp. New chat agents are put on k = 2 from day one. Concurrency is a learned skill: keep new agents at k = 1 through weeks 1-6, then move to k = 2 once base proficiency is established. Pushing concurrency at low tenure produces both poor service and high attrition.

- Channel-segregated forecasting that ignores cross-channel substitution. Customers move between channels — a chat that doesn't resolve becomes a call. Forecasts that treat each channel as independent miss the substitution and the demand spillover.

- Reporting a headline SL across channels. A single "we hit 80%" computed across all channels combined hides per-channel breaches. Each channel needs its own SL trace.
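A small illustration of why a blended headline misleads, using hypothetical counts for two synchronous channels. The sketch reports both the volume-weighted headline and each channel that misses its own target.

```python
def sl_report(stats):
    """stats maps channel -> (handled_within_target, offered, sl_goal).

    Returns (volume-weighted headline SL, {channel: SL} for channels below goal).
    """
    within = sum(w for w, _, _ in stats.values())
    offered = sum(o for _, o, _ in stats.values())
    headline = within / offered
    breaches = {ch: w / o for ch, (w, o, goal) in stats.items() if w / o < goal}
    return headline, breaches


if __name__ == "__main__":
    headline, breaches = sl_report({
        "voice": (850, 1000, 0.80),  # 85% within 20 s
        "chat": (140, 200, 0.80),    # 70% within 45 s
    })
    print(f"headline {headline:.1%}", breaches)
```

Here the headline reads 82.5% and clears an 80% target while chat sits at 70%, a breach that only the per-channel view exposes.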

Maturity Model Position

- Level 1 — Initial (Emerging Operations) — Single channel only or unblended channels with no shared planning. Channel teams operate as silos. Cross-channel customer journeys not visible to operations.

- Level 2 — Foundational (Traditional WFM Excellence) — Multi-channel exists but planning is per-channel, additive. Concurrency set by platform default or vendor recommendation. Blending policy informal. Async-channel SLAs reported but not actively managed.

- Level 3 — Progressive (Breaking the Monolith) — Channels planned together. Blending policy explicit and tenure-aware. Concurrency calibrated against quality data. Async work scheduled into synchronous-channel troughs. Per-channel SLs reported with discipline.

- Level 4 — Advanced (The Ecosystem Emerges) — Routing across channels integrates real-time agent state, channel queue state, and customer-context. Blending policies dynamic — adjusting tenure-and-skill mix by interval. The cognitive-load constraint respected for chat-concurrency design. Customer-journey analytics inform cross-channel substitution and demand spillover.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — Multi-channel is part of an integrated supply-and-demand orchestration layer. Channels co-optimized with pool decisions (which channel work routes to AI, to Pool Collab, to Pool Spec). Concurrency, blending, and routing tuned continuously against business-value-aware objectives.

References

- Koole, G. (2013). Call Center Optimization. MG Books. Open access at https://www.cs.vu.nl/~koole/ccmath/book.pdf. The blending and multi-channel chapters are the practitioner reference.

- Aksin, Z., Armony, M., & Mehrotra, V. (2007). "The modern call center: A multi-disciplinary perspective on operations management research." Production and Operations Management 16(6), 665-688. Foundational treatment of chat-concurrency and blending math.

- Tezcan, T., & Behzad, B. (2012). "Robust design and control of call centers with flexible interactive voice response systems." Manufacturing & Service Operations Management 14(3), 386-401. Blending math under uncertainty.

- Gans, N., Koole, G., & Mandelbaum, A. (2003). "Telephone call centers: tutorial, review, and research prospects." Manufacturing & Service Operations Management 5(2), 79-141.

- Mandelbaum, A., & Zeltyn, S. (2009). "Staffing many-server queues with impatient customers: Constraint satisfaction in call centers." Operations Research 57(5), 1189-1205. Multi-class staffing relevant to mixed-channel operations.

See Also

- Digital Messaging — The customer-facing channels this operations discipline staffs

- Omnichannel Customer Engagement — The customer-experience counterpart to blended operations

- Skill-Based Routing — peer page; channel-routing is a special case

- Pooling Theory — peer page; pooling math behind blending benefit

- Long-Run Workforce Sizing — peer page

- Multi-Skill Scheduling — scheduling layer that consumes multi-channel demand

- Cognitive Portfolio Model (N*) — the cognitive constraint behind chat-concurrency

- Erlang-A — per-channel staffing baseline

- Service Level — per-channel SL targets

- Cross-Training and Skill Mix Strategy — cross-channel skill investment

- Three-Pool Architecture — pool-aware channel routing

- Workforce Forecasting — channel-specific forecast inputs

- Capacity Planning Methods — operational layer

- Next Generation Routing — routing maturity arc

- Variance Harvesting — async-into-slack is a Variance Harvesting pattern

- Multi Site and Network Capacity Planning

Notes

[1] Aksin, Z., Armony, M., & Mehrotra, V. (2007). "The modern call center: A multi-disciplinary perspective on operations management research." Production and Operations Management 16(6), 665-688.

[2] Tezcan, T. (2008). "Optimal control of distributed parallel server systems under the Halfin-Whitt regime." Mathematics of Operations Research 33(1), 51-90.