Information Theory for Workforce Intelligence

Information Theory for Workforce Intelligence applies Claude Shannon's mathematical theory of communication to workforce management problems. Shannon's framework (1948) provides tools to measure uncertainty, quantify the information content of signals, detect distribution shifts, and assess the value of additional data. For WFM, this translates to: How predictable is demand? How much does one channel's volume tell us about another? When has the demand pattern fundamentally changed? Is investing in better forecasting data worth the cost?

Overview

Workforce management runs on information — demand forecasts, adherence data, quality scores, customer sentiment signals. But not all information is equally valuable, and not all uncertainty is equally reducible. Information theory provides the mathematical framework to quantify these distinctions.

The core concepts:

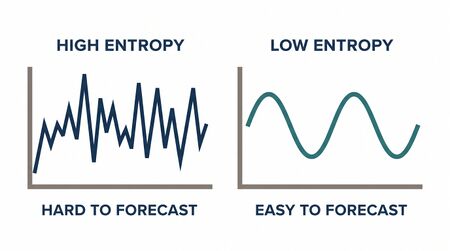

- Entropy measures how uncertain (or unpredictable) a random variable is. High-entropy demand patterns are harder to forecast. Low-entropy patterns are stable and predictable.

- Mutual information measures how much knowing one variable reduces uncertainty about another. High mutual information between chat and phone volumes means they share a common demand driver.

- KL divergence measures how different two probability distributions are. A sudden increase in KL divergence between the current week's volume distribution and the historical baseline signals a regime change.

- Cross-entropy is the loss function underlying most classification ML models used in WFM (churn prediction, escalation classification, quality scoring).

These are not abstract concepts — they have direct operational interpretations for WFM practitioners.

Mathematical Foundation

Shannon Entropy

For a discrete random variable $X$ with possible values $x_1, \dots, x_n$ and probabilities $p(x_1), \dots, p(x_n)$:

$$H(X) = -\sum_{i=1}^{n} p(x_i) \log_2 p(x_i)$$

measured in bits (when using log base 2).

Intuition: Entropy is roughly the minimum average number of yes/no questions needed to determine the outcome (an exact lower bound, approached when many outcomes are identified together). A fair coin has entropy 1 bit. A loaded coin (90% heads) has entropy 0.47 bits: less uncertain, so fewer questions are needed on average. A deterministic outcome has entropy 0, so no questions are needed.
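The coin figures above can be checked with a few lines of Python. This is a minimal sketch; the function name `shannon_entropy` is an illustrative choice, not something defined elsewhere in this article.

```python
import math

def shannon_entropy(probs):
    """Entropy in bits of a discrete distribution given as a list of probabilities."""
    # Zero-probability outcomes are skipped: p * log2(p) tends to 0 as p -> 0.
    return sum(-p * math.log2(p) for p in probs if p > 0)

fair_coin = shannon_entropy([0.5, 0.5])
loaded_coin = shannon_entropy([0.9, 0.1])
certain = shannon_entropy([1.0])

print(f"fair coin:     {fair_coin:.2f} bits")
print(f"loaded coin:   {loaded_coin:.2f} bits")
print(f"deterministic: {certain:.2f} bits")
```

The same function applies to any binned volume distribution once the bin frequencies are normalized to sum to 1.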

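For continuous distributions with density $f(x)$, differential entropy replaces the sum with an integral:

$$h(X) = -\int f(x) \log_2 f(x) \, dx$$

A normal distribution with standard deviation σ has differential entropy $\tfrac{1}{2} \log_2(2 \pi e \sigma^2)$ bits, so entropy increases with variance, as expected.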

Mutual Information

Mutual information measures the shared information between two random variables:

$$I(X;Y) = \sum_{x,y} p(x,y) \log_2 \frac{p(x,y)}{p(x)\,p(y)}$$

Equivalently:

$$I(X;Y) = H(X) - H(X \mid Y) = H(Y) - H(Y \mid X)$$

Intuition: Mutual information is the reduction in uncertainty about X when you observe Y (and vice versa). If $I(X;Y) = 0$, X and Y are independent: knowing one tells you nothing about the other. If $I(X;Y) = H(X)$, Y completely determines X.
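The two limiting cases (independence and complete determination) can be verified numerically. The sketch below computes mutual information from a small joint probability table; the tables and the name `mutual_information` are illustrative assumptions.

```python
import math

def mutual_information(joint):
    """I(X;Y) in bits from a joint probability table joint[x][y]."""
    px = [sum(row) for row in joint]          # marginal distribution of X
    py = [sum(col) for col in zip(*joint)]    # marginal distribution of Y
    mi = 0.0
    for i, row in enumerate(joint):
        for j, pxy in enumerate(row):
            if pxy > 0:
                mi += pxy * math.log2(pxy / (px[i] * py[j]))
    return mi

# X and Y independent: the joint table is the product of its marginals.
independent = [[0.25, 0.25],
               [0.25, 0.25]]
# Y completely determines X: all mass sits on the diagonal.
determined = [[0.5, 0.0],
              [0.0, 0.5]]

mi_independent = mutual_information(independent)
mi_determined = mutual_information(determined)
print(mi_independent, mi_determined)
```

In the second table X is a fair binary variable with 1 bit of entropy, and the mutual information equals that full entropy.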

KL Divergence

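The Kullback-Leibler divergence measures how one probability distribution P diverges from a reference distribution Q:

$$D_{KL}(P \,\|\, Q) = \sum_{x} P(x) \log_2 \frac{P(x)}{Q(x)}$$

Properties:

- $D_{KL}(P \,\|\, Q) \geq 0$ always (Gibbs' inequality)

- $D_{KL}(P \,\|\, Q) = 0$ if and only if P = Q

- KL divergence is not symmetric: $D_{KL}(P \,\|\, Q) \neq D_{KL}(Q \,\|\, P)$ in general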

Intuition: KL divergence measures the "information cost" of using distribution Q when the true distribution is P. In WFM: if you staff based on last year's demand distribution (Q) but this year's actual distribution (P) has shifted, the KL divergence quantifies how far the assumed distribution is from the actual one.
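A small numerical sketch of the non-negativity and asymmetry properties follows. The two three-bin demand distributions are made-up illustrative values, and the function assumes Q is nonzero wherever P is nonzero.

```python
import math

def kl_divergence(p, q):
    """D_KL(P || Q) in bits. Assumes q[i] > 0 wherever p[i] > 0."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical share of days falling in low / medium / high volume bins.
last_year = [0.30, 0.40, 0.30]   # reference distribution Q
this_year = [0.05, 0.25, 0.70]   # actual distribution P

forward = kl_divergence(this_year, last_year)   # D_KL(P || Q)
reverse = kl_divergence(last_year, this_year)   # D_KL(Q || P)
same = kl_divergence(this_year, this_year)      # D_KL(P || P)

print(f"D(P||Q) = {forward:.3f} bits")
print(f"D(Q||P) = {reverse:.3f} bits")
print(f"D(P||P) = {same:.3f} bits")
```

Because the two directions give different numbers, a monitoring setup should fix one convention (for example, current data as P and the baseline as Q) and keep it.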

Cross-Entropy

Cross-entropy between distributions P and Q:

$$H(P, Q) = -\sum_{x} P(x) \log_2 Q(x) = H(P) + D_{KL}(P \,\|\, Q)$$

Cross-entropy is the expected number of bits needed to encode data from P using a code optimized for Q. It equals the true entropy plus the divergence penalty for using the wrong distribution.

ML connection: When training a classification model (escalation prediction, churn scoring), cross-entropy loss is the standard objective function. Because the true entropy is fixed by the data and does not depend on the model, minimizing cross-entropy is equivalent to minimizing the KL divergence between reality and the model's predictions, which makes the model's probability estimates as close to the truth as possible.
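The relationship "cross-entropy equals true entropy plus the KL penalty" can be confirmed on a toy example. The distributions below are illustrative assumptions; `model_a` is deliberately closer to `truth` than `model_b`.

```python
import math

def entropy(p):
    return sum(-pi * math.log2(pi) for pi in p if pi > 0)

def kl_divergence(p, q):
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def cross_entropy(p, q):
    """Expected bits to encode draws from p using a code built for q."""
    return sum(-pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)

truth = [0.7, 0.2, 0.1]      # hypothetical true class frequencies
model_a = [0.6, 0.3, 0.1]    # close to the truth
model_b = [0.2, 0.3, 0.5]    # far from the truth

for name, model in [("model_a", model_a), ("model_b", model_b)]:
    ce = cross_entropy(truth, model)
    decomposed = entropy(truth) + kl_divergence(truth, model)
    print(f"{name}: cross-entropy {ce:.3f} bits, H + KL {decomposed:.3f} bits")
```

Since the entropy of `truth` is the same in both rows, the model with the lower cross-entropy is also the one with the lower KL divergence.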

Entropy Rate

For a stochastic process $\{X_t\}$, the entropy rate captures the asymptotic per-symbol uncertainty:

$$H(\mathcal{X}) = \lim_{n \to \infty} \frac{1}{n} H(X_1, X_2, \dots, X_n)$$

For a stationary process, this equals $\lim_{n \to \infty} H(X_n \mid X_{n-1}, \dots, X_1)$, the conditional entropy of the next observation given the full history. A low entropy rate means the process is highly predictable from its history.
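As an illustration, consider a two-state Markov chain (say, "normal" and "busy" intervals). The Markov assumption is introduced here only for the sketch and is not part of the article's method. For a stationary Markov chain the entropy rate is the stationary-weighted average of the entropies of the transition rows.

```python
import math

def binary_entropy(p):
    """Entropy in bits of a two-outcome distribution (p, 1 - p)."""
    return sum(-x * math.log2(x) for x in (p, 1 - p) if x > 0)

def markov_entropy_rate(a, b):
    """Entropy rate in bits per step of a two-state Markov chain.

    a = P(state 0 -> state 1), b = P(state 1 -> state 0), with a + b > 0.
    """
    pi0 = b / (a + b)   # stationary probability of state 0
    pi1 = a / (a + b)   # stationary probability of state 1
    return pi0 * binary_entropy(a) + pi1 * binary_entropy(b)

# Both chains spend half their time in each state (marginal entropy 1 bit).
sticky_rate = markov_entropy_rate(0.1, 0.1)       # states tend to persist
memoryless_rate = markov_entropy_rate(0.5, 0.5)   # history is uninformative

print(f"sticky chain:     {sticky_rate:.3f} bits/step")
print(f"memoryless chain: {memoryless_rate:.3f} bits/step")
```

The two chains have the same marginal distribution, but the sticky chain has a much lower entropy rate because the previous state is informative about the next one.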

WFM Applications

Entropy of Arrival Patterns: How Predictable Is Demand?

Problem: Two queues have the same average daily volume (500 calls) but different variability. Queue A follows a tight pattern (always 480–520). Queue B swings between 300 and 700. Which queue is harder to forecast?

Entropy analysis: Discretize daily volumes into bins (e.g., 50-call buckets). Compute the entropy of each queue's volume distribution.

Queue A: Most probability mass in 2 bins → entropy ≈ 1.2 bits. Queue B: Probability spread across 8 bins → entropy ≈ 2.8 bits.

Queue B has 2.3× the entropy of Queue A — it requires more than twice the information to predict. Staffing Queue B requires larger buffers, more frequent intraday adjustments, and more conservative planning.

Practical use: Rank all queues by arrival pattern entropy. High-entropy queues get more analyst attention, larger safety staffing margins, and more frequent forecast updates. Low-entropy queues can be forecast with simple methods and tighter buffers.
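The binning-and-entropy step can be sketched as follows. The volumes are synthetic (uniform draws over the ranges quoted for Queue A and Queue B), so the resulting numbers illustrate the method and will not reproduce the 1.2 and 2.8 bit figures above.

```python
import math
import random
from collections import Counter

def binned_entropy(volumes, bin_width=50):
    """Entropy in bits of daily volumes after grouping into fixed-width bins."""
    counts = Counter(int(v // bin_width) for v in volumes)
    n = len(volumes)
    return sum(-(c / n) * math.log2(c / n) for c in counts.values())

rng = random.Random(0)  # fixed seed so the sketch is repeatable
queue_a = [rng.uniform(480, 520) for _ in range(365)]   # tight pattern
queue_b = [rng.uniform(300, 700) for _ in range(365)]   # wide swings

h_a = binned_entropy(queue_a)
h_b = binned_entropy(queue_b)
print(f"Queue A: {h_a:.2f} bits, Queue B: {h_b:.2f} bits")
```

The result depends on the bin width, so all queues being compared should be binned the same way.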

Mutual Information Between Channels

Problem: When chat volume spikes, does phone volume spike too? Does email volume predict next-week phone volume?

Analysis: Compute $I(\text{Phone}; \text{Chat})$, the mutual information between same-interval phone and chat volumes.

Example calculation: Using 6 months of 15-minute interval data:

| Channel Pair | Mutual Information (bits) | Interpretation |
|---|---|---|
| Phone ↔ Chat (same interval) | 0.82 | Strong relationship; shared demand driver |
| Phone ↔ Email (same interval) | 0.31 | Weak relationship; different patterns |
| Chat today ↔ Phone tomorrow | 0.15 | Minimal predictive value across days |
| Phone ↔ Phone (lag 1 interval) | 1.45 | Strong autocorrelation within channel |

Operational insight: The high phone-chat mutual information (0.82 bits) means a chat volume spike should trigger an immediate phone staffing check — they share a common demand driver (likely a service outage or product issue). The weak phone-email relationship means email staffing can be managed independently.

Feature selection for ML forecasting: Mutual information quantifies which input features carry the most information about the target variable. When building a forecasting model, rank candidate features by mutual information with the target (e.g., volume in the next interval). Keep high-MI features, drop low-MI features. This is more principled than correlation analysis because mutual information captures non-linear relationships.
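A minimal sketch of ranking candidate features by mutual information with the target is shown below. It uses a simple plug-in estimate over fixed-width bins and synthetic data in which chat shares a driver with phone while email does not. The data, the bin width, and the name `binned_mi` are all illustrative assumptions.

```python
import math
import random
from collections import Counter

def binned_mi(xs, ys, bin_width):
    """Plug-in estimate of I(X;Y) in bits from paired samples, fixed-width bins."""
    n = len(xs)
    bx = [int(x // bin_width) for x in xs]
    by = [int(y // bin_width) for y in ys]
    cx, cy, cxy = Counter(bx), Counter(by), Counter(zip(bx, by))
    # p(x,y) / (p(x) p(y)) simplifies to c * n / (cx * cy) in terms of counts.
    return sum(
        (c / n) * math.log2(c * n / (cx[i] * cy[j]))
        for (i, j), c in cxy.items()
    )

rng = random.Random(1)
driver = [rng.gauss(100, 20) for _ in range(5000)]    # shared demand driver
phone = [d + rng.gauss(0, 5) for d in driver]         # target
chat = [d + rng.gauss(0, 5) for d in driver]          # shares the driver
email = [rng.gauss(100, 20) for _ in range(5000)]     # unrelated to the driver

features = {"chat": chat, "email": email}
scores = {name: binned_mi(phone, values, 10) for name, values in features.items()}
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking, scores)
```

Plug-in estimates of this kind are biased upward when there are few samples per bin, so an unrelated feature will usually score slightly above zero. Compare scores against each other rather than against an absolute cutoff.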

KL Divergence for Distribution Monitoring (Regime Detection)

Problem: Has the demand pattern changed? The forecast was built on 12 months of history, but the last 3 weeks feel different. Is it noise or a structural shift?

KL divergence monitor: Each week, compute the KL divergence between the current week's interval-level volume distribution and the trailing 12-month baseline.

| Week | KL Divergence (bits) | Status |
|---|---|---|
| Week 1 | 0.02 | Normal (within noise) |
| Week 2 | 0.03 | Normal |
| Week 3 | 0.05 | Elevated: watch |
| Week 4 | 0.12 | Alert: regime change likely |
| Week 5 | 0.18 | Confirmed shift |

When KL divergence exceeds a threshold (calibrated from historical false-positive rates), trigger a forecast rebuild using only recent data. This is more rigorous than ad hoc "the forecast is off" intuition — it quantifies exactly how much the distribution has shifted.
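One way such a monitor could be sketched is shown below. The bin width, the additive smoothing constant `alpha` (used so that empty baseline bins do not produce a division by zero), the `ALERT_THRESHOLD` value, and the synthetic Gaussian volumes are all hypothetical choices for illustration. A real threshold would be calibrated from historical false-positive rates as described above.

```python
import math
import random
from collections import Counter

def to_bin(v, bin_width):
    return int(v // bin_width)

def smoothed_distribution(volumes, bins, bin_width, alpha):
    """Bin frequencies with additive smoothing so every bin has nonzero mass."""
    counts = Counter(to_bin(v, bin_width) for v in volumes)
    total = len(volumes) + alpha * len(bins)
    return [(counts[b] + alpha) / total for b in bins]

def weekly_kl(week, baseline, bin_width=10, alpha=0.5):
    """D_KL(week || baseline) in bits over a shared set of bins."""
    bins = sorted({to_bin(v, bin_width) for v in list(week) + list(baseline)})
    p = smoothed_distribution(week, bins, bin_width, alpha)
    q = smoothed_distribution(baseline, bins, bin_width, alpha)
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q))

ALERT_THRESHOLD = 0.10  # hypothetical value, in bits

rng = random.Random(42)
baseline = [rng.gauss(100, 15) for _ in range(20000)]    # trailing history
normal_week = [rng.gauss(100, 15) for _ in range(672)]   # 7 days x 96 intervals
shifted_week = [rng.gauss(120, 15) for _ in range(672)]  # mean volume up 20%

kl_normal = weekly_kl(normal_week, baseline)
kl_shifted = weekly_kl(shifted_week, baseline)
for label, kl in [("normal week", kl_normal), ("shifted week", kl_shifted)]:
    status = "ALERT" if kl > ALERT_THRESHOLD else "normal"
    print(f"{label}: {kl:.3f} bits -> {status}")
```

Even an unchanged week produces a small positive divergence from sampling noise, which is why the threshold has to sit above the typical noise level rather than at zero.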

Extension: Monitor KL divergence separately for:

- Volume distribution (has demand changed?)

- AHT distribution (has call complexity changed?)

- Arrival pattern (has the intraday shape changed?)

- Abandonment distribution (has customer patience changed?)

Each detects a different type of regime change requiring different operational responses.

Information Value for Measurement Decisions

Problem: The WFM team is considering investing in speech analytics to classify call types in real time, enabling more granular volume-by-type forecasts. Is the investment worth the improvement in forecast accuracy?

Value of information framework:

- Current entropy: Forecast the total volume $V$ with entropy $H(V)$ = 3.2 bits.

- Conditional entropy with call-type data: If call types $T$ were known, $H(V \mid T)$ = 2.1 bits.

- Information gain: $I(V; T) = H(V) - H(V \mid T)$ = 3.2 − 2.1 = 1.1 bits.

- Translate to forecast accuracy: The 1.1-bit information gain corresponds to a roughly 25% reduction in forecast error variance (an empirical mapping calibrated from historical analysis).

- Translate to staffing cost: 25% better forecast accuracy → ~4% reduction in staffing buffer → $280K annual labor savings for a 500-agent center.

- Compare to investment: Speech analytics platform costs $150K/year.

Decision: Net value = $280K − $150K = $130K/year. The information is worth acquiring.
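The arithmetic of the decision can be laid out explicitly. All figures are the example values quoted above, and the variable names are illustrative.

```python
# Entropy figures from the example, in bits.
h_volume = 3.2               # uncertainty about volume today
h_volume_given_type = 2.1    # uncertainty if call types were known
information_gain = h_volume - h_volume_given_type

# Dollar figures from the example, per year.
annual_labor_savings = 280_000
annual_platform_cost = 150_000
net_value = annual_labor_savings - annual_platform_cost

worth_acquiring = net_value > 0
print(f"information gain: {information_gain:.1f} bits")
print(f"net value: ${net_value:,}/year, acquire: {worth_acquiring}")
```

The weakest links in the chain are the two translation steps (bits to forecast error, forecast error to staffing cost), so those are the inputs worth stress-testing before committing to the purchase.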

This framework — quantifying information value before committing to measurement investments — is core to the Applied Information Economics methodology. Information theory provides the mathematical scaffolding. See also: Bayesian Methods for Workforce Forecasting for the related concept of value of information through Bayesian decision theory.

Cross-Entropy Loss in ML Models for WFM

When training ML models for WFM applications (escalation prediction, churn scoring, quality classification), cross-entropy loss is the standard training objective:

$$L = -\sum_{i} y_i \log \hat{y}_i$$

where $y_i$ is the true label (1 for the observed class $i$, 0 otherwise) and $\hat{y}_i$ is the model's predicted probability for that class.

Intuition: Cross-entropy penalizes confident wrong predictions heavily and rewards calibrated probability estimates. A model that says "90% chance of escalation" when escalation actually occurs gets a small loss. The same model saying "10% chance" gets a large loss.

WFM relevance: Well-calibrated probability estimates matter more than binary classification accuracy for WFM decisions. An escalation prediction model with calibrated probabilities enables staffing the escalation queue proportionally — 100 calls predicted with 30% escalation probability → expect 30 escalations → staff accordingly. A model that is accurate but miscalibrated (says 30% but the true rate is 50%) leads to understaffing.
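Both points (the penalty for confident wrong predictions and the staffing use of calibrated probabilities) can be sketched for the binary escalation case. The per-example loss below is the usual binary form, −[y log p + (1 − y) log(1 − p)], and it requires p strictly between 0 and 1. The call counts and rates are the example values from the text.

```python
import math

def binary_cross_entropy(y_true, p_pred):
    """Per-example log loss in nats. y_true is 0 or 1; p_pred is P(y = 1)."""
    return -(y_true * math.log(p_pred) + (1 - y_true) * math.log(1 - p_pred))

# An escalation actually occurs (y = 1).
loss_confident_right = binary_cross_entropy(1, 0.9)
loss_confident_wrong = binary_cross_entropy(1, 0.1)
print(f"predicted 90%: loss {loss_confident_right:.3f}")
print(f"predicted 10%: loss {loss_confident_wrong:.3f}")

# Staffing from predicted probabilities: expected escalations = sum of probabilities.
predicted = [0.30] * 100
expected_escalations = sum(predicted)

# If the model is miscalibrated and the true rate is 50%, the plan falls short.
true_rate = 0.50
actual_expected = true_rate * len(predicted)
shortfall = actual_expected - expected_escalations
print(f"planned for {expected_escalations:.0f}, actual expectation {actual_expected:.0f}, "
      f"shortfall {shortfall:.0f}")
```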

Worked Example: Entropy-Based Queue Difficulty Ranking

Setup: A center operates 5 queues. For each, compute the entropy of the daily volume distribution using 90 days of data.

| Queue | Avg Daily Volume | Std Dev | Entropy (bits) | Rank (hardest first) |
|---|---|---|---|---|
| Sales Inbound | 800 | 250 | 4.2 | 1 (hardest) |
| Technical Support | 1,200 | 300 | 3.8 | 2 |
| Billing | 600 | 80 | 2.1 | 4 |
| General Inquiry | 400 | 180 | 3.5 | 3 |
| Account Closure | 50 | 40 | 1.8 | 5 (easiest) |

Interpretation:

- Sales Inbound has the highest entropy despite not having the highest volume. Its daily volumes are widely dispersed (standard deviation 250 on a mean of 800, CoV ≈ 0.31). This queue needs the most conservative staffing buffers and the most frequent forecast updates.

- Billing has low entropy — predictable, stable. Simple forecasting methods suffice.

- General Inquiry has moderate volume but high entropy — likely driven by unpredictable external events. Consider mutual information analysis with external signals (product launches, outage reports) to reduce uncertainty.

Action: Allocate analyst attention proportionally to entropy. The Sales Inbound forecast should be reviewed daily; the Billing forecast can be reviewed weekly.
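The ranking step itself is a simple sort. The sketch below uses the figures from the table above and also prints each queue's coefficient of variation for comparison.

```python
queues = [
    # (name, avg daily volume, std dev, entropy in bits)
    ("Sales Inbound", 800, 250, 4.2),
    ("Technical Support", 1200, 300, 3.8),
    ("Billing", 600, 80, 2.1),
    ("General Inquiry", 400, 180, 3.5),
    ("Account Closure", 50, 40, 1.8),
]

# Hardest first: highest entropy at the top.
ranked = sorted(queues, key=lambda q: q[3], reverse=True)
ranked_names = [q[0] for q in ranked]

for rank, (name, mean, std, h) in enumerate(ranked, start=1):
    print(f"{rank}. {name:<18} entropy={h:.1f} bits  CoV={std / mean:.2f}")
```

In this table the CoV ordering differs from the entropy ordering (Account Closure has the highest CoV, 0.80, but the lowest entropy), so the two measures are not interchangeable when ranking queues.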

Maturity Model Position

Information theory concepts map to the WFM Labs Maturity Model:

- Level 1 (Reactive): No formal uncertainty measurement. Forecasts treated as deterministic.

- Level 2 (Established): Basic variability measures (standard deviation, coefficient of variation) used for buffer sizing.

- Level 3 (Advanced): Entropy-based queue difficulty ranking. Mutual information for channel relationship analysis. KL divergence for regime detection.

- Level 4 (Optimized): Information value analysis for measurement investments. Cross-entropy-optimized ML models for WFM classification tasks. Feature selection via mutual information.

- Level 5 (Autonomous): Continuous distribution monitoring with automated regime change detection and forecast model switching. Information-theoretic optimal experimental design for routing and scheduling experiments.

See Also

- Operations Research in Workforce Management

- Forecasting

- Forecast Accuracy Metrics

- Bayesian Methods for Workforce Forecasting

- Discrete Event Simulation

- WFM Labs Maturity Model

References

- Shannon, C.E. "A Mathematical Theory of Communication." Bell System Technical Journal 27, 1948. — The founding paper.

- Cover, T.M. and Thomas, J.A. Elements of Information Theory, 2nd ed. Wiley, 2006. — The standard textbook.

- Hubbard, D.W. How to Measure Anything, 3rd ed. Wiley, 2014. — Value of information and applied information economics.

- MacKay, D.J.C. Information Theory, Inference, and Learning Algorithms. Cambridge University Press, 2003. — Bridges information theory, Bayesian inference, and machine learning.

- Koole, G. Call Center Optimization. MG Books, 2013. — Demand variability analysis and forecasting quality measurement.