Fairness in Algorithmic Scheduling

Fairness in Algorithmic Scheduling examines how workforce scheduling algorithms distribute benefits and burdens across employees, and what it means — mathematically and operationally — for those distributions to be "fair." As scheduling decisions increasingly depend on optimization algorithms and AI systems, the question of fairness shifts from managerial judgment to algorithmic design. This page formalizes fairness concepts, identifies impossibility results that constrain what any algorithm can achieve, and provides practical frameworks for auditing and improving WFM scheduling fairness.

Overview

Every scheduling algorithm makes trade-offs. An optimizer minimizing total cost may consistently assign undesirable shifts to the same subset of employees, typically those with the least seniority, the most flexible availability, or characteristics that happen to correlate with protected attributes. The algorithm isn't trying to be unfair; it's exploiting patterns in the data to minimize cost. But the effect on employees is the same as if it were.

Fairness in scheduling matters for three reasons:

- Legal and regulatory — Disparate impact doctrine (U.S.), indirect discrimination (EU), and the emerging EU AI Act all constrain algorithmic decision-making that systematically disadvantages protected groups.

- Operational — Perceived unfairness drives attrition. Attrition drives hiring and training costs. The "optimal" schedule that burns out half the workforce isn't optimal.

- Ethical — Employees are stakeholders, not interchangeable resources. Dignified treatment of human workers is a baseline, not an optimization objective.

The challenge: fairness is not a single, unambiguous criterion. Multiple definitions exist, they conflict with each other, and they conflict with efficiency. Understanding these tensions is a prerequisite to navigating them.

Mathematical Foundation

Formal Fairness Definitions

Let $A$ denote a protected attribute (e.g., gender, age group, tenure bracket), $Y$ denote the scheduling outcome (shift quality, overtime hours, weekend assignments), and $\hat{Y}$ denote the algorithm's assignment.

Demographic Parity (Statistical Parity): The distribution of scheduling outcomes is independent of the protected attribute.

$$P(\hat{Y} = y \mid A = a) = P(\hat{Y} = y \mid A = a') \quad \text{for all } y, a, a'$$

In WFM terms: the rate at which employees over 50 receive weekend shifts (for example, weekend shifts per employee) should equal the rate for employees under 50. This is the simplest criterion but also the crudest: it ignores legitimate reasons for differential treatment (different availability, different skill sets).

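Equalized Odds: Conditional on the "true label" $Y$ (the legitimate scheduling factor), outcomes are independent of the protected attribute.

$$P(\hat{Y} = \hat{y} \mid Y = y, A = a) = P(\hat{Y} = \hat{y} \mid Y = y, A = a') \quad \text{for all } \hat{y}, y, a, a'$$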

In WFM terms: among employees with identical availability and skill profiles, scheduling outcomes should be independent of protected attributes. This allows differential treatment when it stems from legitimate factors.
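A minimal audit sketch for the two group criteria above, assuming one record per employee with a binary outcome (1 = assigned a weekend shift) and a categorical legitimate factor such as availability width. The field names and records are hypothetical. The conditional check follows the WFM reading of equalized odds given here (parity within each stratum of the legitimate factor); it is not a full error-rate analysis.

```python
from collections import defaultdict

def group_rates(records, group_key, outcome_key, stratum_key=None):
    """Mean of a 0/1 outcome per group, or per (stratum, group) if stratum_key is set."""
    sums, counts = defaultdict(float), defaultdict(int)
    for r in records:
        key = (r[stratum_key], r[group_key]) if stratum_key else r[group_key]
        sums[key] += r[outcome_key]
        counts[key] += 1
    return {k: sums[k] / counts[k] for k in counts}

def parity_gap(records, group_key, outcome_key):
    """Demographic parity check: largest difference in outcome rate between groups."""
    rates = group_rates(records, group_key, outcome_key)
    return max(rates.values()) - min(rates.values())

def conditional_parity_gaps(records, group_key, outcome_key, stratum_key):
    """Parity gap within each stratum of the legitimate factor.

    A stratum containing only one group reports 0.0, so check group coverage too.
    """
    rates = group_rates(records, group_key, outcome_key, stratum_key)
    per_stratum = defaultdict(list)
    for (stratum, _group), rate in rates.items():
        per_stratum[stratum].append(rate)
    return {s: max(v) - min(v) for s, v in per_stratum.items()}

# Hypothetical records: weekend = 1 if the employee was assigned a weekend shift.
records = [
    {"age": "50+", "availability": "narrow", "weekend": 0},
    {"age": "50+", "availability": "narrow", "weekend": 0},
    {"age": "50+", "availability": "wide", "weekend": 1},
    {"age": "<50", "availability": "wide", "weekend": 1},
    {"age": "<50", "availability": "wide", "weekend": 1},
    {"age": "<50", "availability": "narrow", "weekend": 0},
]
print(parity_gap(records, "age", "weekend"))
print(conditional_parity_gaps(records, "age", "weekend", "availability"))
```

In this toy data, over-50 employees receive weekend shifts at a rate of 1/3 versus 2/3 for younger employees, so demographic parity fails, yet within each availability stratum the rates match exactly: the whole raw gap is explained by availability.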

Individual Fairness (Dwork et al., 2012): Similar individuals should receive similar outcomes.

$$D\big(\hat{Y}_i, \hat{Y}_j\big) \le L \cdot d(i, j) \quad \text{for all agents } i, j$$

where $D$ measures outcome distance, $d$ measures the distance (dissimilarity) between individuals, and $L$ is a Lipschitz constant. Two agents with similar skills, tenure, and preferences should receive schedules of similar quality. The difficulty lies in defining "similar": the choice of distance metric encodes values.
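The Lipschitz condition can be checked pairwise once both metrics are fixed. In this sketch the choices are hypothetical: the individual distance is Euclidean distance over a two-feature profile (a skill score from 0 to 1 and tenure in years), and the outcome distance is the absolute difference in a schedule quality score. Because tenure dominates the unscaled Euclidean distance, feature scaling is itself one of the value judgments described above.

```python
import itertools
import math

def lipschitz_violations(profiles, quality, L):
    """Return agent pairs whose quality difference exceeds L times their profile distance."""
    violations = []
    for i, j in itertools.combinations(sorted(profiles), 2):
        d = math.dist(profiles[i], profiles[j])   # hypothetical individual distance
        D = abs(quality[i] - quality[j])          # hypothetical outcome distance
        if D > L * d:
            violations.append((i, j))
    return violations

# Hypothetical agents: (skill score, tenure in years) and schedule quality scores.
profiles = {"a": (0.9, 5.0), "b": (0.9, 5.0), "c": (0.2, 1.0)}
quality = {"a": 80.0, "b": 62.0, "c": 60.0}
print(lipschitz_violations(profiles, quality, L=5.0))  # → [('a', 'b')]
```

Agents a and b have identical profiles but schedules 18 points apart, so any finite constant flags them; a and c differ by 20 points but are far enough apart in profile space to pass at this constant.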

Envy-Freeness (from fair division theory): No agent prefers another agent's schedule to their own. Formally:

$$u_i(S_i) \ge u_i(S_j) \quad \text{for all agents } i, j$$

where $u_i(S_j)$ is agent $i$'s utility for agent $j$'s schedule $S_j$. Perfect envy-freeness is typically infeasible in scheduling because shifts are indivisible goods. The practical target is approximate envy-freeness or envy-freeness up to one item (EF1): any envy agent $i$ feels toward agent $j$ disappears once a single shift is removed from $S_j$, so no agent envies another by more than the value of one shift assignment.
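A sketch of an EF1 check, assuming additive utilities and shifts treated as goods (all utilities non-negative); agent names, shift labels, and values are hypothetical. For each envious pair it removes the shift in the envied schedule that the envious agent values most, which is the single removal most likely to eliminate the envy. With additive goods an EF1 allocation always exists (round-robin picking produces one), so EF1 is always attainable in this setting.

```python
def bundle_value(u, i, bundle):
    """Agent i's additive utility for a set of shifts."""
    return sum(u[i][s] for s in bundle)

def is_envy_free(u, schedules):
    return all(bundle_value(u, i, schedules[i]) >= bundle_value(u, i, schedules[j])
               for i in schedules for j in schedules)

def is_ef1(u, schedules):
    """True if every envy disappears after removing one shift from the envied schedule."""
    for i in schedules:
        own = bundle_value(u, i, schedules[i])
        for j in schedules:
            if i == j:
                continue
            other = bundle_value(u, i, schedules[j])
            if other <= own:
                continue  # no envy toward j
            # Best single removal: the shift in j's schedule that i values most.
            best_single = max((u[i][s] for s in schedules[j]), default=0.0)
            if other - best_single > own:
                return False
    return True

# Hypothetical utilities for three shifts.
u = {
    "ana": {"mon_am": 5, "tue_am": 4, "sat_pm": 1},
    "ben": {"mon_am": 3, "tue_am": 3, "sat_pm": 2},
}
lopsided = {"ana": ["sat_pm"], "ben": ["mon_am", "tue_am"]}
split = {"ana": ["tue_am"], "ben": ["mon_am", "sat_pm"]}
print(is_ef1(u, lopsided), is_envy_free(u, split), is_ef1(u, split))
```

The second split is EF1 but not envy-free: ana still envies ben (6 versus 4), but removing mon_am from ben's schedule leaves 1.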

Max-Min Fairness: Maximize the utility of the worst-off employee.

$$\max_{S} \; \min_{i} \; u_i(S_i)$$

This is the Rawlsian criterion — improve the worst schedule before improving average schedule quality. It connects to lexicographic optimization: first maximize the minimum, then maximize the second-minimum, and so on.
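The lexicographic refinement can be illustrated by comparing sorted utility vectors, worst-off first. This sketch picks among a small hypothetical set of candidate schedules; a production optimizer would instead solve a sequence of max-min problems, fixing each attained minimum before moving to the next.

```python
def leximin_key(utilities):
    """Sorted utilities, worst-off first; Python compares lists lexicographically."""
    return sorted(utilities)

def leximin_best(candidates):
    """Name of the candidate whose sorted utility vector is lexicographically largest."""
    return max(candidates, key=lambda name: leximin_key(candidates[name]))

# Hypothetical per-agent utilities under three candidate schedules.
candidates = {
    "cost_optimal": [9, 8, 8, 2],  # highest total, but one agent is very badly off
    "schedule_b": [6, 6, 5, 4],
    "schedule_c": [7, 6, 4, 4],
}
print(leximin_best(candidates))
```

schedule_b and schedule_c tie on the minimum (4), so plain max-min cannot separate them; leximin prefers schedule_b because its second-worst agent is better off (5 versus 4). The cost-optimal candidate has the highest total utility but loses on the first comparison.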

Impossibility Results

Kleinberg, Mullainathan, and Raghavan (2016) proved that three natural fairness conditions for risk scores cannot hold simultaneously except in two special cases: perfect prediction, or equal base rates across groups:

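- Calibration within groups: in every group, among people assigned score $s$, a fraction $s$ belong to the positive class

- Balance for the positive class: members of the positive class receive the same average score in every group

- Balance for the negative class: members of the negative class receive the same average score in every group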

The implication: no scheduling algorithm can satisfy all fairness criteria simultaneously. Every system must choose which fairness criteria to prioritize, and that choice is fundamentally a value judgment, not a technical one.

Chouldechova (2017) proved that when base rates differ across groups, a classifier with equal positive predictive value across groups cannot also have equal false positive rates and equal false negative rates. In scheduling terms: if one group has genuinely different availability patterns, a scheduling signal that is equally predictive for both groups cannot simultaneously equalize both "rate of receiving undesirable shifts among those available" and "rate of missing desirable shifts among those qualified."
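The arithmetic behind Chouldechova's result is a single identity. For a group with base rate p, true positives make up p(1 - FNR) of its members and false positives are that amount times (1 - PPV)/PPV, so dividing by the negative share 1 - p gives the group's false positive rate. The sketch holds PPV and FNR equal across two groups with hypothetical base rates and shows the false positive rates are forced apart.

```python
def implied_fpr(base_rate, ppv, fnr):
    """False positive rate implied by base rate, PPV, and FNR.

    FPR = p / (1 - p) * (1 - PPV) / PPV * (1 - FNR)
    """
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)

# Equal PPV and FNR in both groups; hypothetical base rates differ.
for group, p in [("group_a", 0.30), ("group_b", 0.50)]:
    print(group, round(implied_fpr(p, ppv=0.8, fnr=0.2), 4))
```

With PPV = 0.8 and FNR = 0.2 in both groups, the group with base rate 0.30 gets a false positive rate of about 0.086 while the group with base rate 0.50 gets 0.2.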

These impossibility results are not theoretical curiosities. They have direct operational implications: any vendor claiming their scheduling algorithm is "completely fair" is either using a narrow definition or making false claims.

Constrained Optimization Framework

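Fairness enters scheduling optimization as a constraint or objective. Consider a shift assignment model in a generic form, where $x_{is} = 1$ if agent $i$ works shift $s$, $c_{is}$ is the cost of that assignment, and $r_s$ is the staffing requirement for shift $s$ (the LP relaxation replaces $x_{is} \in \{0,1\}$ with $0 \le x_{is} \le 1$):

$$\min_{x} \sum_{i}\sum_{s} c_{is}\, x_{is} \quad \text{s.t.} \quad \sum_{i} x_{is} \ge r_s \;\; \forall s, \qquad x_{is} \in \{0, 1\}$$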

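Fairness as constraint: Add bounds on maximum unfairness.

$$\left| \frac{1}{|G_a|} \sum_{i \in G_a} q_i \;-\; \frac{1}{|G_b|} \sum_{i \in G_b} q_i \right| \le \epsilon \quad \forall a, b$$

where $G_a$ is the group with attribute $a$, $q_i$ is schedule quality for agent $i$, and $\epsilon$ is the maximum allowed disparity.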

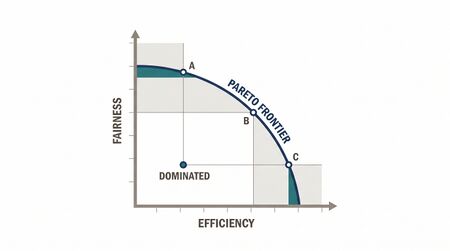

Fairness as objective: Use multi-objective optimization to explore the Pareto frontier between cost efficiency and fairness.

The Pareto frontier reveals the price of fairness (Bertsimas, Farias, and Trichakis, 2011): how much efficiency must be sacrificed for each unit of fairness improvement. In practice, the first units of fairness are often cheap (small efficiency cost), while perfect fairness may be prohibitively expensive.
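A toy version of fairness-as-constraint combined with a Pareto sweep: for each allowed disparity, find the cheapest assignment whose gap in mean schedule quality between two groups stays within it (the epsilon-constraint method). The instance (4 agents, 4 shifts, costs, quality scores, groups) is hypothetical, and brute-force enumeration stands in for an integer-programming solver, so it only scales to tiny cases.

```python
import itertools
import math

# Hypothetical instance: each of 4 shifts is staffed by exactly one of 4 agents.
COST = [[10, 12, 15, 20],    # COST[i][s]: cost of giving shift s to agent i
        [11, 10, 18, 16],
        [14, 13, 10, 12],
        [16, 15, 11, 10]]
QUALITY = [[3, 5, 7, 9],     # QUALITY[i][s]: schedule quality agent i gets from shift s
           [6, 6, 8, 9],
           [2, 4, 6, 8],
           [3, 5, 6, 7]]
GROUPS = {"A": [0, 1], "B": [2, 3]}

def group_gap(assign):
    """Absolute difference in mean schedule quality between groups A and B."""
    means = [sum(QUALITY[i][assign[i]] for i in members) / len(members)
             for members in GROUPS.values()]
    return abs(means[0] - means[1])

def cheapest_within(eps):
    """Min-cost assignment whose group gap is at most eps, or None if infeasible."""
    best = None
    for perm in itertools.permutations(range(4)):  # perm[i] = shift given to agent i
        if group_gap(perm) > eps:
            continue
        cost = sum(COST[i][perm[i]] for i in range(4))
        if best is None or cost < best[0]:
            best = (cost, perm)
    return best

for eps in [math.inf, 2.0, 1.0, 0.0]:
    print(eps, cheapest_within(eps))
```

On this instance, tightening the allowed gap from 2 to 1 raises cost from 40 to 43, while eliminating the gap costs 51: the first unit of fairness is cheap and the last is expensive.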

WFM Applications

Equitable Overtime Distribution

Overtime is simultaneously a burden (work-life disruption) and a benefit (additional pay). Fair distribution requires understanding which frame applies. Common approaches:

- Round-robin: Offer overtime sequentially through a rotating list. Simple, transparent, but ignores preferences — some agents want overtime, others don't.

- Preference-weighted rotation: Agents indicate overtime willingness; algorithm distributes proportionally to willingness while maintaining equitable minimum and maximum exposure.

- Constrained optimization: Minimize overtime cost subject to a constraint that bounds the gap between the maximum and minimum cumulative overtime across agents.
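One way to implement preference-weighted rotation is a greedy rule: give each overtime block to the willing agent whose cumulative overtime is lowest relative to their willingness weight, skipping anyone who would exceed a cap. This is a sketch under stated assumptions: the willingness weights, the per-period cap, and all names are hypothetical, and the rule enforces only a maximum exposure, not a minimum.

```python
def allocate_overtime(blocks, willingness, history, cap):
    """Greedy preference-weighted allocation of overtime blocks (hypothetical rule)."""
    hours = dict(history)  # cumulative overtime hours per agent
    assignments = []
    for block_hours in blocks:
        eligible = [a for a, w in willingness.items()
                    if w > 0 and hours.get(a, 0) + block_hours <= cap]
        if not eligible:
            assignments.append(None)  # nobody can take it without breaching the cap
            continue
        # Lowest hours per unit of willingness first; name breaks ties deterministically.
        chosen = min(eligible, key=lambda a: (hours.get(a, 0) / willingness[a], a))
        hours[chosen] = hours.get(chosen, 0) + block_hours
        assignments.append(chosen)
    return assignments, hours

willingness = {"ana": 2.0, "ben": 1.0, "cy": 0.0}  # cy has opted out
history = {"ana": 0, "ben": 0, "cy": 0}
assignments, hours = allocate_overtime([4] * 6, willingness, history, cap=16)
print(assignments)
print(hours)
```

Six 4-hour blocks split 16 to 8 between ana (weight 2) and ben (weight 1), matching their stated willingness, while cy (weight 0) receives none.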

Fair Schedule Quality Allocation

"Schedule quality" encompasses start time desirability, weekend freedom, consecutive day-off patterns, and shift length preferences. Algorithms that maximize coverage efficiency tend to use the agents whose broad skills give the optimizer the most flexibility to patch hard-to-cover slots, leaving the desirable schedules to others. The effect is to penalize generalists.

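Fairness intervention: define a composite schedule quality score $q_i$ for each agent and add a variance-bounding constraint:

$$\frac{1}{n} \sum_{i=1}^{n} \left(q_i - \bar{q}\right)^2 \le \sigma_{\max}^2, \qquad \bar{q} = \frac{1}{n} \sum_{i=1}^{n} q_i$$

where $n$ is the number of agents and $\sigma_{\max}^2$ is the maximum allowed variance. This limits how far schedule quality can concentrate among a privileged subset.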
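A sketch of the composite score and the variance check, assuming hypothetical component names and weights, with each component already scaled from 0 to 100. It uses the population variance (dividing by the number of agents). Inside an optimizer this would be a quadratic constraint on the assignment variables; here it only evaluates a finished schedule.

```python
import statistics

# Hypothetical component weights (summing to 1); each component is scored 0 to 100.
WEIGHTS = {"start_time": 0.3, "weekends_off": 0.3, "days_off_pattern": 0.2, "shift_length": 0.2}

def composite_quality(components):
    """Weighted composite schedule quality score for one agent."""
    return sum(WEIGHTS[name] * components[name] for name in WEIGHTS)

def check_variance_bound(agent_components, max_variance):
    """Population variance of composite scores across agents, compared with the bound."""
    scores = [composite_quality(c) for c in agent_components.values()]
    variance = statistics.pvariance(scores)
    return variance <= max_variance, variance

agents = {
    "agent_1": dict.fromkeys(WEIGHTS, 80),
    "agent_2": dict.fromkeys(WEIGHTS, 60),
}
ok, var = check_variance_bound(agents, max_variance=50.0)
print(ok, round(var, 2))
```

Two agents scoring about 80 and 60 give a variance of about 100, which fails a bound of 50.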

Bias Detection in AI-Driven Routing

Skill-based routing algorithms that learn from historical data can perpetuate or amplify biases. If certain agents historically received simpler calls (and therefore posted better handle times), the algorithm may keep routing easier calls to those agents, creating a performance gap that appears meritocratic but is actually self-reinforcing.

Auditing approach: measure the difficulty-adjusted performance of each agent group. If Group A handles calls with average complexity score 3.2 while Group B handles calls with average complexity 4.1, raw performance comparisons are misleading.
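A sketch of difficulty adjustment by indirect standardization: each call's expected handle time is the pooled average for its complexity level, and each group is scored by observed over expected handle time. Integer complexity levels, the field names, and the handle times are hypothetical simplifications of the continuous complexity scores in the text.

```python
from collections import defaultdict

def difficulty_adjusted_ratio(calls):
    """Observed / expected handle time per group; below 1.0 means faster than the pool."""
    pooled = defaultdict(list)
    for c in calls:
        pooled[c["complexity"]].append(c["aht"])
    expected_by_level = {k: sum(v) / len(v) for k, v in pooled.items()}

    observed, expected = defaultdict(float), defaultdict(float)
    for c in calls:
        observed[c["group"]] += c["aht"]
        expected[c["group"]] += expected_by_level[c["complexity"]]
    return {g: observed[g] / expected[g] for g in observed}

# Hypothetical calls (handle time in seconds): A gets more easy calls than B.
calls = [
    {"group": "A", "complexity": 3, "aht": 300},
    {"group": "A", "complexity": 3, "aht": 300},
    {"group": "A", "complexity": 4, "aht": 420},
    {"group": "B", "complexity": 3, "aht": 300},
    {"group": "B", "complexity": 4, "aht": 420},
    {"group": "B", "complexity": 4, "aht": 420},
]
print(difficulty_adjusted_ratio(calls))
```

Raw averages favor group A (340 versus 380 seconds) only because A took more complexity-3 calls; both groups score exactly 1.0 once complexity is accounted for.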

Seniority vs. Equality Trade-Offs

Many scheduling systems grant seniority-based preference priority. This creates a tension: seniority advantages are "fair" by one definition (earned through tenure) and "unfair" by another (systematically disadvantaging newer employees, who may disproportionately be younger, from minority groups, or recent immigrants).

The resolution is not to eliminate seniority but to bound its effect. A constrained formulation might grant seniority priority on primary shift preference but enforce equality on secondary quality metrics (weekend frequency, split-shift exposure).
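A hypothetical two-stage rule along these lines: seniority decides who gets their preferred primary shift, while weekend duty ignores tenure and goes to whoever has worked the fewest weekends so far. Names, fields, and the fallback rule are illustrative assumptions, and the sketch assumes total shift capacity covers every agent.

```python
def schedule_with_bounded_seniority(agents, capacity, weekend_slots):
    """Stage 1: seniority-ordered primary shifts. Stage 2: tenure-blind weekend rota."""
    seats = dict(capacity)
    primary = {}
    for a in sorted(agents, key=lambda a: (-a["tenure_years"], a["name"])):
        choice = a["preferred_shift"]
        if seats.get(choice, 0) == 0:
            # Preferred shift full: fall back to the first shift (alphabetically) with a seat.
            choice = next(s for s in sorted(seats) if seats[s] > 0)
        seats[choice] -= 1
        primary[a["name"]] = choice
    # Weekend duty: fewest past weekends first, regardless of tenure.
    rota = sorted(agents, key=lambda a: (a["past_weekends"], a["name"]))
    weekend = [a["name"] for a in rota[:weekend_slots]]
    return primary, weekend

agents = [
    {"name": "dee", "tenure_years": 12, "preferred_shift": "day", "past_weekends": 1},
    {"name": "eli", "tenure_years": 1, "preferred_shift": "day", "past_weekends": 6},
    {"name": "fay", "tenure_years": 5, "preferred_shift": "day", "past_weekends": 2},
]
primary, weekend = schedule_with_bounded_seniority(agents, {"day": 2, "evening": 1}, 1)
print(primary, weekend)
```

The junior agent, eli, loses the contest for a day-shift seat, but dee, the most senior, still takes this weekend because dee has worked the fewest past weekends.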

Worked Example

Problem: A 200-agent center schedules weekly. Analysis reveals that agents in the "flexible availability" pool (those who listed wide availability) receive schedules with 22% lower quality scores than agents with restricted availability. The flexible pool is 68% female; the restricted pool is 71% male. A gender-neutral scheduling rule produces gender-disparate outcomes.

Audit Methodology:

Step 1: Compute average schedule quality by gender, controlling for availability width.

| Group | Avg Quality (raw) | Avg Quality (availability-adjusted) |
|---|---|---|
| Male | 78.3 | 76.1 |
| Female | 61.2 | 68.4 |

The raw gap is 17.1 points; after controlling for availability width, the gap drops to 7.7 points. The remaining gap is not explained by availability and requires investigation.
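The page does not say how the availability-adjusted column was computed. One common option is direct standardization: average each group's quality within each availability stratum, then reweight those stratum means by the pooled stratum mix. The records below are hypothetical and do not reproduce the table's figures.

```python
from collections import defaultdict

def standardized_means(rows, group_key, stratum_key, value_key):
    """Each group's stratum means, reweighted to the pooled stratum distribution."""
    pooled_counts = defaultdict(int)
    cell_sum, cell_n = defaultdict(float), defaultdict(int)
    for r in rows:
        pooled_counts[r[stratum_key]] += 1
        cell = (r[group_key], r[stratum_key])
        cell_sum[cell] += r[value_key]
        cell_n[cell] += 1
    total = sum(pooled_counts.values())
    groups = sorted({g for g, _ in cell_n})
    # Assumes every group appears in every stratum; empty cells need pooling or a model.
    return {g: sum(pooled_counts[s] / total * cell_sum[(g, s)] / cell_n[(g, s)]
                   for s in pooled_counts)
            for g in groups}

# Hypothetical records: female rows concentrate in the wide-availability stratum.
rows = (
    [{"gender": "male", "availability": "narrow", "quality": 80}] * 3
    + [{"gender": "male", "availability": "wide", "quality": 70}]
    + [{"gender": "female", "availability": "narrow", "quality": 76}]
    + [{"gender": "female", "availability": "wide", "quality": 60}] * 3
)
print(standardized_means(rows, "gender", "availability", "quality"))
```

Here the raw gap of 13.5 points (77.5 versus 64.0) shrinks to 7.0 after reweighting, because most female records fall in the lower-quality wide-availability stratum.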

Step 2: Decompose the residual gap. Analysis reveals the optimizer systematically assigns flexible-pool agents to "filler" shifts (the irregular shifts needed to patch coverage gaps). Among agents with identical availability, those assigned more filler shifts happen to be clustered in teams with less senior managers who submit preferences later in the optimization sequence.

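Step 3: Intervene. Add a fairness constraint bounding the quality gap between gender groups to ≤3 points after availability adjustment:

$$\left| \bar{q}^{\,\text{adj}}_{\text{male}} - \bar{q}^{\,\text{adj}}_{\text{female}} \right| \le 3$$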

Step 4: Measure cost. The Pareto analysis shows that reducing the gap from 7.7 to 3.0 points raises schedule cost by 1.8% over the unconstrained optimum, while reducing it from 3.0 to 0.0 would cost an additional 4.2%. Per point of gap closed, that is roughly 0.38% for the first 4.7 points versus 1.4% for the last 3, so the 3-point threshold represents a practical trade-off.

Regulatory Context

EU AI Act

The EU AI Act (Regulation (EU) 2024/1689, in force since August 2024, with most high-risk obligations applying from August 2026) classifies AI systems used in "employment, workers management and access to self-employment" as high-risk. Scheduling algorithms that assign shifts, determine overtime eligibility, or evaluate performance can fall under this classification. Requirements include:

- Risk management: Documented identification of fairness risks

- Data governance: Training data examined for biases

- Transparency: Employees informed that algorithmic scheduling is in use

- Human oversight: Meaningful ability to override algorithmic decisions

- Bias testing: Regular audits for disparate impact

WFM vendors operating in or serving EU markets must comply. This is not optional or aspirational — it carries enforcement mechanisms.

U.S. Framework

No comprehensive federal AI regulation exists as of 2026, but existing frameworks apply:

- Title VII: Disparate impact doctrine applies to algorithmic scheduling regardless of intent

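- State and local laws: Illinois BIPA (biometric data, such as biometric time clocks), New York City Local Law 144 (bias audits for automated employment decision tools used in hiring and promotion), and proposed state AI regulations impose further constraints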

- EEOC guidance: Employers remain liable for discriminatory outcomes even when using third-party scheduling software

Maturity Model Position

| Level | Description |
|---|---|
| Level 1 (Manual) | No fairness measurement; schedule quality distribution unknown |
| Level 2 (Developing) | Basic metrics tracked (overtime distribution by group); manual review for obvious disparities |
| Level 3 (Defined) | Formal fairness criteria selected; regular audits conducted; documented fairness policy |
| Level 4 (Quantitative) | Fairness constraints embedded in scheduling optimizer; Pareto frontier between fairness and cost explored |
| Level 5 (Optimizing) | Multiple fairness criteria balanced dynamically; intersectional analysis; continuous monitoring with automated alerts; regulatory compliance automated |

See Also

- Operations Research in Workforce Management

- Multi-Objective Optimization

- Schedule Optimization

- Employee Scheduling

- Schedule Quality Metrics

- AI and Employment

- Self-Scheduling and Flexible Workforce Models

References

- Chouldechova, A. (2017). "Fair prediction with disparate impact: A study of bias in recidivism prediction instruments." Big Data, 5(2), 153–163.

- Dwork, C., Hardt, M., Pitassi, T., Reingold, O., & Zemel, R. (2012). "Fairness through awareness." Proceedings of ITCS 2012.

- Kleinberg, J., Mullainathan, S., & Raghavan, M. (2016). "Inherent trade-offs in the fair determination of risk scores." Preprint 2016; published in Proceedings of ITCS 2017.

- Bertsimas, D., Farias, V.F., & Trichakis, N. (2011). "The price of fairness." Operations Research, 59(1), 17–31.

- Rawls, J. (1971). A Theory of Justice. Harvard University Press.

- European Parliament (2024). Regulation (EU) 2024/1689 (AI Act).