A/B Testing for WFM Experiments

A/B Testing for WFM Experiments brings experimental methodology to workforce management decisions that are traditionally made by intuition, vendor recommendation, or copying what another center did. When a WFM team asks "does flexible break scheduling improve adherence?" or "does skills-based routing reduce AHT?" — the only rigorous answer comes from a controlled experiment. This page covers experimental design, sample size calculation, pitfalls specific to contact center environments, and both frequentist and Bayesian approaches to analyzing WFM experiments.

Overview

WFM is rich with questions that look answerable from observational data but are not. A center switches from fixed to flexible break scheduling and adherence improves by 2 percentage points. Did the flexibility cause the improvement? Or did it coincide with a seasonal volume drop, a new supervisor, a system upgrade, or the departure of chronically non-adherent agents? Observational analysis cannot separate these effects. Experiments can.

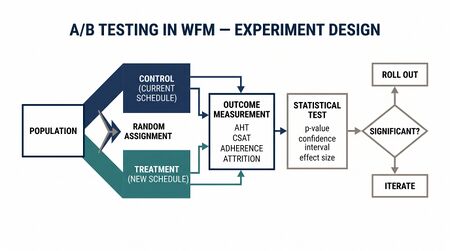

The core logic of A/B testing is straightforward: randomly assign units (agents, teams, intervals, days) to treatment and control groups, apply the intervention to the treatment group only, measure outcomes, and test whether the difference exceeds what chance alone would produce. Randomization is the mechanism that eliminates confounders — not by measuring them, but by ensuring they are equally distributed across groups.

Contact centers present unique experimental challenges. Agents share queues, so treated agents can affect control agents through queue dynamics (spillover). Performance varies by day-of-week, creating cyclical noise. Agents know they are being observed (Hawthorne effect). Sample sizes are often modest — a 500-agent center provides less statistical power than a consumer web experiment with millions of users. These challenges are solvable but require deliberate experimental design.

Mathematical Foundation

Hypothesis Testing Framework

A WFM experiment tests a null hypothesis against an alternative:

- $H_0$: The treatment has no effect.

- $H_1$: The treatment has an effect.

The two error types:

- Type I error ($\alpha$): Concluding the treatment works when it does not. Conventionally set at 0.05.

- Type II error ($\beta$): Missing a real effect. Power = $1 - \beta$, conventionally 0.80.

Sample Size Calculation

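For a two-sample test of means (e.g., AHT or adherence), the required sample size per group is:

$$n = \frac{2\,(z_{\alpha/2} + z_{\beta})^2\,\sigma^2}{\delta^2}$$

where $\sigma$ is the standard deviation of the outcome metric, $\delta$ is the minimum detectable effect (MDE), $z_{\alpha/2} = 1.96$ for $\alpha = 0.05$, and $z_{\beta} = 0.84$ for 80% power.

WFM example: Testing whether a routing change reduces AHT. Current AHT = 420 seconds, $\sigma = 90$ seconds, MDE = 15 seconds (3.6% reduction).

$$n = \frac{2\,(1.96 + 0.84)^2 \times 90^2}{15^2} \approx 564$$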

This is 564 observations per group, not 564 agents. If each agent handles 40 calls per day and the experiment runs 10 days, 2 agents per group would provide sufficient observations — but this ignores within-agent correlation. When the unit of randomization is the agent (as it usually should be), the effective sample size depends on the intraclass correlation coefficient (ICC).

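With agent-level randomization:

$$n_{\text{agents}} = \frac{n\,[1 + (m - 1)\rho]}{m}$$

where $n$ is the required number of observations per group from the unclustered calculation, $m$ is observations per agent, and $\rho$ is the ICC. The bracketed term is the design effect. For AHT with typical ICC of 0.15-0.25 across agents, the required agent count is substantially higher than the naive observation-based calculation suggests. For example, with $m = 400$ (40 calls per day for 10 days) and $\rho = 0.20$, the design effect is $1 + 399 \times 0.20 = 80.8$, so 564 observations translate to roughly $564 \times 80.8 / 400 \approx 114$ agents per group rather than 2.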
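The two calculations above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a replacement for a formal power analysis: the function names are hypothetical, and $\sigma = 90$ seconds is an assumed value chosen because it reproduces the 564 figure in the AHT example.

```python
import math
from statistics import NormalDist


def observations_per_group(sigma, mde, alpha=0.05, power=0.80):
    """Unclustered two-sample size: n = 2 (z_{alpha/2} + z_beta)^2 sigma^2 / mde^2."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    return 2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / mde ** 2


def agents_per_group(n_obs, obs_per_agent, icc):
    """Inflate n_obs by the design effect, then convert observations to agents."""
    design_effect = 1 + (obs_per_agent - 1) * icc
    return n_obs * design_effect / obs_per_agent


# Assumed inputs: sigma = 90 s, MDE = 15 s, 40 calls/day for 10 days, ICC = 0.20.
n_obs = observations_per_group(sigma=90, mde=15)
n_agents = agents_per_group(n_obs, obs_per_agent=40 * 10, icc=0.20)

print(f"Observations per group: {math.ceil(n_obs)}")
print(f"Agents per group:       {math.ceil(n_agents)}")
```

Because the code uses unrounded normal quantiles, it returns slightly more than the 564 observations obtained with the rounded values 1.96 and 0.84. The agent count is what matters for planning: once the ICC is accounted for, adding more calls per agent barely helps, while adding agents does.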

Practical Rule of Thumb

For WFM experiments with agent-level randomization:

- Small effect (2-3% change): 200+ agents per group, 3-4 weeks

- Medium effect (5-8% change): 80-120 agents per group, 2-3 weeks

- Large effect (10%+ change): 30-50 agents per group, 2 weeks

These assume typical WFM metric variability. Always run the formal power calculation with your data.

Method

Step 1: Define the Experiment

Every WFM experiment requires:

- Hypothesis: "Flexible break scheduling (±15 min window) will improve schedule adherence by ≥2 percentage points compared to fixed break times."

- Randomization unit: Agent, team, or interval. Agent is preferred for most experiments. Team-level randomization reduces spillover but requires more teams for adequate power.

- Treatment: The specific intervention. Be precise — "flexible breaks" is vague. "Agents may move their 15-minute break ±15 minutes from scheduled time without adherence penalty" is testable.

- Outcome metric: Primary metric (schedule adherence) and guardrail metrics (AHT, quality score, utilization) to ensure the treatment does not improve the primary metric at the expense of others.

- Duration: Long enough to capture day-of-week effects (minimum 2 weeks), seasonal patterns, and novelty effects (agents may behave differently simply because something changed). Four weeks is a reasonable default for most WFM experiments.

Step 2: Randomize

True randomization is essential. Never use alternating assignment (Agent 1 → treatment, Agent 2 → control), alphabetical assignment, or supervisor choice. Use a random number generator to assign each agent to treatment or control.

Stratified randomization: If the center has meaningful subgroups (sites, tenure bands, skill groups), stratify the randomization to ensure balance within each subgroup. This is especially important in smaller experiments.

Pre-treatment balance check: After randomization, verify that treatment and control groups have similar baseline characteristics — same average tenure, adherence, AHT, quality score. Large imbalances suggest a randomization error. Small imbalances are expected and handled by the statistical test.
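One common way to run the balance check is the standardized mean difference (SMD) per baseline covariate. The sketch below is illustrative only: the roster is hypothetical toy data, the field names are assumptions, and the 0.1 flagging threshold is a widely used rule of thumb rather than something this page prescribes.

```python
from statistics import mean, variance


def standardized_mean_difference(treated, control):
    """(mean_T - mean_C) divided by the pooled standard deviation."""
    pooled_sd = ((variance(treated) + variance(control)) / 2) ** 0.5
    return (mean(treated) - mean(control)) / pooled_sd


def balance_table(agents, covariates, threshold=0.1):
    """Return {covariate: (smd, flagged)} for agents tagged with a 'treatment' key."""
    treated = [a for a in agents if a["treatment"] == 1]
    control = [a for a in agents if a["treatment"] == 0]
    table = {}
    for cov in covariates:
        smd = standardized_mean_difference(
            [a[cov] for a in treated], [a[cov] for a in control]
        )
        table[cov] = (smd, abs(smd) > threshold)
    return table


# Hypothetical baseline data: six agents, already randomized.
agents = [
    {"treatment": 1, "tenure_months": 10, "baseline_adherence": 88.0},
    {"treatment": 1, "tenure_months": 24, "baseline_adherence": 86.5},
    {"treatment": 1, "tenure_months": 5, "baseline_adherence": 90.0},
    {"treatment": 0, "tenure_months": 12, "baseline_adherence": 87.0},
    {"treatment": 0, "tenure_months": 20, "baseline_adherence": 88.5},
    {"treatment": 0, "tenure_months": 7, "baseline_adherence": 88.0},
]

table = balance_table(agents, ["tenure_months", "baseline_adherence"])
for cov, (smd, flagged) in table.items():
    print(f"{cov}: SMD = {smd:+.2f}{'  <- check' if flagged else ''}")
```

With only six agents a flagged covariate is unremarkable; the toy data is there to show the mechanics. On a real roster, a flag is a prompt to inspect the randomization code, not proof of an error.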

Step 3: Run the Experiment

- Blinding: Full blinding is usually impossible in WFM (agents know their break rules changed). Blind the analysts — whoever runs the statistical analysis should not know which group is treatment until after analysis.

- Contamination monitoring: Watch for control agents adopting treatment behavior (e.g., moving breaks despite not being told they can) or treatment agents reverting.

- Pre-registered analysis plan: Document the primary analysis, secondary analyses, and subgroup analyses before seeing results. This prevents p-hacking — running many tests and reporting only the significant ones.

Step 4: Analyze Results

Primary analysis: Two-sample t-test or regression model comparing treatment and control on the primary metric, with standard errors clustered at the randomization unit level.

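Regression adjustment: Including pre-treatment baseline metrics as covariates reduces variance and increases power without biasing the treatment effect estimate:

$$Y_i = \beta_0 + \beta_1 T_i + \beta_2 X_i + \varepsilon_i$$

where $Y_i$ is the outcome for agent $i$, $T_i$ is the treatment indicator (1 = treatment, 0 = control), $X_i$ is the agent's pre-treatment baseline value of the metric, and $\beta_1$ is the adjusted treatment effect.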
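To illustrate why baseline adjustment increases power, here is a small sketch of the single-covariate form of the adjustment (the pre-experiment-data approach of Deng et al., 2013, listed in the References). Everything in it is an assumption made for demonstration: the data are synthetic, the true effect is set to 2.0 points, and `cuped_adjust` and `diff_and_se` are hypothetical helper names.

```python
import random
from statistics import mean, variance


def cuped_adjust(outcome, baseline):
    """Return outcome adjusted by its pre-period baseline: y - theta * (x - mean(x))."""
    x_bar, y_bar = mean(baseline), mean(outcome)
    cov_xy = sum(
        (x - x_bar) * (y - y_bar) for x, y in zip(baseline, outcome)
    ) / (len(outcome) - 1)
    theta = cov_xy / variance(baseline)  # pooled slope of outcome on baseline
    return [y - theta * (x - x_bar) for x, y in zip(baseline, outcome)]


def diff_and_se(values, treatment):
    """Difference in group means and its standard error."""
    treated = [v for v, t in zip(values, treatment) if t == 1]
    control = [v for v, t in zip(values, treatment) if t == 0]
    se = (variance(treated) / len(treated) + variance(control) / len(control)) ** 0.5
    return mean(treated) - mean(control), se


# Synthetic data: 200 agents per arm, outcome correlated with baseline, true effect = 2.0.
rng = random.Random(7)
n = 200
treatment = [1] * n + [0] * n
baseline = [rng.gauss(87, 6) for _ in range(2 * n)]
outcome = [
    0.8 * x + 17.4 + 2.0 * t + rng.gauss(0, 3) for x, t in zip(baseline, treatment)
]

raw_diff, raw_se = diff_and_se(outcome, treatment)
adj_diff, adj_se = diff_and_se(cuped_adjust(outcome, baseline), treatment)
print(f"Unadjusted: {raw_diff:.2f} (SE {raw_se:.2f})")
print(f"Adjusted:   {adj_diff:.2f} (SE {adj_se:.2f})")
```

The adjustment coefficient is estimated on the pooled data, so randomization keeps the adjusted difference unbiased while the standard error shrinks in proportion to how well the baseline predicts the outcome.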

Multiple comparisons: If testing multiple outcomes, adjust p-values: Bonferroni controls the family-wise error rate, while Benjamini-Hochberg controls the false discovery rate and is less conservative.
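Both adjustments are short enough to write out directly. The following is a dependency-free sketch with hypothetical function names; the four p-values are the ones reported for adherence, AHT, quality score, and utilization in the worked example further down. In practice, `statsmodels.stats.multitest.multipletests` offers the same adjustments.

```python
def bonferroni(p_values):
    """Multiply each p-value by the number of tests, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# p-values in the order: adherence, AHT, quality score, utilization.
p_values = [0.001, 0.34, 0.59, 0.24]

for name, adj in (
    ("Bonferroni", bonferroni(p_values)),
    ("Benjamini-Hochberg", benjamini_hochberg(p_values)),
):
    print(name, [round(p, 3) for p in adj])
```

Under either adjustment the adherence result stays well below 0.05, and none of the other three outcomes comes close to significance.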

Step 5: Sequential Testing

Fixed-horizon tests (wait for the full sample, then analyze once) waste time when the effect is large and waste resources when the effect is clearly absent. Sequential testing frameworks allow continuous monitoring:

- Group sequential designs: Analyze at pre-specified interim points (e.g., weekly) with adjusted significance boundaries (O'Brien-Fleming, Pocock). Stop early for overwhelming evidence of efficacy or futility.

- Always-valid p-values: Methods that allow checking results at any time while controlling Type I error.

- Bayesian monitoring: Compute posterior probability of the treatment effect exceeding a threshold. Stop when the posterior probability exceeds a decision threshold (e.g., 95%).

Sequential testing is particularly valuable in WFM because experiments consume operational attention and management patience is finite.
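The reason interim looks need adjusted boundaries can be shown with a short simulation of A/A experiments (no true effect) checked weekly for four weeks. This is a sketch under simplifying assumptions: each week contributes an equal amount of information, the test statistic is normal, and 2.361 is the standard tabulated Pocock critical value for four equally spaced looks at two-sided $\alpha = 0.05$. The function name is hypothetical.

```python
import random

POCOCK_Z_4_LOOKS = 2.361  # Pocock boundary: 4 equally spaced looks, two-sided alpha = 0.05


def false_positive_rate(z_threshold, looks=4, simulations=4000, seed=1):
    """Share of A/A experiments that cross |Z| > z_threshold at any interim look."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(simulations):
        cumulative = 0.0
        for k in range(1, looks + 1):
            cumulative += rng.gauss(0, 1)  # one week's standardized increment
            z_k = cumulative / k ** 0.5    # Z-statistic using all data through look k
            if abs(z_k) > z_threshold:
                rejections += 1
                break
    return rejections / simulations


naive = false_positive_rate(1.96)
pocock = false_positive_rate(POCOCK_Z_4_LOOKS)
print(f"Naive 1.96 threshold at every look: {naive:.3f}")
print(f"Pocock boundary at every look:      {pocock:.3f}")
```

Reusing the unadjusted 1.96 threshold at every look pushes the false positive rate well above the nominal 5%, while the wider Pocock boundary keeps it close to 5%. O'Brien-Fleming boundaries follow the same logic but spend very little alpha at early looks.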

WFM Applications

Routing Rule Comparison

Test whether skills-based routing outperforms round-robin. Randomization unit: incoming contacts (randomly route to skills-based or round-robin). Outcome: AHT, FCR, quality score, customer satisfaction. Duration: 2 weeks, minimum 5,000 contacts per group. Pitfall: ensure the same agent can receive contacts under both routing rules without confusion — or randomize at the agent level and route all contacts for treatment agents via one rule.

Schedule Pattern Test

Compare 4×10 vs. 5×8 shift patterns. Randomization unit: agent (volunteers randomly assigned to one pattern). Outcome: schedule adherence, unplanned absence rate, agent satisfaction, productivity. Duration: 4-8 weeks (shift pattern effects take time to manifest). Guardrail: ensure coverage equity — do not systematically under-staff certain intervals due to the experiment.

Coaching Method Evaluation

Test automated real-time coaching nudges vs. weekly supervisor-led coaching. Randomization unit: agent. Outcome: quality score improvement over 4 weeks. Pitfall: supervisor awareness. If supervisors know which agents receive automated coaching, they may unconsciously change their own coaching intensity for those agents (compensatory equalization), and agents in the supervisor-led arm may change their effort in response to being left out (compensatory rivalry or resentful demoralization).

IVR Change Impact

Test a new IVR menu design. Randomization unit: incoming call (A/B split at the IVR). Outcome: self-service completion rate, transfer rate, customer effort score. Duration: 2 weeks, 10,000+ calls per group. This is the closest WFM gets to a standard web A/B test.
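For contact-level splits like this one, a common technique is to derive the assignment from a hash of the contact identifier, so the same contact always lands in the same arm without storing any state. The sketch below is an assumption about how such a split could be implemented, not a description of any particular IVR platform; `assign_variant` and the experiment name `ivr_menu_v2` are hypothetical.

```python
import hashlib


def assign_variant(contact_id, experiment="ivr_menu_v2", treatment_share=0.5):
    """Deterministically map a contact ID to 'treatment' or 'control'."""
    digest = hashlib.sha256(f"{experiment}:{contact_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform in [0, 1)
    return "treatment" if bucket < treatment_share else "control"


# Check the split on 10,000 made-up call IDs.
call_ids = [f"call-{i}" for i in range(10_000)]
share = sum(assign_variant(c) == "treatment" for c in call_ids) / len(call_ids)
print(f"Share routed to the new menu: {share:.3f}")
```

Including the experiment name in the hashed string keeps assignments independent across experiments, so a caller who lands in treatment for one test is not systematically in treatment for the next.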

Break Flexibility

Test flexible vs. fixed break scheduling. See worked example below.

Pitfalls

Spillover Effects

The biggest threat to WFM experiments. If treatment agents use their flexibility to shift breaks away from scheduled times, their unplanned absences increase queue pressure on control agents, artificially depressing control group adherence. The observed treatment effect is then biased upward: the treatment looks better than it actually is.

Mitigations:

- Randomize at the team or site level to create physical separation

- Use a "buffer" — exclude agents on the same team as treatment agents from the control group

- Model spillover explicitly using interference-aware estimators

- If the experiment is large enough that treatment agents constitute >20% of the queue's staffing, the spillover is significant and team-level randomization is required
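Team-level (cluster) randomization, the first mitigation above, can be sketched as follows. The roster and the function name are hypothetical; the point is that the random draw happens once per team and every agent inherits the team's arm.

```python
import random


def randomize_teams(agent_to_team, seed=2024):
    """Assign whole teams to treatment (1) or control (0); agents inherit the team's arm."""
    teams = sorted(set(agent_to_team.values()))
    rng = random.Random(seed)
    rng.shuffle(teams)
    treated_teams = set(teams[: len(teams) // 2])
    return {agent: int(team in treated_teams) for agent, team in agent_to_team.items()}


# Hypothetical roster: 8 teams of 5 agents each.
roster = {f"agent_{t}_{i}": f"team_{t}" for t in range(8) for i in range(5)}
assignment = randomize_teams(roster)

treated = sorted({team for agent, team in roster.items() if assignment[agent] == 1})
print(f"Treated teams: {treated}")
```

With team-level assignment, the team is the unit of analysis: power depends mainly on the number of teams, and standard errors should be clustered by team rather than by agent.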

Novelty and Hawthorne Effects

Any change — even a negative one — can temporarily improve performance because agents know they are being observed or because novelty increases engagement. Run experiments long enough for the novelty to wear off (typically 2-3 weeks). If feasible, compare performance in week 1 vs. weeks 3-4 to estimate the novelty decay.
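The week 1 versus weeks 3-4 comparison amounts to computing the treatment-minus-control difference separately per week. A minimal sketch, assuming agent-day records arrive as `(week, treatment_flag, outcome)` tuples; the records below are made-up numbers chosen only to show the calculation.

```python
from collections import defaultdict
from statistics import mean


def weekly_effects(records):
    """Treatment-minus-control mean outcome per week.

    records: iterable of (week, treatment_flag, outcome) tuples.
    """
    by_cell = defaultdict(list)
    for week, treated, outcome in records:
        by_cell[(week, treated)].append(outcome)
    weeks = sorted({week for week, _ in by_cell})
    return {w: mean(by_cell[(w, 1)]) - mean(by_cell[(w, 0)]) for w in weeks}


# Hypothetical adherence observations (percent), two per arm per week.
records = [
    (1, 1, 90.5), (1, 1, 91.0), (1, 0, 87.5), (1, 0, 88.0),
    (2, 1, 90.0), (2, 1, 90.5), (2, 0, 87.5), (2, 0, 88.0),
    (3, 1, 89.5), (3, 1, 90.0), (3, 0, 87.5), (3, 0, 88.0),
    (4, 1, 89.5), (4, 1, 90.0), (4, 0, 87.5), (4, 0, 88.0),
]

effects = weekly_effects(records)
novelty_decay = effects[1] - mean([effects[3], effects[4]])
print(f"Weekly effects: {effects}")
print(f"Estimated novelty decay: {novelty_decay:.2f} pp")
```

The weeks 3-4 average is the better guide to the long-run effect; a week 1 estimate that is much larger suggests part of the early gain was novelty.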

Day-of-Week and Seasonality

Monday adherence differs from Friday adherence. If the experiment launches on different days for treatment and control, day-of-week effects confound the results. Always launch simultaneously and run for complete weeks. Account for holidays, pay periods, and seasonal volume changes in the analysis.

Multiple Testing

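A WFM team running 6 experiments simultaneously at $\alpha = 0.05$ has a $1 - (1 - 0.05)^6 \approx 26\%$ probability of at least one false positive when every null hypothesis is true and the tests are independent. Adjust significance levels when running multiple simultaneous experiments or testing multiple outcomes within one experiment.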
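The arithmetic behind that probability, and two standard ways to set a per-test significance level that restores a 5% family-wise rate, can be checked directly. The function names are illustrative, and the calculation assumes independent tests with every null hypothesis true.

```python
def familywise_error_rate(alpha, n_tests):
    """P(at least one false positive) across independent tests with all nulls true."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_alpha(alpha, n_tests):
    """Per-test level under the Bonferroni correction."""
    return alpha / n_tests


def sidak_alpha(alpha, n_tests):
    """Per-test level under the Sidak correction (exact for independent tests)."""
    return 1 - (1 - alpha) ** (1 / n_tests)


n_experiments = 6
fwer = familywise_error_rate(0.05, n_experiments)
print(f"Family-wise error rate at alpha = 0.05: {fwer:.3f}")
print(f"Bonferroni per-test alpha: {bonferroni_alpha(0.05, n_experiments):.4f}")
print(f"Sidak per-test alpha:      {sidak_alpha(0.05, n_experiments):.4f}")
```

Bonferroni is slightly more conservative than Sidak but does not rely on independence, which makes it the safer default when experiments share agents or queues.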

Worked Example

A 400-agent inbound support center tests whether flexible break scheduling improves adherence.

Design:

- Treatment: 200 agents may shift their two 15-minute breaks ±15 minutes from scheduled time without adherence penalty.

- Control: 200 agents keep fixed break times (standard policy).

- Stratification: Randomize within tenure bands (0-6 months, 6-12, 12+) and shift types (morning, afternoon, split).

- Duration: 4 weeks (20 business days).

- Primary outcome: Schedule adherence percentage.

- Guardrail metrics: AHT, quality score, utilization.

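Power calculation: Current mean adherence: 87.3%, SD: 6.2 percentage points. MDE: 2 percentage points. ICC across agents: 0.20. With 200 agents per group and 20 days of data:

$$n_{\text{eff}} = \frac{200 \times 20}{1 + (20 - 1) \times 0.20} = \frac{4000}{4.8} \approx 833$$

The effective sample size per group accounts for clustering: the 4,000 agent-days in each group carry about as much information as 833 independent observations. With 200 agents per group, the design achieves at least 85% power to detect a 2-point adherence difference.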

Results (week 4):

| Metric | Treatment | Control | Difference | 95% CI | p-value |
|---|---|---|---|---|---|
| Schedule adherence | 89.8% | 87.5% | +2.3 pp | [1.1, 3.5] | 0.001 |
| AHT (seconds) | 418 | 421 | −3 | [−9, 3] | 0.34 |
| Quality score | 4.12 | 4.09 | +0.03 | [−0.08, 0.14] | 0.59 |
| Utilization | 82.1% | 82.8% | −0.7 pp | [−1.9, 0.5] | 0.24 |

Analysis:

- Primary outcome significant: 2.3 percentage point adherence improvement (p = 0.001).

- Guardrail metrics: no significant degradation in AHT, quality, or utilization.

- Novelty check: Week 1 treatment effect was 3.1 pp, weeks 3-4 averaged 2.1 pp. Some novelty decay but effect persists.

- Subgroup: Effect strongest for agents with 6-12 months tenure (+3.1 pp) and weakest for agents with <6 months tenure (+1.2 pp, not significant — new agents may not yet have stable break patterns).

Decision: Roll out flexible breaks to all agents. Expected annual adherence improvement: ~2 percentage points. At scale, this reduces shrinkage by approximately 0.8 FTE equivalents and improves agent satisfaction (secondary benefit for retention).

Implementation

```python
import math

import numpy as np
import pandas as pd
import pymc as pm
import statsmodels.formula.api as smf
from statsmodels.stats.power import TTestIndPower

# Expected inputs, supplied by the caller (not defined in this snippet):
#   agents_df    - one row per agent, with 'tenure_band' and 'shift_type' columns
#   results_df   - one row per agent-day, with 'agent_id', 'adherence', 'treatment',
#                  'baseline_adherence', 'tenure_band', and 'shift_type' columns
#   control_data, treatment_data - 1-D arrays of adherence observations per group

# Power calculation
power_analysis = TTestIndPower()
effect_size = 2.0 / 6.2  # MDE / SD (Cohen's d)
sample_size = power_analysis.solve_power(
    effect_size=effect_size,
    alpha=0.05,
    power=0.80,
    alternative='two-sided',
)
print(f"Required sample size per group (naive): {sample_size:.0f}")

# Adjust for clustering (ICC)
icc = 0.20
obs_per_agent = 20  # 20 business days
design_effect = 1 + (obs_per_agent - 1) * icc
agent_sample = math.ceil(sample_size * design_effect / obs_per_agent)
print(f"Design effect: {design_effect:.1f}")
print(f"Required agents per group: {agent_sample}")


# Randomization with stratification
def stratified_randomize(df: pd.DataFrame, strata_cols, treatment_col='treatment', seed=None):
    """Stratified random assignment to treatment (1) and control (0).

    Assumes df has a unique index. Pass a seed to make the assignment reproducible.
    """
    rng = np.random.default_rng(seed)
    df = df.copy()
    df[treatment_col] = 0
    for _, group in df.groupby(strata_cols):
        n = len(group)
        # Odd-sized strata give the extra agent to control
        assignments = np.array([1] * (n // 2) + [0] * (n - n // 2))
        df.loc[group.index, treatment_col] = rng.permutation(assignments)
    return df


agents = stratified_randomize(agents_df, strata_cols=['tenure_band', 'shift_type'])

# Analysis: regression-adjusted treatment effect, standard errors clustered by agent.
# results_df should have no missing values in the model columns, so that the
# cluster groups line up with the rows used in the fit.
model = smf.ols(
    'adherence ~ treatment + baseline_adherence + C(tenure_band) + C(shift_type)',
    data=results_df,
).fit(cov_type='cluster', cov_kwds={'groups': results_df['agent_id']})
print(model.summary())

# Bayesian A/B test (optional)
# Thompson sampling / Beta-Binomial for binary metrics
# For continuous metrics, use PyMC
with pm.Model() as ab_model:
    mu_control = pm.Normal('mu_control', mu=87, sigma=5)
    mu_treatment = pm.Normal('mu_treatment', mu=87, sigma=5)
    sigma = pm.HalfNormal('sigma', sigma=5)

    pm.Normal('obs_control', mu=mu_control, sigma=sigma, observed=control_data)
    pm.Normal('obs_treatment', mu=mu_treatment, sigma=sigma, observed=treatment_data)

    diff = pm.Deterministic('diff', mu_treatment - mu_control)
    trace = pm.sample(2000, return_inferencedata=True)

# Share of posterior draws in which treatment beats control
prob_positive = float((trace.posterior['diff'] > 0).mean())
print(f"Posterior probability treatment > control: {prob_positive:.3f}")
```

Key libraries:

- scipy.stats — t-tests, Mann-Whitney, chi-squared tests

- statsmodels — regression analysis with clustered standard errors, power calculations

- PyMC — Bayesian A/B testing with full posterior inference

- Eppo / GrowthBook — experimentation platforms with sequential testing (GrowthBook is open-source; Eppo is a commercial product)

Maturity Model Position

| Level | Capability | Experimentation Application |
|---|---|---|
| Level 1 — Reactive | No experimentation | "We tried it and it seemed to work" |
| Level 2 — Managed | Before/after comparisons | Compare metrics before and after a change, no control group |
| Level 3 — Proactive | Basic A/B tests | Randomized experiments with fixed-horizon analysis |
| Level 4 — Advanced | Sequential and Bayesian testing | Continuous monitoring, early stopping, posterior probabilities |
| Level 5 — Optimized | Experimentation platform | All operational changes flow through an experiment pipeline with automated analysis |

See Also

- Statistical Process Control — monitoring metrics for detecting changes, complements experimentation

- Bayesian Methods for Workforce Forecasting — Bayesian framework applied to A/B test analysis

- Causal Inference for Workforce Decisions — observational causal methods for when experiments are not feasible

- Forecasting — forecast accuracy as an outcome metric in forecasting method experiments

- Schedule Optimization — schedule designs as experimental treatments

References

- Kohavi, R., Tang, D., & Xu, Y. (2020). Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing. Cambridge University Press. The definitive reference for applied A/B testing.

- Deng, A., Xu, Y., Kohavi, R., & Walker, T. (2013). "Improving the sensitivity of online controlled experiments by utilizing pre-experiment data." Proceedings of WSDM 2013. Regression adjustment for experiments.

- Johari, R., Pekelis, L., & Walsh, D. (2017). "Always valid inference: Continuous monitoring of A/B tests." Operations Research, 65(5), 1260-1269. Sequential testing theory.

- Rubin, D.B. (1974). "Estimating causal effects of treatments in randomized and nonrandomized studies." Journal of Educational Psychology, 66(5), 688-701. The potential outcomes framework.