Workforce Demand Signal Architecture

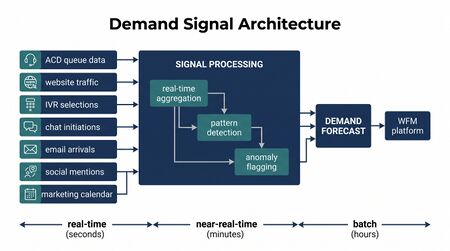

Workforce demand signal architecture refers to the design of data pipelines, event streams, and integration patterns that carry upstream business signals — including marketing campaign activations, product release events, CRM behavioral triggers, and external economic indicators — from their originating systems into workforce management demand forecasting models. Traditional Forecasting Methods rely primarily on historical contact volume patterns to project future demand; demand signal architecture extends this by incorporating leading indicators that precede and cause volume changes, enabling earlier and more accurate demand anticipation. Kleppmann's Designing Data-Intensive Applications establishes the foundational principles of event-driven data architecture — stream processing, log-based message passing, and fault-tolerant data pipeline design — that underpin modern demand signal implementations.[1] Confluent's 2023 practitioner analysis of real-time event streaming for contact centers describes deployment patterns for Apache Kafka–based demand signal pipelines in production contact center environments.[2]

Conceptual Foundation

Demand Signals vs. Historical Volume

Classical contact center forecasting operates on a pull model: historical volume data is extracted from ACD systems, smoothed, and projected forward using time-series methods. This approach is inherently reactive — it detects patterns after they have occurred and extrapolates them into the future. It cannot anticipate demand changes caused by events that have not yet materialized in historical data.

Demand signal architecture introduces a push model for certain categories of demand drivers. Known, scheduled, or detectable events that cause volume changes are encoded as signals and pushed to the forecasting system when they occur or become known, allowing the forecast to update before the volume impact arrives. Combining historical pattern modeling with event signal integration produces a forecast with lower error than historical modeling alone when demand variation is structured and predictable.

The Signal Taxonomy

Demand signals fall into several categories by source and lead time:

| Signal Category | Examples | Typical Lead Time | Volume Impact Lag |
|---|---|---|---|
| Marketing campaigns | Email deployment, paid media activation, promotional launches | 1–4 weeks | Hours to days |
| Product events | Software releases, billing system changes, service outages | Days to hours | Minutes to hours |
| CRM behavioral triggers | Large cohort reaching renewal date, payment failure batch, loyalty tier change | Days | Days to hours |
| Seasonal and calendar | Holidays, fiscal periods, end-of-month | Weeks to months | Predictable pattern |
| External economic | Rate changes, regulatory announcements, competitive actions | Variable | Days to weeks |
| Operational events | Agent training days, system maintenance windows | Days | Direct capacity effect |

Not all demand signals are equally tractable. Marketing campaign signals are typically well-structured (campaign metadata is available in campaign management platforms) and causally linked to volume (email deployment → increased inbound inquiries). External economic signals are harder to operationalize because the causal relationship between macro indicators and contact center volume is indirect and variable.

Event-Driven Architecture for Demand Signals

Log-Based Message Passing

Kleppmann describes log-based message brokers — in which producers append events to an ordered, durable log and consumers read from the log at their own pace — as the foundational pattern for reliable event-driven data pipelines.[3] Apache Kafka is the dominant implementation of this pattern. In demand signal architecture, each upstream system (CRM, marketing platform, product system) publishes events to dedicated Kafka topics; the WFM forecasting system consumes these topics and translates events into forecast adjustments.

Key properties of log-based architectures relevant to demand signal pipelines:

- Durability — events are persisted in the log for a configurable retention period; consumers can replay events to recover from failures or reprocess historical signals

- Decoupling — producers and consumers are independent; a forecasting system failure does not affect the marketing platform's ability to publish campaign events

- Multiple consumers — the same demand signal can be consumed by the WFM forecasting system, the Real-Time Operations intraday management system, and the Reporting and Analytics Framework independently

- Consumer lag monitoring — the offset gap between the latest published event and the consumer's current position provides an operational health signal for the pipeline
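The consumer-side properties above can be illustrated with a minimal in-memory sketch. It is not Kafka and uses no Kafka client API; `TopicLog` and its methods are hypothetical names for a single append-only log that keeps one read offset per consumer, which is enough to show independent consumption, replay, and lag.

```python
from dataclasses import dataclass, field


@dataclass
class TopicLog:
    """Hypothetical in-memory stand-in for one partition of a log-based topic."""
    events: list = field(default_factory=list)
    offsets: dict = field(default_factory=dict)  # consumer name -> next offset to read

    def append(self, event: dict) -> int:
        """Producer side: append an event and return its offset."""
        self.events.append(event)
        return len(self.events) - 1

    def poll(self, consumer: str, max_events: int = 10) -> list:
        """Consumer side: read from this consumer's own position and advance it."""
        start = self.offsets.get(consumer, 0)
        batch = self.events[start:start + max_events]
        self.offsets[consumer] = start + len(batch)
        return batch

    def lag(self, consumer: str) -> int:
        """Number of appended events this consumer has not yet read."""
        return len(self.events) - self.offsets.get(consumer, 0)

    def rewind(self, consumer: str, offset: int = 0) -> None:
        """Replay: move a consumer back to an earlier offset."""
        self.offsets[consumer] = offset


campaigns = TopicLog()
for campaign_id in ("c-1", "c-2", "c-3"):
    campaigns.append({"type": "campaign_launch", "id": campaign_id})

# Two consumers read the same log independently of each other.
wfm_batch = campaigns.poll("wfm_forecasting", max_events=2)
print(campaigns.lag("wfm_forecasting"))  # one event still unread
print(campaigns.lag("reporting"))        # reporting has read nothing yet
```

In a real deployment the broker persists the log and tracks offsets per consumer group; the sketch only mirrors the bookkeeping that makes lag a usable health signal.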

Signal Schema Design

Each demand signal event should carry a standardized schema that includes:

- Event type identifier (campaign launch, product release, outage)

- Event timestamp (when the triggering action occurred)

- Effective start and end times (the window during which volume impact is expected)

- Anticipated volume impact (absolute or percentage, by contact type)

- Confidence level (high for planned events with historical precedent, low for novel events)

- Source system identifier and event metadata

Standardized schemas allow the forecasting system to process diverse signal types through a common ingestion pipeline, while retaining source-specific metadata for audit and model improvement.
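One way to express such a schema is sketched below as a Python dataclass. The field names, the two-level `Confidence` enum, and the choice of percentage impact keyed by contact type are illustrative assumptions, not a prescribed standard.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from enum import Enum
import json


class Confidence(str, Enum):
    HIGH = "high"  # planned event with historical precedent
    LOW = "low"    # novel event


@dataclass(frozen=True)
class DemandSignal:
    """Hypothetical common envelope for all demand signal types."""
    event_type: str                   # e.g. "campaign_launch", "product_release", "outage"
    event_time: datetime              # when the triggering action occurred
    effective_start: datetime         # start of the expected impact window
    effective_end: datetime           # end of the expected impact window
    impact_pct_by_contact_type: dict  # e.g. {"voice": 15.0}
    confidence: Confidence
    source_system: str
    metadata: dict = field(default_factory=dict)  # source-specific details for audit

    def __post_init__(self):
        if self.effective_end <= self.effective_start:
            raise ValueError("effective_end must be after effective_start")

    def to_json(self) -> str:
        """Serialize to JSON with ISO 8601 timestamps."""
        payload = asdict(self)
        for key in ("event_time", "effective_start", "effective_end"):
            payload[key] = payload[key].isoformat()
        payload["confidence"] = self.confidence.value
        return json.dumps(payload, sort_keys=True)


signal = DemandSignal(
    event_type="campaign_launch",
    event_time=datetime(2024, 3, 1, 9, 0, tzinfo=timezone.utc),
    effective_start=datetime(2024, 3, 4, 8, 0, tzinfo=timezone.utc),
    effective_end=datetime(2024, 3, 6, 20, 0, tzinfo=timezone.utc),
    impact_pct_by_contact_type={"voice": 15.0, "chat": 25.0},
    confidence=Confidence.HIGH,
    source_system="marketing_platform",
    metadata={"campaign_id": "spring-promo"},
)
print(signal.to_json())
```

Keeping source-specific details in `metadata` lets every signal type share one ingestion path while the common fields stay small and stable.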

CRM as a Demand Signal Source

Customer relationship management platforms are a rich source of structured demand signals because they contain scheduled customer lifecycle events that reliably drive contact volume. Examples include:

- Cohorts of customers approaching contract renewal dates (generating renewal inquiry contacts)

- Payment failure batches processed on defined billing cycles (generating payment issue contacts)

- Warranty expiration cohorts (generating support inquiry contacts)

- Loyalty program tier changes (generating account inquiry contacts)

CRM events can be exported as scheduled batch feeds or published as real-time events via webhook or API. The volume impact of a cohort event can be estimated by applying the historical contact rate observed for similar cohorts to the size of the new cohort, which yields a quantifiable forecast adjustment.
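As a rough illustration of that estimate, the sketch below pools past cohorts into a single contact rate and applies it to an upcoming cohort. The function names and the sample figures are hypothetical, and a pooled rate is only one possible estimator.

```python
def pooled_contact_rate(past_cohorts):
    """Total contacts divided by total customers across similar past cohorts.

    past_cohorts: iterable of (cohort_size, contacts_generated) pairs.
    """
    past_cohorts = list(past_cohorts)
    total_customers = sum(size for size, _ in past_cohorts)
    total_contacts = sum(contacts for _, contacts in past_cohorts)
    if total_customers == 0:
        raise ValueError("no customers in the historical cohorts")
    return total_contacts / total_customers


def expected_contacts(cohort_size, contact_rate):
    """Expected incremental contacts for an upcoming cohort."""
    if cohort_size < 0 or contact_rate < 0:
        raise ValueError("cohort_size and contact_rate must be non-negative")
    return cohort_size * contact_rate


# Illustrative numbers only: three past renewal cohorts and one upcoming cohort.
past_renewal_cohorts = [(10_000, 820), (12_000, 930), (8_000, 650)]
rate = pooled_contact_rate(past_renewal_cohorts)
adjustment = expected_contacts(15_000, rate)
print(f"contact rate {rate:.3f}, expected contacts {adjustment:.0f}")
```

The resulting count still has to be distributed over the impact window and split by contact type before it becomes a forecast adjustment.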

Marketing Campaign Integration

Marketing campaign platforms (email service providers, demand-side platforms, CRM marketing modules) maintain campaign calendars and deployment schedules. Integrating these with the WFM forecasting pipeline requires:

- A data feed from the campaign platform that publishes campaign deployment events with send volume, target segment, and campaign type

- A historical response model that translates campaign type and send volume into expected contact volume by channel and time window

- A mechanism to inject the forecast adjustment into the WFM system with appropriate timing (before the campaign deploys)

The challenge in marketing integration is that the volume impact of campaigns varies significantly by offer type, customer segment, and campaign fatigue levels — requiring a calibrated model rather than a fixed volume multiplier. Forecasting Methods that incorporate campaign variables as regression features in time-series models (rather than treating them as manual overrides) produce more accurate and auditable adjustments.
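The sketch below shows the shape of such a calibrated response model in its simplest form. It is a deliberately reduced stand-in for a regression with campaign features: one least-squares slope through the origin per campaign type, fitted on historical (send volume, incremental contacts) pairs. The function names, the sample history, and the `response_curve` that spreads contacts over time windows are all assumptions for illustration.

```python
from collections import defaultdict


def fit_response_rates(history):
    """Fit contacts-per-send for each campaign type.

    history: iterable of (campaign_type, send_volume, incremental_contacts).
    Uses the least-squares slope through the origin: sum(x*y) / sum(x*x).
    """
    sum_xy = defaultdict(float)
    sum_xx = defaultdict(float)
    for campaign_type, send_volume, incremental_contacts in history:
        sum_xy[campaign_type] += send_volume * incremental_contacts
        sum_xx[campaign_type] += send_volume ** 2
    return {t: sum_xy[t] / sum_xx[t] for t in sum_xx if sum_xx[t] > 0}


def predict_incremental_contacts(rates, campaign_type, send_volume, response_curve):
    """Expected incremental contacts per time window after deployment.

    response_curve: share of total response landing in each window; must sum to 1.
    """
    if abs(sum(response_curve) - 1.0) > 1e-9:
        raise ValueError("response_curve must sum to 1")
    total = rates[campaign_type] * send_volume
    return [total * share for share in response_curve]


# Illustrative history: promotions drive far more contacts per send than newsletters.
history = [
    ("promo", 100_000, 2_000),
    ("promo", 50_000, 1_000),
    ("newsletter", 100_000, 300),
]
rates = fit_response_rates(history)
by_day = predict_incremental_contacts(rates, "promo", 80_000, [0.5, 0.3, 0.2])
print(rates)
print(by_day)
```

A production model would add segment, offer, and fatigue features rather than a single rate per type, but the interface stays the same: campaign metadata in, contacts per time window out.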

Integration with WFM Forecasting Systems

Forecast Override Architecture

Demand signals can be integrated with WFM forecasting systems in two architectural patterns:

Model-embedded signals: Campaign and event variables are included as features in the forecasting model. The model is trained on historical data including event metadata, learning the volume impact of different event types directly. This produces internally consistent forecasts but requires retraining when new event types emerge.

Override layers: The base forecast (generated from historical patterns alone) is adjusted by a separate signal-driven override calculation. Each demand signal produces an additive or multiplicative adjustment applied on top of the base forecast for the affected time window. Override layers are more transparent and easier to audit but require ongoing calibration of override magnitudes.

Most production implementations combine both approaches: model-embedded signals for well-understood, high-frequency events and override layers for novel or low-frequency events.
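A minimal sketch of an override layer follows. The `Override` type and `apply_overrides` function are hypothetical, and the combination rule (multiply the base by all active multipliers, then add all active additive adjustments, floored at zero) is one assumed convention among several reasonable ones.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Override:
    start: datetime  # inclusive
    end: datetime    # exclusive
    kind: str        # "additive" or "multiplicative"
    value: float     # contacts to add, or factor to multiply by


def apply_overrides(base_forecast, overrides):
    """Return a new forecast with signal-driven adjustments applied per interval.

    base_forecast: dict mapping interval start -> forecast volume.
    """
    adjusted = {}
    for interval_start, base in base_forecast.items():
        multiplier, addition = 1.0, 0.0
        for o in overrides:
            if o.start <= interval_start < o.end:
                if o.kind == "multiplicative":
                    multiplier *= o.value
                elif o.kind == "additive":
                    addition += o.value
                else:
                    raise ValueError(f"unknown override kind: {o.kind}")
        adjusted[interval_start] = max(0.0, base * multiplier + addition)
    return adjusted


t0 = datetime(2024, 3, 4, 9, 0)
# Four 30-minute intervals with a flat base forecast of 100 contacts each.
base = {t0 + timedelta(minutes=30 * i): 100.0 for i in range(4)}
overrides = [
    Override(t0 + timedelta(minutes=30), t0 + timedelta(minutes=90), "multiplicative", 1.2),
    Override(t0 + timedelta(minutes=60), t0 + timedelta(minutes=120), "additive", 15.0),
]
adjusted = apply_overrides(base, overrides)
for interval_start, volume in adjusted.items():
    print(interval_start.time(), round(volume, 1))
```

Because the base forecast is left untouched, each adjustment can be logged and reviewed separately, which is the transparency advantage described above.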

Forecast Update Frequency

Demand signal architecture enables more frequent forecast updates than traditional weekly planning cycles. With event-driven signals, the forecast can update in near-real-time when triggering events occur:

- A product outage is detected → outage event published → WFM forecast for the next 4 hours updated immediately → Real-Time Operations team notified of expected volume surge

This capability is particularly valuable for Real-Time Operations and intraday management, where the current-period forecast is the basis for staffing decisions with sub-hour horizons.

Governance and Data Quality

Signal Validation

Upstream systems publishing demand signals may publish erroneous, duplicate, or malformed events. The demand signal pipeline should include:

- Schema validation at ingestion — events that do not conform to the required schema are rejected and routed to an error log for investigation

- Duplicate detection — events with matching event IDs are deduplicated before forecast adjustment

- Magnitude sanity checks — forecast adjustments that exceed defined thresholds (e.g., +200% volume impact) trigger human review before application
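The three checks above can be sketched as a single triage step. The required field names (including `event_id`, which the deduplication check presumes every event carries), the flat `impact_pct` field, and the `triage` function are assumptions for illustration; the 200% threshold is the example value from the list above.

```python
REQUIRED_FIELDS = {"event_id", "event_type", "effective_start", "effective_end", "impact_pct"}
MAX_ABS_IMPACT_PCT = 200.0  # review threshold; example value, tune per operation


def triage(events, seen_ids=()):
    """Split incoming events into accepted, rejected, and needs-review lists.

    Duplicates (event_id already seen) are dropped silently and counted.
    """
    accepted, rejected, needs_review = [], [], []
    duplicates = 0
    seen = set(seen_ids)
    for event in events:
        # Schema validation: reject events with missing required fields.
        missing = REQUIRED_FIELDS - set(event)
        if missing:
            rejected.append((event, "missing fields: " + ", ".join(sorted(missing))))
            continue
        # Duplicate detection on the event identifier.
        if event["event_id"] in seen:
            duplicates += 1
            continue
        seen.add(event["event_id"])
        # Magnitude sanity check: large adjustments wait for human review.
        if abs(event["impact_pct"]) > MAX_ABS_IMPACT_PCT:
            needs_review.append(event)
        else:
            accepted.append(event)
    return accepted, rejected, needs_review, duplicates


window = {"effective_start": "2024-03-04T08:00:00Z", "effective_end": "2024-03-04T20:00:00Z"}
events = [
    {"event_id": "e-1", "event_type": "campaign_launch", "impact_pct": 20.0, **window},
    {"event_id": "e-1", "event_type": "campaign_launch", "impact_pct": 20.0, **window},
    {"event_id": "e-2", "event_type": "outage", **window},  # impact_pct missing
    {"event_id": "e-3", "event_type": "outage", "impact_pct": 350.0, **window},
]
accepted, rejected, needs_review, duplicates = triage(events)
print(len(accepted), len(rejected), len(needs_review), duplicates)
```

In this sketch the `rejected` list plays the role of the error log, and `needs_review` is the queue that a planner would clear before the adjustment is applied.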

Audit Trail

Every forecast adjustment derived from a demand signal should be logged with the originating event, the adjustment applied, and the model or rule that translated the signal to an adjustment. This audit trail supports post-event accuracy review — comparing the actual volume impact to the predicted adjustment — and model improvement cycles.

Reporting Integration

Demand signal performance should be tracked as a standard operational metric:

- Signal accuracy (predicted impact vs. actual volume impact, by signal type)

- Signal timeliness (lead time between signal receipt and volume impact)

- Coverage (% of volume variation explained by tracked demand signals vs. unexplained residual)
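The sketch below shows one possible way to compute these three metrics from audit records. The record fields, the use of mean absolute percentage error for accuracy, and the coverage definition (share of absolute deviation from the base forecast that the signal-adjusted forecast removes) are all assumptions; other definitions are equally defensible.

```python
from collections import defaultdict
from datetime import datetime


def accuracy_by_type(records):
    """Mean absolute percentage error of predicted vs. actual impact, per signal type."""
    errors = defaultdict(list)
    for r in records:
        if r["actual_impact"] != 0:  # skip records where a percentage error is undefined
            err = abs(r["predicted_impact"] - r["actual_impact"]) / abs(r["actual_impact"])
            errors[r["signal_type"]].append(err)
    return {t: sum(v) / len(v) for t, v in errors.items()}


def lead_time_hours(record):
    """Hours between receiving the signal and the start of its volume impact."""
    return (record["impact_start"] - record["received_at"]).total_seconds() / 3600


def coverage(base, adjusted, actual):
    """Share of absolute deviation from the base forecast explained by signals.

    Can be negative if the adjustments moved the forecast away from actuals.
    """
    total = sum(abs(a - b) for a, b in zip(actual, base))
    residual = sum(abs(a - f) for a, f in zip(actual, adjusted))
    if total == 0:
        raise ValueError("actuals match the base forecast; coverage is undefined")
    return 1 - residual / total


# Illustrative audit records and interval volumes.
records = [
    {"signal_type": "campaign", "predicted_impact": 1000, "actual_impact": 1250,
     "received_at": datetime(2024, 3, 1, 9, 0), "impact_start": datetime(2024, 3, 4, 9, 0)},
    {"signal_type": "campaign", "predicted_impact": 900, "actual_impact": 1000,
     "received_at": datetime(2024, 3, 8, 9, 0), "impact_start": datetime(2024, 3, 11, 9, 0)},
    {"signal_type": "outage", "predicted_impact": 300, "actual_impact": 200,
     "received_at": datetime(2024, 3, 5, 14, 0), "impact_start": datetime(2024, 3, 5, 14, 30)},
]
mape = accuracy_by_type(records)
lead = lead_time_hours(records[0])
cov = coverage(base=[100, 100, 100, 100], adjusted=[100, 120, 135, 115], actual=[105, 125, 130, 110])
print(mape, lead, round(cov, 3))
```

Tracking the metrics per signal type makes it visible which sources justify model-embedded treatment and which still need manual calibration.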

Maturity Model Considerations

| Maturity Level | Demand Signal Architecture |
|---|---|
| L1–L2 | No demand signal integration; forecasts based entirely on historical volume patterns; known events manually entered as override adjustments |
| L3 | Structured manual override process for known events (campaigns, outages); calendar event integration for seasonal patterns |
| L4 | CRM and marketing platform batch integration; systematic campaign volume models; event-driven override layer in place |
| L5 | Real-time event streaming for demand signals; model-embedded signal features; automated forecast updates on signal receipt; signal accuracy monitoring as operational metric |

Related Concepts

- Forecasting Methods

- WFM Data Infrastructure and Integration Architecture

- Real-Time Operations

- Capacity Planning Methods

- Reporting and Analytics Framework

- WFM Ecosystem Architecture

- Intelligent Automation

- WFM Labs Maturity Model

References

- [1] Kleppmann, M. (2017). Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems. O'Reilly Media.

- [2] Confluent. (2023). Real-Time Event Streaming for the Contact Center. Confluent, Inc.

- [3] Kleppmann, M. (2017). Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems. O'Reilly Media.