Real Time Threshold Alerts and Escalation Protocols

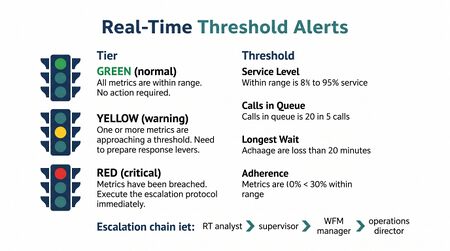

Real-time threshold alerts are automated or rule-based notifications generated by contact center monitoring systems when a key operational metric crosses a predefined boundary. Escalation protocols are the documented decision procedures that govern how the receiving party responds to each alert class—whether to act autonomously, escalate to a supervisor, or invoke incident management. Together, the alert architecture and the escalation protocol constitute the operational nervous system of real-time operations: threshold alerts surface problems that require attention; escalation protocols determine the appropriate response, authority level, and time horizon for resolution.[1] Without defined protocols, alerts generate noise rather than action, and operational response becomes inconsistent across analysts and shifts.

Alert Classes and Triggering Conditions

Threshold alerts in contact center environments are typically organized by the metric being monitored. The most operationally significant alert classes are described below.

Service Level Alerts

Service level alerts fire when the percentage of contacts answered within the target threshold falls below a defined floor. Common configurations:

- Warning threshold: service level below 80% of goal for one interval (e.g., below 64% when the goal is 80/20)

- Critical threshold: service level below 60% of goal for two consecutive intervals

- Recovery threshold: service level recovering above warning threshold, confirming an intervention has taken effect
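The three thresholds above can be expressed as a small classifier. This is a minimal sketch, assuming service level arrives as one fraction per completed interval (oldest first) and that a critical condition takes precedence over a warning; the function name `classify_service_level` is hypothetical and not part of any WFM platform API.

```python
from typing import Optional, Sequence


def classify_service_level(history: Sequence[float], goal: float = 0.80) -> Optional[str]:
    """Classify recent service level readings against goal-relative floors.

    history: service level per interval as fractions, oldest first (assumed input shape).
    """
    warning_floor = 0.80 * goal   # 64% when the goal is 80/20
    critical_floor = 0.60 * goal  # 48% when the goal is 80/20

    # Critical: below 60% of goal for two consecutive intervals.
    if len(history) >= 2 and all(sl < critical_floor for sl in history[-2:]):
        return "critical"
    # Warning: below 80% of goal for one interval.
    if history and history[-1] < warning_floor:
        return "warning"
    # Recovery: previous interval was below the warning floor, latest is back above it.
    if len(history) >= 2 and history[-2] < warning_floor <= history[-1]:
        return "recovery"
    return None


print(classify_service_level([0.70, 0.60]))  # warning
print(classify_service_level([0.40, 0.45]))  # critical
print(classify_service_level([0.60, 0.70]))  # recovery
```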

Service level is a lagging indicator within an interval—it reflects contacts already handled. Alert systems that rely solely on service level react after the problem has materialized. More sophisticated implementations alert on predicted service level: a projection based on current queue depth, staffing, and real-time arrival rate.[2]
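One common way to project service level from staffing, handle time, and arrival rate is the Erlang C (M/M/N) queueing model. The sketch below is an illustration under stated assumptions, not a description of how any particular alerting system predicts service level: it assumes Poisson arrivals, exponentially distributed handle times, no abandonment, and steady state. It also ignores the current queue depth, so a real-time predictor would need to account for the existing backlog separately. Function and parameter names are hypothetical.

```python
import math


def erlang_c(agents: int, traffic: float) -> float:
    """Probability that an arriving contact must wait (Erlang C); traffic in Erlangs."""
    if agents <= traffic:
        return 1.0  # offered load meets or exceeds capacity: every contact waits
    # Erlang B by the standard recursion, which avoids large factorials.
    b = 1.0
    for n in range(1, agents + 1):
        b = traffic * b / (n + traffic * b)
    # Convert Erlang B to Erlang C.
    return agents * b / (agents - traffic * (1.0 - b))


def predicted_service_level(arrivals_per_hour: float, aht_seconds: float,
                            agents: int, target_seconds: float) -> float:
    """Projected fraction of contacts answered within target_seconds."""
    traffic = arrivals_per_hour * aht_seconds / 3600.0  # offered load in Erlangs
    if agents <= traffic:
        return 0.0  # unstable queue: steady-state service level collapses
    p_wait = erlang_c(agents, traffic)
    return 1.0 - p_wait * math.exp(-(agents - traffic) * target_seconds / aht_seconds)


# Alert on the projection rather than the observed value, e.g. against the 64% warning floor.
projected = predicted_service_level(arrivals_per_hour=400, aht_seconds=300,
                                    agents=36, target_seconds=20)
print(f"{projected:.1%}", "warning" if projected < 0.64 else "ok")
```

Because the projection reacts as soon as arrival rate or staffing changes, it can fire before the interval's observed service level has had time to fall.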

Average Speed of Answer (ASA) Alerts

Average speed of answer alerts trigger when the mean wait time of contacts answered so far in the interval exceeds a defined threshold. ASA is a more sensitive early-warning indicator than service level during a developing queue failure because it rises before service level degrades to critical levels. A representative triggering condition: ASA exceeding 150% of target for three consecutive minutes.
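The representative condition (150% of target for three consecutive minutes) can be checked with a rolling window of breach flags. This is a minimal sketch, assuming the platform supplies one ASA reading per minute; the class name `AsaAlert` is hypothetical.

```python
from collections import deque


class AsaAlert:
    """Fires when ASA exceeds ratio x target for N consecutive one-minute readings."""

    def __init__(self, target_seconds: float, ratio: float = 1.5, minutes_required: int = 3):
        self.limit = target_seconds * ratio
        # Only the most recent readings matter, so a bounded deque is enough.
        self.breaches = deque(maxlen=minutes_required)

    def observe(self, asa_seconds: float) -> bool:
        """Record one per-minute ASA reading and report whether the alert should fire."""
        self.breaches.append(asa_seconds > self.limit)
        return len(self.breaches) == self.breaches.maxlen and all(self.breaches)


alert = AsaAlert(target_seconds=20)  # limit = 30 seconds
for minute, asa in enumerate([28, 35, 31, 33, 29], start=1):
    print(minute, alert.observe(asa))
```

With these readings the alert fires only at minute 4, the third consecutive breach; a single reading at or below the limit resets the run.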

Queue Depth Alerts

Queue depth alerts fire when the number of contacts waiting in queue exceeds an absolute count (e.g., more than 25 contacts holding). Queue depth is an instantaneous metric rather than an interval average, making it well-suited for detecting sudden arrival spikes before they propagate into service level degradation. Queue depth thresholds must be calibrated to the operation's size and handle time—a threshold of 25 contacts is material in a 40-agent center and negligible in a 400-agent center.
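The size dependence can be made concrete with a back-of-envelope approximation: if every agent is busy and each frees up on average once per AHT, the queue drains at roughly agents / AHT contacts per second, so the last contact in a queue of depth d waits about d × AHT / agents. This is a sketch under that simplifying assumption (it ignores arrivals during the wait and idle agents); the function names and the 120-second maximum acceptable wait are hypothetical.

```python
import math


def implied_wait_seconds(queue_depth: int, aht_seconds: float, agents_in_service: int) -> float:
    """Rough wait for the last contact in queue, assuming all agents are busy
    and each completes one contact per AHT on average."""
    return queue_depth * aht_seconds / agents_in_service


def depth_threshold(max_wait_seconds: float, aht_seconds: float, agents_in_service: int) -> int:
    """Largest queue depth whose implied wait stays within max_wait_seconds."""
    return math.floor(max_wait_seconds * agents_in_service / aht_seconds)


# The same 25-contact queue, assuming a 300-second AHT:
print(implied_wait_seconds(25, 300, 40))   # 187.5 seconds in a 40-agent center
print(implied_wait_seconds(25, 300, 400))  # 18.75 seconds in a 400-agent center
print(depth_threshold(120, 300, 40), depth_threshold(120, 300, 400))  # 16 160
```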

Occupancy Alerts

Occupancy alerts operate in two directions. High-occupancy alerts (e.g., occupancy above 90% for two consecutive intervals) flag agent fatigue risk and reduced ability to absorb additional demand. Low-occupancy alerts (e.g., occupancy below 65% for three intervals) flag potential overstaffing and may trigger voluntary time-off offer sequences.
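Both directions can share one check, each with its own threshold and required run length. This is a minimal sketch using the example values above (above 90% for two intervals, below 65% for three), assuming occupancy arrives as one fraction per interval, oldest first; `occupancy_alert` is a hypothetical name.

```python
from typing import Optional, Sequence


def occupancy_alert(history: Sequence[float], high: float = 0.90, high_intervals: int = 2,
                    low: float = 0.65, low_intervals: int = 3) -> Optional[str]:
    """Two-directional occupancy check over the most recent intervals (oldest first)."""
    recent_high = history[-high_intervals:]
    if len(recent_high) == high_intervals and all(o > high for o in recent_high):
        return "high"  # fatigue risk, little room to absorb extra demand
    recent_low = history[-low_intervals:]
    if len(recent_low) == low_intervals and all(o < low for o in recent_low):
        return "low"  # possible overstaffing; may start a VTO offer sequence
    return None


print(occupancy_alert([0.88, 0.92, 0.95]))  # high
print(occupancy_alert([0.60, 0.62, 0.64]))  # low
```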

Adherence and Conformance Alerts

Adherence alerts fire at the agent or team level when the proportion of staff in the scheduled state falls below threshold. A center-wide adherence alert (e.g., adherence below 88% across the on-floor population) typically signals an unplanned absence cluster or a systemic schedule deviation, both of which affect staffing position.[3]
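Following the "proportion of staff in the scheduled state" wording, a center-wide check can be computed from a point-in-time snapshot. This is a minimal sketch, assuming the platform exposes each on-floor agent's scheduled and actual state as strings; the state labels and function name are hypothetical, and a time-weighted adherence measure would instead need per-minute state history.

```python
from typing import Iterable, Tuple


def center_adherence(states: Iterable[Tuple[str, str]]) -> float:
    """Share of on-floor agents whose actual state matches their scheduled state."""
    pairs = list(states)
    if not pairs:
        raise ValueError("no on-floor agents in snapshot")
    in_adherence = sum(1 for scheduled, actual in pairs if scheduled == actual)
    return in_adherence / len(pairs)


# 25 agents on floor, 4 of them out of their scheduled state.
snapshot = [("available", "available")] * 21 + [("available", "break")] * 4
adherence = center_adherence(snapshot)
print(f"{adherence:.0%}", "alert" if adherence < 0.88 else "ok")
```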

Average Handle Time Alerts

AHT alerts trigger when current-interval AHT deviates materially from the planned value used in the staffing model, typically by ±15–20%. An AHT spike can be caused by a shift in contact mix toward more complex types, a process change, a system issue, or a discrete event (e.g., a billing error that drives a surge of complex calls). Sustained AHT elevation degrades capacity even when headcount is adequate, because each agent completes fewer contacts per interval.
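The deviation check and its capacity effect are simple arithmetic. This sketch assumes 30-minute intervals, a 15% trigger (the lower end of the band above), and agents handling contacts back to back, so the capacity figure is an upper bound; the function names are hypothetical.

```python
def aht_deviation(actual_aht: float, planned_aht: float) -> float:
    """Signed deviation of actual AHT from the staffing-model AHT, as a fraction."""
    return (actual_aht - planned_aht) / planned_aht


def contacts_per_interval(agents: int, aht_seconds: float, interval_seconds: int = 1800) -> float:
    """Upper-bound handling capacity, assuming agents handle contacts back to back."""
    return agents * interval_seconds / aht_seconds


planned, actual, agents = 300, 360, 30
deviation = aht_deviation(actual, planned)
if abs(deviation) >= 0.15:  # assumed trigger at the lower end of the ±15–20% band
    print(f"AHT alert: {deviation:+.0%}")  # AHT alert: +20%
print(contacts_per_interval(agents, planned), contacts_per_interval(agents, actual))  # 180.0 150.0
```

The same 30 agents lose about a sixth of their capacity when AHT rises 20%, which is why a sustained AHT alert can matter as much as an absence cluster.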

Abandonment Rate Alerts

Abandonment alerts fire when the percentage of callers who disconnect before being answered exceeds a threshold. Abandonment above defined levels (commonly 5–8%, depending on the operation) indicates a customer experience failure and often presages a surge of repeat calls as abandoned callers redial, which compounds the original problem.
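A minimal sketch of the rate calculation, assuming offered contacts are counted as answered plus abandoned and a 6% threshold chosen from inside the 5–8% range above; whether very short abandons are excluded from both counts is an operational choice this sketch does not make. Names are hypothetical.

```python
def abandonment_rate(abandoned: int, answered: int) -> float:
    """Abandoned contacts as a share of offered contacts (answered + abandoned)."""
    offered = abandoned + answered
    return abandoned / offered if offered else 0.0


ABANDON_THRESHOLD = 0.06  # assumed value inside the 5–8% range

rate = abandonment_rate(abandoned=14, answered=186)
print(f"{rate:.1%}", "alert" if rate > ABANDON_THRESHOLD else "ok")
```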

Alert Configuration Principles

Alert configuration requires balancing sensitivity against signal-to-noise ratio. Thresholds set too tightly generate alert fatigue—analysts habituate to firing alerts and response consistency degrades. Thresholds set too loosely fail to surface problems until they are severe.

Effective threshold configuration applies several principles:

- Sustained breach, not instantaneous: most alerts should require the threshold to be exceeded for two or more consecutive intervals before firing, reducing false positives from statistical noise

- Time-of-day calibration: thresholds appropriate for peak intervals may be inappropriate for off-peak; a well-configured system adjusts thresholds by interval group

- Metric correlation: a single metric crossing threshold may be noise; correlated thresholds (e.g., service level below goal AND queue depth above threshold simultaneously) carry higher signal fidelity

- Documented ownership: each alert class should have a named role responsible for acknowledgment within a defined response window
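The first three principles can be combined in one check: thresholds looked up by interval group, two metrics that must breach together, and a required run of consecutive intervals. This is a sketch only; the interval-group boundaries, the off-peak values, and all names are hypothetical, and ownership would normally live in the alert configuration rather than in the check itself.

```python
from typing import Dict, List, Tuple

# Hypothetical interval-group thresholds; real values come from each operation's calibration.
THRESHOLDS: Dict[str, Dict[str, float]] = {
    "peak": {"min_service_level": 0.64, "max_queue_depth": 25},
    "off_peak": {"min_service_level": 0.56, "max_queue_depth": 10},
}


def interval_group(hour: int) -> str:
    """Assumed grouping: 09:00 to 17:00 is peak, everything else off-peak."""
    return "peak" if 9 <= hour < 17 else "off_peak"


def correlated_alert(readings: List[Tuple[float, int]], hour: int, consecutive: int = 2) -> bool:
    """Fire only when service level AND queue depth are both in breach for
    `consecutive` intervals in a row (sustained, correlated breach).

    readings: (service_level, queue_depth) per interval, oldest first.
    """
    limits = THRESHOLDS[interval_group(hour)]
    recent = readings[-consecutive:]
    if len(recent) < consecutive:
        return False
    return all(sl < limits["min_service_level"] and depth > limits["max_queue_depth"]
               for sl, depth in recent)


print(correlated_alert([(0.60, 30), (0.58, 28)], hour=10))  # True: peak, both breached twice
print(correlated_alert([(0.60, 30), (0.70, 28)], hour=10))  # False: service level recovered
```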

Escalation Protocol Structure

An escalation protocol is a documented decision procedure that maps each alert class to a response sequence. Effective protocols specify: who receives the alert, the expected acknowledgment time, the decision authority at each tier, and the conditions under which the alert escalates to the next tier.

Decision Tree Architecture

A well-structured escalation protocol operates as a decision tree with three principal branches:

- Autonomous response: the alert recipient has defined authority to act without additional approval. The response is selected from the approved lever cascade and executed immediately. Examples: break resequencing, queue priority adjustment.

- Supervised response: the alert recipient identifies the appropriate response but requires authorization from a supervisor or team lead. Response is held pending approval. Example: mandatory overtime authorization.

- Incident declaration: the alert cannot be resolved through standard levers; the situation requires escalation to the Resource Optimization Center or operations leadership, and may trigger the Event Management process. Examples: sustained multi-skill queue failure, facility event, platform outage.
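The three branches can be sketched as a routing function over a lever catalogue. This is a minimal illustration, assuming the catalogue of pre-approved autonomous levers contains only the examples above and that any lever not on that list defaults to supervised review; the lever identifiers and function name are hypothetical.

```python
from enum import IntEnum


class Tier(IntEnum):
    AUTONOMOUS = 1
    SUPERVISED = 2
    INCIDENT = 3


# Hypothetical catalogue of levers pre-approved for autonomous use.
AUTONOMOUS_LEVERS = {"break_resequencing", "queue_priority_adjustment"}


def route(proposed_lever: str, standard_levers_sufficient: bool = True) -> Tier:
    """Map an alert response to the decision-tree branch that owns it."""
    if not standard_levers_sufficient:
        return Tier.INCIDENT  # e.g. platform outage or facility event
    if proposed_lever in AUTONOMOUS_LEVERS:
        return Tier.AUTONOMOUS
    # Mandatory overtime and any lever not pre-approved need sign-off (assumption).
    return Tier.SUPERVISED


print(route("break_resequencing").name)   # AUTONOMOUS
print(route("mandatory_overtime").name)   # SUPERVISED
```

Defaulting unknown levers to the supervised branch keeps new or unusual responses from bypassing approval.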

Response Time Windows

Protocols should specify maximum response times by tier:

| Alert Tier | Acknowledgment Target | Response Initiation Target |
|---|---|---|
| Tier 1 (Autonomous) | 2 minutes | 5 minutes |
| Tier 2 (Supervised) | 5 minutes | 15 minutes |
| Tier 3 (Incident) | 10 minutes | Incident protocol timeline |

These windows are representative; actual targets vary by operation size and SLA sensitivity.
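A minimal sketch of checking an open alert against the representative windows in the table. It assumes both targets are measured from the moment the alert fired (the table does not say whether response initiation is measured from firing or from acknowledgment) and that Tier 3 response timing is handled by the incident protocol; names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Optional, Tuple

# Representative targets from the table; None means the incident protocol timeline applies.
WINDOWS: Dict[int, Tuple[timedelta, Optional[timedelta]]] = {
    1: (timedelta(minutes=2), timedelta(minutes=5)),
    2: (timedelta(minutes=5), timedelta(minutes=15)),
    3: (timedelta(minutes=10), None),
}


def missed_targets(tier: int, fired_at: datetime, now: datetime,
                   acknowledged_at: Optional[datetime] = None,
                   response_started_at: Optional[datetime] = None) -> List[str]:
    """List the response-window targets this alert has missed so far."""
    ack_target, response_target = WINDOWS[tier]
    missed = []
    # An unacknowledged alert is measured against the current time.
    if (acknowledged_at or now) - fired_at > ack_target:
        missed.append("acknowledgment")
    if response_target is not None and (response_started_at or now) - fired_at > response_target:
        missed.append("response initiation")
    return missed


t0 = datetime(2024, 1, 1, 10, 0)
print(missed_targets(1, t0, t0 + timedelta(minutes=3)))  # ['acknowledgment']
```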

Escalation Conditions

Escalation from Tier 1 to Tier 2 is triggered when:

- The autonomous response has been applied and the metric has not recovered within two intervals

- The alert class is above Tier 1 authority by definition (e.g., any alert requiring overtime authorization)

- Multiple simultaneous alerts create a compound situation that exceeds the analyst's decision scope

Escalation from Tier 2 to Tier 3 (incident declaration) is triggered when:

- The situation affects multiple queues, skills, or sites simultaneously

- Standard levers have been exhausted without recovery

- An external cause (system outage, facility event) is identified that requires cross-functional coordination
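The escalation conditions can be evaluated one tier at a time. This is a minimal sketch, assuming the protocol escalates stepwise rather than jumping tiers, and treating "exceeds the analyst's decision scope" as more than a configurable number of concurrent alerts (default 2, a hypothetical value). The `Situation` fields are illustrative, not a WFM platform schema.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    """Hypothetical snapshot of an open alert; field names are illustrative."""
    tier: int
    response_applied: bool = False
    intervals_since_response: int = 0
    metric_recovered: bool = False
    requires_overtime: bool = False
    concurrent_alerts: int = 1
    affected_scopes: int = 1  # queues, skills, or sites affected
    levers_exhausted: bool = False
    external_cause: bool = False


def next_tier(s: Situation, max_concurrent_alerts: int = 2) -> int:
    """Apply the Tier 1 to 2 and Tier 2 to 3 escalation conditions."""
    if s.tier == 1:
        stalled = (s.response_applied and not s.metric_recovered
                   and s.intervals_since_response >= 2)
        compound = s.concurrent_alerts > max_concurrent_alerts  # assumed scope limit
        return 2 if (stalled or s.requires_overtime or compound) else 1
    if s.tier == 2:
        if s.affected_scopes > 1 or s.levers_exhausted or s.external_cause:
            return 3
        return 2
    return 3


print(next_tier(Situation(tier=1, response_applied=True, intervals_since_response=2)))  # 2
```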

Documentation Requirements

Every alert acknowledgment, response action, and escalation should be logged in real time. The alert log serves multiple downstream purposes: it feeds Variance Harvesting for root-cause analysis; it provides evidence for compliance and audit purposes; and it allows the operation to track which alert configurations are generating false positives versus true operational signals.
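The false-positive tracking purpose can be supported by a log record that carries a reviewed outcome. This is a minimal sketch, assuming each alert is labelled as a true or false signal during post-day review; the field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class AlertLogEntry:
    """One logged alert; field names are hypothetical."""
    alert_class: str
    fired_at: datetime
    acknowledged_at: Optional[datetime] = None
    action: Optional[str] = None
    escalated_to_tier: Optional[int] = None
    true_signal: Optional[bool] = None  # set during post-day review


def false_positive_rate(log: List[AlertLogEntry], alert_class: str) -> Optional[float]:
    """Share of reviewed alerts in a class that turned out not to need action."""
    reviewed = [e for e in log if e.alert_class == alert_class and e.true_signal is not None]
    if not reviewed:
        return None  # nothing reviewed yet for this class
    return sum(1 for e in reviewed if not e.true_signal) / len(reviewed)
```

A class whose rate stays high over several weeks is a candidate for a looser threshold, a longer sustained-breach window, or correlation with a second metric.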

Integration with Intraday Management

Threshold alerts are not standalone tools. They function as the triggering mechanism for the Intraday Management cycle—surfacing the variances that require reforecasting or lever activation. An alert architecture without a connected intraday management process produces alerts that are acknowledged but not acted upon. Conversely, an intraday management process without alert integration relies on the analyst to identify problems manually, which introduces detection lag and cognitive load.

The connection between alerting and intraday management should be explicit in the operation's Daily ROC Routine documentation: which alerts trigger which review steps, and what authority the on-duty Real-Time Analyst holds for each response class.
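One way to keep that connection explicit is to hold it as configuration and check that no configured alert class lacks a defined response. This is a sketch only; the alert classes, review steps, and authority labels below are hypothetical placeholders, not content from any Daily ROC Routine.

```python
from typing import Dict, NamedTuple, Set


class RunbookStep(NamedTuple):
    review_step: str
    analyst_authority: str  # "autonomous", "supervised", or "incident"


# Hypothetical mapping of alert classes to intraday review steps and authority.
RUNBOOK: Dict[str, RunbookStep] = {
    "service_level": RunbookStep("reforecast remaining intervals", "autonomous"),
    "queue_depth": RunbookStep("check arrivals against forecast", "autonomous"),
    "occupancy_high": RunbookStep("review overtime options", "supervised"),
}


def unmapped_alert_classes(configured_alerts: Set[str]) -> Set[str]:
    """Alert classes that would fire without a defined intraday response."""
    return configured_alerts - set(RUNBOOK)


print(unmapped_alert_classes({"service_level", "abandonment"}))  # {'abandonment'}
```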

Maturity Model Considerations

| Maturity Level | Alert and Escalation Characteristics |
|---|---|
| L1: Reactive | No automated alerting. Problems identified visually or by escalation from floor supervisors. No documented escalation protocol. Response ad hoc and inconsistent. |
| L2: Foundational | Basic threshold alerts configured in WFM platform. Alert thresholds static and uniform across all intervals. Escalation protocol documented but applied inconsistently. Alert log maintained manually. |
| L3: Integrated | Alert thresholds calibrated by interval group and metric class. Sustained-breach logic reduces false positives. Escalation protocol enforced via SOP with documented response windows. Alert log integrated into post-day variance review. |
| L4: Optimized | Predictive alerts based on projected metric values, not just observed. Correlated alert logic reduces noise. Automated Tier 1 responses executed without analyst initiation. Alert resolution tracked and reported. |
| L5: Adaptive | Alert architecture extended to agentic workforce metrics. Escalation protocols account for human-AI capacity blends. Self-adjusting thresholds based on real-time operating conditions. |

Related Concepts

- Real-Time Operations

- Intraday Management

- Real-Time Analyst Role and Responsibilities

- Real-Time Staffing Visualization and Wallboards

- Daily ROC Routine

- Resource Optimization Center (ROC)

- Event Management

- Variance Harvesting

- Service Level

- Occupancy

- Adherence and Conformance

- Average Handle Time

- Overtime and Voluntary Time Off (VTO) Management

- WFM Labs Maturity Model

References

- Fugate, D. (2018). Real-Time Management Fundamentals. ICMI Press.

- Gans, N., Koole, G., & Mandelbaum, A. (2003). Telephone call centers: Tutorial, review, and research prospects. Manufacturing & Service Operations Management, 5(2), 79–141.

- Fugate, D. (2018). Real-Time Management Fundamentals. ICMI Press.