Complexity Theory for WFM Practitioners

Complexity Theory for WFM Practitioners explains why some workforce management problems are fundamentally harder than others, what that hardness means in practice, and why it matters for every WFM professional who uses optimization software. This page translates computational complexity from a branch of theoretical computer science into operational intuition: why the scheduler sometimes runs for hours, why "optimal" doesn't always mean "best possible," and why certain problem configurations are inherently more difficult than others.

Overview

A WFM planner asks the vendor: "Why does the optimizer take 45 minutes for Monday's schedule but only 3 minutes for Saturday's?" The answer lies in computational complexity — the study of how problem difficulty scales with problem size and structure.

The central insight: some problems are fundamentally harder than others, not because our algorithms are bad, but because the problems themselves resist efficient solution. Shift scheduling, multi-skill rostering, and many other core WFM problems belong to a class of problems (NP-hard) for which no efficient exact algorithm is known — and, most researchers believe, no such algorithm exists.

This isn't a reason for despair. It's a reason for informed expectations. Understanding complexity explains:

- Why solver time limits exist and are necessary

- Why WFM software uses heuristics and metaheuristics rather than exhaustive search

- Why "optimal" in a vendor demo may mean "best found within a time budget"

- Why adding a few constraints can transform a solvable problem into an intractable one

Mathematical Foundation

Decision Problems and Complexity Classes

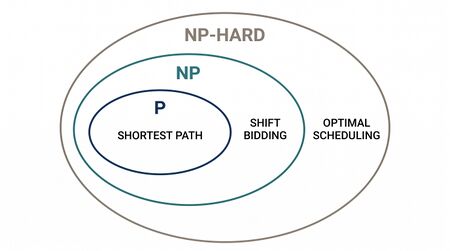

Complexity theory classifies decision problems (yes/no questions) into classes based on the computational resources required to solve them. The key classes relevant to WFM:

P (Polynomial Time): Problems solvable in time for some constant , where is the input size. These are "efficiently solvable" problems. Doubling the input size increases runtime by a bounded factor.

WFM examples in P:

- Shortest path for agent commute optimization — Dijkstra's algorithm solves it in

- Maximum matching on a bipartite graph — assignable agents to shifts when each agent fits exactly one skill group, solvable in

- Linear programming — core staffing requirement calculations, solvable in polynomial time (ellipsoid method, interior point methods)

- Network flow — load balancing across sites, solvable in

NP (Nondeterministic Polynomial Time): Problems where a proposed solution can be verified in polynomial time. If someone hands you a complete schedule and claims it satisfies all constraints, you can check that claim quickly. But finding such a schedule from scratch may be hard.

NP-hard: Problems at least as hard as the hardest problems in NP. No known polynomial-time algorithm exists. Every NP problem can be reduced to an NP-hard problem.

NP-complete: Problems that are both in NP and NP-hard. These are the "hardest problems in NP" — if you solve any one of them efficiently, you've solved all of them.

The relationship: . Whether (i.e., whether every problem whose solution can be verified quickly can also be solved quickly) is the most important open question in computer science. The overwhelming consensus: .

The Verification Asymmetry

The P vs NP distinction maps to an everyday WFM experience: checking a schedule is easy; building an optimal one is hard.

Given a completed schedule, a WFM planner can verify in minutes:

- Does every interval meet minimum staffing?

- Does every agent have required breaks and meal periods?

- Are labor law constraints satisfied?

- Does total cost equal the claimed amount?

Each check is polynomial-time — scan the schedule, verify each constraint. But generating the schedule that simultaneously satisfies all these constraints while minimizing cost may require exploring an exponential number of possibilities.

This asymmetry is exactly the P vs NP gap. Verification (checking a proposed answer) is in P. Search (finding the best answer) is NP-hard.

NP-Hardness Proofs Through Reduction

A problem is NP-hard if every known NP-hard problem can be reduced to — meaning an efficient solution to would imply efficient solutions to all NP-hard problems. Reductions work by transforming one problem into another while preserving the difficulty.

Graph Coloring → Multi-Skill Scheduling:

Graph coloring asks: can the vertices of a graph be colored with colors such that no two adjacent vertices share a color? This is NP-complete for .

Multi-skill scheduling reduces to graph coloring:

- Agents are vertices

- Two agents are "adjacent" (share an edge) if they cannot be scheduled simultaneously (conflicting skill requirements, same time slot, same workstation)

- "Colors" are schedule assignments (shift-skill combinations)

- Finding the minimum number of schedule patterns (colors) needed is at least as hard as graph coloring

Since graph coloring is NP-hard, multi-skill scheduling is at least NP-hard. No polynomial-time exact algorithm exists unless P = NP.

Bin Packing → Shift Consolidation:

Bin packing asks: given items of various sizes and bins of capacity , what is the minimum number of bins needed? This is NP-hard.

Shift consolidation reduces to bin packing:

- Agents are items (with "sizes" representing their availability constraints)

- Shifts are bins (with "capacity" representing coverage needs)

- Minimizing the number of distinct shift types while covering all requirements is equivalent to minimizing bins

What NP-Hardness Does NOT Mean

Common misconceptions:

- Does NOT mean "impossible to solve." It means no efficient exact algorithm is known. Heuristics, approximation algorithms, and solvers with time limits produce excellent solutions every day.

- Does NOT mean "every instance is hard." NP-hardness is about worst-case complexity. Many real WFM instances have structure (regularity, symmetry, sparsity) that makes them much easier than the worst case.

- Does NOT mean "exponential time required." Branch-and-bound solvers often find optimal solutions quickly by pruning the search tree. The pathological cases are rare.

- Does NOT mean "bigger is always harder." A 1,000-agent problem with simple constraints may solve faster than a 50-agent problem with complex multi-skill requirements.

What NP-hardness DOES mean: as problem size and constraint complexity grow, you will eventually hit a wall where exact solutions require impractical computation time. Planning for that wall — through time limits, heuristics, and approximation algorithms — is engineering prudence, not intellectual surrender.

Complexity of Specific WFM Problems

| WFM Problem | Complexity | Why | Practical Impact |

|---|---|---|---|

| Single-skill staffing (Erlang) | P | Closed-form formula | Solves instantly |

| Linear staffing optimization | P | LP solvable in polynomial time | Solves in seconds for large instances |

| Shift assignment (integer) | NP-hard | Reduces to set cover | Requires ILP solver with time limits |

| Multi-skill scheduling | NP-hard | Reduces to graph coloring | Heuristics often necessary for large instances |

| Shift generation with breaks | NP-hard | Reduces to bin packing with conflicts | Column generation helps but worst case is hard |

| Rostering (cyclic) | NP-hard | Reduces to Hamiltonian cycle | Pattern-based heuristics used |

| Optimal skill-based routing | NP-hard (general) | Reduces to online bipartite matching | Real-time heuristics required |

| Agent-to-team assignment | NP-hard | Reduces to partitioning | Metaheuristics (SA, GA) common |

| Break optimization | NP-hard | Reduces to scheduling with machine-dependent processing | Constraint programming effective |

WFM Applications

Why Solver Time Limits Exist

Every commercial WFM optimizer has a time limit setting (even if hidden from users). This exists because the problems are NP-hard: without a time limit, the solver might run for days, weeks, or longer trying to prove optimality.

The solver reports two numbers: the best solution found and the optimality gap (the proven bound on how far the solution could be from optimal). When you see "optimality gap: 1.5%," the solver is saying: "I found a solution, and I can prove that no solution exists that is more than 1.5% better."

Understanding this changes how practitioners interact with the software:

- A 0% gap means the solution is provably optimal — no further improvement is possible

- A 2% gap means the solution might be optimal (and often is), but the solver hasn't eliminated all possibilities

- A 10% gap suggests the solver is struggling — consider simplifying constraints, increasing the time limit, or using a different algorithm

Why Adding Constraints Changes Everything

WFM planners often discover that adding "just one more constraint" transforms a 3-minute solve into a 3-hour solve. This isn't a software bug — it's complexity theory in action.

Consider shift scheduling with basic coverage constraints: an LP relaxation is tight, and the ILP solves quickly. Now add:

- Minimum rest between shifts: Creates coupling between consecutive days (the solution for Tuesday depends on Monday's assignments). Adds temporal complexity.

- Team-based scheduling: Agents on the same team must have overlapping shifts. Creates grouping constraints that reduce the optimizer's flexibility.

- Skill-pairing rules: At least one Level 3 agent must be present whenever a Level 1 agent is scheduled. Creates logical dependencies.

Each constraint shrinks the feasible region in ways that make the LP relaxation weaker (larger integrality gap), which means the branch-and-bound tree grows exponentially. The problem hasn't changed type — it's still NP-hard — but its practical difficulty has increased dramatically.

Why "Optimal" Requires Qualification

When WFM software reports an "optimal" schedule, it typically means one of:

- Provably optimal (gap = 0%): The mathematical guarantee that no better solution exists. Rare for large NP-hard instances.

- Near-optimal with bound (gap = X%): A solution within X% of provably optimal. The standard output of modern ILP solvers.

- Best found within time budget: A heuristic or metaheuristic solution with no formal guarantee. Common for very large problems.

- Optimal for a simplified model: The solution is optimal for the mathematical model, but the model may not perfectly capture all real-world constraints.

Informed practitioners ask: "Optimal according to what model, with what gap, under what time limit?" This question is more valuable than any solver output.

Worked Example

Problem: A planner manages a 300-agent center with 25 skill groups. They need to generate weekly schedules with the following constraints:

- Coverage requirements per skill per interval (96 intervals/day × 7 days)

- 8-hour and 10-hour shift options

- 30-minute and 60-minute lunch breaks

- Minimum 11 hours between shifts

- Maximum 5 consecutive working days

- Agent preferences (seniority-weighted)

Complexity analysis:

The base problem (coverage + shift selection) is NP-hard but well-structured for ILP solvers. With 300 agents × ~50 possible shift starts × 7 days = ~105,000 binary variables.

Adding lunch break placement: each shift has multiple valid break positions, multiplying variables. New variable count: ~300,000.

Adding inter-day rest constraints: coupling between days prevents decomposition. The solver cannot optimize each day independently.

Predicted difficulty:

- Without lunch/rest constraints: 5-10 minute solve (based on problem structure)

- With lunch, without rest: 20-40 minute solve

- Full constraint set: 2-4 hours to reach 2% gap; potentially infeasible to prove optimality

Strategy: Decompose the problem. First solve the weekly shift pattern assignment (which days, which shift lengths) ignoring break placement. Then solve break placement day-by-day — this second problem is smaller and can be solved exactly. The decomposition sacrifices theoretical optimality (the combined solution may not be globally optimal) but produces near-optimal solutions in a fraction of the time.

Maturity Model Position

| Level | Description |

|---|---|

| Level 1 (Manual) | No awareness of computational complexity; schedules built by hand |

| Level 2 (Developing) | Uses optimizer but treats it as a black box; no understanding of time limits or gaps |

| Level 3 (Defined) | Understands optimality gaps; sets time limits deliberately; knows which constraints drive difficulty |

| Level 4 (Quantitative) | Problem decomposition strategies employed; complexity-aware constraint modeling; formal analysis of quality-time trade-offs |

| Level 5 (Optimizing) | Algorithm selection based on problem structure; portfolio solvers that match algorithms to instance difficulty; formal complexity reduction through constraint reformulation |

See Also

- Systems Thinking — Complex-adaptive-systems view (distinct from this page's computational complexity)

- Operations Research in Workforce Management

- Approximation Algorithms for Scheduling

- Integer and Mixed-Integer Programming

- Column Generation

- Schedule Generation

- Constraint Programming

- Metaheuristics

References

- Garey, M.R. & Johnson, D.S. (1979). Computers and Intractability: A Guide to the Theory of NP-Completeness. W.H. Freeman.

- Cormen, T.H., Leiserson, C.E., Rivest, R.L., & Stein, C. (2009). Introduction to Algorithms (3rd ed.). MIT Press.

- Pinedo, M.L. (2016). Scheduling: Theory, Algorithms, and Systems (5th ed.). Springer.

- Kleinberg, J. & Tardos, É. (2006). Algorithm Design. Pearson.

- Arora, S. & Barak, B. (2009). Computational Complexity: A Modern Approach. Cambridge University Press.